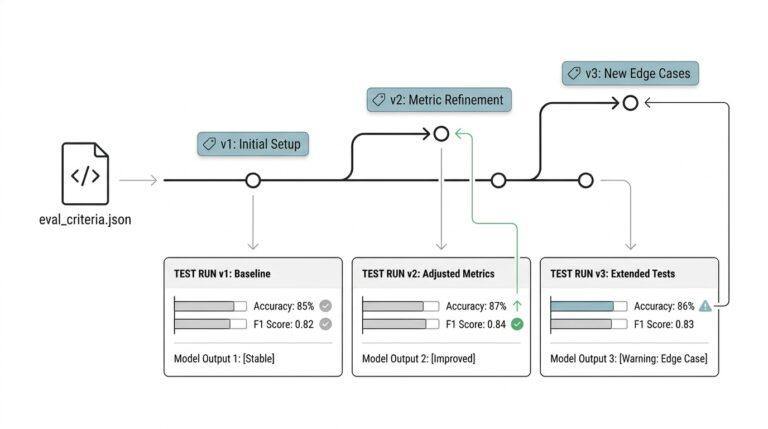

Version Your AI Evaluation Criteria Like Code for Better Machine Learning Testing

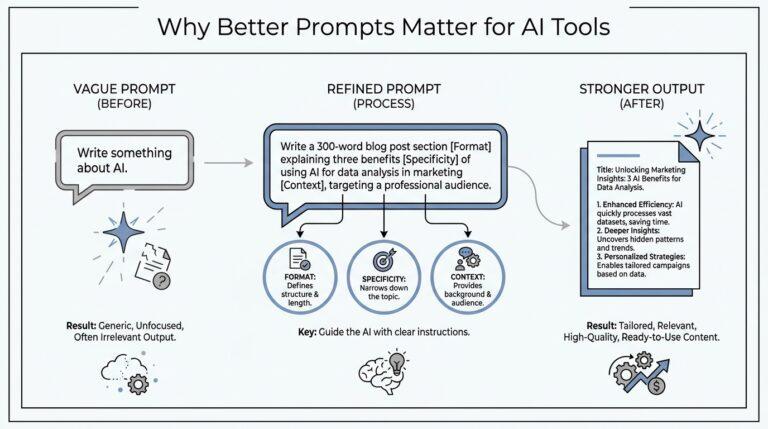

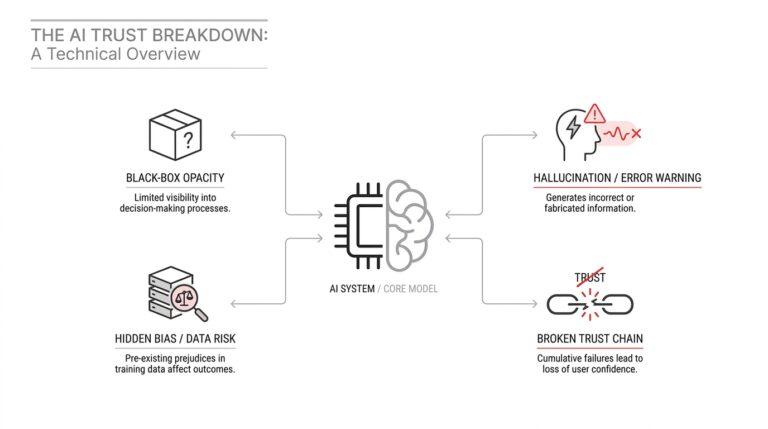

Define Clear Evaluation Rubrics

When you first sit down to define evaluation rubrics, it can feel a little like trying to describe the taste of soup with a spreadsheet. You know when a model feels helpful, off-track, or unreliable, but those impressions are slippery unless you pin them to clear