Understanding the Challenges of Deploying Transformers

When moving transformer models from experimental phases to real-world production, organizations often encounter a set of complex challenges unique to this class of deep learning models. Compared with traditional machine learning models, transformers are resource-intensive and data-hungry, and they require special consideration for scalability, latency, and maintenance. Understanding these challenges is essential for a smooth transition to production.

1. Computational Resources and Scalability

Transformers, particularly large pre-trained language models such as BERT, GPT, and their derivatives, are notorious for their heavy demand on computational resources. These models often contain hundreds of millions or even billions of parameters, so efficient inference usually requires accelerators such as GPUs or TPUs. The scaling challenge is twofold: provisioning enough hardware when usage spikes, and automatically scaling the infrastructure back down to avoid paying for idle capacity during periods of low demand. Container orchestration platforms such as Kubernetes, along with serverless deployment options, have become popular ways to address these concerns. Cloud providers also offer managed services, such as Google Cloud Vertex AI and Azure Machine Learning, designed to handle these high-performance workloads.

2. Latency and Real-Time Performance

Achieving low-latency responses with transformer models is a significant challenge. Even with GPU acceleration, the size and depth of transformers can introduce delays that are unacceptable in real-time applications such as chatbots, search engines, or recommendation systems. To mitigate this, teams use techniques such as knowledge distillation, quantization, and pruning, each of which reduces model size or computational cost, ideally with minimal loss in accuracy. For more on this, consider exploring resources from Neural Magic on model optimization strategies for deployment.

3. Model Monitoring and Drift

Once in production, transformer models are exposed to changing conditions: the distribution of inputs can shift (data drift), and the relationship between inputs and correct outputs can change (concept drift). Continuous monitoring is necessary to ensure that predictions remain accurate and unbiased. Robust monitoring pipelines should track key signals such as input data characteristics, prediction distributions, and latency. Tools like Evidently AI provide frameworks for monitoring and alerting, enabling organizations to detect issues early and retrain models proactively.
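One common way to quantify input drift is the Population Stability Index (PSI), which compares a production feature distribution against a training-time reference. The sketch below is a minimal, dependency-free illustration, not how Evidently AI computes drift; the equal-width binning, the `eps` smoothing, and the function name are assumptions chosen for clarity. A natural feature to track for a transformer is request length in tokens.

```python
import math
from typing import Sequence

def population_stability_index(expected: Sequence[float], actual: Sequence[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between a reference sample (`expected`) and a production sample
    (`actual`), using equal-width bins over their combined range."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def histogram(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = min(int((v - lo) / width), bins - 1)  # put the max value in the last bin
            counts[idx] += 1
        return [c / len(values) for c in counts]

    p, q = histogram(expected), histogram(actual)
    # PSI = sum over bins of (q - p) * ln(q / p); eps avoids log(0) for empty bins
    return sum((qi - pi) * math.log((qi + eps) / (pi + eps)) for pi, qi in zip(p, q))
```

A frequently quoted rule of thumb (a heuristic, not a standard) treats PSI below 0.1 as little shift, 0.1 to 0.25 as moderate, and above 0.25 as significant enough to investigate or retrain.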

4. Cost Management

Running transformer models at scale can quickly lead to ballooning operational costs. Besides compute, there are costs for data storage, bandwidth, and model retraining. Many organizations adopt multi-tiered model architectures, using smaller, faster models for most requests and reserving the largest models for harder cases. Another cost-control technique is to move workloads that are not latency-sensitive into batch jobs that run during off-peak hours, rather than processing each request individually as it arrives. Detailed discussions of the economics of AI deployment can be found in research papers on arXiv.
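A multi-tiered setup is often implemented as a confidence-based cascade: the small model answers first, and only low-confidence requests are escalated to the large model. The sketch below assumes each model is a callable returning `(label, confidence)`; the `CascadeRouter` class and the 0.85 threshold are hypothetical and would need tuning on validation data.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Any callable returning (label, confidence); real transformers sit behind this interface.
Model = Callable[[str], Tuple[str, float]]

@dataclass
class CascadeRouter:
    small: Model
    large: Model
    confidence_threshold: float = 0.85  # hypothetical; tune on held-out data
    escalations: int = 0
    total: int = 0

    def predict(self, text: str) -> Tuple[str, float, str]:
        """Return (label, confidence, tier that answered)."""
        self.total += 1
        label, conf = self.small(text)
        if conf >= self.confidence_threshold:
            return label, conf, "small"
        # Low confidence: pay for the larger, more expensive model
        self.escalations += 1
        label, conf = self.large(text)
        return label, conf, "large"
```

Tracking `escalations / total` shows what fraction of traffic reaches the expensive tier, which is the main lever for cost in this design.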

5. Security and Privacy

Deploying transformers also introduces new security and privacy concerns. Large models can inadvertently memorize sensitive information from their training data, which may be exposed during inference. Ensuring compliance with privacy regulations such as the General Data Protection Regulation (GDPR) is imperative. Organizations are now exploring privacy-preserving machine learning techniques like federated learning and differential privacy to address these risks.

Tackling these challenges requires a multidisciplinary approach involving data engineers, model architects, DevOps, and compliance officers. By anticipating these issues and taking a proactive, technology-driven stance, organizations can unlock the true power of transformers in production environments. For deeper technical guidelines and case studies, see the MLSys Conference proceedings on modern infrastructure for AI deployment.

Assessing Infrastructure Requirements for Production Deployments

When preparing to deploy transformer models in a production environment, it’s crucial to thoroughly assess your infrastructure requirements to ensure scalability, reliability, and efficiency. Jumping straight from research to production without appropriate planning can result in performance bottlenecks, unexpected costs, and even system failures. Here’s how to comprehensively evaluate your infrastructure needs:

1. Evaluate Compute Resources

- GPU/TPU Availability: Transformer models, especially those based on architectures like BERT and GPT, are computationally intensive. Assess whether your workload needs GPUs or TPUs for inference, and which cloud offerings provide them, such as Google Cloud TPU, AWS EC2 P4 instances, or Azure NC-series virtual machines.

- CPU Considerations: Lightweight transformer variants (like DistilBERT or TinyBERT) can often run efficiently on CPUs, which is typically more cost-effective. Benchmark model latency and throughput to determine feasibility.

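- Memory Needs: Transformers have large memory footprints, both in accelerator memory and in host RAM. Estimate the footprint from the model size (parameter count multiplied by bytes per parameter) plus activation memory, which grows with batch size and sequence length. Published academic benchmarks can provide reference figures for comparable models.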
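As a first-order estimate, the memory needed just to hold the weights is the parameter count times the bytes per parameter for the chosen numeric format. The helper below is a minimal sketch of that arithmetic; it deliberately ignores activations, key/value caches, and framework overhead, which must be added on top.

```python
# Bytes per parameter for common numeric formats (int4 packs two values per byte)
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1, "int4": 0.5}

def weight_memory_gib(num_params: int, dtype: str = "fp32") -> float:
    """Memory to store the weights alone, in GiB (2**30 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30
```

For example, BERT-base (about 110 million parameters) needs roughly 0.41 GiB for its fp32 weights, and the same model stored in int8 needs a quarter of that.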

2. Sizing for Load and Latency Expectations

- Peak Traffic Analysis: Estimate your expected and peak user traffic. Will you support real-time inference, batch processing, or both? Plan capacity for the worst-case scenario to prevent unexpected slowdowns. Resources like O’Reilly’s guide to production ML systems provide useful frameworks for analyzing workload patterns.

- Latency Constraints: Many business applications require sub-second response times. Profile your model’s performance in a staging environment that mirrors production.

- Autoscaling: Set up autoscaling policies to adjust resources dynamically. Major cloud providers and Kubernetes offer autoscaling features, such as the Kubernetes Horizontal Pod Autoscaler (HPA).
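The Kubernetes HPA documentation describes its core scaling rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), with no change made when that ratio is within a tolerance of 1.0 (0.1 by default). The function below reproduces only that arithmetic plus min/max clamping, as a way to reason about capacity; it is not the HPA controller, which also applies stabilization windows and other behavior. The metric (for example, GPU utilization or requests per second per pod) and the default bounds are assumptions.

```python
import math

def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Replica count suggested by the HPA-style proportional rule."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: leave as is
    else:
        desired = math.ceil(current_replicas * ratio)
    # Never go outside the configured bounds
    return max(min_replicas, min(max_replicas, desired))
```

For instance, 4 replicas running at 90% utilization against a 60% target yield 6 replicas, while 63% against the same target stays at 4 because it falls within the tolerance.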

3. Data Pipeline Integration

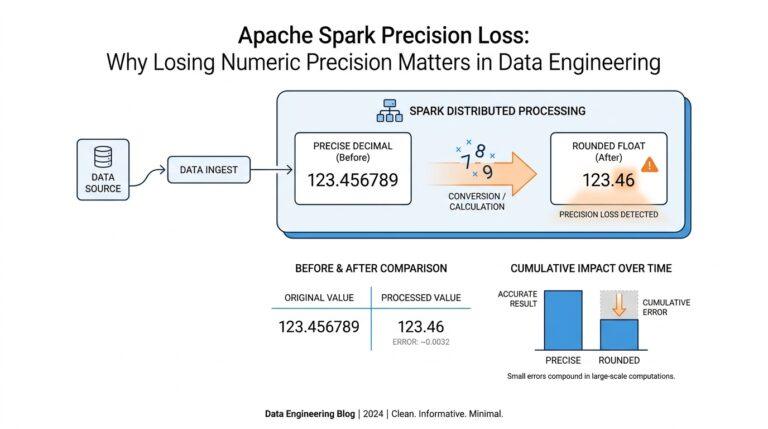

- Input/Output (I/O) Optimization: Transformers often process large texts or streams. Optimize your data pipelines with solutions like Apache Spark or TensorFlow Extended (TFX) for efficient data transfer and pre-processing.

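- Batching and Queueing: Use request batching to maximize hardware utilization and throughput; the cost is a small added wait for each request while a batch fills. Consider message queues or streaming platforms like Apache Kafka to buffer traffic and decouple pipeline stages.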
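Request batching is commonly done with a small time window: block until one request arrives, then keep collecting until the batch is full or a short deadline passes. The sketch below uses only Python's standard-library `queue`; the function name and the defaults (`max_batch_size=8`, `max_wait_s=0.01`) are hypothetical. Serving frameworks such as NVIDIA Triton offer dynamic batching natively, and this is not their implementation.

```python
import queue
import time
from typing import List

def collect_batch(requests: "queue.Queue[str]", max_batch_size: int = 8,
                  max_wait_s: float = 0.01) -> List[str]:
    """Block for the first request, then gather more until the batch is full
    or max_wait_s has elapsed since the first one arrived."""
    batch = [requests.get()]  # wait for at least one request
    deadline = time.monotonic() + max_wait_s
    while len(batch) < max_batch_size:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break
        try:
            batch.append(requests.get(timeout=remaining))
        except queue.Empty:
            break  # deadline reached with a partial batch
    return batch
```

`max_wait_s` is the knob that trades latency for throughput: a longer window fills larger batches but adds up to that much delay to the first request in each batch.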

4. Security and Compliance

- Access Control: Ensure only authorized services and users can run inference or access model endpoints. Implement robust authentication and authorization policies using industry standards like OAuth 2.0.

- Data Privacy: Abide by relevant regulations (GDPR, HIPAA). Sensitive data should be encrypted in transit (TLS) and at rest. Guidance is available from trusted institutions such as NIST’s Privacy Framework.

5. Monitoring and Observability

- Serving Performance: Continuously monitor latency, throughput, and memory usage. Use tools like Prometheus and Grafana for real-time application metrics.

- Alerting: Set proactive alerts for anomalies and resource saturation. Establish policies for automated remedial actions.

- Logging: Implement detailed logging, capturing both system and prediction-level data. Comprehensive logs enable troubleshooting and auditing.

Taking the time to assess these infrastructure requirements in advance ensures a smoother, more reliable, and scalable transition of transformer models into production. For more in-depth technical discussions and recommended practices, the MLSys conference and Papers with Code Deployment Task are excellent resources.

Optimizing Model Size and Inference Speed

Successfully deploying Transformer-based models in production environments requires a strategic approach to optimally balance resource use, speed, and model performance. Here’s an in-depth guide on how to optimize for both model size and inference speed while ensuring robust deployment in real-world scenarios.

Understanding the Challenge of Model Size

State-of-the-art Transformer models can contain hundreds of millions to hundreds of billions of parameters (BERT-large has about 340 million; GPT-3 has 175 billion), leading to significant memory and storage requirements. Deploying such large models without optimization can result in:

- Increased costs (hardware/cloud resources)

- Longer loading and inference times

- Challenges in scaling for multiple users

Therefore, reducing model size is crucial for cost-effectiveness and scalability. Some widely adopted techniques include:

- Model Pruning: Remove weights or structures that contribute little to the model’s output. Pruning can be structured (removing entire neurons, attention heads, or layers) or unstructured (zeroing individual weights); unstructured sparsity only speeds up inference on runtimes or hardware with sparse-aware kernels. Read more on the Google AI Blog.

- Quantization: Convert 32-bit floating-point weights to lower-precision formats (such as 8-bit or even 4-bit integers). Moving from 32-bit to 8-bit cuts weight storage by a factor of four, often with minimal loss in accuracy. Learn more in published research on quantizing BERT.

- Knowledge Distillation: Train a smaller “student” model to replicate the behaviors of a large “teacher” model, achieving close performance with far fewer parameters. See practical examples from Hugging Face.
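The core arithmetic of quantization and magnitude pruning fits in a few lines. The sketch below shows symmetric per-tensor int8 quantization and unstructured magnitude pruning on a plain list of weights; it is a teaching aid under simplified assumptions (one scale per tensor, pure Python), not the scheme any particular library uses. Real toolkits add per-channel scales, calibration, and fine-tuning.

```python
from typing import List, Tuple

def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max|w|, max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q: List[int], scale: float) -> List[float]:
    """Approximate reconstruction; the error per weight is at most scale / 2."""
    return [v * scale for v in q]

def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Unstructured pruning: zero out the `sparsity` fraction of weights
    with the smallest absolute values."""
    k = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:k]:
        pruned[i] = 0.0
    return pruned
```

Each int8 value occupies one byte instead of four, which is where the fourfold storage saving comes from; the single float `scale` per tensor is negligible overhead.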

Accelerating Inference Speed

Inference speed in production affects both user experience and system throughput. Several methods can maximize throughput and minimize latency:

- Batch Processing: Serving multiple requests in batches enables efficient parallel computation on GPUs or specialized hardware. This approach is often built into deployment frameworks like TensorFlow Serving and NVIDIA Triton.

- Hardware Acceleration: Deploying models on optimized hardware such as GPUs, TPUs, or custom accelerators can yield significant performance gains. For on-device workloads, Google’s Edge TPU is designed for fast, low-power inference at the edge.

- ONNX and Model Conversion: Converting models to the ONNX format allows deployment across various hardware backends through runtimes such as ONNX Runtime, which apply platform-specific optimizations for low-latency inference.

Step-by-Step Example: Optimizing a Transformer for Production

- Select a Suitable Pre-trained Model: Start with a compact Transformer variant, such as DistilBERT or TinyBERT, instead of a full-size BERT. This reduces initial model size and complexity.

- Distill Larger Models: Transfer knowledge from a large, fine-tuned teacher model to a smaller, faster student model using the techniques described in the original distillation paper by Hinton et al.

- Apply Quantization and Pruning: Use tools such as PyTorch’s quantization and pruning utilities or the TensorFlow Model Optimization Toolkit to quantize and prune weights systematically.

- Leverage Low-Latency Frameworks: Convert to ONNX and deploy using optimized inference servers or edge devices to further cut inference times.

- Monitor and Profile: Continuously track latency, memory, and throughput in real production settings, and iterate on optimizations as needed. Use open-source tools such as TensorFlow Profiler for deep diagnostics.
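The distillation step typically trains the student on a weighted mix of two losses: a soft-target term that matches the teacher's temperature-softened output distribution, and the usual cross-entropy against the true label. Hinton et al. note that soft-target gradients scale as 1/T², so that term is multiplied by T². The pure-Python sketch below computes that combined loss for a single example; the default `temperature=2.0` and `alpha=0.5` are illustrative assumptions, and it uses KL divergence for the soft term, which differs from soft-target cross-entropy only by a constant (the teacher's entropy).

```python
import math
from typing import List

def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      true_label: int, temperature: float = 2.0,
                      alpha: float = 0.5) -> float:
    """alpha * T^2 * KL(teacher_T || student_T) + (1 - alpha) * CE(student, label)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    soft = (temperature ** 2) * kl  # T^2 rescales soft-target gradients
    hard = -math.log(softmax(student_logits)[true_label])  # standard cross-entropy at T = 1
    return alpha * soft + (1 - alpha) * hard
```

In a real training loop the same formula would be written with framework tensors so it can be differentiated and averaged over a batch.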

As the field evolves, new research and tools continue to provide innovative ways to streamline Transformers for production. Stay updated through reputable sources like arXiv and industry blogs from AI leaders. With the right strategies, you’ll be able to serve accurate, lightning-fast predictions at scale.

Implementing Robust Monitoring and Logging

Reliable deployment of Transformers in production demands more than good model performance: it hinges on solid monitoring and comprehensive logging. Without these, teams risk undetected failures, user-facing errors, and data drift that can degrade NLP outcomes over time. Let’s break down how to build robust observability and traceability into your machine learning operations (MLOps) workflow.

Why Monitoring Matters

After deployment, your Transformer model interacts with real-world data that may differ significantly from training distributions. Changes in user behavior, new slang, or domain shifts can throw off even the best models. Continuous monitoring helps you detect data drift, performance degradation, and system anomalies before they translate into business impact. As highlighted by DeepLearning.AI, monitoring is foundational for responsible AI, enabling proactive maintenance and improvement.

Steps for Effective Monitoring

- Define Key Metrics: Track model-centric metrics (such as prediction confidence and error rates), system metrics (such as response latency), and business outcomes (such as task completion or user satisfaction). Consider adding metrics like calibration and fairness where appropriate.

- Set Alerting Thresholds: Decide what constitutes abnormal behavior. For instance, create alerts if prediction confidence drops below a threshold or if input characteristics diverge significantly from those seen at training time.

- Automate Visualization and Reporting: Utilize dashboards to visualize trends, spikes, and anomalies over time. Open-source tools like Prometheus + Grafana or managed MLOps platforms can make this process seamless and scalable.

- Log Model Inputs and Outputs: Record representative samples of incoming data and model predictions, while adhering to data privacy guidelines. This data is invaluable for debugging, model audits, and retraining initiatives—as noted by Microsoft Research.

Building Robust Logging Pipelines

Rich, structured logs are essential for diagnosing issues and tracing model behavior. A robust logging system for Transformer deployments should:

- Capture Contextual Metadata: For every prediction, log the request ID, timestamp, model version, data source, and (if possible) user session. This allows you to reconstruct sequences and identify where things went awry.

- Handle Sensitive Data Carefully: Pseudonymize or redact personal data in logs to meet privacy and security standards. Refer to guidance such as NIST’s Privacy Framework for best practices.

- Enable Traceability Across Services: In production, Transformer APIs might be part of a larger microservices architecture. Implement centralized logging and distributed tracing—see OpenTelemetry—to follow requests end-to-end through your system.

- Leverage Log Analytics: Use log management platforms such as ELK Stack or Google Cloud Logging for indexing, querying, and correlating logs, speeding up issue diagnosis and root-cause analysis.
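The logging practices above can be combined in a single structured record per prediction: contextual metadata, a propagated request ID, and a pseudonymized session identifier, emitted as one JSON object per line so log platforms can index it. The sketch below uses only the standard library; the field names, the `inference` logger name, and the hard-coded `PSEUDONYM_KEY` are hypothetical (a real key belongs in a secrets manager). Raw input text is intentionally not logged.

```python
import hashlib
import hmac
import json
import logging
import time
import uuid
from typing import Optional

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("inference")  # hypothetical logger name

# Hypothetical secret for illustration only; load it from a secrets manager in practice.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"

def pseudonymize(value: str) -> str:
    """Keyed hash: the same session always maps to the same token,
    but the raw identifier never reaches the logs."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

def log_prediction(model_version: str, data_source: str, label: str,
                   confidence: float, latency_ms: float,
                   session_id: Optional[str] = None,
                   request_id: Optional[str] = None) -> dict:
    record = {
        # Reuse an upstream request ID if one was propagated, else mint one
        "request_id": request_id or str(uuid.uuid4()),
        "timestamp": time.time(),
        "model_version": model_version,
        "data_source": data_source,
        "session": pseudonymize(session_id) if session_id else None,
        "prediction": label,
        "confidence": round(confidence, 4),
        "latency_ms": round(latency_ms, 1),
    }
    logger.info(json.dumps(record))  # one JSON object per line
    return record
```

Passing the same `request_id` through every service (for example, via an OpenTelemetry trace context) is what makes end-to-end correlation possible later.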

Example: Detecting Inference Failures in Real Time

Suppose you’re running a large language model to power customer support chatbots. Deployment logs capture every request’s input, output, response time, and error state. You set up an automated check: if the model returns unusually high uncertainty (a low maximum softmax probability) or takes longer than 500 ms to respond, an alert fires. When failed responses spike, engineers investigate, correlate the spike with a change in upstream data, and issue a rollback, minimizing business disruption.
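The automated check in this scenario can be sketched as a per-request rule plus a sliding-window rate, since alerting on every single slow or uncertain response tends to be noisy. The 500 ms limit comes from the scenario; the `InferenceResult` shape, the 0.5 confidence floor, the window size, and the 5% alert rate are hypothetical values to be tuned per model.

```python
from collections import deque
from dataclasses import dataclass
from typing import List, Optional

LATENCY_LIMIT_MS = 500.0  # from the scenario
MIN_CONFIDENCE = 0.5      # hypothetical; calibrate per model

@dataclass
class InferenceResult:
    request_id: str
    probabilities: List[float]  # softmax output
    latency_ms: float
    error: Optional[str] = None

def check_result(result: InferenceResult) -> List[str]:
    """Return alert messages for one request (empty list if healthy)."""
    if result.error is not None:
        return [f"{result.request_id}: inference error: {result.error}"]
    alerts = []
    top_p = max(result.probabilities)  # maximum softmax probability
    if top_p < MIN_CONFIDENCE:
        alerts.append(f"{result.request_id}: low confidence (max p={top_p:.2f})")
    if result.latency_ms > LATENCY_LIMIT_MS:
        alerts.append(f"{result.request_id}: slow response ({result.latency_ms:.0f} ms)")
    return alerts

class AlertRateMonitor:
    """Fire only when the share of flagged requests in a full window exceeds max_rate."""
    def __init__(self, window: int = 100, max_rate: float = 0.05):
        self.recent = deque(maxlen=window)
        self.max_rate = max_rate

    def observe(self, result: InferenceResult) -> bool:
        self.recent.append(bool(check_result(result)))
        full = len(self.recent) == self.recent.maxlen
        return full and sum(self.recent) / len(self.recent) > self.max_rate
```

In practice these signals would usually be exported as metrics and evaluated by an alerting system rather than in application code; the sketch just makes the rule explicit.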

By thoughtfully implementing monitoring and logging tailored to Transformer models, organizations can preempt failures, streamline retraining, and maintain the trust of users and stakeholders. For a practical guide to monitoring machine learning in production, explore Google’s MLOps resources.

Ensuring Scalability and High Availability

Deploying transformer models in production is about more than just achieving good performance metrics in a controlled, isolated environment. It’s crucial to guarantee both scalability and high availability so that your application can handle real-world demands, unexpected traffic spikes, and seamless failover—all while maintaining speed and reliability.

Designing for Scalability

Scalability refers to your system’s ability to grow and handle increased workloads without compromising performance. When deploying transformers, particularly large language models or sequence-to-sequence architectures, scalability strategies are essential:

- Horizontal Scaling: Instead of ramping up resources on a single server (“vertical scaling”), distribute the workload across multiple servers or instances. This approach enables seamless scaling as traffic grows and provides redundancy, which decreases downtime. Tools like Kubernetes can help orchestrate and automate container-based deployments, making it easier to scale transformer workloads.

- Model Parallelism & Sharding: Large transformer models may not fit into the memory of a single GPU or node. Model parallelism partitions the model itself across multiple devices, so each device holds only a shard of the weights. Data parallelism, by contrast, replicates the full model on each device and splits the incoming data, which raises throughput but does not reduce per-device memory. Frameworks such as Megatron-LM and Mesh TensorFlow are designed to scale models using these parallelism techniques.

- Asynchronous Processing: Transformer inference is often slow, which can choke throughput under load. Asynchronous task queues (for example, Celery) let the front end accept requests immediately, buffer them, and dispatch them to workers as GPUs become available, smoothing out spikes and keeping the service responsive under heavy demand.
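The queue-and-worker pattern behind asynchronous processing can be sketched with Python's built-in `asyncio` instead of Celery: requests go into a bounded queue, and a fixed pool of workers (standing in for GPUs) drains it. `fake_gpu_inference` is a hypothetical placeholder for a real model call, and the worker count and queue size are illustrative.

```python
import asyncio
from typing import Dict, List

async def fake_gpu_inference(text: str) -> str:
    # Hypothetical stand-in for a transformer forward pass
    await asyncio.sleep(0.01)
    return text.upper()

async def worker(jobs: asyncio.Queue, results: Dict[int, str]) -> None:
    while True:
        job_id, text = await jobs.get()
        try:
            results[job_id] = await fake_gpu_inference(text)
        finally:
            jobs.task_done()  # mark done even if inference raised

async def serve(texts: List[str], num_workers: int = 2, max_queue: int = 100) -> Dict[int, str]:
    jobs: asyncio.Queue = asyncio.Queue(maxsize=max_queue)  # bounded: applies backpressure
    results: Dict[int, str] = {}
    workers = [asyncio.create_task(worker(jobs, results)) for _ in range(num_workers)]
    for i, text in enumerate(texts):
        await jobs.put((i, text))  # waits if the queue is full
    await jobs.join()  # block until every job has been processed
    for w in workers:
        w.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results
```

Bounding the queue matters: an unbounded queue hides overload until memory runs out, whereas a full bounded queue pushes back on producers and makes saturation visible.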

Ensuring High Availability

High availability means your transformer models remain accessible and functional, even when some parts of the infrastructure fail or undergo maintenance. To achieve this, consider the following strategies:

- Redundant Deployment: Deploy transformer models across multiple availability zones or data centers. If one location fails, requests are seamlessly rerouted to other active zones. Cloud providers like AWS and Azure offer built-in support for redundancy and failover management.

- Load Balancing: Use robust load balancers (such as NGINX or HAProxy) to distribute inference requests across available model instances. This improves response times during traffic surges and automatically reroutes traffic if one server goes down, so no single model server is a point of failure (the load balancer itself should also be deployed redundantly).

- Automated Health Checks: Implement automated health checks so unhealthy instances are detected quickly. Orchestrators such as Kubernetes can restart failed containers based on liveness probes, while monitoring tools like Prometheus and Grafana track service health and raise alerts, helping you resolve problems before they escalate.
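Load balancing and health checks work together: the balancer only routes to backends that currently pass their check. The toy round-robin balancer below illustrates that interaction; the backend addresses and the `health_check` callable are hypothetical, and production balancers such as NGINX or HAProxy cache health status from periodic probes rather than checking on every request.

```python
import itertools
from typing import Callable, List

class RoundRobinBalancer:
    """Toy round-robin balancer that skips backends failing their health check."""

    def __init__(self, backends: List[str], health_check: Callable[[str], bool]):
        self.backends = backends
        self.health_check = health_check
        self._cycle = itertools.cycle(backends)

    def pick(self) -> str:
        # Try each backend at most once per call before giving up
        for _ in range(len(self.backends)):
            backend = next(self._cycle)
            if self.health_check(backend):
                return backend
        raise RuntimeError("no healthy backends available")
```

When every backend fails, raising an error lets the caller return a fast failure (or fall back to another zone) instead of hanging.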

Real-World Example: Transformer API at Scale

Consider a real-time translation service powered by transformer models. To ensure that millions of users worldwide access translations instantly, engineers deploy multiple replicas of the model behind a cloud-native load balancer. Each replica processes incoming requests, while a message queue buffers overflow traffic during peak hours. Auto-scaling policies automatically start up new model containers as request rates climb and spin them down during lulls, optimizing cost and response times. Continuous monitoring ensures that, even if one compute node fails, the service as a whole remains available and reliable. This design, recommended by experts at DeepMind, exemplifies best practices for deploying advanced language models in production.

By focusing on these strategies, organizations can ensure that their transformer deployments are robust, future-proof, and capable of delivering mission-critical language intelligence at scale. To dive deeper, consult academic overviews from arXiv and guidelines from the Apple Machine Learning Research group for more technical insight into scaling machine learning systems.

Integrating Security and Compliance Best Practices

Ensuring robust security and compliance when deploying Transformers in production is a critical concern—not only for safeguarding sensitive data but also for meeting regulatory expectations across industries. Here’s how you can incorporate security and compliance best practices when integrating advanced language models into your workflows:

Adopt Secure Model Deployment Strategies

First, evaluate where your models are hosted and how they are accessed. Deploying Transformers on-premises allows for tighter security control but often requires significant infrastructure investment. Alternatively, trusted cloud providers such as Google Cloud and AWS offer comprehensive security frameworks, including encryption at rest and in transit, automatic patch management, and multi-factor authentication. Always ensure that client-to-server and server-to-server communications are secured using protocols like TLS 1.3 to mitigate the risk of data interception.
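Requiring TLS 1.3 can be enforced in code as well as in infrastructure. The sketch below uses Python's standard `ssl` module to build server-side and client-side contexts that reject older protocol versions; the certificate and key paths are hypothetical, and in many deployments TLS is terminated at a load balancer or service mesh instead.

```python
import ssl
from typing import Optional

def server_tls_context(certfile: Optional[str] = None,
                       keyfile: Optional[str] = None) -> ssl.SSLContext:
    """Context for an inference endpoint that refuses anything below TLS 1.3."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if certfile:
        ctx.load_cert_chain(certfile, keyfile)  # hypothetical certificate/key paths
    return ctx

def client_tls_context() -> ssl.SSLContext:
    """Context for service-to-service calls: keeps the default certificate and
    hostname verification and additionally requires TLS 1.3."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx
```

A server context would then wrap the listening socket, for example with `ctx.wrap_socket(sock, server_side=True)`.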

Integrate Role-Based Access Control (RBAC)

Strict access controls are crucial for reducing the attack surface. Implement role-based access control (RBAC) to restrict model access based on user roles and responsibilities. For instance, grant developers access to model training environments, and limit production deployment tools to operations teams. Regularly audit and update user permissions to maintain least-privilege access across all systems.
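At the application layer, RBAC boils down to a role-to-permission mapping checked before each sensitive operation, with unknown roles granted nothing. The sketch below mirrors the developer/operations split described above; the permission names, roles, `require` decorator, and `deploy_model` function are all hypothetical, and real deployments usually delegate this to the platform's IAM or Kubernetes RBAC.

```python
import functools
from enum import Enum
from typing import Dict, Set

class Permission(Enum):
    TRAIN_MODEL = "train_model"
    DEPLOY_MODEL = "deploy_model"
    INVOKE_ENDPOINT = "invoke_endpoint"
    VIEW_LOGS = "view_logs"

# Hypothetical role-to-permission mapping
ROLE_PERMISSIONS: Dict[str, Set[Permission]] = {
    "developer": {Permission.TRAIN_MODEL, Permission.VIEW_LOGS},
    "operations": {Permission.DEPLOY_MODEL, Permission.VIEW_LOGS},
    "service": {Permission.INVOKE_ENDPOINT},
}

def is_allowed(roles: Set[str], permission: Permission) -> bool:
    # Deny by default: unknown roles grant nothing
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)

def require(permission: Permission):
    """Decorator that checks the caller's roles before running the function."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(caller_roles: Set[str], *args, **kwargs):
            if not is_allowed(caller_roles, permission):
                raise PermissionError(f"missing permission: {permission.value}")
            return func(caller_roles, *args, **kwargs)
        return wrapper
    return decorator

@require(Permission.DEPLOY_MODEL)
def deploy_model(caller_roles: Set[str], model_version: str) -> str:
    return f"deployed {model_version}"
```

Keeping the mapping in one place also simplifies the periodic permission audits mentioned above.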

Establish Comprehensive Logging and Monitoring

Effective monitoring is essential for detecting unauthorized activity and ensuring compliance with data protection laws like GDPR and HIPAA. Implement centralized logging for inference requests, system events, and access logs. Use monitoring solutions, such as Splunk or Prometheus, to automatically detect and alert on anomalous behaviors, such as repeated failed login attempts or unusual data access patterns.

Implement Data Anonymization and Redaction

Transformer models often process sensitive information. Ensure all input and output data is anonymized or pseudonymized where possible. Adopt data redaction techniques to mask personally identifiable information (PII) before it reaches the model or is logged. For example, use regular expressions to filter out Social Security numbers, email addresses, or payment card data from logs. Refer to the NIST Privacy Framework when selecting anonymization standards for your environment.
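The regular-expression approach can be sketched as an ordered set of patterns, each replaced by a type label. The patterns below are illustrative assumptions: they cover common US SSN, email, and 13 to 16 digit card formats, will miss other formats, and may flag unrelated long digit strings. Production systems often add checks such as the Luhn checksum for cards, or NER-based PII detectors.

```python
import re

# Illustrative patterns, applied in insertion order (SSN before CARD).
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b(?:\d[ -]?){13,16}\b"),  # 13-16 digits, optional separators
}

def redact(text: str) -> str:
    """Replace each PII match with a bracketed type label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

For example, `redact("Contact jane.doe@example.com, SSN 123-45-6789, card 4111 1111 1111 1111.")` yields `"Contact [EMAIL], SSN [SSN], card [CARD]."`.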

Regularly Conduct Security Audits and Compliance Checks

Security isn’t a “set and forget” process. Schedule periodic third-party audits of your Transformer infrastructure and processes. Use automated compliance and policy-as-code tools such as Chef Compliance or HashiCorp Sentinel to continuously assess your deployments against your security policies and against standards such as ISO/IEC 27001. Document how audit trails are produced so you’re always prepared for inspections or regulatory reviews.

Stay Informed on AI-Specific Compliance Requirements

Regulations around artificial intelligence are rapidly evolving. Stay up-to-date by tracking organizations such as the European Commission and NIST for AI governance updates. Integrate change-management policies that adapt to new legal requirements, such as model explainability, record-keeping for inferences, or user opt-out mechanisms. By doing so, you prepare your deployment for emerging regulatory frameworks like the EU AI Act.

By methodically weaving security and compliance principles into every phase of your Transformer deployment, you protect your organization and customers while fostering responsible AI adoption. Investing in best practices today ensures resilience, trust, and competitive advantage for tomorrow’s AI-enabled solutions.