What is Context Engineering?

At its core, context engineering is the discipline of enabling artificial intelligence (AI) systems to understand, remember, and use the context of their interactions with humans. Where a basic machine learning model processes each input in isolation, context engineering builds the frameworks and algorithms that give machines a sense of continuity, so they can recall prior exchanges, user preferences, and the nuances of an ongoing conversation.

Imagine talking to a friend who forgets everything you said the instant after you say it. Frustrating, isn’t it? That’s how traditional machines have operated for much of computing history. Context engineering seeks to transform this by equipping machines with the ability to “remember our stories”—the aggregate of our inputs, corrections, emotions, and histories—with the end goal of tailoring responses that make interactions more human-like and meaningful.

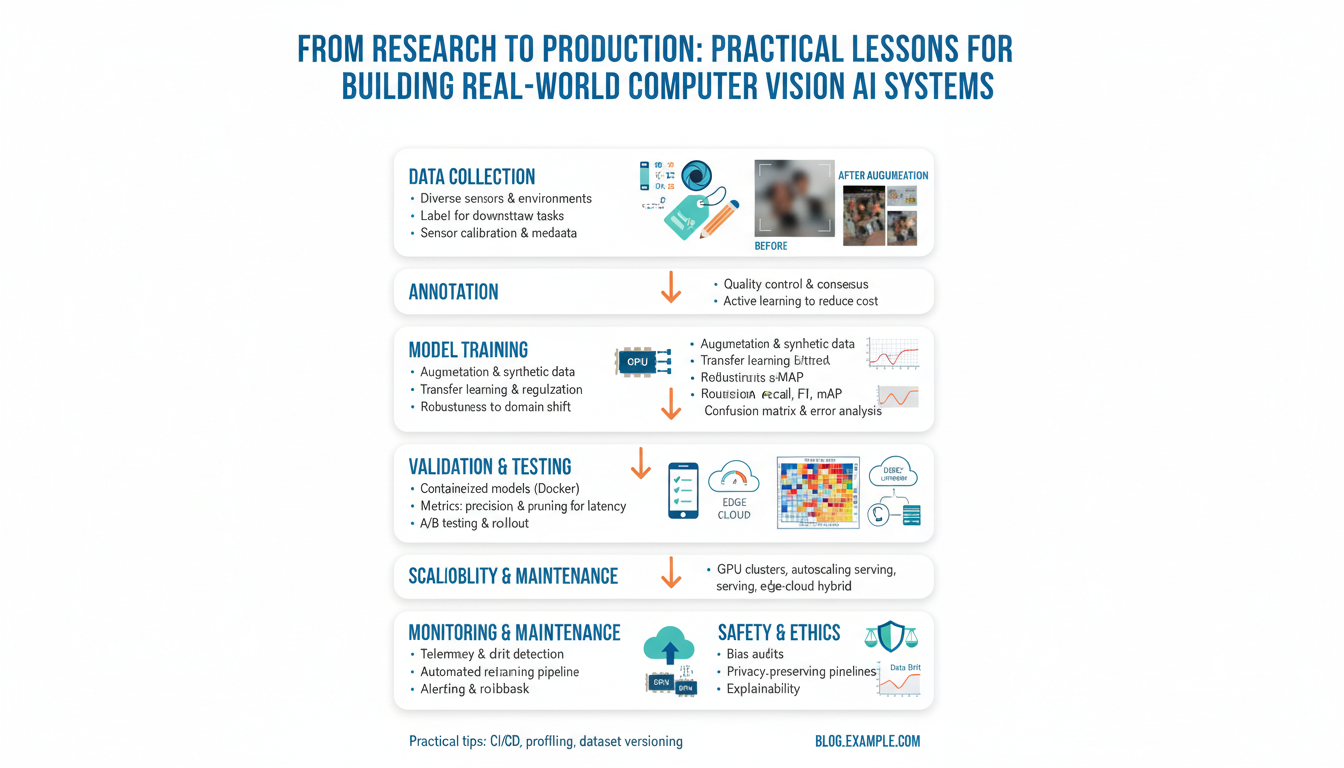

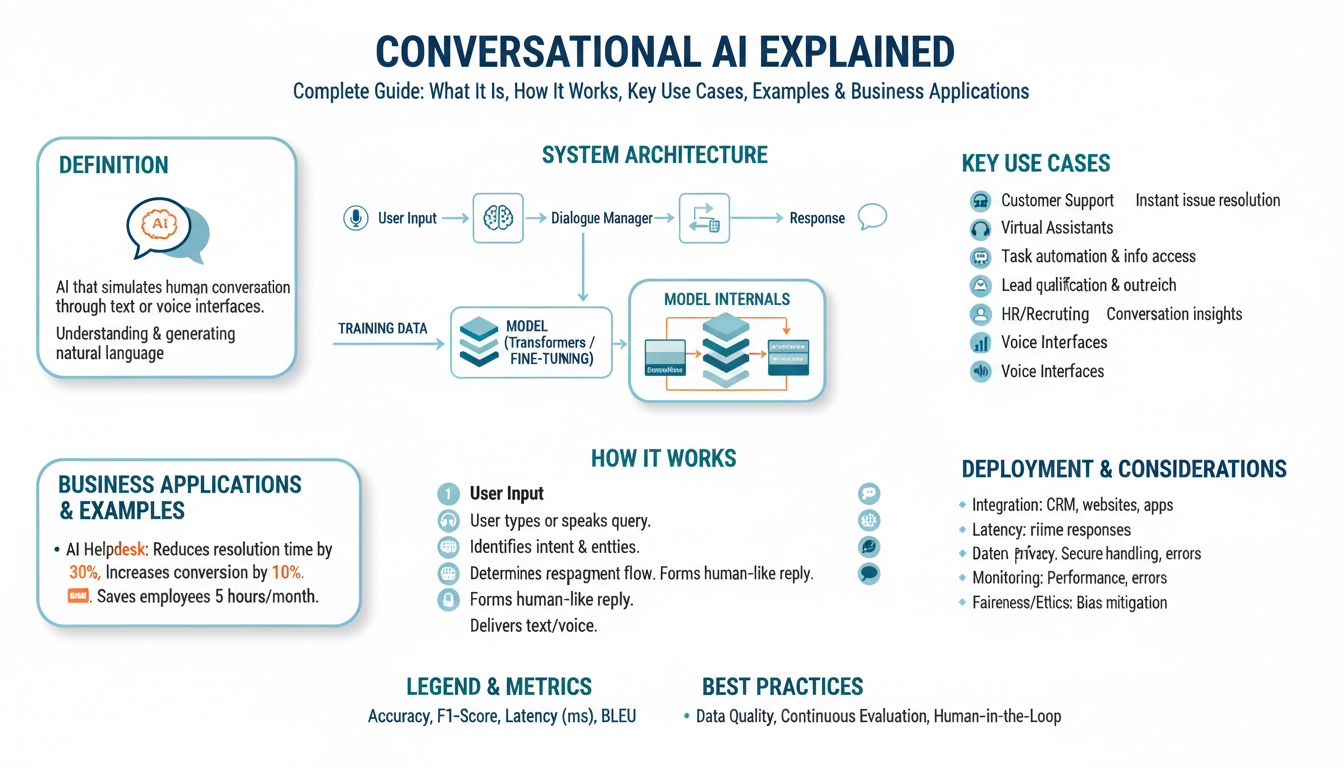

To see context engineering in practice, consider an AI-powered customer support chatbot. Instead of answering each question in isolation, an advanced bot recalls previous queries, recognizes when a user returns with a related problem, and adapts its responses to the troubleshooting history so far. This memory-driven approach improves user satisfaction and supports the shift toward more proactive, helpful AI systems. For a deeper dive into how large language models maintain context, DeepMind has published an illustrative explanation.

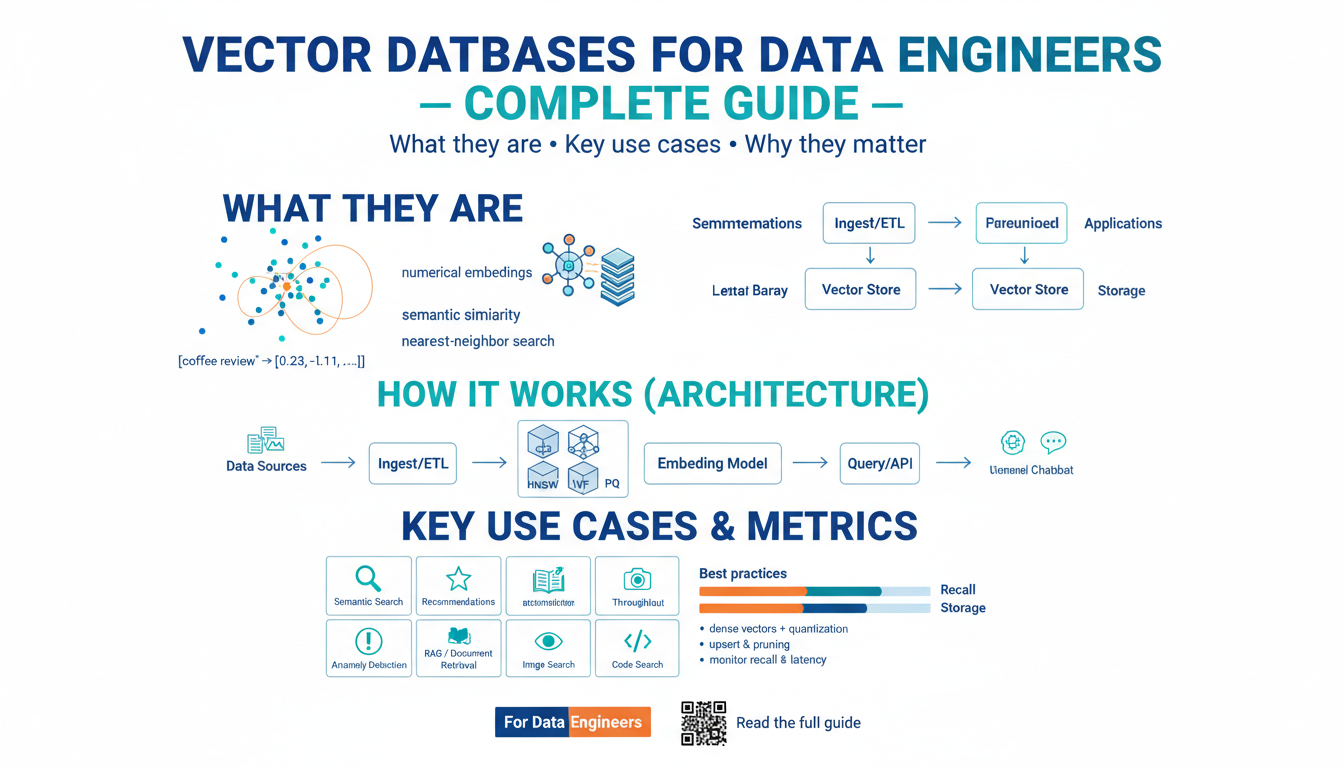

From a technical standpoint, context engineering relies on memory architectures. Transformers are built around attention layers, and recurrent neural networks (RNNs) can be augmented with them; in both cases, attention lets the system weigh the importance of prior information and refer back to it as needed. Engineers also often add external data stores, known as “memories” or “context buffers”, so that models can keep track of relevant user data across sessions.
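As a rough illustration of the “context buffer” idea, the sketch below keeps the last few turns per user in memory so they can be prepended to the next prompt. The `ContextBuffer` class, its method names, and the `max_turns` parameter are hypothetical names chosen for this example, not part of any particular library, and a real system would likely persist the data rather than hold it in a Python dictionary.

```python
from collections import defaultdict, deque


class ContextBuffer:
    """Hypothetical per-user buffer holding the most recent conversation turns."""

    def __init__(self, max_turns: int = 4):
        # One bounded deque per user; the oldest turn is dropped automatically
        # once max_turns is exceeded.
        self._turns = defaultdict(lambda: deque(maxlen=max_turns))

    def add(self, user_id: str, role: str, text: str) -> None:
        """Record one turn (for example role='user' or role='assistant')."""
        self._turns[user_id].append((role, text))

    def render(self, user_id: str) -> str:
        """Return the stored turns as plain text, ready to prepend to a prompt."""
        return "\n".join(f"{role}: {text}" for role, text in self._turns[user_id])


buffer = ContextBuffer(max_turns=2)
buffer.add("u1", "user", "My router keeps dropping Wi-Fi.")
buffer.add("u1", "assistant", "Have you tried restarting it?")
buffer.add("u1", "user", "Yes, it still drops.")

# Only the two most recent turns survive because max_turns=2.
print(buffer.render("u1"))
```

Bounding the buffer is a deliberate choice in this sketch: it keeps prompts short and limits how much user data is retained by default.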

Yet context engineering is not only about technology. It also raises important questions around privacy, security, and ethical memory. Deciding what machines should remember, and for how long, requires careful thought and regulation, because the goal is to balance personalization with respect for individual privacy rights. Organizations and AI developers are increasingly turning to regulations such as GDPR and to privacy-preserving machine learning techniques to keep context engineering ethical.

In summary, context engineering is both a science and an art. It lies at the intersection of memory architectures, ethical considerations, and human-centered design. By teaching machines to remember our stories, engineers are paving the way for AIs that are more empathetic, reliable, and seamlessly embedded into our digital lives. For more insights, see Stanford HAI’s material on the advances and future outlook of context-aware AI.

Why Machines Need to Understand Context

Imagine having a conversation with someone who doesn’t remember what you said a few minutes ago. It would be frustrating, confusing, and ultimately unproductive. The challenge machines face when they process information without context is remarkably similar. Without understanding the “what, where, and why” behind data, machines can only provide shallow or generic responses, failing to truly grasp human intent or nuance. This is why context is crucial.

When machines understand context, they move from being mere repositories of data to becoming intelligent collaborators. For instance, a search engine that recognizes context can distinguish between “Apple” the company and “apple” the fruit based on your previous queries, your location, or even the device you’re using. This ability to personalize results enhances relevance and utility, making digital interactions more seamless and human-like. The importance of contextual understanding in artificial intelligence has been explored in detail by researchers at MIT and other leading institutions.

Context is not just about remembering keywords—it’s about forming connections. Consider a virtual assistant that schedules a meeting after you mention “next Friday”. For it to succeed, the machine must understand your calendar, time zones, preferences, and maybe even your colleagues’ habits. This depth of comprehension turns basic automation into genuine assistance. A primer on the challenges and advancements in contextual AI can be found through MIT Technology Review, which explains how context is redefining digital intelligence.
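To make the “next Friday” example concrete, here is a minimal sketch of the first step only: resolving the phrase against a reference date. It assumes “next Friday” means the first Friday strictly after the reference date, which is one of several possible readings; the function name `next_weekday` is made up for this example, and time zones and calendar availability are left out.

```python
from datetime import date, timedelta

FRIDAY = 4  # date.weekday() numbers Monday as 0, so Friday is 4


def next_weekday(reference: date, weekday: int) -> date:
    """Return the first date strictly after `reference` that falls on `weekday`."""
    # The "- 1 ... + 1" shifts the modulo range from 0..6 to 1..7, so a
    # reference that already falls on the target weekday moves a full week ahead.
    days_ahead = (weekday - reference.weekday() - 1) % 7 + 1
    return reference + timedelta(days=days_ahead)


# The same words resolve differently depending on when they are said.
said_on_wednesday = next_weekday(date(2024, 5, 1), FRIDAY)
said_on_friday = next_weekday(date(2024, 5, 3), FRIDAY)
print(said_on_wednesday)  # 2024-05-03
print(said_on_friday)     # 2024-05-10
```

Even this small step needs context (the date on which the phrase was spoken), which is the point the paragraph above makes about calendars and time zones.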

Context engineering addresses several real-world challenges:

- Improving Communication: When chatbots and virtual assistants understand prior interactions, they can continue conversations naturally, reducing user frustration.

- Enhancing Relevance: Recommendation engines, as seen on platforms like Netflix, analyze context—such as viewing history and user profiles—to suggest content you’re more likely to enjoy.

- Boosting Security: Context-aware systems can better detect anomalies, such as unauthorized login attempts, by recognizing when a location or device doesn’t match normal patterns. This is a key aspect of modern cybersecurity strategies outlined by organizations such as IBM.
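The security item in the list above can be sketched as a comparison between a login attempt and what is already known about the user. This is a deliberately simple illustration: `LoginProfile`, `risk_signals`, and the signal names are hypothetical, and production systems typically combine many more signals with statistical models.

```python
from dataclasses import dataclass, field


@dataclass
class LoginProfile:
    """Hypothetical record of the devices and countries a user normally logs in from."""
    known_devices: set = field(default_factory=set)
    known_countries: set = field(default_factory=set)


def risk_signals(profile: LoginProfile, device_id: str, country: str) -> list:
    """Return the ways in which this login differs from the user's usual context."""
    signals = []
    if device_id not in profile.known_devices:
        signals.append("new_device")
    if country not in profile.known_countries:
        signals.append("new_country")
    return signals


profile = LoginProfile(known_devices={"laptop-1"}, known_countries={"DE"})
print(risk_signals(profile, "laptop-1", "DE"))  # []
print(risk_signals(profile, "phone-9", "BR"))   # ['new_device', 'new_country']
```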

In essence, machines that understand context can personalize, adapt, and anticipate human needs, building trust and opening new realms of collaboration. The journey to this level of intelligence is at the heart of context-aware AI, which is already shaping the way we interact with technology every day.

Key Techniques in Teaching Machines to Remember

To teach machines how to remember our stories effectively, a blend of intelligent methodologies and advanced algorithms is required. By integrating a handful of key techniques, we can empower artificial intelligence systems to understand, store, and retrieve information with contextual awareness that edges ever closer to human memory. Let’s dive deep into some essential strategies in machine memory engineering and explore how developers and researchers are shaping the future of context-aware AI.

1. Embedding Context through Sequence Models

At the core of machine memory lies the ability to embed context—a process crucial when dealing with stories or extended conversations. Recurrent Neural Networks (RNNs) and their more advanced variants like Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks have revolutionized the way machines process sequences and remember relevant context over time. These architectures can retain information across multiple steps, much like remembering character arcs within a novel. For example, LSTM networks have shown remarkable success in natural language processing by remembering important story elements across paragraphs, not just sentences. For a comprehensive overview, see Chris Olah’s explanation of LSTMs and their applications.

2. Attention Mechanisms and Transformers

A major breakthrough in machine learning came with attention mechanisms and transformer architectures. Unlike recurrent models, which process a sequence one step at a time, transformers can “attend” to different parts of the narrative simultaneously. This means that when a machine processes a story, it can focus on both the beginning and the end, recalling a protagonist’s early choices while interpreting their actions in the final act. These systems form the foundation of some of today’s most advanced language models, providing machines with a richer, more nuanced sense of memory that balances immediacy and contextual depth.
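The core computation behind attention can be sketched in a few lines. The example below implements scaled dot-product attention for a single query in plain Python; the function names and the toy vectors are assumptions made for illustration, and real transformers apply the same idea to learned, much larger matrices using a numerical library.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    # Score each key by how well it matches the query.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors.
    width = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(width)]
    return weights, output


# Toy data: positions 0 and 2 have keys that match the query, position 1 does not.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]

weights, output = attend(query, keys, values)
print([round(w, 3) for w in weights])
print([round(x, 3) for x in output])
```

In this toy run the first and third positions receive equal weight, and both outweigh the second, so the output is drawn mostly from their values regardless of how far apart those positions are in the sequence.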

3. Knowledge Graphs and Semantic Memory

While sequence and attention models allow machines to recall storylines, knowledge graphs enable them to connect facts, relationships, and meaning across vast webs of information. By mapping entities (such as people, places, and events) and their interrelations, these graphs offer an external, persistent memory. For instance, if an AI is reading a biography, it can store facts (birthplaces, milestones) and use them contextually in dialogue or summary. Google’s use of knowledge graphs in its search algorithms is a prime example of this technique in action.
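A knowledge graph can be approximated, for illustration, as a set of (subject, relation, object) triples. The `KnowledgeGraph` class and the relation names below are hypothetical and kept in memory only; dedicated graph databases offer far richer querying.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Hypothetical in-memory store of (subject, relation, object) triples."""

    def __init__(self):
        self._edges = defaultdict(set)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self._edges[(subject, relation)].add(obj)

    def query(self, subject: str, relation: str) -> list:
        """Return every object linked to `subject` by `relation`, sorted for stable output."""
        return sorted(self._edges.get((subject, relation), set()))


graph = KnowledgeGraph()
graph.add("Ada Lovelace", "born_in", "London")
graph.add("Ada Lovelace", "collaborated_with", "Charles Babbage")
graph.add("Charles Babbage", "designed", "Analytical Engine")

# A two-hop question: what did Ada Lovelace's collaborators design?
collaborators = graph.query("Ada Lovelace", "collaborated_with")
designs = [d for person in collaborators for d in graph.query(person, "designed")]
print(designs)  # ['Analytical Engine']
```

The two-hop query is what separates this from a flat list of facts: the answer is never stored directly, it is assembled by following relationships.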

4. Episodic Memory and Memory-Augmented Networks

A more human-like approach involves episodic memory, where machines remember specific events or experiences. Memory-augmented neural networks, such as Neural Turing Machines and Differentiable Neural Computers, allow models to read from and write to external memory. These structures let AI systems reference previous interactions, much like a storyteller recalling past moments to enrich present narratives. This is vital for applications like conversational AI or personal digital assistants, enabling long-term personalization and coherence throughout interactions.
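The read-and-write idea can be illustrated with a heavily simplified external memory. The sketch below uses content-based addressing (cosine similarity followed by a softmax) to blend stored rows, which is in the spirit of how memory-augmented networks read; it is not an implementation of a Neural Turing Machine or Differentiable Neural Computer, nothing here is learned, and the `ExternalMemory` class and `sharpness` parameter are names invented for the example.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class ExternalMemory:
    """Hypothetical memory bank: write appends a row, read blends rows by similarity."""

    def __init__(self):
        self.rows = []

    def write(self, vector):
        self.rows.append(list(vector))

    def read(self, key, sharpness=5.0):
        """Return (weights, blended_row); higher sharpness focuses on the best match."""
        if not self.rows:
            raise ValueError("cannot read from an empty memory")
        sims = [sharpness * cosine(key, row) for row in self.rows]
        peak = max(sims)
        exps = [math.exp(s - peak) for s in sims]
        total = sum(exps)
        weights = [e / total for e in exps]
        width = len(self.rows[0])
        blended = [sum(w * row[i] for w, row in zip(weights, self.rows))
                   for i in range(width)]
        return weights, blended


memory = ExternalMemory()
memory.write([1.0, 0.0, 0.0])  # episode A
memory.write([0.0, 1.0, 0.0])  # episode B
memory.write([0.0, 0.0, 1.0])  # episode C

# A key that resembles episode A retrieves mostly episode A.
weights, recalled = memory.read([0.9, 0.1, 0.0])
print(weights.index(max(weights)))  # 0
```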

5. Reinforcement Learning for Narrative Recall

Reinforcement learning gives machines an incentive-based mechanism to optimize memory retention. When combined with attention and episodic memory, AI can learn which details of a story are critical to recall at key moments. For example, in interactive storytelling or game AI, the system might be rewarded for referencing important past events, leading to more engaging, coherent narratives. Learn more about reinforcement learning techniques at DeepMind’s research hub.

These techniques, when woven together, represent the cutting edge of context engineering. Not only do they help machines remember our stories, but they also empower AI to understand, refine, and retell them in ways that make technology an ever-more attentive storyteller. As developments continue, we edge closer to machines that don’t just process data, but actively participate in the ongoing narrative of human experience.

The Role of Artificial Intelligence in Contextual Memory

Artificial intelligence (AI) has made remarkable strides in processing and understanding information, but its true potential emerges when it goes beyond simple data analysis to encompass contextual memory. This capability enables machines to “remember” previous interactions, understand the nuances of human conversation, and personalize responses. Let’s delve into how AI is developing contextual memory and why it matters for human-computer interaction.

Understanding Contextual Memory in AI

At its core, contextual memory allows AI systems to retain and leverage relevant information from prior encounters. Rather than treating each query or command as isolated, advanced AI frameworks build a memory of previous inputs to inform current and future behavior. For instance, virtual assistants like Google Assistant or Amazon’s Alexa can track ongoing conversations, switch between topics, and recall user preferences over time. This ability is made possible by ongoing advances in machine learning architectures such as large language models, which are designed to handle sequences of data and recognize patterns across vast textual contexts.

The Mechanics of Contextual Memory

Contextual memory in AI involves several technical steps:

- Data Embedding: Machine learning algorithms encode words, phrases, and even entire passages into numerical representations called embeddings. These embeddings help models relate current input to past data and discern context.

- Attention Mechanisms: Innovations such as Transformer architectures use attention layers that allow AI to “focus” on the most relevant parts of previous conversations, mirroring the human tendency to recall specific details that matter most.

- Long-Term Memory Modules: Recent research is exploring how to embed long-term memory into AI systems, inspired by human cognition, so they can remember relevant information across extended time periods, not just within a single session. Projects like DeepMind’s episodic control demonstrate models that learn from past “episodes” or experiences.
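The embedding step in the list above can be sketched as follows. To stay self-contained, this example uses word counts as a stand-in for embeddings, which is a loud simplification: real systems use learned dense vectors from a trained model. The function names and sample sentences are invented for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (an assumption for this sketch)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors."""
    dot = sum(a[token] * b[token] for token in a)  # missing tokens count as 0
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def most_relevant(query: str, history: list) -> str:
    """Return the past turn whose vector is closest to the query's vector."""
    query_vector = embed(query)
    return max(history, key=lambda turn: cosine(query_vector, embed(turn)))


history = [
    "my router keeps dropping the connection",
    "i would like to update my billing address",
    "what are your opening hours",
]
best = most_relevant("the router is dropping again", history)
print(best)  # my router keeps dropping the connection
```

Swapping `embed` for a learned embedding model would leave the retrieval logic unchanged, which is why similarity search over vectors is a common building block for contextual memory.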

Impactful Use Cases of Contextual AI Memory

Context engineering is not just theoretical—it powers several practical applications:

- Personalized Recommendations: Services like Facebook and Netflix rely on contextual memory to recall user preferences and predict content, making experiences uniquely tailored.

- Healthcare Solutions: AI models can track and aggregate patient interactions, symptoms, and history to provide highly contextual medical support.

- Conversational AI: Chatbots and customer service agents now draw from entire histories of interaction to deliver more natural, human-like support, rather than asking users to repeat themselves.

Challenges and Ethical Considerations

With great memory comes great responsibility. AI’s ability to store and recall massive amounts of contextual data brings privacy and ethical concerns. Ensuring transparency, user consent, and robust security measures is essential, as outlined by organizations like the World Economic Forum. Balancing advanced contextual memory with individual rights and data protection is key to AI’s continued responsible evolution.

By developing richer contextual memory, artificial intelligence is progressing toward genuinely understanding human stories, remembering our preferences, and responding in ways that feel intuitive and empathetic. The art of teaching machines to retain and recall our stories is quickly becoming a cornerstone in the next generation of computing.

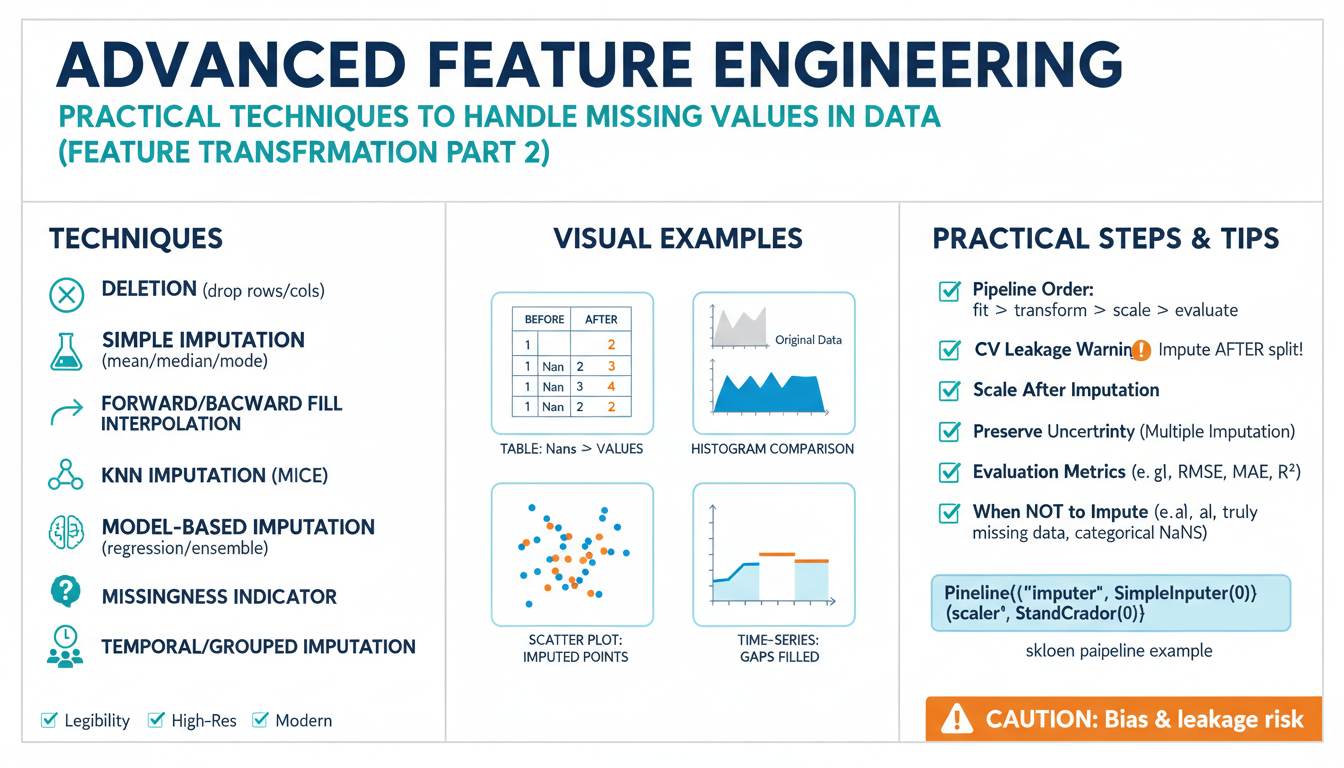

Challenges in Context Engineering

One of the foremost challenges in context engineering is data sparsity. Machines often struggle to capture the richness of human stories because real-world context is not easily quantifiable or always present. For instance, the way a machine interprets a sentence can change drastically depending on prior conversations, emotional tone, or cultural references. This discrepancy highlights the need for scalable and efficient methods to collect, store, and retrieve context. Researchers at MIT have detailed experiments showing how insufficient context leads to AI hallucinations and misunderstandings, emphasizing the importance of robust context frameworks.

Another persistent issue is context drift. As conversations continue over time, the original topic or intent may shift, causing machines to lose track of what truly matters. This phenomenon, described in studies by the Stanford Artificial Intelligence Laboratory, shows that even advanced language models can get “lost” without deliberate tracking and updating of context clues. Engineers combat this by designing context-aware algorithms that continuously adapt to new information and correct for drift, though this is far from a solved problem.

Context engineering also faces significant privacy and ethical dilemmas. Capturing context often means storing sensitive personal data, a complex challenge given privacy regulations around the world such as GDPR. Balancing the need for highly personalized machine intelligence with the imperative to protect user confidentiality pushes the boundaries of current privacy-preserving technologies like homomorphic encryption. Transparency, user consent, and data minimization have become guiding principles, but they require ongoing vigilance and technical innovation.
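One small, concrete form of data minimization is to give every remembered item an expiry time and to support deleting everything held about a user. The sketch below is an assumption-laden illustration, not a compliance recipe: `ExpiringMemory`, its method names, and the time-to-live policy are hypothetical, and the current time is passed in explicitly so the behavior is easy to follow.

```python
class ExpiringMemory:
    """Hypothetical store that forgets entries older than ttl_seconds."""

    def __init__(self, ttl_seconds: float):
        self.ttl = ttl_seconds
        self._items = {}  # (user_id, key) -> (value, stored_at)

    def remember(self, user_id: str, key: str, value: str, now: float) -> None:
        self._items[(user_id, key)] = (value, now)

    def recall(self, user_id: str, key: str, now: float):
        """Return the value if it is still within its lifetime, otherwise None."""
        entry = self._items.get((user_id, key))
        if entry is None:
            return None
        value, stored_at = entry
        if now - stored_at > self.ttl:
            del self._items[(user_id, key)]  # expired: drop it on access
            return None
        return value

    def forget_user(self, user_id: str) -> int:
        """Delete everything stored for one user; return how many entries were removed."""
        stale = [k for k in self._items if k[0] == user_id]
        for k in stale:
            del self._items[k]
        return len(stale)


memory = ExpiringMemory(ttl_seconds=3600)
memory.remember("u1", "preferred_language", "German", now=0)
print(memory.recall("u1", "preferred_language", now=1800))  # German
print(memory.recall("u1", "preferred_language", now=7200))  # None
```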

Finally, representing context in a machine-readable manner poses design and implementation hurdles. Humans can effortlessly recall emotions, sarcasm, and subtext, but encoding even a fraction of these subtleties for algorithms is remarkably challenging. Recent research at the University of Cambridge explores new representations like knowledge graphs and vector embeddings, making incremental progress. For example, context engineering in dialogue systems involves not merely storing previous user statements, but encoding their meaning, relevance, and relationships to future exchanges.

In tackling these challenges, context engineers must blend technical advances with thoughtful design, always mindful of the delicate nature of human stories machines are set to remember.

Real-World Applications: How Machines Remember Our Stories

Imagine a machine that remembers your favorite book as easily as your closest friend does, or a company’s chatbot that carries a customer conversation seamlessly across months. This is not science fiction; these breakthroughs are happening today, thanks to context engineering. Let’s explore several real-world applications that illustrate how machines now recall the ongoing narrative of our lives, sometimes better than we do ourselves.

1. Healthcare: Patient Histories That Stay With You

In healthcare, context engineering enables medical systems to retain and interpret patient histories, symptoms, and treatment plans across multiple visits. For instance, Electronic Health Records (EHRs) aggregate and store vast troves of data, but the real leap is in how AI systems are now leveraging this context to assist doctors. When a patient visits a new physician, the system can surface nuanced patterns—such as subtle changes in blood test results or recurring symptoms—across years of data. Research from institutions like Mayo Clinic highlights how contextually aware systems are reducing errors and improving diagnostic accuracy.

2. Customer Support: Effortless and Personalized Assistance

Have you ever experienced the frustration of re-explaining an issue every time you contact a company? Context engineering in customer support changes that experience. AI-powered chatbots and virtual assistants now retain and reference previous interactions. For example, if you reported a broken device last month, the system can greet you by referencing that same issue, offering updates or follow-up questions without missing a beat. Major providers like IBM Watson Assistant use sophisticated memory models to achieve these personalized, context-rich interactions, creating smoother, less repetitive customer journeys.

3. Personalized Digital Assistants: Anticipating Your Needs

Digital assistants such as Google Assistant and Amazon Alexa excel at remembering user preferences and routines. Over time, these systems build a timeline of your requests, reminders, and even the context in which you ask for information. If you routinely request weather updates before your morning commute, your assistant might proactively provide forecasts at the right time. For deeper technical insights, consult the work on the Google AI Blog about learning to remember rare events, which is key to truly personalized assistance.

4. Education: Personalized Learning Journeys

Educational platforms now leverage AI to remember and adapt to each learner’s story. Platforms like Khan Academy track a student’s progress, strengths, and areas for improvement. The application of context engineering allows these systems to recommend personalized learning paths, review forgotten concepts, or skip mastered material. The Stanford Graduate School of Education explores how AI-driven context retention enhances learning outcomes and engagement.

5. Legal and Compliance: Threading Through Complex Cases

Legal AI solutions sift through mountains of case law, briefs, and historical data to surface relevant precedents and facts for ongoing cases. By remembering intricate details from prior interactions and filings, these systems help law professionals save countless hours and reduce errors. For a deeper dive, the University of Pennsylvania Law School discusses how context-aware AI is transforming legal research and compliance tasks.

The art of context engineering isn’t simply about storing information—it’s about recalling, relating, and adapting it to each new situation. Through these examples, it’s easy to see why the ability for machines to remember our stories is setting a new standard for intelligence, empathy, and efficiency across industries.