Start With Business KPIs

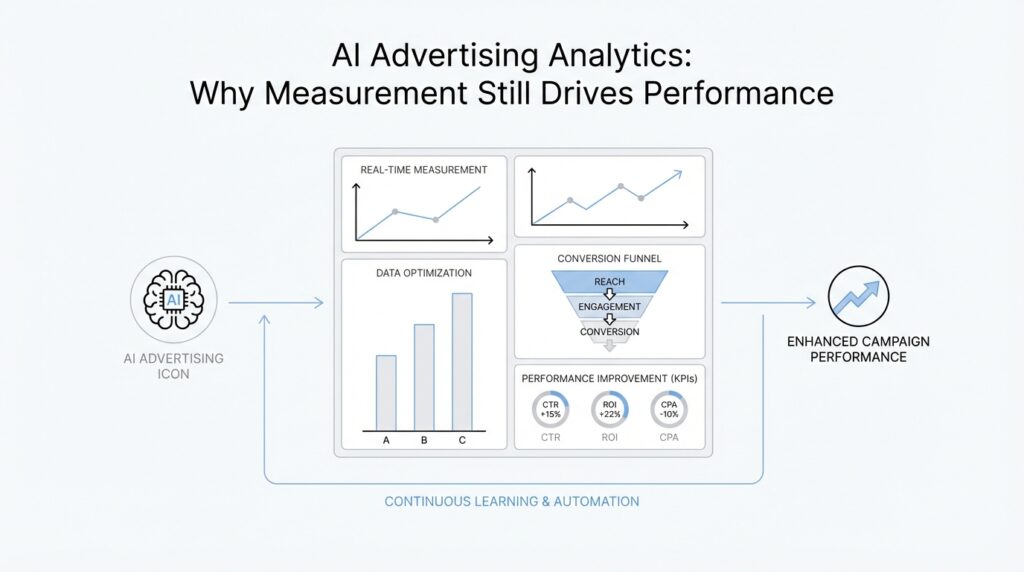

Building on that foundation, the first move in AI advertising analytics is not to open a dashboard full of clicks and impressions. It is to name the business outcome you actually care about. A KPI, or key performance indicator, is a measurable signal that tells you whether you are moving toward a goal, and the strongest measurement plans work backward from that goal rather than forward from whatever the platform happens to show. If your business wants sales, profit, or qualified leads, that target should shape the rest of the conversation.

That choice matters because not every metric deserves equal weight. A campaign can generate plenty of activity and still miss the point if it does not help the business grow in a meaningful way. Google Ads explicitly recommends choosing a bidding strategy that aligns with your main business goal, and for goals like sales, profit, or qualified leads, it points you toward conversion value and target ROAS, which stands for return on ad spend. In other words, the system should be asked to optimize for business value, not vanity numbers.
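To make the difference between activity and value concrete, here is a minimal sketch that ranks two campaigns by clicks and by ROAS (conversion value divided by ad spend). The campaign names and figures are hypothetical, chosen only to show that the two rankings can disagree.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    name: str
    spend: float             # ad spend in account currency
    clicks: int
    conversion_value: float  # value attributed to conversions (e.g. revenue)

    @property
    def roas(self) -> float:
        """Return on ad spend: conversion value per unit of spend."""
        return self.conversion_value / self.spend if self.spend else 0.0


# Hypothetical figures for illustration only.
campaigns = [
    Campaign("broad_awareness", spend=1000.0, clicks=5000, conversion_value=1500.0),
    Campaign("high_intent", spend=1000.0, clicks=800, conversion_value=4200.0),
]

most_clicks = max(campaigns, key=lambda c: c.clicks)
best_roas = max(campaigns, key=lambda c: c.roas)

for c in campaigns:
    print(f"{c.name}: clicks={c.clicks}, ROAS={c.roas:.2f}")
print(f"Most clicks: {most_clicks.name}; best ROAS: {best_roas.name}")
```

With these made-up numbers, the campaign that wins on clicks loses on ROAS, which is exactly the situation a value-based KPI is meant to expose.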

How do you know whether a campaign is actually helping the business? Start by matching the KPI to the way value is created. If you sell products, revenue and conversion value usually matter more than raw traffic. If you generate leads, then a lead is not the finish line; the real KPI is often a qualified lead or another downstream signal that predicts revenue. Google Analytics also treats important interactions as key events, and those events can be turned into conversions for reporting and optimization, which is a useful reminder that measurement should follow the real customer journey, not just the last click.

This is where AI advertising analytics becomes much more useful. Once you name the business KPI, the model has something meaningful to chase. Google’s Meridian measurement framework, for example, is built around a target KPI and often uses sales data as that target, alongside media data and control variables that also influence the outcome. If your business does not measure success in revenue, Meridian notes that the KPI itself can become the outcome to model. That is the key idea: the KPI is the anchor, and everything else exists to explain or improve it.

From there, the practical work becomes clearer. You pick one primary business KPI, then decide which supporting metrics tell you whether the KPI is healthy or just temporarily inflated. That might mean pairing revenue with profit, or qualified leads with lead-to-sale rate, so you do not mistake busy activity for real progress. Google Ads also emphasizes expressing conversion value in a way that reflects your business, including revenue, profit, offline conversion value, or lifetime value, which is another way of saying that measurement should tell the truth about what matters. Once that truth is in place, the next step is to connect it to the signals your campaigns and models can actually learn from.
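One way to pair a primary KPI with a supporting metric is sketched below, assuming qualified leads as the primary KPI and lead-to-sale rate as the health check. The field names, the rule itself, and the period figures are illustrative assumptions, not a standard definition.

```python
def lead_to_sale_rate(qualified_leads: int, sales: int) -> float:
    """Share of qualified leads that became closed sales."""
    return sales / qualified_leads if qualified_leads else 0.0


def primary_kpi_looks_inflated(prev: dict, curr: dict) -> bool:
    """True when qualified leads grew but closed sales did not."""
    leads_grew = curr["qualified_leads"] > prev["qualified_leads"]
    sales_grew = curr["sales"] > prev["sales"]
    return leads_grew and not sales_grew


# Hypothetical period totals for illustration only.
previous = {"qualified_leads": 200, "sales": 40}
current = {"qualified_leads": 260, "sales": 39}

for label, period in (("previous", previous), ("current", current)):
    rate = lead_to_sale_rate(period["qualified_leads"], period["sales"])
    print(f"{label}: leads={period['qualified_leads']}, lead-to-sale rate={rate:.2%}")

print("Primary KPI looks inflated:", primary_kpi_looks_inflated(previous, current))
```

Here the lead count rises by 30 percent while sales stay flat, so the supporting metric signals that the headline KPI deserves a closer look before anyone raises budgets.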

Build a Clean Data Foundation

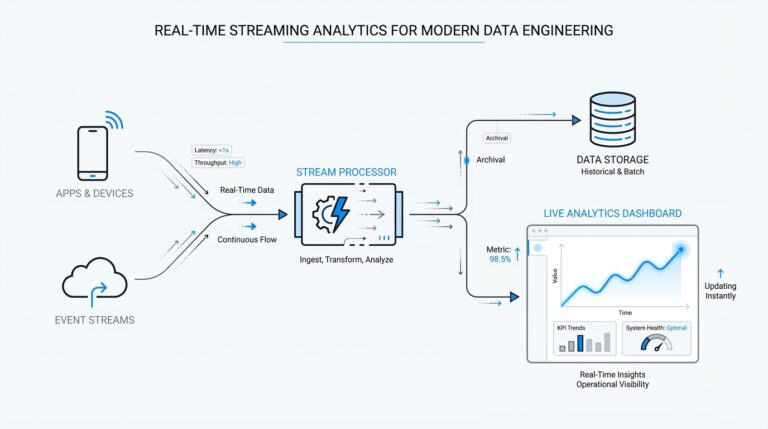

The next challenge is making sure your AI advertising analytics is fed by data you can trust. Think of it like cooking: a smart recipe cannot save bad ingredients, and a model cannot learn cleanly from duplicate purchases, missing values, or events that mean different things in different tools. How do you build a clean data foundation when your ad platforms, analytics stack, and CRM all speak slightly different languages? You start by making the inputs consistent, validated, and tied to the same business outcome.

The first piece is event design, which means deciding what should count as a meaningful action before you ask any system to optimize it. In Google Analytics 4, these meaningful actions are called key events, and you can mark them before or after they are sent; those key events can also be imported into Google Ads for reporting and optimization. That matters because it keeps the model focused on real progress, not on whatever happened to generate the most activity. In practice, this is where clean data stops being a technical detail and starts becoming a business decision.

Before those events ever touch production, it helps to test them the way you would test a new bridge before letting traffic cross it. Google recommends using the Measurement Protocol validation server or the Event Builder to verify events, and the validation endpoint uses /debug/mp/collect; events sent there do not appear in reports. That is a helpful habit because malformed events are often invisible until they have already polluted your numbers, and by then the cleanup is much harder. In other words, validation is not extra polish; it is part of the foundation itself.
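As a rough sketch of that validation habit, the snippet below builds a Measurement Protocol request aimed at the /debug/mp/collect endpoint using only the Python standard library. The measurement ID, API secret, client ID, and event parameters are placeholders, and the helper names are our own. The `validate` helper assumes the response is JSON with a `validationMessages` list, which matches our understanding of the validation server; check the current documentation before relying on it.

```python
import json
import urllib.parse
import urllib.request

VALIDATION_URL = "https://www.google-analytics.com/debug/mp/collect"


def build_validation_request(measurement_id, api_secret, client_id, event_name, params):
    """Build (but do not send) a POST request for the validation endpoint."""
    query = urllib.parse.urlencode(
        {"measurement_id": measurement_id, "api_secret": api_secret}
    )
    payload = {
        "client_id": client_id,
        "events": [{"name": event_name, "params": params}],
    }
    return urllib.request.Request(
        url=f"{VALIDATION_URL}?{query}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def validate(request):
    """Send the request and return the list of validation messages (assumed field)."""
    with urllib.request.urlopen(request, timeout=10) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body.get("validationMessages", [])


# Placeholder values: replace with a real measurement ID and API secret.
request = build_validation_request(
    measurement_id="G-XXXXXXXXXX",
    api_secret="YOUR_API_SECRET",
    client_id="123456.7890123456",
    event_name="purchase",
    params={"currency": "USD", "value": 120.0, "transaction_id": "T-1001"},
)
print(request.full_url)
# messages = validate(request)  # uncomment to call the validation server
```

Keeping request construction separate from sending makes it easy to inspect the payload in a unit test before any event reaches the network.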

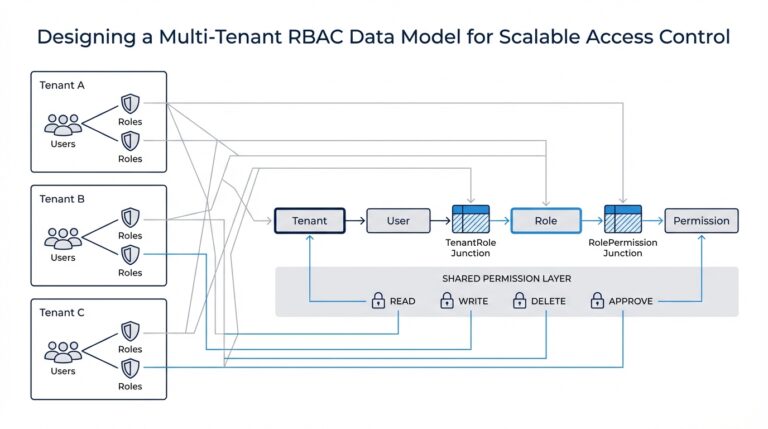

Once the events are valid, the next job is making sure the same person or session can be recognized across systems. This usually means keeping identifiers like client_id, user_id, or ad click IDs such as gclid aligned so that website activity, ad clicks, and downstream conversions can be connected instead of treated like separate stories. Google’s BigQuery guidance even shows how to join the gclid from Google Analytics event data to the Google Ads transfer, which is a practical way to connect ad interactions to outcomes. When that stitching works, you can ask better questions about which campaigns truly moved the KPI.
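The stitching idea can be sketched in a few lines of plain Python. This is not the BigQuery join itself: the record layout, field names, and values below are hypothetical and do not mirror the Google Analytics export or the Google Ads transfer schema. The point is only to show conversions being tied to campaigns through a shared gclid, with unmatched value tracked separately.

```python
from collections import defaultdict

# Hypothetical ad click records keyed by gclid.
ads_clicks = [
    {"gclid": "abc", "campaign": "brand_search"},
    {"gclid": "def", "campaign": "generic_search"},
    {"gclid": "ghi", "campaign": "generic_search"},
]

# Hypothetical analytics purchase events; some have no usable gclid.
ga_events = [
    {"gclid": "abc", "event_name": "purchase", "value": 120.0},
    {"gclid": "def", "event_name": "purchase", "value": 80.0},
    {"gclid": None, "event_name": "purchase", "value": 50.0},
    {"gclid": "zzz", "event_name": "purchase", "value": 30.0},
    {"gclid": "ghi", "event_name": "purchase", "value": 20.0},
]

# Lookup table from click ID to campaign.
campaign_by_gclid = {click["gclid"]: click["campaign"] for click in ads_clicks}

value_by_campaign = defaultdict(float)
unmatched_value = 0.0
for event in ga_events:
    campaign = campaign_by_gclid.get(event["gclid"])
    if campaign is None:
        # No matching ad click: keep the value visible instead of dropping it.
        unmatched_value += event["value"]
    else:
        value_by_campaign[campaign] += event["value"]

print(dict(value_by_campaign))
print("Unmatched value:", unmatched_value)
```

Tracking the unmatched total is a deliberate choice: a growing share of conversions without a matching click ID is often the first sign that identifiers have drifted apart between systems.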

This is also where measurement modeling becomes much stronger. Meridian, Google’s open-source marketing mix modeling framework, expects a target KPI, media data, and control variables; those control variables are the outside forces that affect both the KPI and the media signal, like search demand or seasonality. If the KPI is noisy or the supporting data is inconsistent, the model starts guessing instead of explaining, and the answer becomes less useful. So a clean data foundation is not about collecting everything; it is about collecting the right things in a way that the model can compare fairly.

With that in place, you can treat values with more nuance instead of pretending every conversion is identical. Google Ads recommends reporting revenue or conversion values when some conversions matter more than others, then using Maximize conversion value with an optional target ROAS. That advice fits the larger pattern here: the cleaner your data, the more confidently AI advertising analytics can optimize toward value instead of volume. Once the foundation is steady, the next step is to let those signals flow into the models that will turn them into decisions.

Use Attribution for Direction

Attribution is where AI advertising analytics starts to answer the question behind the numbers: which touchpoints actually helped move the result? An attribution model is the rule that decides how much credit each ad interaction gets on the way to a conversion, and Google uses these models to help you understand performance and optimize across a customer journey instead of staring at a single last touch. That shift matters because the path to a sale often looks more like a winding trail than a straight line.

Think of attribution as a compass, not a courtroom verdict. In Google Ads, you can compare the old last-click view with data-driven attribution, which uses Google AI to allocate credit based on how customers actually convert, and that comparison can reveal keywords, ad groups, campaigns, or devices that looked weaker under last click than they really were. In Google Analytics 4, data-driven attribution assigns credit based on how adding each ad interaction changes the estimated probability of a key event, which is a useful way to see influence instead of only the final step.
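Data-driven attribution itself is a proprietary model, so the sketch below does not try to reproduce it. Instead it swaps in a simple linear (equal-share) rule as a stand-in multi-touch model and compares it with last click on a few hypothetical conversion paths, just to show how credit can shift when the rule changes.

```python
from collections import defaultdict


def last_click(paths):
    """Give all of a conversion's value to the final touchpoint."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        credit[touchpoints[-1]] += value
    return dict(credit)


def linear(paths):
    """Split a conversion's value equally across every touchpoint."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        share = value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)


# Hypothetical conversion paths: (ordered touchpoints, conversion value).
paths = [
    (["generic_search", "brand_search"], 100.0),
    (["video", "generic_search", "brand_search"], 90.0),
    (["brand_search"], 50.0),
]

last_click_credit = last_click(paths)
linear_credit = linear(paths)

print("Last click:", last_click_credit)
print("Linear:    ", linear_credit)
```

Under last click, brand search collects all of the value. Under the linear rule, generic search and video pick up a share, which is the kind of gap worth investigating rather than treating as a verdict.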

This is where AI advertising analytics becomes more practical. If one model gives more weight to a brand campaign and another points toward a generic search campaign, we should treat that gap as a clue, not a contradiction to panic over. Google’s own guidance warns that changing attribution models can change bidding behavior; for example, moving from last click to data-driven attribution without adjusting targets can lead to underbidding on brand and overbidding on generic traffic. In other words, attribution tells you where to look next, but it does not replace judgment.

With the idea in place, it helps to separate optimization from reporting. In Google Ads, the primary conversion columns are what bidding systems use, while the Conversions (platform comparable) columns are a reporting-only view meant for cleaner comparisons across platforms. That distinction matters because AI advertising analytics is strongest when you know which numbers are steering the machine and which numbers are only helping you interpret what happened. If you mix those two roles together, it becomes much harder to tell whether the model is learning from the right signal.

A good way to work with attribution is to read it like a weather forecast. You do not need it to predict every raindrop perfectly; you need it to tell you whether the storm is approaching, passing, or still building. So when a model consistently gives more credit to certain channels, use that pattern to guide budget reviews, creative testing, and funnel analysis, then check whether the same story appears in your business KPI. That is the real value of attribution in AI advertising analytics: it turns scattered touchpoints into a direction you can act on, even when the journey is messy. Models also carry built-in fallback rules. In GA4's Google paid channels last click model, for example, a path with no Google Ads click falls back to paid and organic last click, which is another reminder that attribution is a guided view of reality, not reality itself.

Prove Lift With Experiments

The next question is the one that really tests AI advertising analytics: how do you know the ads caused the lift, and not just the timing, the season, or the people who were already going to buy? That is where experiments come in. An experiment is a structured test that compares a group exposed to your ads with a similar group that is not, so you can see the difference your media actually makes. Think of it like leaving one batch of bread in the oven and another on the counter; if only one rises, you have learned something real about the heat.

This matters because attribution can tell us where credit appears to land, but experiments help us prove incrementality, which means the extra results created by advertising above what would have happened anyway. That distinction is small in words and huge in practice. A campaign can look impressive in a dashboard and still be borrowing sales that would have arrived on their own. When you use AI advertising analytics well, you stop asking, “What got credited?” and start asking, “What changed because we ran this?”

So what does a lift test look like in practice? Usually, we split the audience, the geography, or the time window into two parts: a test group that sees the ads and a control group, often called a holdout, that does not. Then we compare the KPI we care about, such as revenue, qualified leads, or conversion value, across those two groups. The difference between them is the lift, and if the test is designed carefully, that lift gives you a cleaner view of causality than attribution alone can provide.
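The arithmetic behind that comparison can be sketched as follows, assuming a user-level split with revenue as the KPI. The group sizes, revenue totals, and spend are hypothetical, and the sketch leaves out the significance testing a real experiment would need before anyone acts on the result.

```python
def lift_summary(test_value, test_size, control_value, control_size, spend):
    """Compare KPI per user between the test group and the holdout."""
    test_rate = test_value / test_size
    control_rate = control_value / control_size
    absolute_lift = test_rate - control_rate        # extra KPI per exposed user
    relative_lift = absolute_lift / control_rate    # lift as a share of baseline
    incremental_value = absolute_lift * test_size   # extra KPI across the test group
    return {
        "test_rate": test_rate,
        "control_rate": control_rate,
        "absolute_lift": absolute_lift,
        "relative_lift": relative_lift,
        "incremental_value": incremental_value,
        "incremental_roas": incremental_value / spend,
    }


# Hypothetical experiment totals for illustration only.
summary = lift_summary(
    test_value=52_000.0, test_size=10_000,
    control_value=47_000.0, control_size=10_000,
    spend=2_000.0,
)

for key, value in summary.items():
    print(f"{key}: {value:.4f}")
```

Dividing incremental value by spend gives an incremental ROAS, which can look quite different from the ROAS a platform reports because it counts only results the holdout says would not have happened anyway.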

Now that we understand the basic shape, the real art is making the groups comparable. If one group contains more loyal customers, more high-income regions, or a stronger seasonal pattern, the result can fool you. That is why clean data and good experiment design go hand in hand in AI advertising analytics. You want the two sides of the test to look as similar as possible before the ads run, so that the main difference left is exposure to the campaign itself.
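A simple way to check comparability is to look at the KPI in both groups before the ads run. The sketch below compares pre-period means and flags a split whose relative gap exceeds a tolerance. The 5 percent tolerance and the weekly figures are arbitrary assumptions for illustration, and a real design would usually check more than one dimension.

```python
from statistics import mean


def relative_gap(test_pre, control_pre):
    """Relative difference between pre-period KPI means of the two groups."""
    control_mean = mean(control_pre)
    return abs(mean(test_pre) - control_mean) / control_mean


def groups_look_comparable(test_pre, control_pre, tolerance=0.05):
    """True when the pre-period gap is within the chosen tolerance."""
    return relative_gap(test_pre, control_pre) <= tolerance


# Hypothetical weekly KPI values from before the campaign started.
control_pre = [102.0, 108.0, 97.0, 103.0]
balanced_test_pre = [100.0, 110.0, 95.0, 105.0]
skewed_test_pre = [140.0, 150.0, 135.0, 145.0]

print("Balanced split OK:", groups_look_comparable(balanced_test_pre, control_pre))
print("Skewed split OK:  ", groups_look_comparable(skewed_test_pre, control_pre))
```

If a split fails this kind of check, it is usually cheaper to redraw the groups than to try to correct for the imbalance after the test has run.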

How do you choose the right experiment? The answer depends on what you are trying to learn. If you want to test a broad media strategy, a geo experiment, which compares performance across different regions, can be a strong fit because it measures real market-level impact. If you want to test a specific audience, a user holdout can work better because it isolates the effect of showing ads to some people but not others. The best choice is the one that matches the decision you need to make, not the one that looks most sophisticated on paper.

This is where experiments become especially valuable for AI-driven bidding and optimization. A model can only optimize toward the signal you give it, and if that signal is inflated by noise, the machine will happily learn the wrong lesson at scale. Experiments give you a reality check. They help you see whether the high-performing campaign is truly adding value or simply taking credit for demand that was already there, and that makes budget decisions far more confident.

It also helps to run experiments with a clear question in mind. Are you trying to prove that a brand campaign lifts direct conversions? Are you checking whether retargeting adds real value, or only harvests people already close to purchase? Those questions matter because not every tactic deserves the same testing setup. In AI advertising analytics, the experiment is not the finish line; it is the moment when measurement gets honest, and honesty is what lets you scale the channels that actually move the business forward.

Calibrate With Marketing Mix Modeling

Building on those experiments, the next step is to teach your broader measurement system what the tests have already revealed. This is where marketing mix modeling (MMM) comes in: it is a statistical method that looks at historical spend, sales, and outside influences like seasonality, promotions, and market shifts to estimate how each channel contributes to the business result. On its own, MMM can give you a helpful wide-angle view, but the real power comes when you calibrate it with what you learned from lift tests and holdouts. That calibration keeps the model from drifting into a neat-looking story that is not quite true.

Think of it like tuning a musical instrument after a rehearsal. The model may already be close, but experiments tell you which notes were sharp, which were flat, and which instruments were louder than they seemed on paper. When you feed those results back into marketing mix modeling, you are not starting over; you are tightening the fit between the model and reality. How do you know when that matters? Usually, it shows up when one channel looks unusually efficient in the model, but your test says the real-world lift was smaller, slower, or more conditional than expected.

This is especially useful because attribution and experiments answer different questions, while marketing mix modeling tries to connect them into one planning view. Attribution helps you see which touchpoints appear to assist conversions, and experiments help you prove incremental lift, but MMM helps you understand the bigger pattern across time. It can show how paid search, social, video, promotions, and even offline factors all move together. Once you calibrate MMM with experiment results, you get a model that is not only directional but grounded in observed business impact.

The practical value shows up when you start making budget decisions. Suppose a brand campaign looked expensive in the short term, but a geo holdout showed that it lifted demand in nearby channels and supported later conversions. A calibrated marketing mix model can absorb that evidence and avoid undervaluing the campaign. On the other hand, if retargeting appears to win too much credit in the model, a calibration step can pull that estimate back toward the lift test and prevent overspending on an audience that was already close to buying.

This is also where the word “calibrate” matters most. Calibration means aligning the model’s estimates with trusted reference points, not forcing every result to match perfectly. In practice, we use the experiment as an anchor and let MMM fill in the wider market story around it. That matters because a single test usually covers one region, one audience, or one time window, while the model must help you plan across the full business. Marketing mix modeling becomes far more useful when it can honor the local truth from the test and still explain the broader pattern.

Now that we have that picture, the process becomes easier to trust. You begin with a model built from historical data, then compare its channel estimates against the incremental lift you observed in experiments. If the gap is large, you revisit the inputs, the lag assumptions, the seasonality controls, or the way conversion value was defined. If the gap is small, you gain confidence that the model is learning the right lesson, and that confidence matters when budgets start shifting quickly.
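The comparison step can be sketched as a plain gap check between model estimates and experiment results. This is not how any particular MMM library performs calibration; it only illustrates the review described above. The channel names, incremental revenue figures, and 20 percent tolerance are hypothetical.

```python
def calibration_gaps(mmm_estimates, experiment_lift):
    """Relative gap between the model's estimate and the experiment, per channel."""
    return {
        channel: (mmm_estimates[channel] - lift) / lift
        for channel, lift in experiment_lift.items()
        if channel in mmm_estimates
    }


def channels_to_review(gaps, tolerance=0.20):
    """Channels whose model estimate differs from the test by more than the tolerance."""
    return sorted(channel for channel, gap in gaps.items() if abs(gap) > tolerance)


# Hypothetical incremental revenue per channel for the same period and scope.
mmm_estimates = {"retargeting": 50_000.0, "brand_video": 20_000.0}
experiment_lift = {"retargeting": 30_000.0, "brand_video": 19_000.0}

gaps = calibration_gaps(mmm_estimates, experiment_lift)
flagged = channels_to_review(gaps)

for channel, gap in gaps.items():
    print(f"{channel}: model is {gap:+.1%} relative to the experiment")
print("Review inputs for:", flagged)
```

A positive gap means the model credits the channel with more than the experiment observed, which is the cue to revisit lag assumptions, seasonality controls, or how conversion value was defined for that channel.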

That is the real reason calibration belongs in AI advertising analytics. It turns marketing mix modeling from a retrospective report into a decision engine that can guide the next round of investment. We are no longer asking the model to guess in isolation; we are asking it to learn from measurement, correct itself, and stay close to business reality. Once that happens, the model stops feeling like an abstract statistic and starts acting like a practical planning tool you can rely on.

Apply Insights to AI Optimization

The real work begins when measurement stops being a report and starts becoming a decision. That is the heart of AI advertising analytics: you take the KPI, the clean data, the attribution clues, and the experiment results, then you feed them back into the system so it can improve the next round of spending. If you have ever wondered, “How do you turn all these signals into better performance?” the answer is that you build a feedback loop, not a one-time dashboard. The goal is to let the machine learn from reality while we keep steering it toward the business outcome that matters.

The first move is to translate insight into action at the right level. A model cannot optimize for a lesson it cannot understand, so we need to convert what we learned into the signals it uses every day. If experiments showed that one audience drives higher incremental lift, we can give that audience more budget. If attribution suggests a campaign helps earlier in the journey, we may keep it active even when it looks weak on last-click reporting. AI advertising analytics works best when each finding becomes a practical instruction, not a note that sits in a slide deck.

This is where optimization becomes more disciplined than reactive. Instead of chasing the highest click-through rate, we ask which levers actually improve the KPI we named earlier. That might mean adjusting bidding strategy, shifting budget across channels, changing creative, or tightening audience definitions so the model spends more on high-value users. It may also mean keeping a channel in the mix because it supports downstream conversions, even if it looks modest at the top of the funnel. In other words, we are not asking the system to spend more; we are asking it to spend smarter.

Now that we understand the basic loop, the next question is where the model should be allowed to learn on its own and where humans should stay in charge. AI advertising analytics is powerful, but it is not a substitute for judgment. You want automation to handle fast-moving tasks like bid adjustments and budget pacing, while people decide the business rules, the guardrails, and the moments when context matters more than historical patterns. For example, a seasonal promotion, a product launch, or a margin change may require a temporary change in target ROAS, audience mix, or conversion value.

That balance matters because optimization can drift if nobody checks the assumptions underneath it. A model trained on stale data may keep rewarding yesterday’s winning pattern long after the market has changed. So we watch for signs like rising spend with flat incrementality, a sudden drop in qualified leads, or a channel that looks efficient in platform reporting but weak in holdout results. These are the moments when measurement becomes a safeguard, reminding us that the best AI advertising analytics systems are still grounded in business reality.
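One of those warning signs, rising spend with flat incrementality, can be turned into a simple guardrail. The sketch below compares two periods and raises a flag when spend grows past one threshold while incremental conversions grow less than another. Both thresholds and all figures are arbitrary assumptions, and the incremental conversions are assumed to come from holdout-based measurement rather than platform reporting.

```python
def growth(previous, current):
    """Relative change from the previous period to the current one."""
    return (current - previous) / previous


def spend_without_lift(prev, curr, spend_growth_min=0.10, lift_growth_max=0.02):
    """True when spend rose meaningfully but incremental conversions barely moved."""
    spend_growth = growth(prev["spend"], curr["spend"])
    lift_growth = growth(prev["incremental_conversions"], curr["incremental_conversions"])
    return spend_growth >= spend_growth_min and lift_growth <= lift_growth_max


# Hypothetical period totals for illustration only.
last_period = {"spend": 10_000.0, "incremental_conversions": 400}
flat_period = {"spend": 12_500.0, "incremental_conversions": 404}
healthy_period = {"spend": 12_500.0, "incremental_conversions": 500}

print("Flat period flagged:   ", spend_without_lift(last_period, flat_period))
print("Healthy period flagged:", spend_without_lift(last_period, healthy_period))
```

A flag like this does not say what went wrong. It only tells a person to look before the automation keeps scaling the same pattern.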

A useful habit is to separate tactical signals from strategic ones. Tactical signals tell you what to adjust this week, such as keyword bids, audience exclusions, or ad frequency. Strategic signals tell you whether the broader plan still makes sense, such as whether a channel deserves more budget next quarter or whether a creative theme is actually moving the KPI. When you keep those layers distinct, optimization becomes calmer and clearer, because you are not overreacting to short-term noise or ignoring long-term movement.

The same idea applies to testing creative and message. A model can surface which ads are getting attention, but measurement tells you which messages are creating value. If one version of an ad drives more visits while another drives more qualified conversions, the better choice depends on the KPI we chose at the start. That is why AI advertising analytics should not stop at media allocation; it should also shape what you say, who hears it, and when it appears. The insight is not just “this ad worked,” but “this message worked for this audience under these conditions.”

As we discussed earlier, experiments and calibrated modeling give us a reality check. Now we use that same truth to keep optimization honest over time. We compare predicted performance with actual performance, update the inputs, and look for patterns that repeat across campaigns. When the same lesson shows up in attribution, incrementality testing, and MMM, it becomes much safer to automate around it. That is how measurement turns into momentum: not by replacing human thinking, but by sharpening it.

In the end, applying insights to optimization means treating every data point as part of a conversation with the business. The KPI tells us where we want to go, the models suggest the route, and the tests tell us whether the map is still accurate. When those pieces work together, AI advertising analytics becomes less about managing dashboards and more about guiding growth with confidence.