Why Normalize Your Schema

Data redundancy is a silent productivity tax: it makes writes brittle, increases storage and backup time, and turns simple updates into multi-step operations you must test repeatedly. Database normalization and a well-designed schema push that redundancy down to a single authoritative place, which prevents update, insert, and delete anomalies that surface as bugs in production. How do you decide when to normalize versus denormalize? Starting from the principle that each fact should have exactly one home clarifies those choices and reduces long-term operational risk.

One major reason to normalize is preserving data integrity. When you separate customer contact details from individual order records, a single change to a customer’s phone number updates one row rather than dozens, eliminating the risk of inconsistent copies. Referential integrity enforced by foreign keys prevents orphaned rows and encodes business invariants into the database itself, which is far more reliable than sprinkling integrity checks through application code. That single-source-of-truth model directly reduces bug surface area and simplifies audits and compliance reporting.

Normalization also improves maintainability and schema evolution. Normalized schemas map closely to domain concepts—customers, products, orders—so when requirements change you can reason about a single table instead of a matrix of denormalized columns and derived fields. This makes migrations smaller and safer: adding an attribute to the canonical table is less error-prone than updating ETL pipelines that populate duplicated fields in many tables. Moreover, normalized schemas are easier to index effectively because each table has a clear set of access patterns we can optimize for.

Performance trade-offs are a practical concern: normalization increases joins, which can hurt read latency for complex queries. For OLTP workloads that perform many small transactions, normalized schemas usually win because writes are cheaper and lock contention is reduced. For read-heavy or analytical workloads, you may deliberately denormalize or add materialized views and read replicas to avoid expensive joins. The right pattern is pragmatic—use normalization to guarantee consistency and then selectively denormalize where measured latency or throughput requirements demand it.

Consider a concrete example. A naïve orders table with repeated customer data looks simple but is brittle:

-- denormalized orders

CREATE TABLE orders (

order_id SERIAL PRIMARY KEY,

customer_name TEXT,

customer_email TEXT,

product_id INT,

quantity INT,

order_ts TIMESTAMP

);

Splitting this into normalized tables removes duplication and enables constraints:

CREATE TABLE customers (customer_id SERIAL PRIMARY KEY, name TEXT, email TEXT UNIQUE);

CREATE TABLE orders (order_id SERIAL PRIMARY KEY, customer_id INT REFERENCES customers(customer_id), product_id INT, quantity INT, order_ts TIMESTAMP);

This change makes updates and deduplication straightforward, enables efficient indexing on customer_email, and allows us to add business rules (unique emails, cascading deletes) where they belong.

Normalization also affects operational costs and backups. Less duplicated data means smaller backups, faster restores, and reduced storage bills—especially important at scale. It also simplifies analytics pipelines: when source tables are normalized, ETL jobs can join authoritative tables instead of reconciling conflicting copies and guessed canonical records. That reduces the time data engineers spend debugging upstream data quality issues.

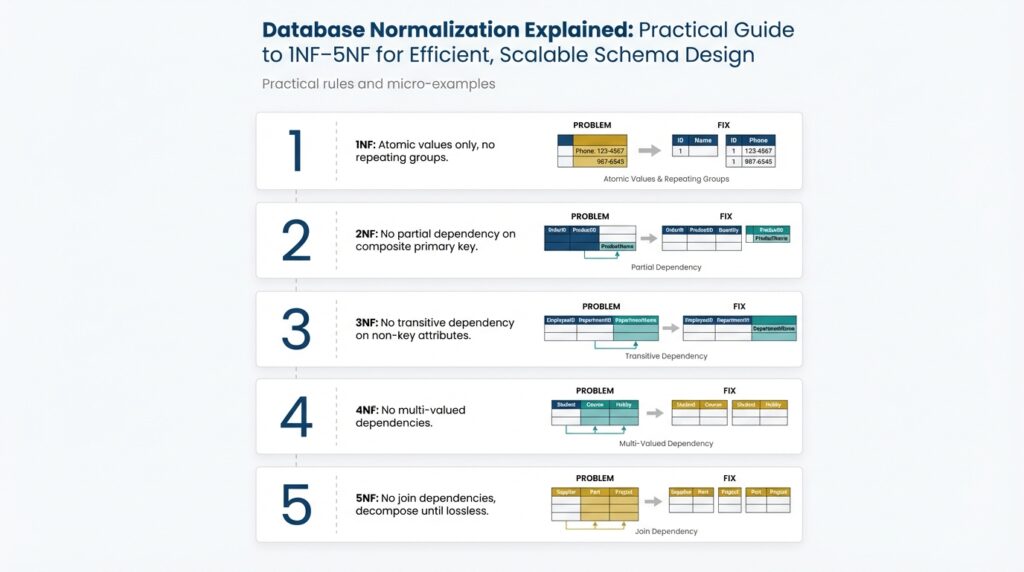

Finally, think of normalization as a toolkit, not blind orthodoxy. The goals—consistency, reduced redundancy, clear domain modeling—map directly to the normal forms (1NF–5NF) you’ll apply in practice. Use normalization to encode business rules and transaction boundaries, then layer caching, materialized views, or targeted denormalization for performance-critical reads. Building on this foundation, the next section will walk through the normal forms and show when to stop normalizing and start optimizing for real-world workloads.

Keys, FDs, and Anomalies

Normalization fails in production not because of theory but because keys, functional dependencies, and anomalies weren’t identified early. In the first 100 words here we’ll focus on functional dependency, primary key, foreign key, and anomalies so you immediately understand what to test in a schema review. You should treat keys as the contract of a table: they define identity, determine permissible functional dependencies, and drive which anomalies can appear when data changes. Building on the earlier discussion of single-source-of-truth, this is where theory turns into actionable schema hygiene.

A primary key is your canonical row identifier; a candidate key is any minimal set of attributes that functionally determines the row. Choose a surrogate primary key when your natural key is large or mutable; choose a natural key when it encodes immutable business identity and simplifies queries. Composite keys are fine for join tables or when uniqueness is inherently multi-attribute, but they change how you design foreign keys and indexing. When you declare a foreign key, you’re asserting a referential rule that prevents orphaned rows and organizes facts into authoritative tables rather than scattered copies.

Functional dependency (FD) is the precise relationship you must model: attribute A functionally determines attribute B (A -> B) when a single value of A always maps to one B. We use FDs to decide decomposition: if a non-key attribute depends on part of a composite key or on another non-key attribute, that indicates a dependency you should normalize away. In practice, identify FDs by asking whether a given attribute can be derived from a candidate key or another column — if it can, it belongs with the determinant. Explicitly reasoning about FDs prevents hidden redundancy and the anomalies that follow.

Consider a denormalized orders table: orders(order_id, customer_name, customer_email, product_id, qty, order_ts). Here order_id -> customer_name, customer_email holds, but customer_email also depends on customer_name (or better: on customer_id if you had one), creating overlapping FDs. That overlapping dependency produces an update anomaly: a customer changes email and you must update many order rows. It also produces insert anomalies (you can’t add a customer until they place an order) and delete anomalies (deleting the last order erases contact info). Decomposing into customers and orders enforces customer_id -> customer_email and moves the determinant to the right place.

Anomalies are symptoms, not root causes. Update, insert, and delete anomalies stem from improper assignment of determinants and keys; they manifest under real workloads as bugs, race conditions, and operational overhead. To mitigate them, decompose tables along functional dependencies so each fact has one owner, enforce foreign keys and unique constraints to codify invariants, and add indexes that support the new join patterns. Remember the trade-off: decomposition increases joins; measure read latency and consider materialized views or targeted denormalization only after you’ve eliminated correctness issues.

How do you confirm you’ve removed the anomalies you care about? Start by profiling FDs against sample data and by writing representative transactions: simulate an email update, a bulk insert, and a customer deletion to see whether operations produce extra work or data loss. Then apply a minimal decomposition guided by offending FDs, enforce referential integrity with foreign keys, and add uniqueness constraints to capture candidate keys. By testing transactions against the refactored schema we can validate that keys and functional dependencies now prevent anomalies, and we’ll be ready to apply these patterns while walking through the specific normal forms that follow.

1NF: Atomicity and Uniqueness

Building on this foundation, the first boundary we enforce is that every column value should be indivisible and every row uniquely identifiable — think of this as the schema’s contract for atomicity and uniqueness. Atomicity here means a single cell represents one fact: a phone number, a status flag, a quantity — not a comma-separated list or JSON blob you treat like a string. We call this condition the first normal form because it’s the cheapest, highest-impact check you can run during a schema review: remove multi-valued attributes and make identity explicit. How do you spot violations quickly in a code review or migration plan? Look for string parsing in queries, repeated parsing logic in application code, or WHERE clauses that use LIKE ‘%tag%’.

Atomicity violations often show up as ergonomics problems that turn into correctness problems under load. For example, an orders table with tags TEXT storing "promo,new-customer" pushes parsing and deduplication into every query and update, which breeds bugs when two concurrent transactions add tags. Instead, model tags as rows: order_tags(order_id, tag) and declare (order_id, tag) as a composite primary key or unique constraint. This guarantees each tag is a single atomic fact, makes joins and indexes straightforward, and prevents update anomalies where one transaction overwrites another’s parsed string.

Uniqueness is the flip side of atomicity: identity must be exact and enforceable by the database rather than application logic. Candidate keys, surrogate keys, and UNIQUE constraints are the tools you use here. Choose a surrogate id when natural keys are mutable or large; choose a natural key when it encodes immutable business identity and simplifies queries. For example, enforce UNIQUE(email) on a customers table to prevent duplicate accounts; for many-to-many join tables, prefer PRIMARY KEY (left_id, right_id) to both enforce uniqueness and optimize joins. These constraints encode business invariants and reduce the need for expensive deduplication jobs downstream.

In modern databases you have pragmatic options that feel like exceptions to atomicity — arrays, JSON(B), and hstore — but they carry trade-offs you must understand. Arrays and JSON are useful for semi-structured or ephemeral attributes that don’t participate in strong functional dependencies, but they make uniqueness and indexing harder: you can index individual JSON fields in Postgres with GIN and expression indexes, yet enforcing a cross-row uniqueness rule inside a JSON array is either impossible or expensive. Therefore, reserve JSON for attributes that are read-heavy, schema-flexible, and not subject to transactional invariants; otherwise normalize them into tables and constraints so customer_id -> primary_email remains provable by the DBMS.

Make practical tests part of your CI so atomicity and uniqueness stay enforced as the schema evolves. Add migration-time checks that detect multi-valued columns, write unit tests that simulate concurrent writes to parsed fields, and include quick queries that surface duplicates (SELECT col, COUNT(*) FROM t GROUP BY col HAVING COUNT(*)>1). Building these checks into your pipeline prevents anomalies from creeping into production as developers add convenience columns. Taking these steps now keeps your single-source-of-truth promise intact and sets up the clean functional dependencies we’ll decompose further as we move to the next normal forms.

2NF: Removing Partial Dependencies

Building on this foundation, the second normal form (2NF) eliminates a very specific source of redundancy: partial dependencies. A partial dependency exists when a non-key attribute depends on only part of a composite primary key instead of the entire key, which creates update, insert, and delete anomalies in the same way we discussed earlier. How do you spot a partial dependency in a real schema? Look for tables defined with composite keys where some columns describe an entity implied by only one key component rather than the relationship as a whole.

Consider a common example that surfaces in analytics and transactional schemas: an enrollment table where (student_id, course_id) is the primary key, but course_title and instructor_name are stored on the same row. The table looks convenient, yet course_title is determined solely by course_id — that’s a partial dependency. This violates 2NF: although the table may satisfy 1NF atomicity and uniqueness, non-prime attributes must be fully functionally dependent on the entire candidate key. Leaving course_title where it is forces you to update many enrollment rows whenever course metadata changes.

Detecting these issues with queries is practical and fast. Run a query that groups by the component suspected of being a determinant and checks for multiple distinct values in the dependent column, for example: SELECT course_id, COUNT(DISTINCT course_title) FROM enrollments GROUP BY course_id HAVING COUNT(DISTINCT course_title) > 1; If that returns rows, you either have inconsistent data or a design problem where course_title belongs in a separate courses table. These checks belong in schema review checklists and in CI migration tests so partial dependencies don’t reappear as the schema evolves.

When you refactor, move attributes that depend on only part of a composite key into their own authoritative table and reference them with a foreign key. In the enrollment example we split into courses(course_id, title, instructor_id) and enrollments(student_id, course_id, grade). That enforces course_id -> title as a functional dependency inside the DBMS, centralizes updates, and allows a unique constraint on course_id. Decide whether to use a surrogate key or keep course_id as the natural key based on mutability and query patterns; a surrogate id helps when course identifiers change or when the natural key is large.

There are pragmatic trade-offs to weigh when pushing to 2NF. The immediate benefits are reduced update anomalies, simpler migrations for entity attributes, and clearer indexing strategies for joins. However, decomposition increases the number of joins for reads that previously read a single denormalized row. For OLTP workloads these extra joins are usually acceptable because you remove write amplification and locking contention; for read-heavy paths instrument your system first and consider caching, materialized views, or selective denormalization only after measuring query latency under realistic load.

Not every composite key requires decomposition: if a non-key attribute semantically belongs to the relationship and truly depends on the whole composite key (for example, role or grade in a membership table), keeping it where it is preserves locality and avoids unnecessary joins. The practical rule is this: if you can derive an attribute from a single component of the composite key, move it to the appropriate entity table; otherwise keep it in the relationship table. Taking this concept further, the next step is to examine transitive functional dependencies and remove attributes that depend on other non-key fields so each fact has one clear owner and your schema remains robust under real-world operations.

3NF & BCNF: Eliminating Transitives

Building on this foundation, transitive functional dependencies are a common source of subtle redundancy that survives 1NF and 2NF and bites you during updates and deletes. You’ve already removed multi-valued cells and partial dependencies; now we focus on attributes that depend on other non-key attributes rather than directly on a candidate key. Left unchecked, these transitive dependencies create update anomalies, force awkward migration scripts, and hide business invariants from the DBMS. Treating them explicitly during schema review keeps your normalization work from being cosmetic.

A transitive dependency exists when A -> B and B -> C imply A -> C, where B is a non-key attribute; put plainly, some column is derived through another non-key column instead of the primary key. This matters because the database cannot enforce B -> C as an invariant if B is not the primary authority for that fact, so changes to B require coordinated updates to rows that should not own C. Identifying transitive dependencies requires you to list functional dependencies and ask whether each non-key attribute is determined by a candidate key or by another non-key attribute. How do you detect transitive dependencies in your schema and data at scale?

Start detection with simple aggregation queries that surface inconsistent determinants, for example grouping by the suspected intermediary and checking for multiple dependent values (SELECT dept_id, COUNT(DISTINCT dept_name) FROM employees GROUP BY dept_id HAVING COUNT(DISTINCT dept_name) > 1). If that returns rows you either have dirty data or a design smell: dept_name belongs to a departments table, not repeated on employee rows. Enforce the corrected dependency with a foreign key and unique constraint so the DBMS becomes the source of truth. These pragmatic checks belong in CI migration tests so a refactor that reintroduces a transitive dependency fails early.

In practice, normalizing to the third normal form means decomposing a relation so that every non-prime attribute is non-transitively dependent on every candidate key; in other words, remove attributes that depend on other non-key attributes. For an employees example, refactor employees(emp_id, dept_id, dept_name) into employees(emp_id, dept_id) and departments(dept_id, dept_name). Implementing this decomposition enforces dept_id -> dept_name inside the departments table and eliminates update anomalies when department metadata changes. Use concise migration steps: create the new table, backfill distinct determinants, add foreign keys, and then drop the redundant columns once application traffic routes to the new schema.

There are cases where 3NF still leaves odd dependencies because the left-hand side of an FD isn’t a candidate key; that’s where BCNF becomes relevant. BCNF (Boyce–Codd Normal Form) tightens the rule: for every non-trivial functional dependency X -> Y, X must be a superkey. Choose BCNF when overlapping candidate keys or atypical determinants create anomalies that 3NF doesn’t remove, for example when two different natural keys imply distinct attributes. Migrating to BCNF can require additional joins or new surrogate keys, so weigh the complexity against the consistency benefits for your workload.

Apply these rules pragmatically: normalize to 3NF to eliminate transitive dependencies that cause correctness issues, and escalate to BCNF when you discover determinants that violate the superkey requirement. Measure read performance after decomposition and use materialized views, targeted denormalization, or read-side caching for hot read paths rather than reintroducing redundancy as a quick fix. Instrument representative transactions in staging to confirm that updates, deletes, and inserts no longer produce multi-row edits or data loss.

Taking this approach ensures each fact has one owner and that the DBMS can enforce your functional dependencies instead of application code. With transitive dependencies removed and BCNF applied where necessary, your schema will be easier to evolve, safer under concurrent writes, and more straightforward to index—preparing us to examine join dependencies and higher normal forms next.

4NF–5NF and Denormalization Tradeoffs

Building on this foundation, the last two normal forms address the kinds of redundancy that survive even careful 3NF/BCNF decompositions: multi-valued dependencies and join dependencies. How do you know when to normalize all the way to 4NF or 5NF, and when to accept a denormalization for pragmatic performance reasons? In practice you should treat 4NF and 5NF as precision tools—use them when the data model encodes independent multi-values or when relations can only be losslessly reconstructed via complex joins, and avoid them when the operational cost outweighs consistency benefits.

A multi-valued dependency (MVD) occurs when a single key maps independently to two or more sets of attributes. In plain terms: one entity has two lists of facts that do not depend on each other. For example, if a product has multiple compatible platforms and multiple shipping zones independent of platform, storing both lists in one table creates exponential duplication. Normalizing to 4NF splits those independent lists into separate tables—product_platform(product_id, platform) and product_zone(product_id, zone)—so you enforce each functional relationship in one place. Applying 4NF removes the class of update and delete anomalies that come from MVDs and keeps normalization focused on correctness rather than query convenience.

5NF (projection-join normal form) tightens the requirement again by addressing join dependencies: a relation is in 5NF if every join dependency is implied by the candidate keys. Practically, 5NF matters when a single table represents a ternary or higher-arity relationship that cannot be decomposed into smaller binary relations without losing information. Consider a supply-chain case where part, supplier, and project combinations are constrained—some suppliers supply a part only for particular projects. If you can’t represent those constraints as independent binary relations, you either keep the original relation or decompose into several tables and rely on lossless joins. Enforcing 5NF removes hidden constraints from application logic and pushes them into referential structure the DBMS can reason about.

The trade-off is straightforward: every additional normalization step increases the number of joins required to answer the most common read queries, and joins cost CPU, memory, and latency. For OLTP systems with frequent writes, the reduction in redundancy and locking contention often justifies 4NF/5NF because updates touch fewer rows and invariants are enforced centrally. For read-heavy or latency-sensitive paths, however, those joins can become the bottleneck. We must measure the cost of joins under realistic concurrency, not just rely on theoretical purity.

When should you denormalize? Denormalization is appropriate when measured read latency, throughput, or operational simplicity demands it. Use targeted denormalization for hot read paths: add a materialized view, maintain a compact projection that duplicates a small set of fields, or introduce a cached summary table to avoid multi-table joins. The critical discipline is to control where denormalization occurs: document which tables are authoritative, implement idempotent background sync or transactional updates for derived copies, and keep the duplicated surface area minimal so you don’t reintroduce the broad update anomalies normalization eliminated.

In production, practical patterns reduce risk. Maintain the normalized canonical tables for writes and consistency, and serve reads from materialized views or read-only denormalized projections that you refresh incrementally. Use transactional upserts, write-ahead change capture, or database triggers only when you can guarantee retry semantics and idempotence. Monitor divergence with simple checks (row counts, checksum comparisons) and automate reconciliation jobs so eventual consistency becomes observability rather than a surprise in incident response. These patterns let you get the operational benefits of normalization while pragmatically addressing performance.

Measure, instrument, and iterate: profile end-to-end transactions, benchmark joins that 4NF/5NF introduce, and simulate peak concurrency before you commit to heavy decomposition or broad denormalization. If your latency budgets require it, denormalize selectively and keep the normalized schema as the source of truth. Taking this approach keeps your schema design defensible: you gain the correctness guarantees of advanced normal forms where they matter and the practical speedups of denormalization where they’re proven by data—setting up the next step of introducing caching and materialized aggregates for high-volume read patterns.