RAG overview and benefits

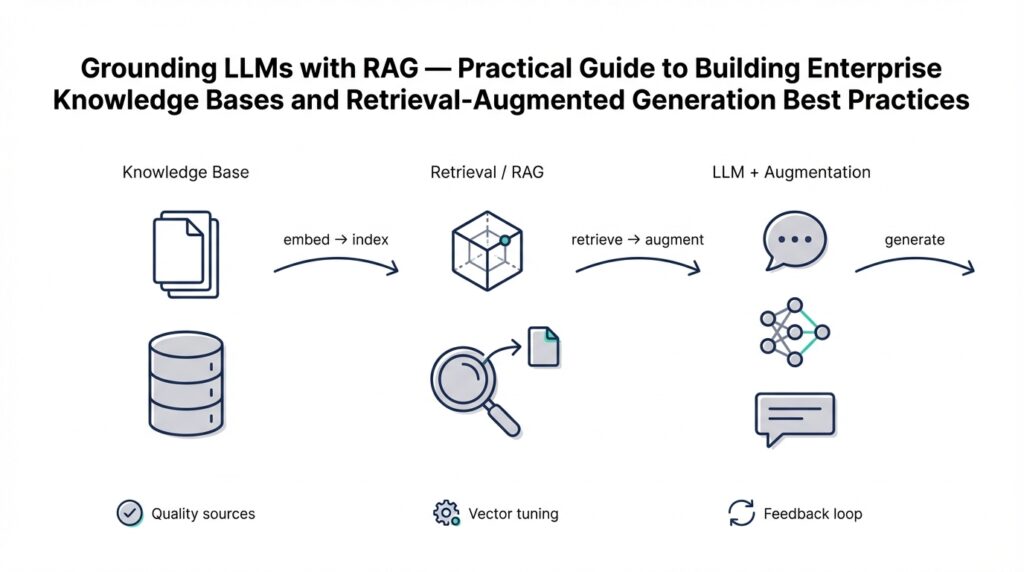

Few things drain confidence faster than a model that invents plausible-sounding but incorrect answers; grounding language models with retrieval addresses that problem at the system level. Building on our architecture discussion, Retrieval-Augmented Generation (RAG) combines a retriever and a generator so the model reasons over real documents from a managed knowledge base before producing output. By front-loading retrieval into the prompt pipeline you reduce hallucination, increase explainability, and make updates to facts as simple as reindexing documents rather than retraining weights. For teams operating enterprise knowledge bases, RAG becomes the practical bridge between static LLM capabilities and dynamic business content.

At its core, the pattern separates concerns: a dense or sparse retriever finds relevant context, and the generator composes an answer conditioned on that context. How does RAG actually reduce hallucinations? Because the generator receives evidence snippets fetched at query time, its predictions are conditioned on that evidence rather than on parametric memory alone, which makes unsupported claims less likely. Concretely, you often see pipelines like: emb = embed(text), store.add(id, emb, meta), then hits = store.search(q_emb, top_k=10), prompt = assemble(hits, query). This decoupling makes debugging and metrics (retrieval precision, answer faithfulness) far more tractable.
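The embed, add, search, assemble flow can be sketched end to end with an in-memory store. This is a minimal sketch under stated assumptions: `embed` is a hypothetical hashed bag-of-words encoder standing in for a real embedding model, and `InMemoryStore` and `assemble` are illustrative names rather than any specific library's API.

```python
import hashlib
import math
import re

DIM = 256  # assumed vector size for the toy hashed encoder


def embed(text: str) -> list[float]:
    """Hypothetical stand-in for an embedding model: hashed bag of words, L2-normalized."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class InMemoryStore:
    """Illustrative vector store: exact cosine search over normalized vectors."""

    def __init__(self) -> None:
        self.records: dict[str, tuple[list[float], dict]] = {}

    def add(self, doc_id: str, emb: list[float], meta: dict) -> None:
        self.records[doc_id] = (emb, meta)

    def search(self, q_emb: list[float], top_k: int = 10) -> list[tuple[str, float, dict]]:
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [
            (doc_id, sum(a * b for a, b in zip(q_emb, emb)), meta)
            for doc_id, (emb, meta) in self.records.items()
        ]
        scored.sort(key=lambda hit: hit[1], reverse=True)
        return scored[:top_k]


def assemble(hits: list[tuple[str, float, dict]], query: str) -> str:
    """Place retrieved passages, tagged by ID, ahead of the question."""
    context = "\n".join(f"[{doc_id}] {meta['text']}" for doc_id, _, meta in hits)
    return f"Context:\n{context}\n\nQuestion: {query}"


store = InMemoryStore()
for doc_id, text in [
    ("faq-01", "Reset your password from the account settings page."),
    ("chg-23", "Version 2.3 changed token authentication to require OAuth scopes."),
]:
    store.add(doc_id, embed(text), {"text": text})

question = "Which authentication changes shipped in 2.3?"
hits = store.search(embed(question), top_k=1)
prompt = assemble(hits, question)
```

Swapping the toy encoder for a real model and the dictionary for a vector database leaves the four-step shape unchanged, which is what makes each stage independently testable.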

Consider a customer-support knowledge base where product docs, incident logs, and SLA tables coexist. In practice we chunk long manuals into ~400–800 token passages, compute embeddings, and store them in a vector DB alongside structured metadata (version, source, sensitivity). At query time you fetch the top-k passages, optionally re-rank with a cross-encoder, and include those passages in a prompt template that instructs the LLM to cite sources. This retrieval-first approach lets you answer “Which API changes affected authentication in v2.3?” with direct citations from changelogs instead of vague guesses, demonstrating how retrieval-augmented generation materially improves traceability for auditors.

The benefits extend beyond accuracy. Grounding with retrieval provides freshness: you can add a document describing a breaking change, or a new patch note, and have the model reflect it within minutes by reindexing, which is far cheaper and faster than retraining or fine-tuning the model. RAG also improves cost-performance: small, cheaper models can deliver high-quality answers when supplied with high-signal context retrieved from your knowledge base, so you can reserve larger models for synthesis-heavy tasks. Finally, it improves developer velocity: content owners update documents in place, and the retrieval layer absorbs the changes without pipeline rewrites.

Operationally, the success of a RAG deployment depends on indexing and retrieval choices as much as model selection. Use hybrid retrieval (sparse BM25 + dense vectors) when you need recall for keyword-heavy enterprise documents; choose chunk sizes that preserve semantic boundaries (typically 500 tokens for manuals, 200–300 for FAQs). Set top_k and re-ranking thresholds based on downstream QA metrics rather than intuition; a common starting point is top_k=10 with a fast re-ranker to prune noise. Monitoring should include retrieval precision, answer faithfulness, and latency percentiles so you can balance relevance and responsiveness.
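One common way to merge a sparse BM25 ranking with a dense-vector ranking is reciprocal rank fusion (RRF), which combines rank positions rather than raw scores, so the two scoring scales never have to be reconciled. The text does not prescribe a fusion method, so treat this as one option; `k=60` is a commonly used default, and the input rankings are illustrative.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60, top_k: int = 10) -> list[str]:
    """Fuse ranked ID lists: score(d) = sum over lists of 1 / (k + rank of d)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    fused = sorted(scores, key=lambda d: scores[d], reverse=True)
    return fused[:top_k]


# Illustrative rankings: BM25 favours exact keyword hits, dense search favours paraphrases.
bm25_hits = ["chg-23", "api-ref-7", "faq-12"]
dense_hits = ["faq-12", "chg-23", "guide-4"]
fused = reciprocal_rank_fusion([bm25_hits, dense_hits], top_k=3)
```

Documents that appear in both lists rise to the top, which is the recall benefit hybrid retrieval is after; the fused list would then be handed to the re-ranker.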

Security, compliance, and governance are often the deciding factors for enterprise adoption. Because RAG surfaces source passages with answers, you gain stronger audit trails and the ability to redact or policy-filter content at the retrieval layer before it ever reaches the generator. Segment your knowledge base by sensitivity, enforce access controls on vector indices, and log retrievals alongside responses to satisfy compliance reviews. Measure impact with end-to-end tests that compare retrieval-backed answers to ground-truth documents to validate both correctness and safe behavior.
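Filtering at the retrieval layer can be as simple as comparing each hit's sensitivity label against the caller's clearance before anything is passed to the generator, and logging every decision. A minimal sketch: the sensitivity levels, their ordering, and the `Hit` shape are assumptions for illustration.

```python
from dataclasses import dataclass

# Assumed ordering of sensitivity labels, least to most restricted.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass
class Hit:
    doc_id: str
    score: float
    sensitivity: str


def filter_by_clearance(hits: list[Hit], clearance: str, audit_log: list[dict]) -> list[Hit]:
    """Drop hits above the caller's clearance and record each decision for audits."""
    allowed = []
    max_level = LEVELS[clearance]
    for hit in hits:
        # Unknown labels are treated as most restrictive (fail closed).
        level = LEVELS.get(hit.sensitivity, max(LEVELS.values()))
        permitted = level <= max_level
        audit_log.append({"doc_id": hit.doc_id, "sensitivity": hit.sensitivity, "allowed": permitted})
        if permitted:
            allowed.append(hit)
    return allowed


log: list[dict] = []
hits = [Hit("faq-12", 0.91, "public"), Hit("contract-9", 0.88, "confidential"), Hit("x", 0.5, "unlabeled")]
visible = filter_by_clearance(hits, clearance="internal", audit_log=log)
```

Failing closed on unknown labels is a deliberate choice: a mislabeled passage is withheld rather than leaked.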

Taking this concept further, we’ll next translate these operational principles into implementation patterns and code-level examples that help you build a production-grade enterprise knowledge base. That next step shows how to choose embedding models, configure vector stores, and architect retriever–generator orchestration so your RAG system becomes a reliable, auditable, and maintainable part of your platform.

Plan your knowledge base

RAG and a well-structured knowledge base are only as useful as the design decisions you make before a single document is indexed. Start by defining the knowledge base’s purpose: who will query it, which business questions it must answer, and the service-level constraints for freshness, latency, and auditability. How do you prioritize sources when product docs, incident reports, and policy manuals overlap? Clarifying intent up front—support diagnostics, compliance audits, developer onboarding, or customer-facing answers—drives downstream choices in chunking, metadata, and access controls that directly affect retrieval-augmented generation quality.

Define a clear content ownership and lifecycle policy so your repository stays accurate and defensible. Assign canonical owners for each source type, require a version or change-log field on ingested documents, and set reindex triggers (manual, scheduled, or event-driven) for breaking updates. These governance primitives let you answer operational questions like “who changed this passage?” during audits and enforce retention rules for sensitive material. We’ve found teams reduce drift and improve faithfulness by mapping owners to index partitions and automating reindexing when a source’s version identifier changes.
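Automating reindexing when a source's version identifier changes reduces to diffing the versions you last indexed against the versions currently published. A minimal sketch; the `{source_id: version}` maps and the owner lookup are hypothetical inputs you would load from your content catalog and index metadata.

```python
def plan_reindex(indexed: dict[str, str], published: dict[str, str], owners: dict[str, str]) -> list[dict]:
    """Return one task per source whose published version differs from the indexed one."""
    tasks = []
    for source_id, version in published.items():
        previous = indexed.get(source_id)
        if previous != version:
            tasks.append({
                "source_id": source_id,
                "from_version": previous,  # None means the source has never been indexed
                "to_version": version,
                "owner": owners.get(source_id, "unassigned"),
            })
    # Sources that disappeared from the catalog should be tombstoned, not silently kept.
    for source_id in sorted(indexed.keys() - published.keys()):
        tasks.append({"source_id": source_id, "from_version": indexed[source_id],
                      "to_version": None, "owner": owners.get(source_id, "unassigned")})
    return tasks


indexed = {"auth-changelog": "2.3.0", "sla-table": "2024-q4"}
published = {"auth-changelog": "2.3.1", "sla-table": "2024-q4", "runbook-db": "1.0"}
owners = {"auth-changelog": "identity-team"}
tasks = plan_reindex(indexed, published, owners)
```

Routing each task to its owner keeps the "who changed this passage?" question answerable from the same data that drives reindexing.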

Design your content model around semantic boundaries, not arbitrary file sizes. Choose chunking rules tailored to document type—preserve sections for manuals (~400–800 tokens), keep FAQs as single entries (~100–300 tokens), and treat log streams as time-windowed records. Include deterministic chunk IDs and a compact metadata schema (source, version, doc_type, language, created_at, sensitivity) so you can upsert or retire passages without reprocessing entire documents. A minimal metadata example we use looks like this:

```json
{
  "id": "auth-v2-changelog-0001",
  "source": "changelog",
  "version": "2.3.1",
  "doc_type": "changelog",
  "sensitivity": "public",
  "created_at": "2025-11-04"
}
```
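Section-based chunking with deterministic IDs can be sketched in a few lines. This assumes Markdown-style `#` headings mark section boundaries and derives each ID from the document ID plus a zero-padded section index, mirroring the `auth-v2-changelog-0001` pattern; both conventions are assumptions, and a real pipeline would also split oversized sections.

```python
import hashlib
import re


def chunk_by_section(doc_id: str, text: str, meta: dict) -> list[dict]:
    """Split on Markdown-style headings and give each section a stable, reproducible ID."""
    # Zero-width split before every line that starts with '#' characters and a space.
    sections = [s.strip() for s in re.split(r"(?m)^(?=#+ )", text) if s.strip()]
    chunks = []
    for i, section in enumerate(sections, start=1):
        chunks.append({
            "id": f"{doc_id}-{i:04d}",
            "text": section,
            # A content hash lets an upsert skip chunks whose text did not change.
            "meta": {**meta, "content_sha256": hashlib.sha256(section.encode()).hexdigest()},
        })
    return chunks


doc = "# Overview\nAuth changes in 2.3.\n# Token scopes\nTokens now require scopes.\n"
chunks = chunk_by_section("auth-v2-changelog", doc, {"source": "changelog", "version": "2.3.1"})
```

Positional IDs are reproducible for unchanged documents, but inserting a section shifts every later ID; keying on a heading slug avoids that at the cost of handling duplicate headings.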

Choosing embedding and indexing strategies early prevents painful migrations later. Select an embedding model that balances semantic fidelity with cost and standardize an embedding-version field so you can re-embed selectively as models improve. Decide whether the system will support online streaming inserts or batch upserts; for high-change sources use streaming with incremental re-ranking, while archival datasets can be batched nightly. Plan for soft-deletes and tombstones in your vector DB to preserve audit trails while ensuring retired passages don’t leak into answers.
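Soft-deletes and selective re-embedding both come down to per-record metadata. This sketch keeps a `tombstoned` flag and an `embedding_version` on every record, excludes tombstoned records from retrieval, and lists only stale live records for re-embedding; the record layout and the `CURRENT_EMBEDDING_VERSION` value are hypothetical.

```python
from dataclasses import dataclass, field

CURRENT_EMBEDDING_VERSION = "enc-v2"  # hypothetical identifier for the active encoder


@dataclass
class VectorRecord:
    doc_id: str
    vector: list[float]
    embedding_version: str
    tombstoned: bool = False
    meta: dict = field(default_factory=dict)


def tombstone(records: dict[str, VectorRecord], doc_id: str) -> None:
    """Soft-delete: keep the record for audit trails but hide it from retrieval."""
    records[doc_id].tombstoned = True


def searchable(records: dict[str, VectorRecord]) -> list[VectorRecord]:
    """Only live records may reach the retriever."""
    return [r for r in records.values() if not r.tombstoned]


def needs_reembedding(records: dict[str, VectorRecord]) -> list[str]:
    """Select live records embedded with an older encoder version."""
    return [r.doc_id for r in searchable(records) if r.embedding_version != CURRENT_EMBEDDING_VERSION]


records = {
    "a": VectorRecord("a", [0.1, 0.9], "enc-v1"),
    "b": VectorRecord("b", [0.7, 0.3], "enc-v2"),
    "c": VectorRecord("c", [0.5, 0.5], "enc-v1"),
}
tombstone(records, "c")
```

Record `c` still exists for auditors but is neither searchable nor queued for re-embedding, so retired passages cost nothing at query time.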

Segmenting indices and enforcing retrieval-time policies are your last line of defense for compliance and relevance. Put sensitive content in separate, access-controlled indices and apply policy filters before hits reach the generator; this prevents accidental disclosure and simplifies redaction. Log retrievals with query context, returned IDs, and decision rationale so you can reconstruct any response for audits or dispute resolution. We recommend implementing a short policy-layer transform that masks or removes fields (PII, credentials, contract numbers) as part of the retrieval pipeline rather than relying on the model to redact post hoc.
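A policy-layer transform that masks sensitive fields before passages reach the generator can start as a set of pattern rules. The patterns below (email addresses, a generic API-key shape, and a hypothetical `CTR-` contract-number format) are illustrative assumptions; production redaction usually combines rules like these with a dedicated PII detector.

```python
import re

# Illustrative masking rules: (label, compiled pattern). Extend per your data-handling policy.
MASK_RULES = [
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")),
    ("API_KEY", re.compile(r"\b(?:sk|pk)_[A-Za-z0-9]{16,}\b")),
    ("CONTRACT", re.compile(r"\bCTR-\d{6}\b")),  # hypothetical contract-number format
]


def mask_passage(text: str) -> tuple[str, list[str]]:
    """Replace sensitive spans with typed placeholders and report which rules fired."""
    fired = []
    for label, pattern in MASK_RULES:
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            fired.append(label)
    return text, fired


masked, fired = mask_passage(
    "Contact ops@example.com about CTR-004512; rotate key sk_live1234567890abcdef."
)
```

Returning the list of fired rules gives the retrieval log a record of why a passage was altered, without storing the sensitive values themselves.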

Plan evaluation and iterative improvement as part of the knowledge-base roadmap. Define measurable signals—retrieval precision at k, citation recall, end-to-end answer faithfulness, and latency percentiles—and treat them as product metrics you optimize against. Run A/B experiments with different chunk sizes, top_k settings, and re-ranker configurations, and instrument user feedback loops to capture failure modes that automated tests miss. Building on this foundation, the next step is to translate these planning decisions into concrete implementation patterns and code-level orchestration so your RAG system consistently returns accurate, auditable answers from the right parts of your knowledge base.
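Retrieval precision at k and citation recall are straightforward to compute once each evaluation query has labeled relevant passage IDs. Here citation recall is read as the fraction of gold supporting passages the answer actually cites; that reading and the toy labels are assumptions.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved IDs that are labeled relevant (denominator is k)."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for doc_id in retrieved[:k] if doc_id in relevant) / k


def citation_recall(cited: set[str], gold: set[str]) -> float:
    """Fraction of gold supporting passages that the answer cites."""
    if not gold:
        return 1.0  # nothing needed citing, so nothing was missed
    return len(cited & gold) / len(gold)


# Toy labels for a single evaluation query.
retrieved = ["p1", "p7", "p3", "p9"]
relevant = {"p1", "p3", "p4"}
p_at_3 = precision_at_k(retrieved, relevant, k=3)  # p1 and p3 are relevant: 2/3
rec = citation_recall(cited={"p1"}, gold={"p1", "p3"})  # 0.5
```

Averaging these per-query values over a fixed evaluation set gives the product metrics the A/B experiments compare.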

Ingesting and preprocessing data

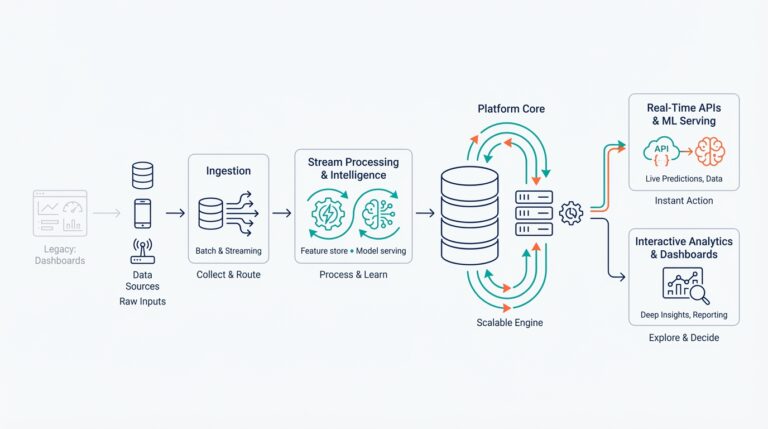

Getting reliable answers from a retrieval-augmented system starts well before you call the LLM: the quality of your data ingestion and data preprocessing pipeline determines recall, faithfulness, and latency. We begin by treating ingestion as a transformation pipeline rather than a file dump—normalize formats, extract clean text, and attach minimal but authoritative metadata so the retriever can make informed decisions. How do you handle messy enterprise sources like scanned PDFs, HTML knowledge bases, and mixed-format changelogs? You design deterministic parsing rules, OCR fallback, and schema-driven converters that produce consistent passages for downstream embedding and indexing.

First, normalize and canonicalize every source so you reduce noise at the root. Convert proprietary formats to text while preserving structure (headings, tables, code blocks) because semantic cues in headings and table context materially improve retrieval relevance; when OCR is required, run language detection and confidence-based filters before accepting a passage. We recommend tagging each passage with source, version, doc_type, language, created_at, and sensitivity fields—these metadata keys let you filter and policy-enforce at query time without reprocessing content. Deterministic filenames and stable IDs let you upsert or tombstone passages reliably during reindexing.
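The normalization, OCR confidence filtering, and metadata tagging described above can be combined into one acceptance step per passage. A minimal sketch, assuming your OCR engine reports a per-passage confidence in [0, 1]; the 0.85 threshold is a placeholder to tune, not a recommendation.

```python
import re
import unicodedata
from typing import Optional

REQUIRED_META = ("source", "version", "doc_type", "language", "created_at", "sensitivity")
MIN_OCR_CONFIDENCE = 0.85  # placeholder threshold; tune against labeled samples


def normalize_text(raw: str) -> str:
    """Unicode-normalize, collapse spaces and tabs, and allow at most one blank line."""
    text = unicodedata.normalize("NFKC", raw)
    text = re.sub(r"[ \t]+", " ", text)
    text = re.sub(r"\n{3,}", "\n\n", text)
    return text.strip()


def accept_passage(raw: str, meta: dict, ocr_confidence: Optional[float] = None) -> Optional[dict]:
    """Return a normalized passage record, or None if OCR confidence is too low."""
    if ocr_confidence is not None and ocr_confidence < MIN_OCR_CONFIDENCE:
        return None  # route to manual review instead of indexing noisy text
    missing = [key for key in REQUIRED_META if not meta.get(key)]
    if missing:
        raise ValueError(f"passage is missing metadata: {missing}")
    return {"text": normalize_text(raw), "meta": dict(meta)}


meta = {"source": "manual", "version": "2.3.1", "doc_type": "manual", "language": "en",
        "created_at": "2025-11-04", "sensitivity": "internal"}
record = accept_passage("Token\u00a0scopes   are\t required.\n\n\n\nSee 2.3.", meta)
```

Rejecting passages with missing metadata at ingestion, rather than at query time, keeps every indexed vector filterable by the policy layer.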

Chunking decisions are the most consequential preprocessing choice for RAG quality, so pick strategies that preserve semantic boundaries and enable precise citations. For long-form manuals, keep each section or subsection as a single chunk; for FAQs, keep each question–answer pair together; and for logs, use time-windowed slices that capture causal context. Use overlapping sliding windows with a stride (for example, 50% overlap) only when you need positional robustness; otherwise prefer section-based splits to avoid redundant context in top-k hits. How do you pick the right chunk size for a given source? Benchmark end-to-end QA metrics (citation recall and answer faithfulness) across several chunking presets instead of using one-size-fits-all heuristics.
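When positional robustness justifies overlap, a sliding window is defined by a window size and a stride; a stride of half the window gives the 50% overlap mentioned above. This sketch counts whitespace-separated words as a stand-in for model tokens, which is an approximation.

```python
def sliding_windows(text: str, window: int = 400, stride: int = 200) -> list[str]:
    """Split text into overlapping windows of `window` words, advancing by `stride` words."""
    if not 0 < stride <= window:
        raise ValueError("stride must be in (0, window]")
    words = text.split()
    chunks = []
    for start in range(0, len(words), stride):
        chunks.append(" ".join(words[start:start + window]))
        if start + window >= len(words):
            break  # this window already reaches the end of the text
    return chunks


chunks = sliding_windows(" ".join(f"w{i}" for i in range(10)), window=4, stride=2)
```

Every interior word lands in two windows, which is exactly the redundancy in top-k hits that section-based splits avoid.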

Next, compute and manage embeddings with versioning and selective re-embedding in mind. Choose an embedding model that balances semantic fidelity and cost, and add an embedding_version field to every vector record so you can re-embed only changed or high-value passages later. For batch pipelines use vectorized upserts; for high-change sources use streaming inserts and small, frequent re-ranking windows. A minimal upsert pattern looks like this:

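```python
# embed_model, vector_db and EMBEDDING_VERSION are placeholders for your encoder,
# vector-store client, and encoder version label
chunk = {"id": chunk_id, "text": text, "meta": meta}
emb = embed_model.encode(chunk["text"])  # one dense vector per chunk
# upsert inserts or overwrites by stable ID, so re-running ingestion is idempotent
vector_db.upsert(id=chunk["id"], vector=emb,
                 metadata={**chunk["meta"], "embedding_version": EMBEDDING_VERSION})
```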

This pattern makes selective reindexing and rollback straightforward while keeping audit trails intact.

Treat metadata and policy transforms as active preprocessing stages rather than afterthoughts. Enforce sensitivity labels and access control at index time by partitioning or tagging vectors; implement a policy layer that masks or strips fields before hits reach the generator so you never rely on the model to redact. Implement soft-deletes (tombstones) and a compact change-log field so you can reconstruct who changed a passage and when; this simplifies dispute resolution and compliance audits. Logging ingestion events (upserts, tombstones, versions) alongside retrieval context (query, returned IDs, scores) lets you connect content changes to observed model behavior in postmortem analysis.
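A compact change-log can be an append-only list of events keyed by passage ID, which is enough to answer who changed a passage, when, and to which version. The event fields and actor names below are hypothetical; in practice the log would live in durable storage rather than in memory.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ChangeEvent:
    passage_id: str
    action: str  # "upsert" or "tombstone"
    version: str
    actor: str
    at: datetime


class ChangeLog:
    """Append-only history of index mutations."""

    def __init__(self) -> None:
        self._events: list[ChangeEvent] = []

    def record(self, passage_id: str, action: str, version: str, actor: str) -> None:
        if action not in ("upsert", "tombstone"):
            raise ValueError(f"unknown action: {action}")
        self._events.append(ChangeEvent(passage_id, action, version, actor, datetime.now(timezone.utc)))

    def history(self, passage_id: str) -> list[ChangeEvent]:
        """Every change to one passage, oldest first."""
        return [e for e in self._events if e.passage_id == passage_id]


log = ChangeLog()
log.record("auth-v2-changelog-0001", "upsert", "2.3.0", "docs-bot")
log.record("auth-v2-changelog-0001", "upsert", "2.3.1", "alice")
log.record("auth-v2-changelog-0001", "tombstone", "2.3.1", "bob")
```

Frozen events and an append-only list mean history can only grow, which is the property an auditor relies on.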

Finally, instrument your pipeline with validation checks and monitoring that feed product decisions. Run synthetic QA suites that compare retrieved passages to ground-truth answers, surface drifted embeddings with a periodic sample test, and track operational metrics like index latency, upsert throughput, and retrieval precision@k. We also recommend a feedback loop that captures user corrections as labeled examples for re-ranking and chunking experiments. Building on this foundation, the next step is choosing embedding models, configuring your vector database, and orchestrating retriever–generator handoffs so that retrieval quality consistently scales with your knowledge base.
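One way to implement the periodic drift test is to keep a fixed set of canonical exemplar texts, re-embed them on a schedule, and compare each new vector with the one stored when the index was built. A minimal sketch; the 0.98 alert threshold is a placeholder, and the vectors are toy values rather than real model output.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def drift_report(baseline: dict[str, list[float]], current: dict[str, list[float]],
                 threshold: float = 0.98) -> dict:
    """Compare today's exemplar embeddings with the stored baseline, exemplar by exemplar."""
    sims = {key: cosine(baseline[key], current[key]) for key in baseline if key in current}
    drifted = sorted(key for key, sim in sims.items() if sim < threshold)
    mean_sim = sum(sims.values()) / len(sims) if sims else float("nan")
    return {"mean_similarity": mean_sim, "drifted": drifted, "alert": bool(drifted)}


baseline = {"reset-password": [0.6, 0.8, 0.0], "sla-p1": [0.0, 0.6, 0.8]}
current = {"reset-password": [0.6, 0.8, 0.0], "sla-p1": [0.8, 0.6, 0.0]}
report = drift_report(baseline, current)
```

A drop on a handful of exemplars usually points to an encoder or preprocessing change, which is the signal for scheduling a selective re-embedding window.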

Embeddings and vector indexing

High-quality embeddings are the backbone of reliable retrieval: they convert your normalized passages into dense vectors that capture semantic relationships across manuals, logs, and policy docs. Choose an embedding model with explicit trade-offs in mind (semantic fidelity, dimensionality, throughput, and cost), because those choices determine how well similar concepts cluster in vector space and how much storage and compute your vector store will require. We recommend adding an embedding_version field to every record from the start so you can re-embed selectively as models improve or budgets change. Early investment in consistent embedding metadata pays off during selective reindexing and drift analysis.

Selecting an embedding model is as much about operational constraints as signal quality. Use smaller, faster models for high-ingest streams where near-real-time indexing matters, and reserve high-fidelity embeddings for canonical sources or high-value passages where citation accuracy is critical. Dimensionality and normalization matter: compare cosine similarity on normalized vectors versus dot-product on raw vectors for your chosen model, and benchmark retrieval precision@k rather than relying solely on intrinsic model scores. We also version embeddings in the metadata so you can A/B different encoders without invalidating your audit trail.
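The difference between the two scoring choices shows up as soon as vector norms differ: a raw dot product rewards longer vectors, while cosine similarity (equivalently, the dot product of L2-normalized vectors) compares direction only. The vectors below are toy values chosen to make the effect visible.

```python
import math


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def l2_normalize(v: list[float]) -> list[float]:
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v] if norm else list(v)


query = [1.0, 0.0]
doc_aligned = [0.9, 0.1]  # points almost the same way as the query
doc_long = [3.0, 3.0]     # 45 degrees off, but with a large norm

raw = {"aligned": dot(query, doc_aligned), "long": dot(query, doc_long)}
cos = {
    "aligned": dot(l2_normalize(query), l2_normalize(doc_aligned)),
    "long": dot(l2_normalize(query), l2_normalize(doc_long)),
}
```

Raw dot product ranks `doc_long` first; cosine ranks `doc_aligned` first. Which behaviour you want depends on how the encoder was trained, which is why benchmarking precision@k under both is worthwhile.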

The choice of vector indexing algorithm shapes your latency and recall curve: approximate nearest neighbor (ANN) methods like HNSW and IVF reduce search time at the cost of tunable recall, while exact indexes give deterministic results but scale poorly. When you configure an ANN index, tune parameters (efConstruction, M for HNSW; nlist, nprobe for IVF) against realistic queries and throughput; these knobs directly affect memory, build time, and query latency. Consider hybrid retrieval—combine a sparse keyword pass (BM25) with dense vector search—to catch keyword-heavy queries that pure semantic embeddings may miss, and follow up with a cross-encoder re-ranker when you need precision for top-k answers.
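To make `nlist` and `nprobe` concrete, here is a deliberately small inverted-file (IVF) index: vectors are assigned to the nearest of `nlist` centroids found by a few k-means iterations, and a query scans only the `nprobe` closest lists. It ranks by squared Euclidean distance, which for L2-normalized vectors orders results the same way as cosine similarity. This is a teaching sketch in pure Python, not a substitute for a production ANN library; with `nprobe == nlist` it degenerates into exact search, a handy correctness check.

```python
import random


def sq_dist(a: list[float], b: list[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


class ToyIVF:
    def __init__(self, nlist: int, iters: int = 5, seed: int = 0) -> None:
        self.nlist, self.iters, self.rng = nlist, iters, random.Random(seed)
        self.centroids: list[list[float]] = []
        self.lists: list[list[tuple[int, list[float]]]] = []

    def _nearest(self, v: list[float]) -> int:
        return min(range(len(self.centroids)), key=lambda c: sq_dist(v, self.centroids[c]))

    def build(self, vectors: list[list[float]]) -> None:
        # Plain k-means (Lloyd iterations) seeded with randomly chosen vectors.
        self.centroids = [list(v) for v in self.rng.sample(vectors, self.nlist)]
        for _ in range(self.iters):
            groups: list[list[list[float]]] = [[] for _ in range(self.nlist)]
            for v in vectors:
                groups[self._nearest(v)].append(v)
            for c, members in enumerate(groups):
                if members:  # keep the old centroid if a cluster empties out
                    self.centroids[c] = [sum(xs) / len(members) for xs in zip(*members)]
        # Inverted lists: each vector (with its ID) lives in exactly one list.
        self.lists = [[] for _ in range(self.nlist)]
        for i, v in enumerate(vectors):
            self.lists[self._nearest(v)].append((i, v))

    def search(self, q: list[float], k: int, nprobe: int) -> list[int]:
        # Probe only the nprobe lists whose centroids are closest to the query.
        probe = sorted(range(self.nlist), key=lambda c: sq_dist(q, self.centroids[c]))[:nprobe]
        candidates = [item for c in probe for item in self.lists[c]]
        candidates.sort(key=lambda item: sq_dist(q, item[1]))
        return [i for i, _ in candidates[:k]]


rng = random.Random(42)
data = [[rng.random(), rng.random()] for _ in range(200)]
index = ToyIVF(nlist=8)
index.build(data)
query = [0.5, 0.5]
exact = sorted(range(len(data)), key=lambda i: sq_dist(query, data[i]))[:5]
```

Lowering `nprobe` scans fewer candidates and runs faster but can miss true neighbours that fell into an unprobed list, which is the recall trade-off described above.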

Building on our ingestion and chunking practices, enrich every vector with compact metadata that drives policy, filtering, and provenance. Partition or shard indices by sensitivity, product area, or language so access controls and compliance filters execute before vectors reach the generator; this prevents accidental exposure and simplifies redaction workflows. Implement upserts and tombstones in your vector DB to support soft-deletes and rollbacks, and log retrieval IDs and scores to construct end-to-end audit trails. These metadata-driven patterns keep retrieval explainable and expedite incident investigations.

Tuning retrieval for downstream QA is an experimental discipline, not a one-time setting. How do you choose top_k and re-ranker thresholds for your workload? Instrumentation and experiments answer that: start with top_k=10 and measure citation recall, then run a lightweight re-ranker to trim noisy hits while tracking latency percentiles. Normalize and calibrate scores across different indices and embedding versions so your re-ranker sees consistent inputs, and add user-feedback signals (clicks, upvotes, dispute reports) to continuously refine both ranking and chunking strategies.
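Scores from different indices or embedding versions often live on different scales, so a merged list or a fixed threshold treats them inconsistently. Per-index z-score normalization puts them on a comparable footing before merging; z-scoring is one option among several (min-max scaling or learned calibration are others), and the scores below are made up.

```python
import statistics


def zscore(hits: list[tuple[str, float]]) -> list[tuple[str, float]]:
    """Standardize one index's scores to mean 0 and standard deviation 1."""
    scores = [s for _, s in hits]
    mean = statistics.fmean(scores)
    stdev = statistics.pstdev(scores) or 1.0  # all-equal scores: avoid division by zero
    return [(doc_id, (s - mean) / stdev) for doc_id, s in hits]


def merge_calibrated(per_index: dict[str, list[tuple[str, float]]], top_k: int = 10) -> list[tuple[str, float]]:
    """Normalize each index separately, then merge, keeping each document's best score."""
    best: dict[str, float] = {}
    for hits in per_index.values():
        for doc_id, score in zscore(hits):
            best[doc_id] = max(score, best.get(doc_id, float("-inf")))
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


merged = merge_calibrated({
    "docs-enc-v1": [("a", 0.82), ("b", 0.80), ("c", 0.78)],      # narrow cosine range
    "tickets-enc-v2": [("d", 31.0), ("e", 12.0), ("f", 11.0)],  # unnormalized dot products
})
```

Without the normalization step, every dot-product score from the second index would outrank every cosine score from the first, regardless of relevance.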

Operationally, treat your vector architecture as a service that requires lifecycle policies: automated re-embedding for changed sources, incremental index updates for streaming content, and caching for hot-query results to shave tail latency. Monitor index build times, query p95/p99 latencies, and drifted embeddings using periodic synthetic suites so you can plan selective reindex windows with minimal user impact. Taking these indexing and embedding patterns into production prepares you for the next step—configuring your vector database and orchestrating retriever–generator handoffs so retrieval consistently supplies high-signal context to the generator.
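Caching hot queries is safest when the cache key includes everything that changes the answer, so this sketch keys on the normalized query, `top_k`, and the `embedding_version`; bumping the version after re-embedding then bypasses stale entries automatically. The class, the TTL, and the size limit are illustrative assumptions.

```python
import time
from collections import OrderedDict
from typing import Any, Callable


class QueryCache:
    """Small LRU cache with a time-to-live for retrieval results."""

    def __init__(self, max_entries: int = 1024, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_entries, self.ttl, self.clock = max_entries, ttl_seconds, clock
        self._data: OrderedDict = OrderedDict()  # key -> (stored_at, value)

    @staticmethod
    def key(query: str, top_k: int, embedding_version: str) -> tuple:
        return (" ".join(query.lower().split()), top_k, embedding_version)

    def get_or_compute(self, key: tuple, compute: Callable[[], Any]) -> Any:
        now = self.clock()
        entry = self._data.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self._data.move_to_end(key)  # mark as most recently used
            return entry[1]
        value = compute()
        self._data[key] = (now, value)
        self._data.move_to_end(key)
        if len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict the least recently used entry
        return value


fake_now = [0.0]
calls: list[int] = []


def fetch() -> list[str]:
    calls.append(1)  # count real retrievals
    return ["chg-23"]


cache = QueryCache(ttl_seconds=60, clock=lambda: fake_now[0])
k = QueryCache.key("Auth  changes in 2.3", 10, "enc-v2")
cache.get_or_compute(k, fetch)
cache.get_or_compute(k, fetch)  # served from cache
fake_now[0] = 61.0
cache.get_or_compute(k, fetch)  # expired, so retrieval runs again
```

Injecting the clock keeps the TTL behaviour testable without sleeping.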

Integrating LLMs and prompting

Building on this foundation, the practical work of wiring retrieval into your LLM pipeline lives in prompt engineering and orchestration. If you want reliable, auditable answers from a RAG system, you must treat prompting as a runtime contract between the retriever and the model: the retriever supplies evidence, and the prompt constrains how the LLM uses that evidence. How do you design that contract so answers are faithful, citable, and performant?

Start by defining a deterministic prompt scaffold as part of your orchestration layer. The first sentences in every prompt should set role and scope: a compact system instruction that tells the model it must only use provided passages, a user query, and a provenance requirement (IDs, snippets, or both). Place high-value signals—source IDs, version, sensitivity—near the top of the prompt to prioritize them in the model’s attention window. We assemble context with a repeatable template so behavior remains consistent across runs and A/B tests.
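A repeatable scaffold can be a pure function from (query, passages) to prompt text, so identical inputs always produce identical prompts and A/B variants differ only where intended. The system-instruction wording, the passage header format, and the field names are assumptions for illustration.

```python
SYSTEM_INSTRUCTION = (
    "You answer questions using only the passages provided. "
    "Cite passage IDs in square brackets after each claim. "
    "If the passages do not contain the answer, reply exactly: No answer found."
)


def build_prompt(query: str, passages: list[dict]) -> str:
    """Deterministic scaffold: instruction, then ID-tagged passages in ranked order, then the question."""
    blocks = [
        f"[{p['id']}] (source={p['source']}, version={p['version']}, "
        f"sensitivity={p['sensitivity']})\n{p['text']}"
        for p in passages  # keep the order the re-ranker produced
    ]
    return "\n\n".join([SYSTEM_INSTRUCTION, "Passages:", *blocks, f"Question: {query}", "Answer:"])


prompt = build_prompt(
    "Which API changes affected authentication in v2.3?",
    [{"id": "auth-v2-changelog-0001", "source": "changelog", "version": "2.3.1",
      "sensitivity": "public", "text": "Token endpoints now require OAuth scopes."}],
)
```

Because the header carries ID, version, and sensitivity for every passage, the same fields used for filtering are visible to the model when it cites.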

Use explicit grounding instructions to reduce hallucination and improve citation reliability. For example, instruct the model: “Answer using only the passages labeled below and include in-line citations to passage IDs; if the answer cannot be derived from the passages, respond ‘No answer found’.” Combine that with one or two few-shot examples demonstrating correct citation format and refusal behavior. These patterns teach the model the expected output shape—when to synthesize, when to say unknown, and how to surface provenance for auditors.
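Because the instruction fixes the output shape, conformance can be checked mechanically: every bracketed citation must refer to a passage that was actually supplied, and an answer with no citations is acceptable only if it is the refusal string. A sketch assuming a `[passage-id]` citation format and the "No answer found" refusal quoted above.

```python
import re

REFUSAL = "No answer found"
CITATION = re.compile(r"\[([A-Za-z0-9][\w.-]*)\]")


def check_answer(answer: str, supplied_ids: set[str]) -> list[str]:
    """Return contract violations; an empty list means the answer conforms."""
    text = answer.strip()
    if text.rstrip(".") == REFUSAL:
        return []
    cited = CITATION.findall(text)
    problems = []
    if not cited:
        problems.append("answer has no citations and is not the refusal string")
    unknown = sorted(set(cited) - supplied_ids)
    if unknown:
        problems.append(f"cites passages that were not supplied: {unknown}")
    return problems


ids = {"auth-v2-changelog-0001", "auth-v2-changelog-0002"}
```

Running this check in synthetic QA suites turns citation and refusal behaviour into pass/fail assertions.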

Manage context budget by mixing top-k retrieval with compression and iterative retrieval. If your top-k passages exceed the model’s input window, prefer a two-stage approach: first pass with a compact re-ranker to drop low-signal hits, then optionally summarize high-signal passages into a condensed context. Use hierarchical retrieval when queries require long chains of evidence—fetch supporting passages for the most relevant candidate, re-rank, then re-query the LLM with the distilled evidence. This hybrid of re-ranking and selective summarization balances latency and faithfulness.
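The first stage of that approach, keeping the highest-signal passages that fit the budget, can be sketched as a greedy packer over re-ranked hits. Token counts are approximated by whitespace word counts (an assumption; use your model's tokenizer in practice), and `min_score` stands in for a re-ranker threshold.

```python
def pack_context(hits: list[dict], budget_tokens: int, min_score: float = 0.0) -> list[dict]:
    """Greedily keep re-ranked hits, best first, until the token budget is spent."""
    packed, used = [], 0
    for hit in sorted(hits, key=lambda h: h["score"], reverse=True):
        if hit["score"] < min_score:
            break  # all remaining hits score lower still
        cost = len(hit["text"].split())  # rough token estimate
        if used + cost > budget_tokens:
            continue  # too large for what is left; a shorter passage may still fit
        packed.append(hit)
        used += cost
    return packed


hits = [
    {"id": "a", "score": 0.92, "text": "word " * 300},
    {"id": "b", "score": 0.88, "text": "word " * 500},
    {"id": "c", "score": 0.75, "text": "word " * 150},
    {"id": "d", "score": 0.20, "text": "word " * 50},
]
kept = pack_context(hits, budget_tokens=600, min_score=0.5)
```

Passage `b` is skipped for size rather than relevance, so it is a natural candidate for the summarization step instead of silent truncation.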

Decide when to rely on chain-of-thought vs explicit evidence chaining. For auditable responses, avoid free-form chain-of-thought that cannot be externally verified; instead instruct the model to produce a concise answer followed by a numbered evidence chain that maps each claim to specific passage IDs and quoted snippets. That gives you machine-checkable provenance and makes automated faithfulness tests feasible. If you must use internal reasoning traces for complex synthesis, keep them hidden from end-users and preserve them for forensic logs only.
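A numbered evidence chain becomes machine-checkable if each line pairs a claim with a passage ID and a verbatim quote. The line format here (`1. claim | passage-id | "quote"`) is an assumed convention rather than a standard; the check confirms that every quote occurs in the passage it cites.

```python
import re

LINE = re.compile(r'^\s*(\d+)\.\s*(.+?)\s*\|\s*([\w.-]+)\s*\|\s*"(.+)"\s*$')


def verify_chain(chain: str, passages: dict[str, str]) -> list[dict]:
    """Parse each evidence line and check that its quote appears verbatim in the cited passage."""
    results = []
    for raw in chain.strip().splitlines():
        match = LINE.match(raw)
        if not match:
            results.append({"line": raw, "ok": False, "reason": "unparseable"})
            continue
        _, claim, pid, quote = match.groups()
        source = passages.get(pid)
        if source is None:
            results.append({"claim": claim, "ok": False, "reason": f"unknown passage {pid}"})
        elif quote not in source:
            results.append({"claim": claim, "ok": False, "reason": "quote not found in passage"})
        else:
            results.append({"claim": claim, "ok": True, "reason": ""})
    return results


passages = {"chg-23": "Version 2.3 requires OAuth scopes on all token endpoints."}
chain = '''
1. Token endpoints need scopes | chg-23 | "requires OAuth scopes on all token endpoints"
2. Basic auth was removed | chg-23 | "Basic auth is no longer supported"
'''
report = verify_chain(chain, passages)
```

The second claim fails because its quote does not exist in the cited passage, which is precisely the kind of fabricated support free-form reasoning would hide.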

Treat safety and policy enforcement as a pre-prompt stage rather than an afterthought. Filter or redact sensitive fields at the retrieval layer based on sensitivity metadata, then inject a short policy reminder into the system instruction enforcing data-handling rules. This prevents the model from being asked to reason over sensitive content it shouldn’t expose and reduces post-hoc redaction errors. Log the policy decision, returned IDs, and the final prompt so you can reconstruct why a passage was excluded.

Instrument and iterate on prompts as product features. Run A/B experiments on scaffold variations, measure end-to-end faithfulness (does each claim map to a source?), and track latency consequences of top_k and re-ranker choices. Automate synthetic QA suites that assert expected citations and refusal behavior, and capture user corrections as labeled examples for prompt tuning or re-ranker training. Taking these steps prepares the pipeline for production: the retriever supplies high-signal passages, the prompt enforces a contract, and the LLM becomes a predictable, auditable synthesis step—ready for the implementation and orchestration patterns we’ll explore next.
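For scaffold experiments, a deterministic hash of the user or session ID keeps each user in one variant across requests without storing assignments. A sketch; the experiment name, variant labels, and even split are hypothetical.

```python
import hashlib


def assign_variant(unit_id: str, experiment: str, variants: list[str]) -> str:
    """Map a user/session ID to a variant, stable across calls and independent per experiment."""
    digest = hashlib.sha256(f"{experiment}:{unit_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


variants = ["scaffold-v1", "scaffold-v2-evidence-chain"]
counts = {v: 0 for v in variants}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "prompt-scaffold-2025", variants)] += 1
```

Salting the hash with the experiment name means a user's bucket in one experiment says nothing about their bucket in the next; logging the assigned variant with each request lets faithfulness and latency be compared per variant.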

Evaluation, monitoring, and governance

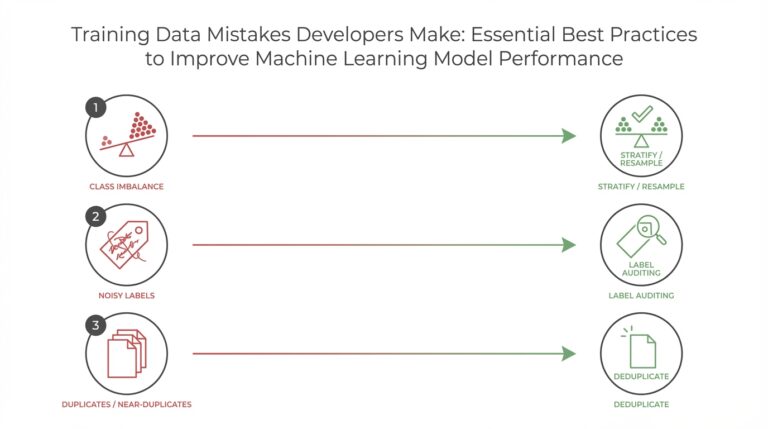

Building on this foundation, reliable production behavior comes from treating evaluation and monitoring as first-class features of any RAG deployment rather than afterthoughts. You need concrete signals that connect retrieval quality in the vector DB and embeddings to the generator’s final output, because retrieval-augmented generation systems introduce failure modes that pure LLM deployments do not (stale passages, index leakage, or misranked evidence). How do you detect a drop in faithfulness versus an increase in irrelevant retrievals? Asking that question up front shapes what telemetry and labeled tests you create.

Start evaluation with precise, measurable metrics that reflect end-to-end goals. Define retrieval precision@k and citation recall as retrieval-layer metrics, and define answer faithfulness (the percent of model claims grounded in retrieved passages) as the primary downstream metric; faithfulness here means each asserted fact can be traced to a passage with sufficient overlap or an explicit citation. Instrument automated checks that assert every generated claim maps to a passage ID and quote; a simple pattern is: for each claim, assert contains(retrieved_snippets, claim_spans) or mark as ungrounded. Track these metrics over time and tie them to business KPIs like support deflection or mean time to resolution.
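The `contains(retrieved_snippets, claim_spans)` pattern can be turned into a faithfulness rate. This sketch counts a claim as grounded if enough of its content words appear in at least one retrieved snippet; the word-overlap heuristic, stopword list, and 0.7 threshold are assumptions, cruder than entailment-based checks but cheap enough to run on every response.

```python
import re

STOPWORDS = {"the", "a", "an", "of", "in", "to", "and", "is", "are", "on", "for", "all"}


def content_words(text: str) -> set[str]:
    # Keeps dotted version numbers such as "2.3" as single tokens.
    return {w for w in re.findall(r"[a-z0-9]+(?:\.[a-z0-9]+)*", text.lower()) if w not in STOPWORDS}


def is_grounded(claim: str, snippets: list[str], threshold: float = 0.7) -> bool:
    words = content_words(claim)
    if not words:
        return True  # nothing factual to check
    return any(len(words & content_words(s)) / len(words) >= threshold for s in snippets)


def faithfulness(claims: list[str], snippets: list[str]) -> float:
    """Share of claims grounded in at least one retrieved snippet."""
    if not claims:
        return 1.0
    return sum(is_grounded(c, snippets) for c in claims) / len(claims)


snippets = ["Version 2.3 requires OAuth scopes on all token endpoints."]
claims = ["Version 2.3 requires OAuth scopes", "Version 2.3 removed SAML login"]
score = faithfulness(claims, snippets)
```

Ungrounded claims can be logged individually, which makes the adjudication panel's job easier than reviewing whole answers.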

Design evaluation suites that blend synthetic, adversarial, and human-labeled tests. Synthetic QA tests let you run thousands of deterministic cases (change a changelog line, reindex, and assert new answers appear), while adversarial tests probe hallucination—feed queries that purposely combine similar product names or obsolete API calls to see whether the pipeline cites correct sources. Complement automation with periodic human review: sample low-confidence answers and use a small adjudication panel to label failure modes (misattribution, omission, or unsafe synthesis). Use stratified sampling across user types, query lengths, and top_k buckets so your evaluation reflects real-world query distributions.
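Stratified sampling for the review queue groups logged answers by the strata you care about and draws up to the same number from each, so rare buckets are not drowned out by common ones. The strata (user type, a query-length bucket, top_k) follow the text; the record shape, bucket boundaries, and per-stratum quota are assumptions.

```python
import random
from collections import defaultdict


def length_bucket(query: str) -> str:
    n = len(query.split())
    return "short" if n <= 5 else "medium" if n <= 15 else "long"


def stratified_sample(records: list[dict], per_stratum: int, seed: int = 0) -> list[dict]:
    """Draw up to `per_stratum` records from every (user_type, length, top_k) stratum."""
    strata: dict[tuple, list[dict]] = defaultdict(list)
    for r in records:
        strata[(r["user_type"], length_bucket(r["query"]), r["top_k"])].append(r)
    rng = random.Random(seed)  # fixed seed makes the review batch reproducible
    sample = []
    for key in sorted(strata):
        group = strata[key]
        sample.extend(rng.sample(group, min(per_stratum, len(group))))
    return sample


records = (
    [{"user_type": "agent", "query": "reset password", "top_k": 10}] * 50
    + [{"user_type": "customer", "query": "why was my invoice charged twice this month after upgrading",
        "top_k": 5}] * 3
)
batch = stratified_sample(records, per_stratum=5)
```

A uniform sample of the same 53 records would include a customer query only occasionally; stratification guarantees all three make it to review.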

Monitoring must be continuous and actionable, not just dashboards. Instrument retrieval logs with query, top_k IDs, scores, embedding_version, and prompt template, and retain those logs in an auditable store so you can reconstruct any response later. Monitor latency percentiles (p50/p95/p99) for both retriever and generator, monitor index health (build time, shard size, and configuration drift in HNSW efConstruction/efSearch settings), and add anomaly detectors for embedding drift by sampling similarity distributions to canonical exemplars. Configure alerts that triage by impact: failed faithfulness checks and policy-filter hits should create higher-severity incidents than isolated latency spikes.
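A structured retrieval log record and an impact-based severity rule can be sketched together. The field names mirror the list above; the severity labels and the latency budget are placeholders for whatever your incident process uses.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class RetrievalLog:
    request_id: str
    query: str
    top_k_ids: list[str]
    scores: list[float]
    embedding_version: str
    prompt_template: str
    faithfulness_passed: bool
    policy_filter_hits: int
    latency_ms: float


def severity(entry: RetrievalLog, p99_budget_ms: float = 2000.0) -> str:
    """Triage by impact: correctness and policy problems outrank latency."""
    if not entry.faithfulness_passed or entry.policy_filter_hits > 0:
        return "high"
    if entry.latency_ms > p99_budget_ms:
        return "low"
    return "none"


entry = RetrievalLog("req-1", "auth changes in 2.3", ["chg-23"], [0.91], "enc-v2",
                     "scaffold-v1", faithfulness_passed=False, policy_filter_hits=0, latency_ms=850.0)
line = json.dumps(asdict(entry))  # one JSON line per request for the audit store
```

Keeping the severity rule as code next to the log schema makes the triage policy reviewable and testable like any other artifact.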

Governance practices reduce risk and make audits tractable while preserving usefulness. Enforce retrieval-time policy filters—partition sensitive documents into separate indices with strict ACLs and apply redaction or masking transforms before hits reach the generator—so you never rely on the model to remove PII downstream. Log access decisions (who queried what, which index returned hits, why a passage was excluded) and maintain a change-log for every upsert and tombstone in your knowledge base. Combine automated retention and soft-delete mechanics in your vector DB with periodic compliance reviews so you can demonstrate chain-of-custody for any passage.
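Combining retention with soft-deletes can mean a periodic sweep that permanently purges only records tombstoned longer ago than the retention window, returning the purged IDs so they can be written to the change-log. The 365-day window and record fields are placeholder assumptions; actual retention periods come from your compliance policy.

```python
from datetime import datetime, timedelta, timezone


def retention_sweep(records: dict[str, dict], now: datetime, retention: timedelta) -> list[str]:
    """Purge records tombstoned before `now - retention`; return purged IDs for the change-log."""
    cutoff = now - retention
    purged = sorted(
        doc_id for doc_id, rec in records.items()
        if rec.get("tombstoned_at") is not None and rec["tombstoned_at"] < cutoff
    )
    for doc_id in purged:
        del records[doc_id]
    return purged


now = datetime(2025, 11, 4, tzinfo=timezone.utc)
records = {
    "old": {"tombstoned_at": now - timedelta(days=400)},
    "recent": {"tombstoned_at": now - timedelta(days=30)},
    "live": {"tombstoned_at": None},
}
purged = retention_sweep(records, now, retention=timedelta(days=365))
```

Live records and recently tombstoned ones are untouched, so the audit trail stays intact for the full retention period.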

Operationalize evaluation and governance as part of your CI/CD pipeline for the knowledge base and the RAG stack. Treat prompt scaffolds, re-ranker weights, and embedding_version as deployable artifacts: run A/B experiments for top_k and re-ranker thresholds, continuously train re-rankers on corrected examples from the human-review queue, and schedule selective re-embedding windows driven by drift signals. By folding monitoring, evaluation, and governance into release pipelines we ensure retrieval-augmented generation remains auditable, performant, and aligned with organizational policy as the system scales—next we’ll translate these controls into concrete orchestration patterns and code-level checks you can plug into your platform.
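Treating these components as deployable artifacts implies a release gate: a candidate configuration (new prompt scaffold, re-ranker weights, or embedding_version) ships only if its evaluation metrics do not regress past agreed tolerances. The metric names and tolerances below are hypothetical; the point is that the comparison runs in CI rather than by inspection.

```python
# Direction per metric: +1 if higher is better, -1 if lower is better.
METRICS = {"precision_at_10": +1, "citation_recall": +1, "faithfulness": +1, "p95_latency_ms": -1}
TOLERANCE = {"precision_at_10": 0.01, "citation_recall": 0.01, "faithfulness": 0.0, "p95_latency_ms": 100.0}


def release_gate(baseline: dict[str, float], candidate: dict[str, float]) -> list[str]:
    """Return the regressions that should block the release (empty list means pass)."""
    failures = []
    for name, direction in METRICS.items():
        # Positive value means the candidate is worse on this metric.
        worse_by = (baseline[name] - candidate[name]) * direction
        if worse_by > TOLERANCE[name]:
            failures.append(f"{name}: {baseline[name]} -> {candidate[name]}")
    return failures


baseline = {"precision_at_10": 0.72, "citation_recall": 0.81, "faithfulness": 0.95, "p95_latency_ms": 900.0}
candidate = {"precision_at_10": 0.74, "citation_recall": 0.805, "faithfulness": 0.93, "p95_latency_ms": 950.0}
failures = release_gate(baseline, candidate)
```

In a CI job, a non-empty `failures` list would fail the build; here the candidate improves precision but regresses faithfulness, so it is blocked.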