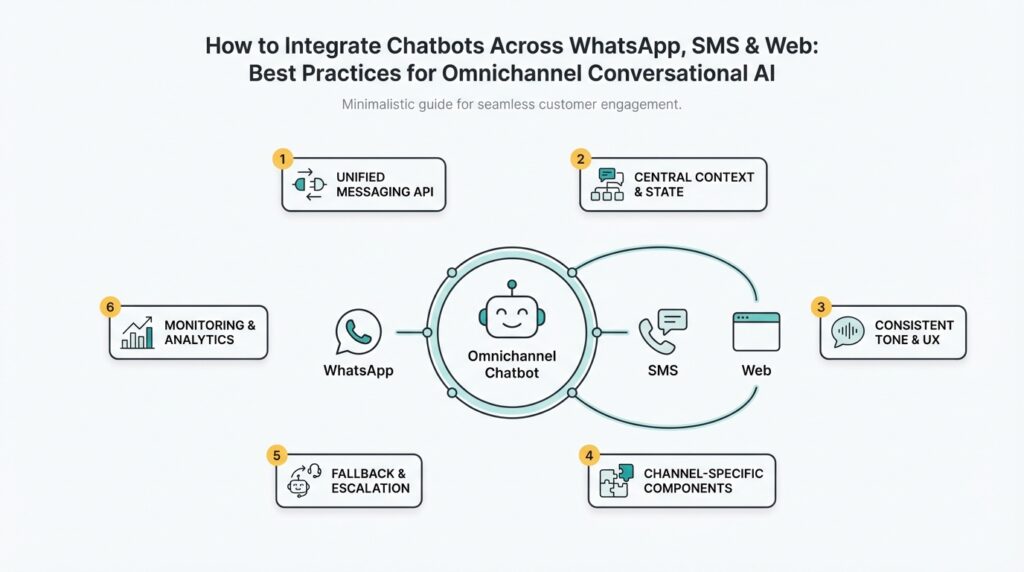

Define Omnichannel Goals and KPIs

Building on this foundation, the first priority is translating business outcomes into a small set of measurable objectives that guide engineering trade-offs for omnichannel conversational AI chatbots. Start by naming the top one or two outcomes you need—reduce contact center volume, increase self-serve conversions, or improve customer satisfaction—and make those outcomes the north star for every metric you collect. When you frame goals this way, instrumenting and prioritizing work (NLP model improvements, rich media on WhatsApp, or latency optimizations on web) becomes a direct line to business value rather than an abstract engineering exercise.

Differentiate primary KPIs from supporting metrics so you don’t chase noisy signals. Primary KPIs should map directly to the business outcome: for cost reduction that might be contact deflection rate or cost per conversation; for revenue it will be conversion rate or average order value influenced by chat; for experience it’s CSAT or end-to-end response latency. Supporting metrics—intent recognition accuracy, fallback rate, handoff frequency, and session persistence—explain why the primary KPI moved and drive engineering decisions. Choose one primary KPI per outcome and a handful of supporting metrics so your dashboards remain actionable rather than cluttered.

Channel characteristics should shape which KPIs you prioritize for WhatsApp, SMS, and web. WhatsApp and web chat let you leverage rich messages and persistent threads, so measure session completion rate and media-handling success in addition to CSAT. SMS is constrained by length and delivery variability, so track deliverability, message truncation incidents, and response latency as first-class metrics. For instance, a commerce flow on the web may prioritize conversion rate and time-to-purchase while a WhatsApp support bot might prioritize first-contact resolution and retention of context across sessions.

Instrument events and design an analytics schema that makes cross-channel comparison reliable. Emit a unique conversation_id, channel tag, event_type (e.g., message_received, intent_detected, handoff_initiated, conversation_resolved), timestamps, and outcome flags for each terminal state. This lets you compute clean metrics: median bot response time is median(timestamp_bot_response - timestamp_user_message) grouped by channel, and containment rate is the number of conversations without a handoff_initiated event divided by total conversations. Store raw events in your data warehouse and derive daily aggregates from them; that separation preserves diagnostic fidelity while keeping dashboards performant.
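
The two formulas above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the `bot_response` event type, the `ts` field (epoch seconds), and the sample events are assumptions made for the example.

```python
from collections import defaultdict
from statistics import median

# Hypothetical raw events; "bot_response" and "ts" are assumed names for this sketch.
events = [
    {"conversation_id": "c-1", "channel": "web_chat", "event_type": "message_received", "ts": 100.0},
    {"conversation_id": "c-1", "channel": "web_chat", "event_type": "bot_response", "ts": 101.5},
    {"conversation_id": "c-1", "channel": "web_chat", "event_type": "conversation_resolved", "ts": 130.0},
    {"conversation_id": "c-2", "channel": "sms", "event_type": "message_received", "ts": 200.0},
    {"conversation_id": "c-2", "channel": "sms", "event_type": "bot_response", "ts": 204.0},
    {"conversation_id": "c-2", "channel": "sms", "event_type": "handoff_initiated", "ts": 260.0},
    {"conversation_id": "c-3", "channel": "sms", "event_type": "message_received", "ts": 300.0},
    {"conversation_id": "c-3", "channel": "sms", "event_type": "bot_response", "ts": 302.0},
]


def containment_rate(events):
    """Conversations without handoff_initiated divided by total conversations."""
    all_ids = {e["conversation_id"] for e in events}
    handed_off = {e["conversation_id"] for e in events
                  if e["event_type"] == "handoff_initiated"}
    return (len(all_ids) - len(handed_off)) / len(all_ids) if all_ids else 0.0


def median_response_time_by_channel(events):
    """Median of (bot response ts - preceding user message ts), grouped by channel."""
    pending = {}                # conversation_id -> ts of the last unanswered user message
    deltas = defaultdict(list)  # channel -> observed response latencies
    for e in sorted(events, key=lambda e: e["ts"]):
        cid = e["conversation_id"]
        if e["event_type"] == "message_received":
            pending[cid] = e["ts"]
        elif e["event_type"] == "bot_response" and cid in pending:
            deltas[e["channel"]].append(e["ts"] - pending.pop(cid))
    return {channel: median(values) for channel, values in deltas.items()}
```

In a warehouse the same logic would usually be a grouped SQL query over the raw event table; the Python version is only meant to make the definitions unambiguous.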

How do you operationalize targets and guardrails? Define service-level objectives (SLOs) and set alert thresholds on the most critical signals, for example SLO breaches on median response time, sudden rises in fallback rate, or a drop in intent accuracy after an NLP model deploy. Run a power calculation before each A/B experiment so the test is large enough to attribute KPI deltas to model changes or UI tweaks. Finally, translate KPI movement into cost/benefit terms: compute cost per deflected conversation and compare it to contact center staffing costs to prioritize roadmap items that improve ROI.
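
As a rough sketch of the power calculation and the cost comparison, the helper below uses the standard normal-approximation formula for a two-sided test of two proportions. The function names, the default alpha and power values, and the cost inputs are assumptions for illustration; a dedicated statistics package may be preferable for real experiments.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(p_baseline, p_target, alpha=0.05, power=0.8):
    """Approximate conversations needed per arm to detect p_baseline -> p_target."""
    if p_baseline == p_target:
        raise ValueError("baseline and target rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    variance = p_baseline * (1 - p_baseline) + p_target * (1 - p_target)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_baseline - p_target) ** 2)


def cost_per_deflected_conversation(bot_cost, total_conversations, containment_rate):
    """Bot operating cost divided by the number of conversations it contained."""
    deflected = total_conversations * containment_rate
    if deflected <= 0:
        raise ValueError("no deflected conversations in this period")
    return bot_cost / deflected
```

Comparing the second figure with your fully loaded cost per agent-handled contact gives a simple yardstick for ranking roadmap items.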

Take a cadence-based approach to governance so metrics lead product decisions rather than react to incidents. Create a weekly KPI review with product, data, and ops where the primary KPI, supporting diagnostics, and any experiments are discussed; maintain a cross-channel scoreboard that highlights channel-specific priorities. This gives you a repeatable process to tune conversational AI behavior across WhatsApp, SMS, and web, and sets the stage for instrumenting experiments and scaling the bot confidently as we move into implementation.

Map User Journeys Across Channels

Building on this foundation, the next practical step is turning KPI-driven intent and event instrumentation into concrete, cross-channel user journey maps that engineers and product teams can act on. Start with your primary outcomes (for example, contact deflection or conversion) and trace the end-to-end flow a real user takes across WhatsApp, SMS, and web chat. A clear journey map is not just a diagram; it’s an executable specification that captures entry points, channel-specific constraints, state transitions, identity links, and handoff triggers so your engineering choices directly support the KPIs you already defined.

Map each journey from the user’s perspective first, then translate to events and state models. Ask: how does a user start the flow, where might they pause, and what causes the bot to escalate to a live agent? For each step record the channel, the expected message shape (text, template, media), the intent(s) you expect to detect, and the terminal outcomes (resolved, handed off, converted). This approach forces you to reconcile WhatsApp’s rich-media templates, SMS’s length and delivery variability, and web chat’s session continuity into a single, testable specification for omnichannel conversational AI.

Turn those human-readable journeys into an event schema that your analytics and orchestration layers can consume. Emit a canonical conversation_id, a channel tag, event_type (e.g., message_received, intent_detected, handoff_initiated), timestamps, and minimal context payloads so you can reconstruct a session from raw events. For example: {"conversation_id":"...","channel":"whatsapp","event_type":"intent_detected","intent":"order_status","timestamp":"..."}. That standardized schema makes it straightforward to compare containment rates, median response times, or model fallbacks across channels without manual reconciliation.

Implement an identity and state rehydration strategy so context follows the user rather than the channel. Use a lightweight session store (for example, Redis or a fast key-value store) keyed by conversation_id and by any persistent identifiers you can capture (phone number, user_id, or hashed identifiers). When a user moves from web chat to WhatsApp or returns via SMS, rehydrate the last known state, slot values, and the last detected intent before resuming. This avoids repeated questions, reduces friction, and materially improves first-contact resolution rates in practice.
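
A minimal sketch of that rehydration step follows. It uses an in-memory dictionary as a stand-in for Redis so the example stays self-contained; the class name, method names, and the 30-minute default TTL are assumptions, not part of any particular library.

```python
import copy
import time
from typing import Optional


class SessionStore:
    """In-memory stand-in for Redis or another key-value store (assumption for this sketch)."""

    def __init__(self, ttl_seconds: float = 1800.0):
        self._ttl = ttl_seconds
        self._states = {}   # conversation_id -> (expires_at, state)
        self._by_user = {}  # persistent identifier -> conversation_id

    def save(self, conversation_id: str, user_key: str, state: dict,
             now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self._states[conversation_id] = (now + self._ttl, copy.deepcopy(state))
        self._by_user[user_key] = conversation_id

    def rehydrate(self, user_key: str, channel: str,
                  now: Optional[float] = None) -> Optional[dict]:
        """Return the last known state tagged with the new channel, or None if absent or expired."""
        now = time.time() if now is None else now
        conversation_id = self._by_user.get(user_key)
        if conversation_id is None:
            return None
        expires_at, state = self._states[conversation_id]
        if now >= expires_at:
            # Expired context is dropped so the bot starts a fresh flow.
            del self._states[conversation_id]
            del self._by_user[user_key]
            return None
        resumed = copy.deepcopy(state)
        resumed["last_channel"] = channel
        return resumed
```

With a real Redis deployment the expiry would normally be delegated to key TTLs rather than checked by hand.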

Design explicit channel transition points and guardrails in your flows to prevent context loss and loops. Define which actions are safe to continue when a user switches channels (viewing order status) and which require re-authentication (changing payment method). For templates and rich messages, provide graceful degradation rules: if a WhatsApp template is unavailable, fall back to a concise SMS summary with a link to web chat. These rules reduce error cases and keep KPIs comparable across channels by making behavior deterministic.

How do you validate these journey maps? Run instrumented experiments and trace individual conversation replays from event logs to verify state continuity and outcome attribution. Implement synthetic user tests that simulate channel switches, message delays, and delivery failures to measure how often context is lost or a handoff is triggered incorrectly. Feed those diagnostics back into the supporting metrics you set earlier—fallback rate, handoff frequency, and session persistence—so the journey map becomes a living artifact you tweak with data-driven confidence.

When you craft these cross-channel journeys thoughtfully, you convert abstract KPIs into repeatable engineering work: reusable event contracts, reliable session rehydration, and deterministic channel-fallback behavior. This makes omnichannel conversational AI measurable and maintainable, and it gives you a repeatable path to improve the exact metrics that matter—conversion, containment, and customer satisfaction—as you scale across WhatsApp, SMS, and web chat.

Design Unified Conversation Flows and Context

Building on the journey maps and KPI foundation we already described, the most important engineering challenge is making context travel reliably between channels so the user experience feels like a single conversation. Omnichannel conversational AI must preserve intent, partially completed forms (slots), authentication state, and UI affordances when a customer moves from web chat to WhatsApp or SMS. How do you avoid asking the same question twice after a channel switch, and how do you present rich content when a channel is text‑only? We’ll show concrete state patterns, reconciliation rules, and testing strategies you can apply to keep context coherent across WhatsApp, SMS, and web chat.

Start by defining a canonical, versioned conversation state that every component—NLP, orchestration, UI renderer—reads and writes. The state should be explicit about which fields are authoritative and which are derived: for example, last_intent, slots (partially filled form values), auth_level (unauthenticated, soft_auth, strong_auth), last_channel, sequence_number, last_activity_ts, and schema_version. Versioning the schema lets you migrate fields safely without breaking older orchestrators; sequence_number enforces ordering guarantees and prevents race conditions during rapid channel switches. Below is a compact JSON example of a canonical state you might persist in Redis or a document store:

```json
{
  "conversation_id": "c-123",
  "schema_version": 3,
  "sequence_number": 42,
  "last_intent": "order_status",
  "slots": {"order_id": "ABC123", "delivery_zip": null},
  "auth_level": "soft_auth",
  "last_channel": "web_chat",
  "last_activity_ts": "2026-02-15T12:34:56Z"
}
```

Design rendering rules that are channel-aware and deterministic so your flow logic separates what to say from how to render it. For example, web chat can show a product carousel and a “Track order” CTA, WhatsApp can send a template with a button or rich media, and SMS must fall back to a concise text message with a short link. Codify graceful degradation rules: when rich media is unavailable, truncate to a one-line summary and include a context token so the web chat can rehydrate the experience later. Also define which actions require re-authentication (changing payment info should require strong_auth regardless of channel) so security is consistent across WhatsApp, SMS, and web chat.
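
One way to express these rendering rules is a single function that takes a channel-agnostic message and returns a channel-specific payload. The payload shapes below are hypothetical and do not match any provider's real API; the message keys (`text`, `cta_label`, `cta_url`, `context_token`) are likewise assumptions for the sketch.

```python
SMS_MAX_CHARS = 160  # length of a single GSM-7 SMS segment, used here as the budget


def render(message: dict, channel: str) -> dict:
    """Translate one channel-agnostic message into a channel-specific payload."""
    text, url = message["text"], message["cta_url"]
    if channel == "web_chat":
        return {"type": "card", "text": text,
                "buttons": [{"label": message["cta_label"], "url": url}]}
    if channel == "whatsapp":
        return {"type": "template", "body": text,
                "button": {"label": message["cta_label"], "url": url}}
    if channel == "sms":
        # Degrade to a one-line summary plus a link carrying the context token.
        link = f"{url}?ctx={message['context_token']}"
        budget = SMS_MAX_CHARS - len(link) - 1  # room for the summary and one space
        if budget < 4:
            raise ValueError("link too long to fit in one SMS segment")
        first_line = text.splitlines()[0] if text else ""
        if len(first_line) > budget:
            first_line = first_line[: budget - 3].rstrip() + "..."
        return {"type": "text", "body": f"{first_line} {link}"}
    raise ValueError(f"unsupported channel: {channel}")
```

Keeping this translation in one place means flow logic never branches on the channel, which is what makes the behavior deterministic and testable.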

When a user switches channels, rehydrate context atomically and reconcile conflicting updates. Rehydration is the process of loading the canonical state into the active orchestrator; define a two‑step pattern: (1) fetch canonical state and lock it (optimistic or short pessimistic lock), then (2) apply incoming user event only if its sequence_number is newer than stored state to avoid regressions. Idempotency—ensuring the same event applied twice has no adverse effect—prevents duplicate actions after message retries. If your orchestration layer supports event sourcing, replay the minimal set of events after the last applied sequence_number to rebuild derived fields rather than patching state ad hoc.
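
The sequence check in step (2) can be sketched as a pure function. It assumes the ingestion layer assigns each inbound event a per-conversation `sequence_number`; the event keys (`slots`, `intent`, `channel`) are illustrative names rather than a fixed contract.

```python
import copy


def apply_event(state: dict, event: dict) -> tuple:
    """Return (new_state, applied).

    Events at or below the stored sequence_number are ignored, so retries and
    out-of-order deliveries cannot regress the state.
    """
    if event["sequence_number"] <= state["sequence_number"]:
        return state, False
    new_state = copy.deepcopy(state)  # never mutate the caller's copy
    new_state["slots"].update(event.get("slots", {}))
    if "intent" in event:
        new_state["last_intent"] = event["intent"]
    new_state["last_channel"] = event["channel"]
    new_state["sequence_number"] = event["sequence_number"]
    return new_state, True
```

Because a replayed event returns the state unchanged, applying the same delivery twice is harmless, which is the idempotency property described above.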

Context freshness and conflict resolution need explicit policies so engineering trade-offs are predictable. Assign TTLs for ephemeral context (e.g., slot filling for a checkout flow might expire after 30 minutes) and give user-provided data the highest priority; automated suggestions or bot-filled defaults should rank lower. Use the confidence score from your NLU to break ties when the same user expresses different intents in rapid succession, and implement back-off rules that ask a single clarifying question rather than escalating immediately. For concurrent updates across channels, apply last-writer-wins only when the incoming write was based on the current sequence_number; otherwise surface a reconciliation step that asks the user to confirm the ambiguous choice.
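
The TTL and priority rules for a single slot can be sketched as follows. The slot shape (a dict with `value`, `source`, and `ts` in epoch seconds), the source names, and the 30-minute default are assumptions chosen to mirror the policy described above.

```python
SOURCE_PRIORITY = {"user": 2, "bot_default": 1}  # user-provided data outranks bot defaults


def merge_slot(current, incoming, now, ttl_seconds=1800):
    """Pick the winning value for one slot, or None if nothing is still fresh."""
    def live(slot):
        return slot is not None and now - slot["ts"] < ttl_seconds

    if not live(incoming):
        return current if live(current) else None
    if not live(current):
        return incoming
    cur_rank = SOURCE_PRIORITY[current["source"]]
    inc_rank = SOURCE_PRIORITY[incoming["source"]]
    if inc_rank != cur_rank:
        return incoming if inc_rank > cur_rank else current
    # Same source: the more recent value wins.
    return incoming if incoming["ts"] >= current["ts"] else current
```

Intent tie-breaking by NLU confidence and the user-facing reconciliation step are left out here; they would sit on top of this per-slot rule.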

Make context integrity measurable and testable: instrument a context_loss_rate metric that counts switches where required slots are missing after rehydration, and track mismatched_intent_after_switch to catch regressions when you release model or schema changes. Run synthetic tests that simulate message delays, duplicate deliveries, and channel transitions; keep a set of “golden conversations” that must replay end‑to‑end with identical terminal states across WhatsApp, SMS, and web chat. Finally, codify privacy and audit trails—record who or what changed auth_level and when—and use these logs both for debugging and compliance. Taking these steps ensures the omnichannel conversational AI you build behaves predictably, reduces repeated questions, and keeps friction low as users move between channels.
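
A small sketch of the context_loss_rate calculation is shown below. The shape of each switch record (`intent` plus `slots_after`) and the mapping from intent to required slots are assumptions for illustration.

```python
def context_loss_rate(switches, required_slots):
    """Share of channel switches where a required slot was missing after rehydration.

    A slot counts as missing when it is absent or None.
    """
    if not switches:
        return 0.0
    lost = 0
    for switch in switches:
        needed = required_slots.get(switch["intent"], [])
        if any(switch["slots_after"].get(name) is None for name in needed):
            lost += 1
    return lost / len(switches)
```

Running this over both production switch events and the golden conversations gives one number to watch before and after each schema or model release.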

Choose APIs, SDKs, And Orchestration Tools

Building on this foundation, the choices you make for APIs, SDKs, and orchestration tools determine whether your omnichannel bot behaves like a fractured set of channel-specific scripts or a coherent conversational service. Start by mapping those choices back to the KPIs and journeys we defined earlier: delivery guarantees and latency affect containment and CSAT; template support and message size affect conversion and media handling. How do you choose between vendor APIs and open-source SDKs? Asking that early avoids refactors that leak channel-specific complexity into your business logic.

Prioritize interoperability, observability, and contract stability when evaluating APIs and SDKs. Look for APIs that expose durable webhooks, delivery receipts, and retry semantics you can reason about; avoid providers that hide rate limits or change template contracts frequently. For SDKs favor typed, well-documented libraries that match your service language and give you control over serialization, retries, and timeout behavior so you can keep intent detection and state reconciliation deterministic across WhatsApp, SMS, and web chat.

Dig into channel provider APIs for operational characteristics that directly affect your flows. Ensure the API provides webhook signature verification, delivery receipts with timestamps, and explicit template lifecycle management so you can instrument metrics like message truncation incidents and deliverability. Implement webhook verification on the server (for example: verify(signature, payload, secret)) and record raw events so your analytics layer can reconstruct conversations for debugging and KPI attribution. These capabilities are nonfunctional requirements that usually break cross-channel parity when overlooked.

Choose SDKs that make the right things easy and the wrong things hard. Use an adapter pattern so your business orchestrator talks to a minimal interface (sendMessage, receiveEvent, getDeliveryState) and a thin adapter around each provider SDK (for example, Twilio for SMS or WhatsApp) implements that interface; this reduces vendor lock-in and keeps the orchestration layer channel-agnostic. Pay attention to SDK lifecycle: prefer libraries with active maintenance, changelogs that explain breaking changes, and semantic versioning so you can align SDK upgrades with your schema migrations and NLU model releases.
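
The adapter pattern might look like this in Python. The interface uses snake_case versions of the three method names above; `FakeSmsAdapter` is a stand-in written for this sketch, and a real adapter would wrap a provider SDK behind the same methods.

```python
from typing import Protocol


class ChannelAdapter(Protocol):
    """Minimal interface the orchestrator depends on."""

    def send_message(self, to: str, body: str) -> str: ...
    def receive_event(self, raw: dict) -> dict: ...
    def get_delivery_state(self, message_id: str) -> str: ...


class FakeSmsAdapter:
    """Hypothetical adapter used only for illustration and tests."""

    def __init__(self):
        self._sent = {}

    def send_message(self, to: str, body: str) -> str:
        message_id = f"sms-{len(self._sent) + 1}"
        self._sent[message_id] = {"to": to, "body": body, "state": "queued"}
        return message_id

    def receive_event(self, raw: dict) -> dict:
        # Normalize a provider-shaped webhook into the canonical event shape.
        return {"channel": "sms", "event_type": "message_received",
                "from": raw["From"], "text": raw["Body"]}

    def get_delivery_state(self, message_id: str) -> str:
        return self._sent[message_id]["state"]


class Orchestrator:
    """Business logic sees only the ChannelAdapter interface."""

    def __init__(self, adapters: dict):
        self._adapters = adapters

    def notify(self, channel: str, to: str, body: str) -> tuple:
        adapter: ChannelAdapter = self._adapters[channel]
        message_id = adapter.send_message(to, body)
        return message_id, adapter.get_delivery_state(message_id)
```

Swapping providers then means writing one new adapter class, with no change to the orchestrator.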

Pick orchestration tools that match the complexity of your journeys rather than the hype. For simple flows, a lightweight event-driven orchestrator with Redis-backed state and a deterministic state machine is often faster to operate and test than a heavyweight workflow engine. For multi-step commerce or verification flows that require parallel tasks, retries, and clear handoff points, use an orchestration layer that supports durable workflows, idempotent tasks, and observability (traceable sequence_numbers and audit logs). Integrate this orchestration with your session rehydration strategy so the orchestrator can atomically load the canonical conversation state and continue processing without duplication or context loss.
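
For the simple end of that spectrum, a deterministic state machine can be as small as a transition table. The state and event names below are invented for an order-status flow and are only an example of the shape.

```python
# (current_state, event) -> next_state; anything not listed leaves the state unchanged.
TRANSITIONS = {
    ("awaiting_order_id", "order_id_provided"): "awaiting_zip",
    ("awaiting_zip", "zip_provided"): "resolved",
    ("awaiting_order_id", "agent_requested"): "handed_off",
    ("awaiting_zip", "agent_requested"): "handed_off",
}
TERMINAL_STATES = {"resolved", "handed_off"}


def step(state: str, event: str) -> str:
    """Advance one step; terminal states absorb all further events."""
    if state in TERMINAL_STATES:
        return state
    return TRANSITIONS.get((state, event), state)


def run(events, start: str = "awaiting_order_id") -> str:
    """Replay a list of events and return the final state."""
    state = start
    for event in events:
        state = step(state, event)
    return state
```

Because the table is plain data, the same event list always produces the same terminal state, which is what makes replay-based tests possible.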

When you implement these choices, iterate with a guardrail-first approach: build a small abstraction layer, capture canonical events, and add channel-specific renderers that translate a single bot intent into channel-appropriate payloads. Instrument delivery metrics and synthetic tests that simulate channel switches, message delays, and template failures so you can validate that the orchestration behaves as intended. Taking this concept further, the next step is to wire rendering and security rules into the orchestrator so UI rendering, authentication level, and handoff logic remain consistent as conversations move between WhatsApp, SMS, and web chat.

Implement Identity Linking and Session Sync

Building on the journey maps and canonical state we discussed earlier, the practical work left to do is reliably tie a human across channels and make that link drive session sync so context follows the user. Identity linking and session sync are the primitives that let a WhatsApp message resume a web chat checkout, or an SMS inquiry pick up where an authenticated web session left off; get these wrong and users repeat information and abandonment rises. How do you perform identity resolution without creating security or privacy holes? We’ll show concrete patterns and minimal API/DB examples you can implement today.

Start with an identity map that separates persistent identifiers from ephemeral conversation IDs. Create a small table (or KV namespace) that stores canonical_user_id, channel, a hashed channel identifier (phone number or WhatsApp id), last_seen_ts, and a link_status field; store only hashed_channel_id, computed with a peppered hash, rather than the raw identifier to reduce exposure. Because one user can link several channels, key the table on the channel identifier rather than on canonical_user_id. Implement a verification-first linking flow: when a user authenticates on web, generate a short-lived link token (10–15 minutes) and display a QR or one-time code the user can send to WhatsApp or SMS. On receipt, exchange the token for canonical_user_id and atomically create the identity map entry. Example schema (SQL-style):

```sql
CREATE TABLE identity_map (
  canonical_user_id UUID NOT NULL,   -- one user may link several channels
  channel TEXT NOT NULL,
  hashed_channel_id TEXT NOT NULL,   -- peppered hash of the phone number or WhatsApp id
  link_status TEXT NOT NULL,
  last_seen_ts TIMESTAMP,
  PRIMARY KEY (channel, hashed_channel_id)
);
```

Wire a minimal API endpoint for linking that enforces rate limits and records audit metadata. POST /link-identity should accept {channel, channel_id, link_token} and return 200 only after verifying the token and consent. Record who performed the link and when so you can audit or revert links if a number is recycled.
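
The core of such an endpoint can be sketched without a web framework. Everything named here (`IdentityLinker`, `issue_token`, the status codes returned, the hard-coded pepper) is an assumption for illustration; rate limiting and the consent check are deliberately left out to keep the example short.

```python
import hashlib
import hmac
import secrets
import time

PEPPER = b"replace-with-a-secret-from-your-vault"  # assumption: loaded from a secret manager
LINK_TOKEN_TTL = 600  # seconds; 10 minutes, the low end of the range discussed above


def hash_channel_id(channel: str, channel_id: str) -> str:
    """Keyed (peppered) hash so the raw phone number is never stored."""
    return hmac.new(PEPPER, f"{channel}:{channel_id}".encode(), hashlib.sha256).hexdigest()


class IdentityLinker:
    def __init__(self):
        self._tokens = {}       # link_token -> (canonical_user_id, expires_at)
        self.identity_map = {}  # (channel, hashed_channel_id) -> row
        self.audit_log = []     # append-only record of link events

    def issue_token(self, canonical_user_id: str, now=None) -> str:
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(16)
        self._tokens[token] = (canonical_user_id, now + LINK_TOKEN_TTL)
        return token

    def link_identity(self, channel: str, channel_id: str, link_token: str, now=None):
        """Body of a POST /link-identity style handler; returns (status, payload)."""
        now = time.time() if now is None else now
        entry = self._tokens.pop(link_token, None)  # pop makes the token single-use
        if entry is None or now >= entry[1]:
            return 403, {"error": "invalid_or_expired_token"}
        user_id = entry[0]
        key = (channel, hash_channel_id(channel, channel_id))
        self.identity_map[key] = {"canonical_user_id": user_id,
                                  "link_status": "linked", "last_seen_ts": now}
        self.audit_log.append({"event": "identity_linked", "canonical_user_id": user_id,
                               "channel": channel, "ts": now})
        return 200, {"canonical_user_id": user_id}
```

The audit entries are what make it possible to revert a link later if a number turns out to be recycled.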

For session sync, persist a versioned canonical state and implement atomic rehydration with sequence numbers and short locks. When an inbound event arrives from any channel, fetch the canonical state by canonical_user_id or conversation_id, compare the event sequence_number to the stored sequence_number, then apply changes only if the incoming sequence is newer. Use optimistic concurrency where possible: increment a sequence_number on every write and reject writes with stale numbers to prevent regressions after retries. A concise rehydrate flow looks like this in pseudocode: fetch state -> lock or compare sequence_number -> apply event -> increment sequence_number -> release. This pattern preserves idempotency and keeps session sync deterministic across WhatsApp, SMS, and web chat.
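
The write side of that flow can be sketched as a compare-and-set operation. The store below is an in-memory stand-in with a short lock; `StateStore`, `compare_and_set`, and `StaleWriteError` are names invented for the example.

```python
import threading


class StaleWriteError(Exception):
    """Raised when a writer's view of the state is older than what is stored."""


class StateStore:
    def __init__(self):
        self._lock = threading.Lock()  # short lock guarding each read or write
        self._states = {}

    def _current(self, conversation_id: str) -> dict:
        return self._states.get(conversation_id, {"sequence_number": 0, "slots": {}})

    def get(self, conversation_id: str) -> dict:
        with self._lock:
            return dict(self._current(conversation_id))

    def compare_and_set(self, conversation_id: str, expected_seq: int, changes: dict) -> int:
        """Apply changes only if the stored sequence_number still equals expected_seq."""
        with self._lock:
            current = self._current(conversation_id)
            if current["sequence_number"] != expected_seq:
                raise StaleWriteError(
                    f"expected {expected_seq}, stored {current['sequence_number']}")
            updated = {**current, **changes, "sequence_number": expected_seq + 1}
            self._states[conversation_id] = updated
            return updated["sequence_number"]
```

A caller that receives StaleWriteError would re-fetch the state, re-apply its event, and retry, which is the usual optimistic-concurrency loop.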

When users cross channels, preserve authentication level and explicit consent as part of the rehydrated state. Treat auth_level as authoritative: soft_auth (phone-verified) allows read-only transitions like viewing order status, while strong_auth (session token or 2FA) is required for payment changes. Implement short-lived cross-channel tokens for SSO-like linking: when a logged-in web user wants to move to WhatsApp, create a one-time token that maps to canonical_user_id; when WhatsApp presents the token, your system upgrades the channel link and copies session slots atomically. This avoids asking for full re-authentication for low-risk actions but enforces strong checks for sensitive operations.
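
A minimal sketch of the auth-level check follows. The three levels come from the canonical state described earlier; the action names and the choice to default unknown actions to the strictest level are assumptions.

```python
AUTH_RANK = {"unauthenticated": 0, "soft_auth": 1, "strong_auth": 2}

# Hypothetical action catalogue; unknown actions fall back to the strictest level.
REQUIRED_AUTH = {
    "view_order_status": "soft_auth",
    "change_payment_method": "strong_auth",
}


def authorize(action: str, auth_level: str) -> tuple:
    """Return (allowed, required_level) for an action at the given auth level."""
    required = REQUIRED_AUTH.get(action, "strong_auth")
    return AUTH_RANK[auth_level] >= AUTH_RANK[required], required
```

When the check fails, the orchestrator can use the returned level to prompt for exactly the step-up the action needs instead of a full re-login.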

Plan for corner cases: phone number reuse, SIM swaps, and shared numbers complicate identity linking. Provide unlink and dispute workflows that require multi-step verification before changing canonical mappings. Respect privacy rules by storing minimal PII, logging link and unlink events, and retaining consent timestamps. If you need to comply with regional regulations, keep an exportable audit trail that shows when consent was given and which channel was linked or unlinked.

Make identity linking and session sync observable and testable. Instrument metrics such as identity_link_success_rate, context_loss_rate after channel switch, and mismatched_intent_after_switch; emit events that let you replay conversations end-to-end. Run synthetic tests that simulate token expiry, duplicate deliveries, and number reassignment to validate rehydration and reconciliation logic. Conversation replay tools that rebuild state from canonical events are invaluable for debugging and for proving that session sync preserves UX.

These patterns let you treat WhatsApp, SMS, and web chat as transport layers rather than separate experiences: link identities securely, sync sessions deterministically, and design clear guardrails for sensitive actions. Taking these steps reduces repeated questions, improves first-contact resolution, and gives you measurable signals to iterate on next—rendering and handoff policies in the orchestrator are the logical next area to wire into this linked-and-synced model.

Ensure Compliance, Security, And Opt‑in

Building on this foundation, the first thing to acknowledge is that omnichannel conversational AI across WhatsApp, SMS, and web chat demands that consent and security be engineering-first concerns, not afterthoughts. How do you prove consent across channels and keep security auditable while preserving a smooth user experience? Start by treating opt-in as a required event in your canonical event model: every consent must be an immutable, timestamped record tied to a canonical_user_id and the originating channel so you can reconstruct who consented, when, and what they agreed to.

Design your consent model to be both minimal and explicit: keep only what you need and store it durably. Persist a consent record that includes consent_type (marketing, transactional), channel (whatsapp, sms, web_chat), consent_timestamp, consent_source (button, template opt-in, IVR), and a consent_token that can be presented for revocation or dispute. This structure supports audits, allows targeted revocation, and prevents the common pitfall of scattered or ambiguous approvals that break deliverability and compliance later.

Make consent records actionable by exposing a narrow API for recording and checking consent. For example, a POST /consents endpoint should validate inputs, write an append-only record, and return a short-lived verification token you can include in outbound templates. A compact JSON consent record might look like this:

```json
{
  "canonical_user_id": "uuid-1234",
  "channel": "whatsapp",
  "consent_type": "transactional",
  "consent_timestamp": "2026-02-28T15:04:05Z",
  "consent_token": "cns-abc123",
  "consent_source": "web_chat_button"
}
```
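
An append-only consent log with a lookup for the current status could be sketched like this. The class and method names, the `event` field, and the token format are assumptions; the other fields follow the record shown above.

```python
import secrets


class ConsentLog:
    """Append-only log: records are never updated or deleted, only added."""

    VALID_EVENTS = ("consent_granted", "consent_revoked")

    def __init__(self):
        self._records = []

    def record(self, canonical_user_id, channel, consent_type, event,
               consent_timestamp, consent_source):
        if event not in self.VALID_EVENTS:
            raise ValueError(f"unknown consent event: {event}")
        rec = {
            "canonical_user_id": canonical_user_id,
            "channel": channel,
            "consent_type": consent_type,
            "event": event,
            "consent_timestamp": consent_timestamp,
            "consent_source": consent_source,
            "consent_token": "cns-" + secrets.token_hex(6),
        }
        self._records.append(rec)
        return rec

    def has_consent(self, canonical_user_id, channel, consent_type) -> bool:
        """True if the most recent matching record is a grant."""
        latest = None
        for rec in self._records:  # append order is chronological order
            if (rec["canonical_user_id"], rec["channel"], rec["consent_type"]) == \
                    (canonical_user_id, channel, consent_type):
                latest = rec
        return latest is not None and latest["event"] == "consent_granted"

    def history(self, canonical_user_id):
        """Time-ordered records for one user, for audits or disputes."""
        return [r for r in self._records if r["canonical_user_id"] == canonical_user_id]
```

The orchestrator would call has_consent before every outbound send, so a revocation takes effect on the next message without any record being rewritten.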

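Security controls must be coupled to consent checks and integrated into the orchestration layer: verify and authenticate every cross-channel action. Apply end-to-end encryption where supported, enforce TLS for webhooks and API calls, verify webhook payload signatures on receipt, and require attested tokens for state rehydration across channels. A minimal Python sketch of webhook signature verification, assuming the provider signs the raw request body with HMAC-SHA256 and sends the hex digest, looks like:

```python
import hashlib
import hmac

def verify_webhook(payload: bytes, signature: str, secret: bytes) -> bool:
    # Recompute the hex HMAC-SHA256 of the raw body using the shared secret.
    expected = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time, which avoids timing side channels.
    return hmac.compare_digest(expected, signature)
```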

Tie delivery and template workflows to these checks so your orchestrator refuses to send sensitive content unless consent and auth_level checks pass.

Channel-specific behaviors matter because opt-in rules and the security surface differ by transport, so implement channel-aware opt-in flows. For WhatsApp, capture template approvals and a clear opt-in source before sending templates; for SMS, persist opt-in evidence tied to the phone number and keep opt-out fast and observable; for web chat, surface consent during login or via a persistent consent banner and link that to the same canonical_user_id used for WhatsApp/SMS linking. These concrete safeguards reduce legal risk and improve deliverability by aligning behavior with provider policies and carrier expectations.

Auditability and retention policies make compliance operable rather than theoretical: make every consent and security decision traceable. Emit immutable events for consent_granted, consent_revoked, webhook_verified, and auth_level_changed into your event store and keep an exportable audit trail for the retention period your policy requires. Implement TTLs on ephemeral slots and explicit retention for PII; when a user unlinks a channel or disputes a number, run a deterministic unlink workflow that both revokes consent and records the steps taken for dispute resolution.

Operationalize these patterns with monitoring, synthetic tests, and clear revocation flows, and test and measure compliance as rigorously as you test latency and handoffs. Add synthetic conversations that exercise opt-in, revocation, and channel-switch paths; set alerts on anomalies such as sudden drops in consent_acceptance_rate or increases in consent_revocation events. Define incident runbooks for suspected breaches, tie them to your SLOs, and ensure you can produce searchable, time-ordered consent logs for legal requests. Taking this approach keeps your omnichannel bot secure, provably consented, and ready to scale across WhatsApp, SMS, and web chat without creating legal or operational surprises.