Problem Overview and Scope

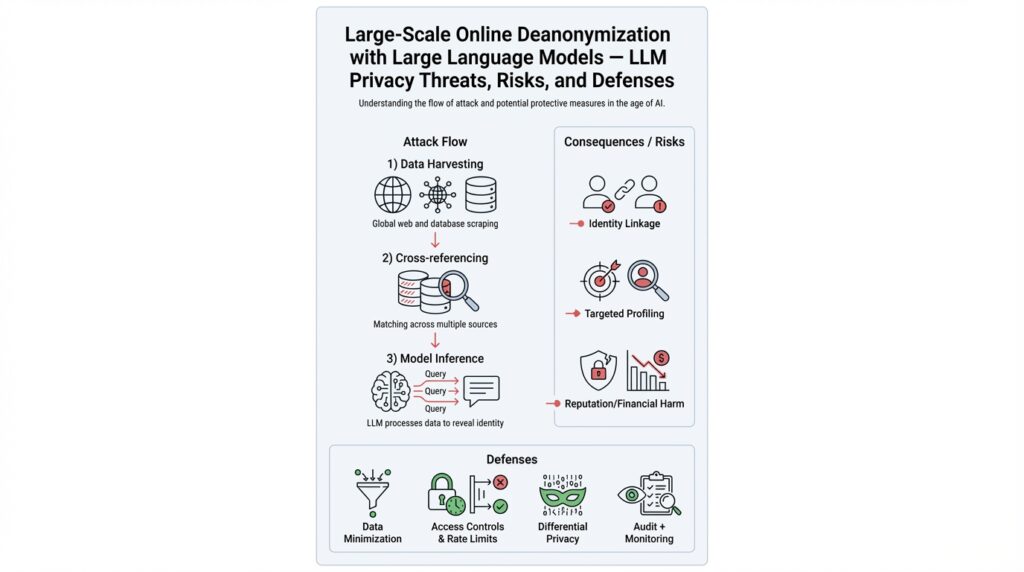

Deanonymization of user data enabled by large language models creates a privacy risk that demands concrete technical framing before we design defenses. Deanonymization, or re-identification, is the process of linking anonymized records back to real identities; large language models (LLMs) change the calculus because they can encode subtle linguistic and contextual signals at scale. How do you quantify the scope of those threats and which parts of your ML pipeline are actually at risk? In this section we pin down the attack surface, attacker capabilities, and the realistic boundaries for scaling deanonymization attacks using LLMs.

LLMs amplify correlation power by turning noisy, fragmented signals into high-confidence matches. Where traditional re-identification relied on structured quasi-identifiers (age, zip, gender), LLMs consume free text, metadata, and embeddings to surface latent identifiers: phraseology patterns, unique entity co-occurrences, timeline signals, and stylistic fingerprints. Consequently, data that seemed “safe” after redaction can still leak identity when combined with auxiliary datasets and LLM-based pattern matching. We’ll focus on practical scenarios—production APIs, fine-tuned models, and embedding indices—because those are where deanonymization moves from theoretical to feasible.

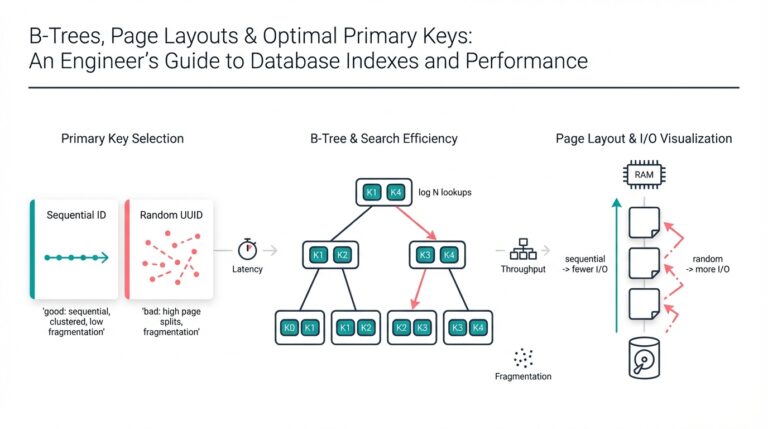

The threat surface spans training corpora, model outputs, embedding stores, telemetry, and developer tooling. For example, embedding-based similarity search used in retrieval-augmented generation creates an index that an attacker can probe: by submitting crafted prompts and measuring returned vectors or text, they can compute similarity to known public profiles and infer identity. In practice you’ll see attacks that compute cos_sim(query_vec, candidate_vec) > 0.9 and then escalate to cross-referencing public social posts or corporate directories. That chaining—from vector similarity to external lookup—is where deanonymization scaling becomes automated and low-cost.

Attacker patterns fall into two families: active probing and passive statistical inference. Active probing uses targeted prompts, few-shot templates, or API fuzzing to coax models into outputting identifying cues; passive inference leverages aggregated model behavior, embeddings, or side-channel metadata (response latencies, token counts) to correlate with auxiliary data. Attackers often orchestrate both approaches: run automated probes to harvest candidate identifiers, then run higher-precision verification queries against scraped public datasets. The key enabler is auxiliary data: without some external ground truth, an LLM’s high-confidence guess remains only a risk, not a confirmed re-identification.

The impact is technical, operational, and legal. From a systems perspective, deanonymization can corrupt training labels, poison feedback loops, and leak confidential relationships encoded in model weights or embedding indices. For users it translates to doxxing, targeted profiling, and higher-risk social engineering. For organizations it raises compliance exposure (data protection laws that require anonymization guarantees) and operational costs—retraining, model patching, or expensive differential privacy measures. You should prioritize models that serve user-provided sensitive content, fine-tuned internal models, and public-facing embedding indices for immediate mitigation.

To make this tractable we scope the analysis to attacker workflows that are automatable with commodity compute and publicly accessible auxiliary data, and to production systems where models or indices are available via API or internal services. We exclude highly specialized nation-state capabilities and hardware-side attacks, which follow different threat models. With these boundaries in place, the next sections will quantify attacker cost, show reproducible proof-of-concept patterns, and map defenses (from access controls to privacy-preserving training) to the specific risks we’ve outlined here.

Threat Model and Assumptions

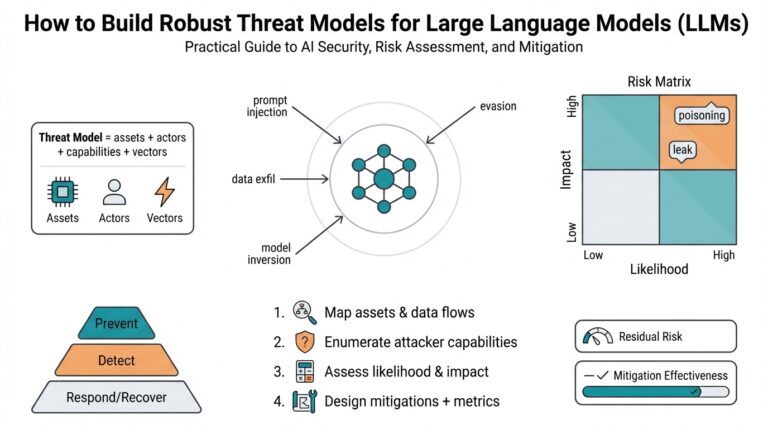

Building on this foundation, we need a crisp, testable framing of who can attack your system, what success looks like, and which assets are in scope—because threat modeling determines which mitigations make sense and how we measure residual risk. Who are we protecting against, and what can they realistically do? In practice that question collapses to a few measurable variables: the attacker’s access to model APIs, access to embedding vectors or indices, and the quantity and quality of auxiliary datasets they can leverage for correlation. If we don’t pin these down, we can’t quantify deanonymization risk or design defensible thresholds.

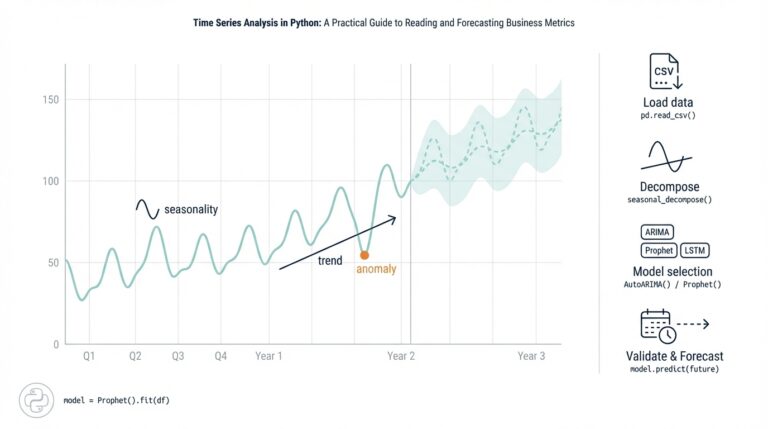

Start by defining attacker goals and success criteria. A re-identification attack’s goal is to map an anonymized record to a real-world identity with acceptable confidence; operationally we treat a success as the attacker producing a candidate identity above a chosen confidence threshold combined with independent external verification. That threshold can be statistical (e.g., posterior probability > 0.9) or pragmatic (the correct identity appears in the top-10 candidates and is verifiable via public posts). Metrics like top-k recall, precision at fixed false-positive rate, and expected cost-per-correct-identification let you compare defenses quantitatively and prioritize mitigations where deanonymization has the worst business and user impact.

Next, enumerate attacker capabilities in concrete tiers so tests map to realistic adversaries. At the lowest tier an attacker has public query access and can probe a hosted LLM or search an open embedding index; at the middle tier they hold API keys, can submit crafted prompts, and can collect response metadata and embeddings; at the highest practical tier for our scope they are insiders or exfiltrate embedding shards and auxiliary corpora. These capability tiers determine which primitives we assume the attacker can use—few-shot prompting, embedding similarity searches, and side-channel signals such as response latency or tokenization artifacts—and they set the budget you should emulate during red-team exercises.

We also must specify auxiliary-data assumptions because LLM-powered deanonymization depends heavily on external ground truth. For scaling attacks, assume attackers can scrape social media, public registries, corporate directories, and common-data brokers; they will assemble candidate profiles and then use embedding similarity and contextual cues to rank matches. In a test harness you can model this by seeding a candidate pool, computing embeddings for both queries and candidates, and measuring how often the true identity rises in the ranked list under various noise and redaction strategies. This models the realistic chaining step—embeddings plus scraped data—that converts an LLM’s high-confidence guess into confirmed re-identification.

Clarify what we include and exclude in scope to avoid false generalizations. Our threat model targets production-facing components: deployed LLMs exposed via APIs, fine-tuned internal models that accept user text, and embedding indices used for retrieval-augmented generation—these are the high-risk primitives where deanonymization utility is highest. We explicitly exclude specialized hardware attacks and nation-state zero-day exploits because their resources and vectors differ substantially; instead we assume attackers use commodity compute, accessible auxiliary datasets, and automated probing. Under these assumptions, defenses such as strict access controls, rate limiting, monitoring for probing patterns, token-level redaction, and differentially private training can be systematically evaluated against the modeled adversary.

Finally, make the model operational by prescribing measurable tests and escalation rules. Run automated probing campaigns constrained by real-world budgets, compute top-k identification rates using embeddings and LLM outputs, and flag any configuration where attacker success exceeds your business-acceptable threshold. When tests show high re-identification rates, escalate to mitigation experiments: tighten API scopes, add noise to embeddings, or retrain with privacy-preserving mechanisms, and then re-run the same quantitative checks. By making these assumptions explicit and repeatable, we move from abstract fear of deanonymization to an empirical program that balances privacy, utility, and operational cost—setting the stage for quantifying attacker cost and demonstrating concrete proof-of-concept attacks in the following sections.

ESRC Pipeline Explained

Building on this foundation, think of the attacker workflow as a repeatable, four-stage pipeline that turns noisy text and metadata into a verifiable identity—this is the ESRC pipeline in practice and it sits at the heart of modern deanonymization threats to LLM privacy. The pipeline front-loads extraction of weak signals, transforms those signals into dense representations, searches large candidate pools using vector similarity, and then confirms high-confidence matches by chaining to external ground truth. Because each stage compounds information, small design choices early in the flow (what you log, how you embed, or what you index) have outsized effects on re-identification risk downstream. We’ll unpack each stage technically and show where to instrument, test, and mitigate risk in production systems that serve user-provided content or expose embedding indices.

The first stage is signal extraction: parse and normalize every piece of attacker-accessible input and side-channel data. In extraction you collect free-text fragments, metadata (timestamps, locale, client hints), telemetry (latency, token counts), and any partially redacted fields; attackers often automate parsers that recover structured cues from noisy logs. For example, a chat transcript with removed names still contains timeline patterns, unique entity co-occurrences, and phraseology that can be turned into candidate queries. When you design audits, capture which signals are persistently available to external callers and treat them as part of the public attack surface rather than internal noise.

The second stage is embedding and representation: convert extracted signals into vectors optimized for similarity search. Attackers either compute embeddings using the exposed API or recreate vectors locally if the model is available; they then index those vectors into approximate nearest neighbor stores for sub-second lookup. The choice of embedding model, dimensionality, and normalization critically affects distinguishing power—higher-dimensional, semantically rich embeddings increase recall but also amplify deanonymization. In practice you’ll see attackers compute cosine similarity and tune thresholds empirically; for example, a quick Python similarity check looks like:

import numpy as np

cos_sim = lambda a,b: np.dot(a,b) / (np.linalg.norm(a)*np.linalg.norm(b))

score = cos_sim(query_vec, candidate_vec)

The third stage is scoring and ranking: merge vector similarity with auxiliary signals to produce a ranked candidate list. How do you measure confidence in a candidate match? Attackers typically compute a composite score that blends cosine similarity, metadata overlap (shared locations, timestamps), and language-model output confidence to produce a posterior-like ranking. Operational metrics that matter here are top-k recall and precision at fixed false-positive rates; during red-team runs we emulate attacker budgets and measure how often the true identity appears in the top-1 or top-10 under different noise or redaction strategies. Designing thresholds—e.g., require cos_sim > 0.92 and at least two independent metadata matches—turns probabilistic guesses into actionable escalations.

The final stage is confirmation: convert a high-ranked candidate into an externally verifiable identity. Confirmation typically uses active probing (crafted prompts to elicit unique facts), scraping public profiles, or cross-referencing corporate directories and known registries. This stage is where an LLM’s high-confidence pattern-matching becomes a confirmed re-identification; without it, a top-k guess is a risk but not a breach. Confirmatory checks increase attacker cost but are often automated, so minimizing the signal available for confirmation (by restricting embeddings, adding calibrated noise, or reducing metadata leakage) directly reduces successful confirmations.

Thinking about the ESRC pipeline end-to-end clarifies where defenses most effectively break the chain: prevent signal extraction by stricter redaction and telemetry policies, reduce embedding fidelity with clipped or noisy vectors, limit fast probing via rate limits and anomaly detection on similarity queries, and raise confirmation cost by limiting auxiliary data exposure. By instrumenting each pipeline stage with measurable KPIs—signal surface size, embedding entropy, top-k identification rate, and confirmation success—you can run repeatable tests that map mitigations to reductions in deanonymization risk.

As we move into reproducible proof-of-concept attacks and attacker cost modeling, we’ll reuse this ESRC framing to design experiments that isolate each stage’s contribution to re-identification. That lets us compare concrete mitigations under the same adversary model and quantify the trade-offs between utility and LLM privacy.

Attack Demonstration Walkthrough

Building on this foundation, let’s walk through a realistic, repeatable attack demonstration that shows how deanonymization escalates from noisy text to verified identity when an LLM and an embedding index are available. Start with a clear research question: can an attacker recover a real identity from a partially redacted chat transcript using only public scraping and API-level probing? This framing focuses our red-team budget and gives us measurable success criteria—top-k recall and confirmation rate—so the experiment has operational meaning rather than being a theoretical exercise.

Begin by constructing the test harness to emulate the attacker tiers we defined earlier. Seed a candidate pool of public profiles scraped from social media and corporate directories; these are the auxiliary datasets an adversary would use for chaining. Instrument the target system to log exactly which signals an external caller can observe—response text, returned embedding vectors or similarities, token counts, and latency. These observable signals become the inputs to the extraction stage of our ESRC pipeline: you’ll treat telemetry and partial text as first-class attacker surface rather than incidental noise.

Next, automate the extraction-to-embedding flow and index the candidate pool into an embedding index for fast lookups. Convert each extracted fragment (phrases, timestamps, entity co-occurrences) into vectors using the same embedding model the service exposes, then run approximate nearest neighbor queries against the index to rank candidates. For a quick sanity check compute cosine similarity as follows: cos_sim = dot(q,v) / (||q|| * ||v||). Tuning query batching, prompt templates, and input normalization materially changes recall; in practice you’ll run multiple prompt variants and aggregate similarity scores to boost signal-to-noise.

After ranking, fuse auxiliary metadata to produce a composite confidence score and measure identification metrics. Combine cosine similarity with metadata overlap (shared city, job title, or uncommon entity co-occurrence) to compute a posterior-like score and report top-1 and top-10 recall under controlled false-positive thresholds. For practical red-team thresholds we often treat cos_sim > 0.9 as high-signal and require at least one independent metadata match before escalation; you should record precision at fixed false-positive rates so you can compare mitigations quantitatively rather than eyeballing results.

The confirmation stage converts a ranked guess into verifiable re-identification. Use active probing prompts that ask for corroborating details—time-anchored facts or unique phrasings—that an attacker can cross-check against the scraped profiles. For example, a crafted follow-up prompt might elicit an anecdote or nickname that appears verbatim on a public post. Automate confirmation queries and then validate matches by programmatically scraping the candidate profile for the corroborating string; this is the step that turns an LLM’s high-confidence match into a real privacy breach and where attacker cost becomes concrete.

Throughout the experiment collect KPIs that map directly to mitigation effectiveness: signal surface size (count of observable features), embedding entropy (variance in candidate scores), top-k identification rate, and confirmation success per probe cost. Run ablation studies by removing signals (drop timestamps, redact locations), by adding calibrated noise to embeddings, and by throttling similarity queries to model rate limits. These controlled experiments show which defenses reduce confirmed re-identification most per unit of utility lost, letting you prioritize mitigations with measurable privacy-utility trade-offs.

Taking this concept further, the next section will quantify attacker cost and compare mitigations under identical attack workloads so we can choose defenses that are both practical and provably effective. By reproducing the full extraction→embedding→search→confirm loop in a test environment you turn abstract deanonymization risks into engineering metrics you can iterate against, validate, and monitor in production.

Defense Strategies and Limitations

Deanonymization driven by large language models turns small, innocuous signals into reliable identity cues, so the defenses you deploy must break the attacker’s ESRC pipeline at multiple points rather than rely on a single silver bullet. Start by treating every externally observable artifact—returned text, embeddings, metadata, and telemetry—as part of your public attack surface and design mitigations that either remove, degrade, or rate-limit those signals. In practice this means layering controls: access and authentication at the perimeter, algorithmic noise or clipping inside the model/embedding layer, and monitoring plus canary tests that detect probing patterns before confirmation occurs.

Preventing signal extraction is the lowest-cost and highest-return move when you can change surface area quickly. Enforce strict input/output redaction rules in server code, remove unnecessary metadata from API responses (timestamps, client hints), and avoid echoing user-provided content verbatim in model replies. For retrieval systems, never serve raw embedding vectors to clients; perform nearest-neighbor lookups server-side and return opaque IDs or ranked, sanitized text that minimizes verbatim overlap with sensitive fragments. These changes reduce the number of weak signals an attacker can harvest without significantly altering model utility for common tasks.

Where attackers rely on embeddings for similarity search, lower embedding fidelity rather than eliminating retrieval entirely. Techniques that work in practice include vector clipping (limit norm), dimensionality reduction, quantization, and calibrated additive noise to vectors before indexing. Differential privacy during training or DP-style noise applied to embeddings can bound information leakage; differential privacy (DP) here refers to algorithmic guarantees that a single user’s data has limited influence on outputs. However, you must tune these mechanisms against utility: adding Gaussian noise or reducing dimensions may dramatically reduce top-k recall for legitimate queries, so we recommend iterative red-team testing to find the practical privacy-utility frontier.

How do you decide which defenses to prioritize? Use threat-driven KPIs: measure top-k identification rate, confirmation success per probe cost, and signal-surface size in a controlled lab. Prioritize access controls and rate limiting for external APIs first—requiring API keys, enforcing per-key quotas, and applying anomaly detection on query vectors and prompt patterns buy you time and significantly raise attacker cost. Next, instrument embedding indices and apply server-side similarity thresholds rather than returning raw similarity scores; these make large-scale automated confirmation workflows brittle and more expensive for attackers.

There are operational and attacker-adaptation limits you must accept. Any mechanism that reduces signal fidelity will also reduce legitimate utility—users expect accurate retrieval and personalization, so tuning is an engineering trade-off. Adaptive adversaries can work around coarse defenses by aggregating many low-signal probes, using auxiliary datasets to amplify weak matches, or recruiting insiders who can access higher-fidelity artifacts. Side channels—response latency, token counts, or error messages—are hard to fully close and often require deep instrumentation and continuous hardening.

Higher-assurance defenses exist but come with substantial complexity and cost. Techniques like secure multi-party computation, homomorphic encryption, or running retrieval inside hardware-backed enclaves can prevent vector exfiltration, but they introduce latency, operational burden, and scaling challenges for large embedding indices. Differentially private model training (e.g., DP-SGD) can limit long-term memorization, yet it typically demands more data and tuning and can degrade downstream fine-tuned model quality. Weigh these costs against your threat model: for consumer-facing chat APIs, strict rate limits and embedding noise may suffice; for sensitive internal models, consider stronger cryptographic or DP investments.

Finally, embed defenses in a measurement loop rather than as one-off changes. Regular red-team exercises that emulate the attacker tiers we defined earlier, canary profiles seeded into indices to detect probing, and automated KPIs that track confirmation rates will show whether defenses regress as attackers adapt. As we move to quantify attacker cost and compare mitigation effectiveness, these operational measurements let you choose defenses that reduce confirmed deanonymization per unit of utility lost—turning abstract privacy risk into actionable engineering trade-offs.

Operational Mitigations and Best Practices

Building on this foundation, the highest-leverage operational moves are the ones that shrink what an attacker can observe and raise the cost of converting noisy signals into confirmed deanonymization. Start by treating every externally observable artifact—returned text, embedding outputs, telemetry, and API error messages—as part of your threat surface and assume attackers will try to stitch those artifacts to auxiliary datasets. We must therefore prioritize controls that reduce signal surface area while keeping retrieval utility intact; prioritization depends on where you expose embeddings and how critical high-fidelity retrieval is to your product.

The first operational layer is strict perimeter and request-level controls that slow or stop automated probing early. Require authenticated API keys with per-key quotas and token-bucket rate limiting, enforce per-IP and per-identity throttles, and scope keys to minimal capabilities (read-only vs. retrieval vs. admin). For example, implement short-lived keys for public clients and rotate them automatically; instrument quota violations as high-severity events so you can triage suspected probing quickly. These measures don’t remove signals, but they substantially raise attacker cost and are inexpensive to roll out.

Next, prevent signal extraction by sanitizing inputs and outputs and by never exposing raw vectors to clients. Avoid echoing user-provided sensitive fragments verbatim in model responses, strip or hash unstable metadata in API replies (precise timestamps, client hints), and keep logs that stream to public-facing services intentionally redacted. For retrieval, perform nearest-neighbor lookups on the server and return opaque document IDs or context-limited snippets rather than raw embedding vectors or similarity scores. On the server side, apply calibrated transformations to vectors before indexing or returning them; for example, clip and add small Gaussian noise:

# server-side vector hardening (illustrative)

vec = vec / max(1.0, np.linalg.norm(vec) / 5.0) # L2 clip to norm=5

vec = vec + np.random.normal(0, 0.01, size=vec.shape) # additive noise

Tuning embedding fidelity is a practical lever for reducing re-identification power while preserving utility for legitimate retrieval. Reduce dimensionality for lower-risk indices, use quantization to reduce representational precision, and consider batch-wise or index-level noise where appropriate. Apply vector clipping (limit L2 norm), controlled additive noise, and, when possible, dimensionality reduction from high-dimensional models (e.g., 1536→384) for public-facing indices. Each change requires empirical validation: run top-k recall and precision-at-fixed-false-positive benchmarks against a seeded auxiliary pool to measure utility loss per mitigation.

How do you detect probing before confirmation occurs? Instrument canaries and monitoring that map directly to the ESRC pipeline metrics we discussed earlier. Seed honey profiles into your embedding index, watch for repeated high-similarity lookups against those canaries, and track patterns like high query variability, short inter-query intervals, or repeated low-signal aggregation attempts. Maintain KPIs—signal-surface size, embedding entropy, top-k identification rate, and confirmation success per cost—and alert when any metric trends upward relative to baseline.

Hardening internal models and developer tooling reduces insider and credentialed adversary risk. Apply strict RBAC for embedding creation and index management, limit access to embedding export endpoints, require VPC-only or private-network access for high-fidelity indices, and minimize telemetry that developers can query. For particularly sensitive datasets, schedule retraining workflows inside isolated environments and consider differentially private training (DP-SGD) for models that will be widely accessible; treat DP as a long-term control, not a quick toggle.

Finally, bake defenses into a measurement loop so mitigations evolve with attacker adaptation. Run periodic red-team experiments that emulate the attacker tiers we defined, perform ablation testing of signals removed versus noise added, and gate any rollout of relaxed retrieval fidelity behind measurable decreases in top-k identification and confirmation rates. By instrumenting, testing, and iterating, we turn abstract LLM privacy risk and deanonymization threats into quantifiable engineering trade-offs you can manage over time—and prepare the organization to choose the next set of defenses based on measured reductions in confirmed re-identification rather than intuition.