Background: OpenAI Leadership Turmoil

Building on the broader discussion of organizational risk and product stewardship, the episode that convulsed OpenAI in November 2023 crystallized tensions around leadership, governance, and the pace of AI development. You likely remember the headline: the board removed the CEO on a Friday, staff and investors revolted, and within days a deal was struck to return him alongside a promised governance review. That rapid sequence of removal, mass staff unrest, and reinstatement exposed how governance architecture can become a single point of failure for mission-critical AI organizations. (washingtonpost.com)

The core disagreement driving the crisis centered on how to reconcile aggressive product strategy with oversight obligations. Board members alleged failures of candor and insufficient transparency; executives and many researchers pushed back, arguing that commercial partnerships and product timelines were essential to fund compute-intensive research. This clash is not unique to OpenAI: it’s the archetypal governance trade-off between rapid model iteration for practical deployments and conservative controls intended to mitigate systemic risk. For teams building models, that trade-off should shape your design of reporting lines, release gates, and escalation paths. (bloomberg.com)

Employees and investors played an outsized role in resolving the crisis, demonstrating how stakeholder alignment (or misalignment) can determine organizational outcomes. Within days of the ouster, hundreds of staff signaled willingness to resign en masse and major investors, including a strategic partner, weighed in publicly; that pressure materially changed the board’s calculus. The episode showed that when you build mission-driven engineering teams, their collective response to governance moves can act as a de facto check on leadership decisions. If you’re designing governance for an AI project, ask: how would your teams and external partners respond if leadership decisions suddenly threatened continuity? (theguardian.com)

An independent review later concluded the firing stemmed from a breakdown in trust rather than evidence of product safety failures, and the company reconstituted its board while adopting new governance measures. Following the WilmerHale-led investigation, the appointment of new directors and the accompanying measures were intended to shore up conflict-of-interest policies, create whistleblower channels, and clarify oversight responsibilities. These are concrete fixes that you can map to standard governance controls in technical organizations. We can treat those remedies as a pragmatic template: independent review, refreshed board composition, explicit governance guidelines, and transparent reporting lines are the building blocks that reduce single-point governance risk. (openai.com)

This episode has practical implications for how you structure accountability in AI development. It underscores why you should codify decision authority for model release criteria, incident response, third-party partnerships, and executive-level reporting, each with documented signoffs and audit trails. The pattern this suggests is to combine technical release checks (safety evaluation, red-team results, metrics thresholds) with governance signoffs, blending engineering gates with board- or executive-level checkpoints rather than relying on informal trust. (bloomberg.com)

As we move into the next section, keep this operational lesson in mind: governance failures aren’t only reputational hazards — they can stall product roadmaps, jeopardize partnerships, and trigger regulatory scrutiny. We’ll next examine how different governance models (nonprofit oversight, hybrid corporate structures, and investor influence) distribute risk and authority, and what that means for the teams responsible for building safe, reliable AI.

Governance Structure and Board Roles

Building on this foundation, the practical center of accountability in any mission-driven AI organization is how you define governance, who sits on the board, and what those board roles actually do day-to-day. We need governance that translates safety and product-risk objectives into concrete decision rights rather than vague exhortations. If you treat the board as a passive overseer, you create a single-point failure; if you overload it with operational detail, you slow innovation. The sweet spot is a governance structure that assigns clear, auditable authorities for model releases, external partnerships, and emergency interventions while preserving executive agility.

Start with intentional composition: the board should mix independent directors, technical expertise, and investor representation in fixed proportions so role incentives are balanced. Independent directors reduce conflicts of interest; directors with deep technical literacy (someone who can read a red-team report and interrogate evaluation metrics) reduce information asymmetry; investor and founder representation preserve strategic continuity. Define term limits, observer seats, and nomination processes in the charter so you don’t rebuild composition reactively during a crisis. These choices in board composition directly shape how robustly the organization can exercise oversight under stress.

Concrete committee architecture turns broad responsibilities into executable workflows: create standing committees for safety and risk, audit and compliance, and conflicts-of-interest, each with a written charter and KPIs. The safety committee’s remit should include approving release criteria, holding periodic tabletop incident responses, and commissioning independent reviews when thresholds are breached. The audit committee enforces transparency—verifying audit trails, whistleblower channels, and vendor due diligence. By codifying committee charters and routings, you make escalation predictable rather than ad hoc.

Operationalize oversight by mapping technical gates to governance signoffs and automated audit trails so decisions are reproducible. How do you map technical release gates to board-level oversight? For example, implement a release pipeline where engineering gates (unit tests, adversarial evaluation, latency and fairness metrics) feed a release report; an appointed safety director then issues a signoff token, and only major releases additionally require board committee approval before production rollout. A short pseudocode sketch illustrates the pattern:

    if engineering_checks.passed() and safety_report.risk_score < threshold:
        safety_director.signoff()
        if release_type == "major":
            board_committee.approve()
        deploy()
    else:
        block_release()

That pattern preserves velocity for routine updates while ensuring board-level review is triggered only for high-impact changes. It also creates the audit trail auditors and regulators will expect, reducing ambiguity about who authorized what and when.
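To make the audit-trail point concrete, here is a minimal runnable sketch of the same gate in Python. The class names (`ReleaseRequest`, `ReleaseGate`), the status strings, and the log fields are illustrative assumptions, not part of any real pipeline, and the safety director's signoff is recorded automatically here even though in practice it would be a human decision.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ReleaseRequest:
    name: str
    release_type: str   # "minor" or "major" (hypothetical labels)
    checks_passed: bool  # outcome of the engineering gates
    risk_score: float    # score taken from the safety report


@dataclass
class ReleaseGate:
    risk_threshold: float
    audit_log: list = field(default_factory=list)

    def _record(self, release: str, actor: str, action: str) -> None:
        # Append-only record of who did what, and when.
        self.audit_log.append({
            "release": release,
            "actor": actor,
            "action": action,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def evaluate(self, req: ReleaseRequest, board_approved: bool = False) -> str:
        # Engineering checks and the risk score gate everything else.
        if not (req.checks_passed and req.risk_score < self.risk_threshold):
            self._record(req.name, "pipeline", "blocked")
            return "blocked"
        self._record(req.name, "safety_director", "signoff")
        # Only major releases need the board committee.
        if req.release_type == "major":
            if not board_approved:
                self._record(req.name, "board_committee", "approval_pending")
                return "awaiting_board"
            self._record(req.name, "board_committee", "approved")
        self._record(req.name, "pipeline", "deployed")
        return "deployed"
```

Calling `gate.evaluate(...)` for a minor release yields a signoff entry followed by a deployment entry in `gate.audit_log`, which is the kind of ordered record an auditor could replay.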

You must also harden conflict-management and transparency mechanisms so governance isn’t undermined by private incentives. Require regular disclosures of material relationships, create an independent whistleblower path with guaranteed protections, and mandate external reviews for disputes that implicate safety or candor. These controls complement board roles by providing signals the board can act on without relying solely on executive self-reporting. When trust breaks down, pre-agreed independent review processes shorten resolution time and reduce operational shock.

Finally, delineate escalation paths and emergency authorities so the organization can respond without board paralysis. Specify delegated powers for the CEO and an executive safety officer, define what constitutes an “emergency stop,” and require rapid but documented consultation with an emergency board panel when thresholds are crossed. As we discussed earlier, staff and investor responses can materially alter outcomes; by clarifying these board roles and governance mechanics in advance, you align incentives and reduce the risk that governance disputes will derail product roadmaps or public trust. The next section turns to the stakeholders whose incentives these mechanics have to align.

Key Stakeholders and Incentives

Building on this foundation, the practical question teams face isn’t abstract: who benefits when a risky model ships, and who bears the cost if it fails? Stakeholder incentives and safety should be front-loaded in your governance thinking from day one, because misaligned incentives create brittle decision-making. What incentives drive executives, researchers, investors, and the board when product velocity collides with systemic risk? Framing that question helps you design concrete controls rather than rely on goodwill or ad hoc crises to realign behavior.

Start by mapping the principal actors and their primary incentives so decision rights are rooted in reality. The board typically prizes organizational continuity, reputational downside mitigation, and fiduciary returns; executives balance strategic growth, partnerships, and day-to-day delivery; engineers and researchers focus on technical progress, publication, and intellectual integrity; investors push for runway extension and measurable ROI; regulators and the public demand accountability and safety. When you name these incentives explicitly you can design bridges—reporting lines, KPIs, and veto authorities—that translate those motives into operational rules rather than whispered expectations.

Misalignment shows up in specific failure modes and we saw a real-world example of this tension earlier in the post. When funding and partnership deadlines reward rapid releases, product teams can deprioritize rigorous adversarial testing or comprehensive red-team reports; conversely, a board oriented toward risk avoidance can stall necessary deployments until external pressures force unpredictable escalations. That conflict isn’t theoretical: it manifests as stalled roadmaps, rushed mitigations, or governance shocks that interrupt operations. Recognize where incentives pull in opposite directions and codify which axis—safety or velocity—takes precedence under which measurable conditions.

Employees and investors are not passive; they are stakeholders with real leverage, and their reactions can reset governance in a matter of days. Staff retention, public statements, and investor actions create practical constraints a board cannot ignore, so make those channels part of your governance fabric rather than emergent threats. Operationalize this by predefining whistleblower protections, employee escalation pathways, and investor notification thresholds that trigger independent reviews. Those mechanisms lower the probability of surprise reactions and convert latent pressure into structured, auditable signals the board can act on without losing credibility.

Designing incentives in practice means using contractual and technical levers together. Tie portions of executive compensation or equity vesting to safety KPIs—metrics like adversarial robustness, measurable reduction in high-confidence hallucinations, and red-team closure rates—so financial incentives reinforce responsible releases. Create a designated safety officer with a limited, documented veto token in the release pipeline and require periodic external audits that are contractually triggered by threshold breaches. Use escrowed partnership milestones that release funding only after documented safety signoffs; these align commercial partners with safety objectives and preserve runway without sacrificing oversight.

For teams implementing governance, the immediate task is pragmatic: map each stakeholder to decision rights, run tabletop scenarios that stress-test incentive conflicts, and instrument dashboards that surface misalignment early. Define clear escalation paths that specify who can pause a release, which metrics unlock board review, and how independent reviews are commissioned and published. By converting stakeholder incentives into auditable governance mechanics, we reduce the chance that personality, panic, or short-term returns determine outcomes—and we preserve the technical team’s ability to deliver safe, reliable AI as the organization scales.

Safety vs. Commercial Strategy Tension

Building on this foundation, the hardest practical question you face is how to reconcile safety with an aggressive commercial strategy without letting either axis permanently derail the product roadmap. When compute costs, partner deadlines, and market windows push for fast model releases, safety and governance obligations can feel like opposing forces rather than complementary controls. How do you decide when commercial urgency should override additional safety testing, and what measurables let you do that responsibly? We’ll walk through a decision-forward approach that keeps product velocity while preserving auditability and systemic risk controls.

The tension exists because incentives pull in different directions: product teams and investors often prize time-to-market and revenue milestones, while risk owners and boards are focused on systemic harms and long-term reputation. Systemic risk, in this context, means failure modes that cascade beyond a single customer—misinformation at scale, adversarial misuse, or model behaviors that interact with infrastructure in unpredictable ways. Risk appetite is therefore not a single knob; it’s a vector you must quantify across categories (safety, privacy, compliance, robustness). Defining those axes early gives you objective thresholds to reference instead of ad hoc judgments when deadlines loom.

Operationally, treat release decisions as a layered control problem rather than a binary safety gate. First, classify changes by impact: minor tuning, feature flag flips, partner-facing API launches, or major architecture upgrades. For partner launches, use graduated rollouts: internal canaries → partner sandbox → limited-production cohort → wide production. Couple that with contractual mechanics—conditional milestone payments or escrowed funds released after documented safety signoffs—so commercial strategy is explicitly tied to demonstrable safety metrics instead of informal assurances.
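The graduated rollout above can be sketched as a small state machine. This is an illustration under stated assumptions: the `Stage` names mirror the four cohorts in the text, while the rule of stepping back one stage on unhealthy metrics is a hypothetical policy choice, not something the rollout sequence itself dictates.

```python
from enum import IntEnum


class Stage(IntEnum):
    INTERNAL_CANARY = 0
    PARTNER_SANDBOX = 1
    LIMITED_PRODUCTION = 2
    WIDE_PRODUCTION = 3


def next_stage(current: Stage, safety_signoff: bool, metrics_healthy: bool) -> Stage:
    """Advance one stage only with a signoff and healthy metrics.

    Unhealthy metrics move the rollout back one stage (assumed policy);
    a missing signoff simply holds the rollout where it is.
    """
    if not metrics_healthy:
        return Stage(max(current - 1, Stage.INTERNAL_CANARY))
    if safety_signoff and current < Stage.WIDE_PRODUCTION:
        return Stage(current + 1)
    return current
```

The same signoff flag could also drive the contractual side, for example by releasing an escrowed milestone payment only when the rollout reaches an agreed stage.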

On the technical side, implement contingency engineering patterns that let you maintain velocity while bounding risk. Instrument fine-grained telemetry and real-time detectors for hallucination rate, distributional drift, and policy-violation signals; enforce runtime capability ceilings and per-tenant rate limits; and deploy fast rollback and kill-switch mechanisms. For example, codify a release policy as metadata alongside the build: release: {cohort: 1000, throttle: 10rps, telemetry_thresholds: {high_confidence_hallucinations: 0.02}}. Those programmatic knobs let you automate pauses when safety metrics exceed thresholds and provide the audit trail governance will expect.
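As a rough sketch of how such metadata could drive an automated pause, the snippet below compares observed telemetry against the thresholds in a policy dictionary. The key names follow the example above, except `throttle_rps`, which is a renamed, hypothetical field; treating a missing metric as a breach is also an assumption.

```python
RELEASE_POLICY = {
    "cohort": 1000,
    "throttle_rps": 10,
    "telemetry_thresholds": {"high_confidence_hallucinations": 0.02},
}


def breached_thresholds(policy: dict, telemetry: dict) -> list[str]:
    """Return the metric names whose observed value exceeds the policy limit."""
    breaches = []
    for metric, limit in policy["telemetry_thresholds"].items():
        observed = telemetry.get(metric)
        # A missing metric counts as a breach: no data is not evidence of safety.
        if observed is None or observed > limit:
            breaches.append(metric)
    return breaches


def should_pause(policy: dict, telemetry: dict) -> bool:
    """True when at least one threshold is breached and the release should pause."""
    return bool(breached_thresholds(policy, telemetry))
```

Returning the list of breached metrics, not just a boolean, gives the audit trail a record of why a pause was triggered.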

Decision discipline matters: require three converging signals before accelerating a risky release—engineering checks (adversarial tests, unit/regression suites), operational readiness (monitoring, throttles, incident playbooks), and a documented governance signoff tied to measurable KPIs. If any one signal is weak, we constrain the launch to a narrower cohort and increase monitoring frequency rather than cancel the roadmap outright. That approach answers the practical question of trade-offs by giving you a predictable escalation path: if telemetry breaches hard thresholds, an on-call safety officer triggers rollback; if metrics are within soft limits, expand access incrementally while preserving escrowed contractual protections.
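The decision rule in this paragraph is simple enough to write down directly. The sketch below is one possible encoding; the function names and return strings are hypothetical, and the "hold" outcome for values between the soft and hard limits is an assumption the text leaves open.

```python
def launch_decision(engineering_ok: bool, operations_ok: bool, governance_ok: bool) -> str:
    """Proceed only when all three signals converge; otherwise constrain, not cancel."""
    if all((engineering_ok, operations_ok, governance_ok)):
        return "proceed"
    return "narrow_cohort_and_increase_monitoring"


def telemetry_action(value: float, soft_limit: float, hard_limit: float) -> str:
    """Map a telemetry reading to an escalation step."""
    if value >= hard_limit:
        return "rollback"  # on-call safety officer triggers rollback
    if value <= soft_limit:
        return "expand"    # within soft limits: widen access incrementally
    return "hold"          # assumed behavior between the two limits
```

Keeping the two functions separate reflects the split in the text: one decision is made before launch, the other is evaluated continuously afterwards.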

This pattern preserves commercial strategy without surrendering safety or governance: you keep partner momentum via staged access and conditional funding while the board and safety officers retain clear, auditable authorities to pause, require independent review, or demand remediation. As we move into governance architecture, we’ll map these operational controls back to board committees, delegated emergency authorities, and the legal forms that make those powers enforceable—because aligning incentives on paper and in code is the only way to avoid repeating governance shocks.

Policy Reforms and Oversight Options

Policy reforms and oversight options are the levers that convert governance intent into reproducible organizational behavior; if you get these wrong, a single leadership dispute can cascade into product, legal, and reputational crises. Building on this foundation, we should front-load policy reforms, oversight, and release criteria into the development lifecycle so they’re discoverable and enforceable rather than aspirational. Start by treating safety KPIs, transparency requirements, and signoff authorities as product metadata that travels with every build and every partner contract. That approach makes governance an operational artifact rather than a debate you have under stress.

Internally, the most effective policy reforms are those you can automate and audit. Require mandatory, machine-readable release criteria that enumerate required tests (adversarial, fairness, robustness), telemetry readiness, and a named safety officer with a time-limited veto token; pair that with a protected whistleblower channel and routine independent reviews to reduce single points of failure. Align incentives by tying a portion of executive and research compensation to measurable safety outcomes—closure rates on red-team findings, reproducible decreases in high-confidence hallucinations, and third-party audit results—so financial drivers reinforce safe behavior. By codifying these choices, you turn ambiguous expectations into objective conditions that the whole organization understands and measures.

Oversight spans a spectrum from internal committees to external audits; you should design mechanisms that balance agility with independent verification. How do you design oversight that preserves innovation while enforcing accountability? Use a tiered model: lightweight governance for routine, low-impact changes; mandatory independent review for high-impact releases; and publicly documented audit summaries for anything with systemic risk. External oversight options include accredited third-party audits, rotating technical reviewers drawn from academia or trusted industry partners, and formalized incident-reporting protocols that provide regulators and partners with clear, time-bound responses.
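One way to keep the tiered model unambiguous is to express it as data. In the sketch below the tier names and review labels are hypothetical, and making the tiers cumulative (each tier includes the requirements of the one below it) is an assumption, not something the model requires.

```python
OVERSIGHT_TIERS = {
    "routine": ["internal_review"],
    "high_impact": ["internal_review", "independent_review"],
    "systemic": ["internal_review", "independent_review", "public_audit_summary"],
}


def required_oversight(risk_class: str) -> list[str]:
    """Look up the reviews a change needs; unknown classes get the strictest tier."""
    return OVERSIGHT_TIERS.get(risk_class, OVERSIGHT_TIERS["systemic"])
```

Defaulting unknown risk classes to the strictest tier means a mislabeled change fails toward more oversight, not less.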

Regulatory tools and policy levers outside the company matter as well and shape what you must implement internally. Consider participating in regulatory sandboxes where you can test models under supervised conditions while collecting empirical safety data that informs both policy and product design. Pursue voluntary certification programs or standards (third-party safety stamps) where available to create interoperability of trust with partners and customers. Finally, negotiate contractual mechanics—escrowed milestones, conditional payments, and liability clauses—that align commercial incentives with the safety review cadence you’ve specified.

Enforce policy through technical controls: policy-as-code, immutable audit logs, and cryptographic signing for signoffs make oversight verifiable. Embed release metadata into builds so that every deployment includes a machine-readable policy object; for example:

{"policy": {"risk_class": "high", "required_signoffs": ["safety_director", "audit_committee"], "telemetry_thresholds": {"hallucination_rate": 0.02}, "review_expiry": "2026-08-01"}}

This metadata lets CI pipelines, runtime governance agents, and external auditors automatically validate compliance before deployment and continuously monitor behavior in production. Use tamper-evident logs and periodically rotate independent validators to prevent captured oversight.
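A CI check over that policy object might look like the sketch below, which uses only the Python standard library. It is illustrative: the `sign` and `validate` helpers, the `build_id` argument, and the shared-secret HMAC scheme are all assumptions, and a production system would more likely use asymmetric signatures and managed keys.

```python
import hashlib
import hmac
import json
from datetime import date

POLICY_JSON = (
    '{"policy": {"risk_class": "high", '
    '"required_signoffs": ["safety_director", "audit_committee"], '
    '"telemetry_thresholds": {"hallucination_rate": 0.02}, '
    '"review_expiry": "2026-08-01"}}'
)


def sign(role: str, build_id: str, key: bytes) -> str:
    """HMAC-SHA256 over the role and build id (illustrative signing scheme)."""
    message = f"{role}:{build_id}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def validate(policy_json: str, build_id: str, signoffs: dict,
             keys: dict, today: date) -> list[str]:
    """Return compliance problems; an empty list means the build may deploy."""
    policy = json.loads(policy_json)["policy"]
    problems = []
    if today > date.fromisoformat(policy["review_expiry"]):
        problems.append("review expired")
    for role in policy["required_signoffs"]:
        signature = signoffs.get(role)
        if signature is None:
            problems.append(f"missing signoff: {role}")
        # Constant-time comparison avoids leaking signature prefixes.
        elif not hmac.compare_digest(signature, sign(role, build_id, keys[role])):
            problems.append(f"invalid signoff: {role}")
    return problems
```

Binding each signature to a specific `build_id` means a signoff for one build cannot be replayed to approve another.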

Implementation is a program, not a one-off task: pilot reforms on a few product lines, run quarterly tabletop exercises that simulate leadership disputes and emergency stops, and publish sanitized audit summaries to rebuild external trust. We should treat independent reviews as recurring operations—schedule them, budget for remediation, and close the loop publicly when feasible so partners see progress. Taking these steps turns policy reforms and oversight options from theoretical checkboxes into concrete governance capabilities you can test, measure, and iterate on as the organization scales and regulatory expectations evolve.

User Impact and AI Development

Building on this foundation, the immediate question for product teams is pragmatic: how do governance failures translate into real user impact and slower AI development cycles? When governance breaks down, users feel it as reliability regressions, degraded model outputs, or sudden policy shifts that change behavior overnight — all of which erode trust and increase churn. We need to treat those user-facing symptoms as first-class signals in our development lifecycle, not as downstream PR or legal problems. Front-loading user impact into release decisions connects governance to the engineering metrics you already track and gives you concrete levers to act on before customers notice.

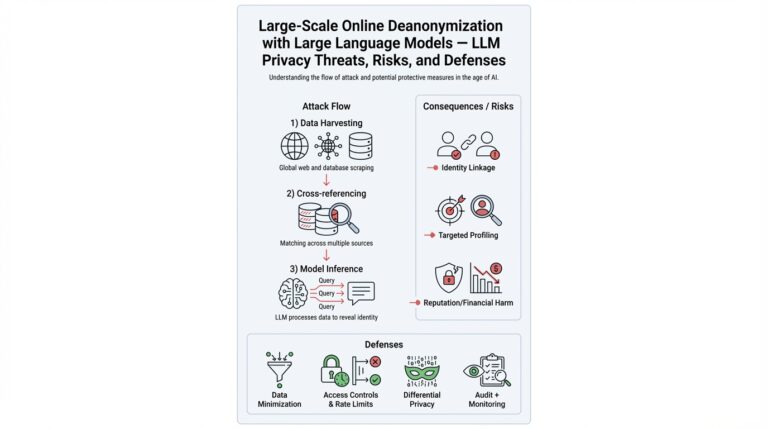

A concrete way governance lapses affect users is through availability and predictability loss. For example, an emergency executive decision that forces a rapid rollback can remove runtime safeguards or disable throttles, producing spikes in hallucinations or policy-violation errors for end users. Two terms used throughout are worth stating precisely: a hallucination is a model output that asserts false facts with high confidence, and a red-team exercise is an adversarial test designed to surface misuse or failure modes. When these controls are bypassed, downstream products (chatbots, search augmenters, customer support agents) experience higher error rates and inconsistent behavior, which complicates integration for partners and increases support load for your SRE teams.

We mitigate those user impacts by embedding operational controls into the development and deployment pipeline rather than relying on one-off managerial fixes. Use staged rollouts, canary cohorts, and per-tenant throttles so that a governance-triggered change affects a small, observable population first. Couple those rollout mechanics with runtime circuit breakers and policy-as-code enforcement that can be toggled automatically when telemetry crosses predefined thresholds. For instance, instead of cancelling a partner launch when a governance question appears, reduce the cohort size, lower the model’s temperature, or enable a stricter safety classifier at the inference layer—technical knobs that preserve service continuity while governance works through questions.
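The "technical knobs" idea can be sketched as a function that tightens a serving configuration while a governance question is open. The `ServingConfig` fields, the tenfold cohort reduction, and the 0.2 temperature cap are illustrative assumptions chosen for the example.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ServingConfig:
    cohort_size: int
    temperature: float
    strict_safety_classifier: bool


def apply_mitigations(config: ServingConfig, governance_hold: bool) -> ServingConfig:
    """Return a more conservative config during a hold; leave it untouched otherwise."""
    if not governance_hold:
        return config
    return replace(
        config,
        cohort_size=max(1, config.cohort_size // 10),    # shrink exposure tenfold
        temperature=min(config.temperature, 0.2),        # cap sampling temperature
        strict_safety_classifier=True,                   # enable stricter filtering
    )
```

Because the dataclass is frozen and `replace` returns a new object, the original configuration stays available for restoring service once the hold is lifted.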

Longer-term, governance friction reshapes AI development priorities and research incentives in measurable ways. Teams will prioritize reproducible evaluation suites, continuous robustness testing, and synthetic adversarial workloads so model improvement is defensible to non-technical stakeholders. That changes hiring, tooling, and funding: you’ll invest more in evaluation infra, in automated benchmarking against drift, and in interpretable logging that shows why a model made a decision. Those investments slow raw iteration speed but accelerate responsible innovation by making releases auditable and remediable rather than reversible only through emergency board action.

Operational metrics should therefore drive both product safety and stakeholder conversations. Track not only latency and error SLOs but also hallucination rate at varying confidence thresholds, distributional drift scores, false-positive policy flags, and mean time-to-detect (MTTD) for adversarial regressions. Map those metrics to explicit escalation paths: if hallucination_rate > X for Y minutes, safety officer auto-marks the release for rollback; if drift_score increases by Z% in 24 hours, trigger an independent review. Assign clear owners—an on-call safety officer, an incident lead, and an executive sponsor—so the metric-to-action pipeline is auditable and fast.
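The two escalation rules above can be sketched in a few lines. This assumes one telemetry sample per minute, so a window of Y samples stands in for "Y minutes"; the class and function names are hypothetical, and X, Y, and Z remain values you would choose for your own system.

```python
from collections import deque


class SustainedBreachDetector:
    """Fires when every reading in the last `window` samples exceeds `threshold`."""

    def __init__(self, threshold: float, window: int):
        self.threshold = threshold
        self.samples = deque(maxlen=window)

    def observe(self, value: float) -> bool:
        self.samples.append(value)
        full = len(self.samples) == self.samples.maxlen
        return full and all(v > self.threshold for v in self.samples)


def drift_needs_review(score_24h_ago: float, score_now: float,
                       max_increase_pct: float) -> bool:
    """True when the drift score rose by more than the allowed percentage."""
    if score_24h_ago <= 0:
        # No meaningful baseline: any positive drift warrants a look (assumed rule).
        return score_now > 0
    increase_pct = (score_now - score_24h_ago) / score_24h_ago * 100
    return increase_pct > max_increase_pct
```

Requiring a sustained breach, instead of reacting to a single sample, keeps one noisy reading from marking a release for rollback.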

As we move into governance architecture, remember this operational rule: protecting users and preserving velocity are not mutually exclusive when you build controls into the stack. By instrumenting releases with safety metadata, by using staged access and runtime mitigations, and by tying metrics to predefined governance actions, we limit user impact and keep AI development moving forward. The next section will connect these operational controls to legal forms and board authorities so you can make those protections enforceable on paper and in practice.