Why reliability matters for LLMs

When an LLM request times out in the latency tail or returns a hallucinated answer, the impact goes beyond a single annoyed user: it damages trust, increases operational cost, and creates regulatory risk. How do you ensure an LLM-based feature doesn’t become the single point of failure for your product? This section frames why reliability must be a first-class concern for LLM calls in production and what’s at stake if it isn’t.

Unreliable behavior creates three concrete problems you’ll notice quickly. First, user experience degrades: customers perceive incorrect or slow outputs as product bugs, not model artifacts, and churn increases after a few bad interactions. Second, cost and throughput suffer: repeated retries and long-running token usage inflate your bill and waste worker threads, turning intermittent model errors into sustained resource pressure. Third, system-wide fragility emerges: a surge in API errors can cascade through synchronous request paths, triggering scaledown events, SLA breaches, and manual firefights during peak traffic.

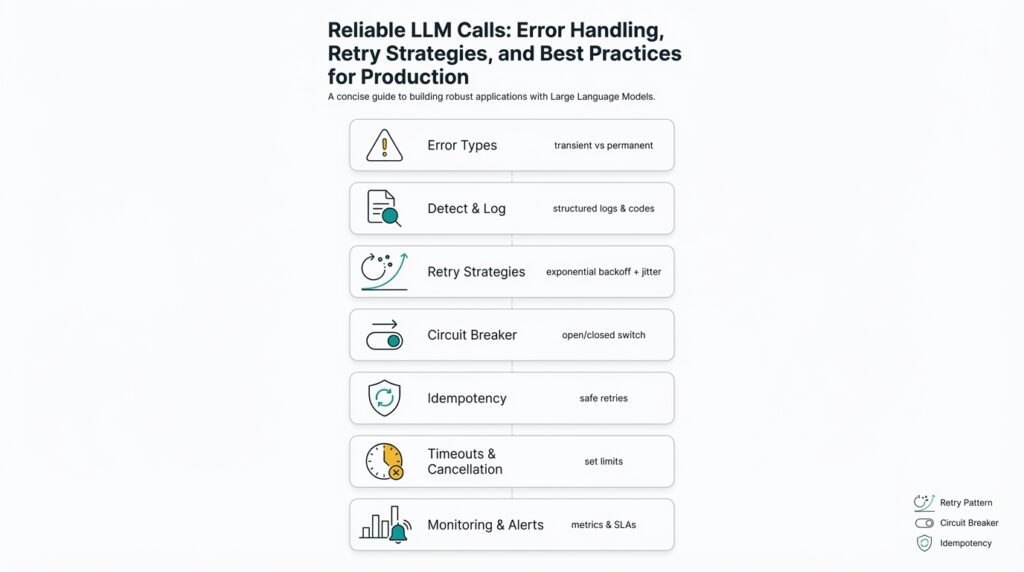

These failures are avoidable with predictable design patterns. Start with defensive input validation and deterministic prompt templates so you reduce nondeterminism at the edge. Add idempotency tokens and short client-side timeouts to prevent duplicate side effects during retries. Implement circuit breakers and rate limits around external LLM endpoints so you can fail fast when error rates cross thresholds. For example, a pragmatic retry strategy in Python-like pseudocode looks like:

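    import random
    import time

    # client, TransientError, and PermanentFailure are placeholders for your SDK's types.
    def call_with_retries(prompt, key, timeout, max_retries=3, backoff_base=0.2, max_backoff=10.0):
        for attempt in range(max_retries):
            try:
                # The same idempotency key is reused on every attempt.
                return client.call(prompt, idempotency_key=key, timeout=timeout)
            except TransientError:
                if attempt == max_retries - 1:
                    break  # out of attempts: give up without sleeping again
                delay = min(max_backoff, backoff_base * 2 ** attempt)  # bounded backoff
                time.sleep(delay + random.uniform(0, backoff_base))    # plus jitter
        raise PermanentFailure()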

That pattern couples retries with idempotency and bounded backoff, which limits wasted tokens and prevents retry storms. When the user needs an answer immediately, fall back to a cheaper local model or a cached answer; when a delayed response is acceptable, hand the request to an asynchronous queue so the user-facing path stays responsive.

Observability is the operational backbone of reliability. Track latency percentiles (p50/p95/p99), token consumption per request, error rate by error class (rate limit, timeout, server error), and downstream application success rates. Instrument prompts with trace IDs and capture compact prompt hashes in logs so you can correlate bad outputs to specific prompt shapes and model versions. Run synthetic scenarios and chaos tests that inject latency and API failures; these tests reveal brittle couplings where an LLM failure translates into business-level outages.

You’ll face trade-offs: aggressive retries reduce visible failures but raise costs and extend tail latency; conservative timeouts keep systems snappy but risk surfaced errors for transient glitches. Decide based on SLOs and cost budgets—use a higher-quality model and synchronous flow for revenue-critical paths, and cheaper models or async workflows for bulk processing. Also consider compliance and auditability: reliable request logging and consistent outputs simplify investigations when a model produces legally sensitive content.

Understanding these operational realities explains why engineering for reliability is not an optional nicety but a core product requirement. The next step is to convert these motivations into concrete error handling, retry strategies, and best practices you can apply in your call stack — we’ll translate these principles into patterns, sample code, and observable SLAs to enforce them.

Identify common failure modes

Building on this foundation, the single most important step before you design mitigations is to inventory the ways an LLM call can fail—because each failure mode demands a different error handling, retry, and observability strategy. Why does a request that worked yesterday suddenly return a 503 or a nonsense answer today? Asking that question up front focuses our debugging and informs whether we should tune retries, tighten timeouts, or add schema validation to outputs.

Failures fall into broad, actionable categories: transport and infra errors, throttling and quota failures, latency tails and timeouts, model-quality or semantic failures, and human/operational mistakes. Transient errors are short-lived and often solvable with backoff and retries; permanent errors reflect client-side problems (bad prompts, auth, malformed requests) that retries only amplify. Identifying which category an observed problem belongs to is the first step toward effective mitigation and cost control.
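The transient-versus-permanent split above can be made explicit in code. The following is a minimal sketch, assuming failures arrive as HTTP status codes; the `ErrorClass` enum and the exact code-to-class mapping are illustrative choices, not any provider's documented contract.

```python
from enum import Enum


class ErrorClass(Enum):
    TRANSIENT = "transient"   # worth retrying with backoff
    THROTTLED = "throttled"   # retry only after the delay the provider hints at
    PERMANENT = "permanent"   # retrying will not help


def classify_status(status_code: int) -> ErrorClass:
    """Map the status code of a failed response to a retry class (illustrative mapping)."""
    if status_code == 429:
        return ErrorClass.THROTTLED
    if status_code in (408, 500, 502, 503, 504):
        return ErrorClass.TRANSIENT
    # Remaining 4xx codes (400, 401, 403, 404, 422, ...) point at the request itself.
    return ErrorClass.PERMANENT
```

Keeping the classification in one function means the retry loop, the metrics labels, and the alerting rules can all share the same definition of "transient".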

Transport and infrastructure problems manifest as network timeouts, TLS handshake failures, DNS resolution errors, or upstream 5xx responses from the LLM provider. These failures typically show diagnostic signals you can instrument: increased p99 latency, spikes in connection resets, or correlated 502/503 rates across regions. In practice, a regional network flap can convert a handful of failed requests into a retry storm if clients don’t apply exponential backoff with jitter; we therefore track both raw error counts and retry amplification metrics to detect these cascades early.

Throttling and quota errors produce 429 responses or provider-specific quota codes, and they behave differently than transient 500s: they reflect policy, not chance. Distinguish soft limits (temporary rate limiting) from hard limits (exhausted monthly quota) in your logs, and honor rate-limit headers when provided. For side-effectful operations, use idempotency tokens so retries after a 429 don’t produce duplicate work, and combine client-side rate limiting with server-side circuit breakers to protect downstream systems.
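Honoring rate-limit hints usually starts with the standard HTTP `Retry-After` header, which carries either a number of seconds or an HTTP date. The helper below is a sketch under that assumption; `retry_after_seconds` is a hypothetical name, and providers may expose additional vendor-specific headers that this does not cover.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_after_seconds(header_value: Optional[str],
                        now: Optional[datetime] = None) -> Optional[float]:
    """Return the delay requested by Retry-After, or None if absent or unparseable."""
    if not header_value:
        return None
    value = header_value.strip()
    if value.isdigit():
        return float(value)          # delay-seconds form, e.g. "120"
    try:
        target = parsedate_to_datetime(value)   # HTTP-date form
    except (TypeError, ValueError):
        return None
    if target.tzinfo is None:
        target = target.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    # A date in the past means "retry now", never a negative sleep.
    return max(0.0, (target - now).total_seconds())
```

A caller would typically sleep for the larger of this value and its own computed backoff, and skip the retry entirely when the hinted delay exceeds the request's deadline.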

Latency tails and timeouts are among the trickiest failure modes because they interact with user-facing SLAs and cost. Long token-generation times or large-context prompts can push p99 latency into seconds or minutes; if you set client-side timeouts too aggressively you surface errors for requests that would have completed, but if you set them too high you block threads and inflate billing. Mitigation patterns include hedged requests (call a lower-latency model in parallel with a higher-quality model), streaming partial responses, and favoring asynchronous queue-based flows when a delayed result is acceptable. Tune timeouts relative to user deadlines: your client deadline should be shorter than the system-level SLA so there is time left to run a fallback.

Model-quality failures — hallucinations, incorrect extractions, truncated or syntactically invalid outputs — are semantic, not transport problems, and require deterministic validation layers. Apply strict output schemas (JSON Schema, Protobuf, or custom validators), lightweight classifiers to detect toxic or off-domain responses, and business-rule checks (e.g., numeric ranges for invoice amounts) before persisting or acting on a response. For example, an invoice OCR pipeline that trusts raw LLM output without validation can propagate incorrect amounts into billing; adding a numeric sanity check reduces that risk dramatically.
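As an illustration of the invoice case, a validation layer can be as small as a parse step plus a range check. This sketch uses only the standard library; the field names (`invoice_id`, `amount`, `currency`) and the `max_amount` ceiling are assumptions made for the example, not part of any real schema.

```python
import json
from decimal import Decimal, InvalidOperation


class ValidationError(Exception):
    """Raised when model output fails structural or business-rule checks."""


def validate_invoice(raw_output: str, max_amount: Decimal = Decimal("100000")) -> dict:
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValidationError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValidationError("expected a JSON object")
    for field in ("invoice_id", "amount", "currency"):
        if field not in data:
            raise ValidationError(f"missing field: {field}")
    try:
        amount = Decimal(str(data["amount"]))
    except InvalidOperation as exc:
        raise ValidationError("amount is not numeric") from exc
    # Business rule: amounts must be finite, positive, and below a sanity ceiling.
    if not amount.is_finite() or not (Decimal("0") < amount <= max_amount):
        raise ValidationError(f"amount out of range: {amount}")
    return {"invoice_id": str(data["invoice_id"]),
            "amount": amount,
            "currency": str(data["currency"])}
```

A rejected response can then be routed to a retry with a stricter prompt or to human review instead of flowing into billing.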

Finally, operational and human errors are common and sometimes the most surprising: credential rotations without rollout, stale prompt templates, incorrect model version configuration, or deployment bugs that change request shapes. These failures often leave telltale fingerprints—sudden jump in a single prompt-hash error rate, or a gap in trace IDs across services—and are preventable with automated schema tests, prompt regression suites, and deployment-time integration checks.

Knowing these failure modes lets you prioritize observability, testing, and mitigation work by impact: protect revenue paths with stricter retries and expensive models, use async and cheaper models for bulk processing, and instrument prompt hashes, token usage, and error classes to break down root causes quickly. Next, we map each failure mode to concrete retry strategies, timeout configurations, and SLOs so you can implement targeted, cost-aware defenses for LLM calls.

Timeouts, cancellation, and circuit breakers

Latency and unpredictability in LLM calls are the silent product killers — they turn intermittent model slowness into user-facing errors, runaway billing, and cascading retries. How do you prevent a single slow generation from blocking threads or burning tokens? Front-loading timeouts, explicit cancellation, and a robust circuit-breaker strategy let you fail fast, reclaim resources, and route traffic to safe fallbacks so the rest of your system remains healthy and responsive.

Pick client-side timeouts deliberately rather than by guesswork. Your request timeout should be shorter than the system-level SLA so you can trigger fallbacks before the user deadline expires, and it should vary by use case: low-latency UI flows get aggressive timeouts, background enrichment jobs tolerate longer ones. Measure p50/p95/p99 latency for the exact model and prompt length you use, then set conservative initial timeouts (for example: p95 + safe margin) and iterate with canary deployments. Timeouts protect compute budgets and request queues; they also become an important signal in your observability pipeline when correlated with token usage and retry amplification.
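The "p95 plus margin" starting point can be computed directly from recorded latencies. The sketch below assumes you already collect per-request latencies in seconds for a given model and prompt-length bucket; `suggest_timeout` and the default half-second margin are illustrative, not recommended values.

```python
import statistics


def suggest_timeout(latencies_s, margin_s=0.5):
    """Return the 95th-percentile latency plus a fixed safety margin, in seconds."""
    if len(latencies_s) < 2:
        raise ValueError("need at least two latency samples")
    # quantiles(n=100) yields 99 cut points; index 94 is the 95th percentile.
    p95 = statistics.quantiles(latencies_s, n=100, method="inclusive")[94]
    return p95 + margin_s
```

Recomputing this per model and prompt-length bucket during a canary gives a defensible initial value that you can then tighten or relax against observed timeout counts.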

Explicit cancellation mechanisms protect you from wasted work after the client gives up. Implement cancellation tokens or abort controllers in your stack and propagate them to any long-running generator or streaming reader so the provider can stop work mid-generation. For instance, use an async request pattern where you send an abort signal when the client times out or the user navigates away; the server then calls provider.abort(request_id) or closes the streaming connection. Example pseudocode:

    # async pattern: cancel token propagates to provider
    cancel = CancellationToken(timeout=client_timeout)
    try:
        result = await provider.generate(prompt, cancel_token=cancel)
    except OperationCancelled:
        cleanup_partial_state()
        raise

Cancellation reduces token costs and avoids retry storms that occur when a timed-out request keeps running on the provider side while the client retries. Equally important is exposing partial results: where possible stream best-effort output to the client and decide whether to surface partial text, degrade quality for speed, or switch to an async workflow that completes later.
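For a runnable version of the same idea, Python's `asyncio.wait_for` cancels the awaited coroutine when the deadline passes, which gives the call a chance to release its resources. In this sketch `slow_generation` is a stand-in for a real streaming provider call, and the `state` dict exists only to make the cancellation observable; whether closing the connection actually stops generation on the provider side depends on the provider.

```python
import asyncio


async def slow_generation(prompt: str, state: dict) -> str:
    """Stand-in for a long-running provider call (hypothetical)."""
    try:
        await asyncio.sleep(10)
        return f"answer to {prompt}"
    except asyncio.CancelledError:
        # Real code would close the streaming connection here.
        state["cancelled"] = True
        raise


async def generate_with_deadline(prompt: str, timeout_s: float, state: dict):
    """Return the generation, or None if the client deadline expires first."""
    try:
        return await asyncio.wait_for(slow_generation(prompt, state), timeout=timeout_s)
    except asyncio.TimeoutError:
        # wait_for has already cancelled the inner call by the time we get here.
        return None
```

Returning `None` is a simplification; a production path would more likely trigger a fallback or enqueue the request for asynchronous completion.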

Circuit breakers give you systemic protection when provider quality degrades or quotas are hit. Configure a short-window breaker that trips quickly (e.g., open on >50% error rate across 10s or five consecutive 5xx/429 responses) and pair it with a longer sliding window that prevents flapping during transient recovery. When the breaker is open, short-circuit calls to a fallback flow (serve cached responses, route to a lightweight deterministic model, or enqueue work for async processing) and log the decision with prompt hashes and trace IDs. Use a half-open probing strategy that permits a small number of requests to verify recovery and increase the probe size only on success; integrate provider rate-limit headers and per-region health to make smarter open/close decisions.
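A consecutive-failure breaker with a half-open state fits in a few dozen lines. The sketch below is deliberately simplified: it implements only the "N consecutive failures" trigger, not the error-rate window, and in the half-open state it lets probes through until the first outcome is recorded rather than counting them. The class and parameter names are illustrative.

```python
import time


class CircuitBreaker:
    def __init__(self, failure_threshold=5, reset_timeout_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self._clock = clock          # injectable so tests can control time
        self._failures = 0
        self._opened_at = None       # None means the breaker is closed

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout_s:
            return "half_open"       # cool-down elapsed: allow a probe
        return "open"

    def allow_request(self) -> bool:
        return self.state != "open"

    def record_success(self) -> None:
        self._failures = 0
        self._opened_at = None

    def record_failure(self) -> None:
        if self.state == "half_open":
            self._opened_at = self._clock()   # probe failed: reopen and restart cool-down
            return
        self._failures += 1
        if self._failures >= self.failure_threshold:
            self._opened_at = self._clock()
```

Callers check `allow_request()` before each provider call, route to the fallback flow when it returns `False`, and report every outcome through `record_success()` or `record_failure()`.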

Combine these controls with bounded retries and hedging to balance user experience and cost. Use exponential backoff with jitter and cap retries based on request type: idempotent, read-only LLM queries can retry more aggressively than side-effectful calls. Keep each per-attempt timeout well inside the overall retry budget, and cancel a timed-out attempt before starting the next one, so that attempts do not overlap and consume tokens twice. For latency-sensitive flows, send a hedged request to a low-latency model in parallel with the higher-quality model, adopt the first successful response, and cancel the slower request; this reduces p99 tail risk without multiplying retries across the fleet.
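The hedging pattern can be sketched with `asyncio`: start both calls, take the first one that succeeds, and cancel whatever is still running. `fast_model` and `slow_model` below are hypothetical stand-ins for real model calls, and `first_success` is an illustrative helper rather than a library function.

```python
import asyncio


async def first_success(*calls):
    """Run zero-argument coroutine functions concurrently and return the first
    successful result, cancelling whatever is still running."""
    pending = {asyncio.ensure_future(call()) for call in calls}
    last_error = None
    try:
        while pending:
            done, pending = await asyncio.wait(pending, return_when=asyncio.FIRST_COMPLETED)
            for task in done:
                if task.exception() is None:
                    return task.result()
                last_error = task.exception()   # remember the failure, keep waiting
        raise last_error or RuntimeError("no calls were provided")
    finally:
        for task in pending:
            task.cancel()
        await asyncio.gather(*pending, return_exceptions=True)


async def fast_model():      # hypothetical low-latency model
    await asyncio.sleep(0.01)
    return "draft answer"


async def slow_model():      # hypothetical higher-quality model
    await asyncio.sleep(5)
    return "polished answer"
```

Calling `asyncio.run(first_success(slow_model, fast_model))` returns the fast model's answer and cancels the slow call. Note that every hedged request pays for two generations until one is cancelled, so this is usually reserved for the latency-critical slice of traffic.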

Instrument and iterate: track timeout counts, cancellation rates, circuit-breaker opens, token consumption per request, and end-to-end success rates. Correlate those metrics with user-facing SLAs and cost reports so you can answer operational questions like: “When should we tighten timeouts?” and “Is the breaker opening due to genuine provider instability or a misconfigured prompt?” Building these signals into dashboards and alerting lets us tune timeouts, cancellation policies, and breaker thresholds in production with confidence, and it sets up the next set of mitigations — targeted retries, schema validation, and model fallback policies — that further harden LLM-based features.

Retry strategies and exponential backoff

Latency spikes and intermittent provider errors will erode user trust if we treat retries as a blunt instrument. We need deliberate retry strategies that limit cost and avoid amplifying failures, and we should bake exponential backoff math into the client so retries back off predictably under load. Starting with clear retry eligibility and a bounded backoff policy reduces retry storms and token waste, and it gives us a deterministic surface to observe and tune. In practice, implement retries as a controlled safety valve, not a band‑aid for bad prompts or expired credentials.

Decide which failures are worth retrying before you write any retry loop. Transient transport errors, connection resets, 5xx server errors, and well-formed 429s with short Retry-After headers are usually safe to retry; client-side failures (invalid request, auth, malformed payload) are not. When should you stop retrying? As soon as the error is classified as permanent or the retry budget for that request class is spent. Use idempotency tokens and classify requests by side-effect risk: read-only or idempotent queries can tolerate higher retry budgets, while side-effectful operations should use minimal retries plus server-side deduplication. This classification prevents duplicate work and keeps costs predictable.

Make the backoff parameters explicit and conservative: base_delay, multiplier, jitter function, maximum_delay, and max_attempts. A common starting point is base=200ms, multiplier=2, max_attempts=5, and max_delay=10s; the nth delay becomes min(max_delay, base * multiplier^(n-1)) plus jitter. With those defaults the un-jittered delays start at 200ms and double each time (400ms, 800ms, 1.6s), so the 10s cap only matters if you raise max_attempts. Exponential growth spaces successive attempts further apart, giving a struggling provider room to recover, and capping delays prevents unbounded waits in user flows. We should surface these parameters as config so teams can tune them per endpoint, model, or customer SLA.
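To see what a given configuration implies, it helps to print the un-jittered schedule. This sketch assumes `max_attempts` counts the initial call, so a budget of five attempts produces four delays; if your client counts retries instead, adjust the range accordingly.

```python
def backoff_delays(base_s=0.2, multiplier=2.0, max_delay_s=10.0, max_attempts=5):
    """Un-jittered delay before each retry.

    Attempt 1 is the initial call, so there are max_attempts - 1 delays.
    """
    return [min(max_delay_s, base_s * multiplier ** (n - 1))
            for n in range(1, max_attempts)]


print(backoff_delays())                 # [0.2, 0.4, 0.8, 1.6]
print(sum(backoff_delays()))            # worst-case added wait, about 3 seconds
print(backoff_delays(max_attempts=8))   # the 10s cap applies to the last delay
```

The sum of the schedule is the worst-case time a request spends sleeping, which is the number to compare against the user-facing deadline.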

Jitter is the critical refinement that prevents synchronized client retries from creating waves of traffic. Prefer randomized jitter over a fixed exponential wait. For example, full jitter picks a uniform random value between 0 and the computed backoff, while equal jitter keeps half the backoff and adds a uniform random value from the remaining half; decorrelated jitter, described alongside the other two in the AWS Architecture Blog, derives each sleep from the previous one rather than from the attempt count. Implement a tiny helper instead of ad‑hoc sleeps; here’s a compact pattern you can port:

    # full jitter: sleep a uniform random time between 0 and the capped backoff
    import random
    import time

    delay = min(max_delay, base * (2 ** attempt))
    time.sleep(random.uniform(0, delay))

Apply jitter consistently across regions and SDKs so retries remain decorrelated at scale.
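The three jitter strategies named above differ only in how the random component is drawn. The helpers below are a sketch of each; the function names are illustrative, and the `rng` parameter exists so tests can pass a seeded `random.Random` instance.

```python
import random


def full_jitter(backoff_s, rng=random):
    """Uniform random value between 0 and the computed backoff."""
    return rng.uniform(0, backoff_s)


def equal_jitter(backoff_s, rng=random):
    """Keep half the backoff, randomize the other half."""
    half = backoff_s / 2
    return half + rng.uniform(0, half)


def decorrelated_jitter(previous_sleep_s, base_s, max_delay_s, rng=random):
    """Derive the next sleep from the previous one instead of the attempt count."""
    return min(max_delay_s, rng.uniform(base_s, previous_sleep_s * 3))
```

Full jitter gives the widest spread and the lowest average wait; equal jitter guarantees a minimum pause, which can matter when the provider needs a floor of recovery time.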

Boundary controls beyond jitter make retries safe in production. Define a retry budget per user, per request type, and per service to bound amplification (for example, no more than two retries for UI requests and five for background jobs). Combine this with circuit breakers and provider‑aware rate limits: when a provider signals quota exhaustion or sustained 5xx rates, open the circuit and route to cache, a deterministic fallback model, or an async queue. Instrument retry amplification, retry latency, token consumption per attempt, and success-after-retry rate so you can trace when retries are helping versus when they’re multiplying cost.

Tune retry behavior by use case rather than guesswork. For low-latency interactive paths, favor a tiny retry budget with aggressive cancellations and hedged low‑latency model calls; for throughput jobs, allow longer backoff caps and more attempts while using idempotency and dedupe. When you map these patterns into SLAs, make retries observable: alert on rising retry rates, increasing success-after-retry latency, or growing token spend per successful request. Taking this disciplined approach to retries, backoff, and jitter lets us reclaim control of tail latency and cost while preserving user experience and system stability — next we’ll translate these controls into concrete fallback and schema‑validation policies for model responses.

Idempotency, deduplication, and safe retries

Duplicate side effects are one of the fastest ways an otherwise resilient LLM integration breaks production reliability, so we must treat idempotency, deduplication, and retries as design primitives rather than afterthoughts. When a client times out and retries, or when multiple workers process the same queue message, you need guarantees that only one business action is applied. Start by defining who owns the uniqueness contract and what constitutes a single logical operation; this clarifies when to generate an idempotency token and when to trigger deduplication in the backend. Making these choices early reduces cost inflation from wasted token generations and prevents user-facing anomalies like duplicate invoices or repeated emails.

An idempotency token should represent the logical intent of an operation, not a transient transport attempt. We recommend constructing keys from stable attributes: a user or tenant identifier, the operation name, and a deterministic input hash (for example, SHA256(prompt + metadata)). Avoid embedding timestamps in the core idempotency key because that converts every attempt into a new operation. Clients should generate or reuse a token for the lifetime of the logical operation; servers must treat the token as the canonical dedupe key and store the outcome (success/failure, response hash, side-effect id) atomically so subsequent requests can be answered from cache rather than re-executed.
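A key built this way might look like the following sketch. The colon-separated layout and the JSON canonicalization step are assumptions made for the example; the important property is that the same logical input always hashes to the same key, regardless of dictionary ordering or retry attempt.

```python
import hashlib
import json


def idempotency_key(tenant_id: str, operation: str, prompt: str, metadata: dict) -> str:
    """Build a stable key from tenant, operation name, and a hash of the inputs."""
    # Canonical JSON (sorted keys, fixed separators) so equal inputs hash identically.
    canonical = json.dumps({"prompt": prompt, "metadata": metadata},
                           sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    input_hash = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return f"{tenant_id}:{operation}:{input_hash}"
```

Anything that legitimately distinguishes two operations, such as a document ID, belongs in `metadata`; anything that varies per attempt, such as a timestamp or request ID, must stay out of it.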

On the server side, the deduplication store determines both correctness and latency. Use a store with strong atomic primitives: Redis SET with the NX and EX options (an atomic set-if-absent with a TTL) for low-latency paths, or a transactional Postgres table with a unique constraint and an upsert pattern for durable guarantees. Persist the idempotency key together with a bounded response or a pointer to the completed side-effect (for example, a persisted result ID or outbox entry). Choose the storage TTL to balance the length of the protection window against storage cost: short TTLs for UI flows where you only need protection for a few minutes, longer windows for billing or legal actions where duplicates are unacceptable for hours or days.
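The unique-constraint approach can be demonstrated end to end with the standard library. This sketch swaps in an in-memory SQLite database purely so the example runs anywhere; the table layout and the `run_once` helper are illustrative, and a production version would also need to handle an action that crashes after the key is claimed but before the result is stored.

```python
import sqlite3


def open_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")   # stand-in for a durable database
    conn.execute("CREATE TABLE idempotency (key TEXT PRIMARY KEY, result TEXT)")
    return conn


def run_once(conn: sqlite3.Connection, key: str, action):
    """Run `action` at most once per key; return (result, was_duplicate)."""
    try:
        with conn:  # commits on success, rolls back on error
            # Claim the key first; the primary key rejects a second claim.
            conn.execute("INSERT INTO idempotency (key, result) VALUES (?, NULL)", (key,))
    except sqlite3.IntegrityError:
        row = conn.execute("SELECT result FROM idempotency WHERE key = ?", (key,)).fetchone()
        return row[0], True              # answer from the stored outcome
    result = action()
    with conn:
        conn.execute("UPDATE idempotency SET result = ? WHERE key = ?", (result, key))
    return result, False
```

Claiming the key before running the action is what prevents two concurrent workers from both executing it; the stored result lets later duplicates be answered without re-running the model call.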

Streaming generations and resumable sessions complicate dedupe because partial work can be visible before the final commit. For streamed outputs, record a generation run ID and checkpoint offsets as part of the dedupe state so retries can safely resume or reconcile instead of restarting from zero. If the provider returns a partial token or an attempt ID, persist that mapping to your idempotency record and prefer resumption semantics over blind retries. This approach reduces token waste and makes streamed retries safe: if a client reconnects and reuses the same idempotency key, the server can either resume the stream or return the finalized response.

Operations that produce external side effects require stricter limits on retries and stronger dedupe mechanisms. For side-effectful calls, minimize client retry budgets and require server-side deduplication to be the final arbiter: write the idempotency key to the ledger before invoking the external action, or implement a transactional outbox so the external call is driven from a single, durable queue consumer. Optimistic concurrency controls—versioned resources, compare-and-swap, or unique operation markers—prevent race conditions when multiple workers attempt the same commit. Remember that exactly-once semantics are expensive; in many systems, at-least-once with strong dedupe and reconciliation is the pragmatic tradeoff.

Observability turns idempotency from a theoretical guarantee into an operational one. Instrument duplicate detection metrics (dedupe_hits, duplicate_execution_count), track success-after-retry rates, and log idempotency tokens and input hashes with trace IDs so you can reconstruct the decision path. Alert on rising dedupe misses or when cost-per-success increases, since those signals indicate either a change in client behavior or a misconfigured TTL/storage. Correlating these metrics with token consumption helps answer questions like: “When are retries harming cost instead of helping?”

Choosing TTLs, storage backends, and retry budgets is contextual work that depends on SLAs, cost constraints, and legal needs. For interactive UIs pick short TTLs and aggressive cancellation; for billing, choose longer dedupe windows and durable stores; for batch pipelines favor persistent unique constraints and idempotent worker semantics. Taking this approach gives you predictable behavior under retries, minimizes duplicate side effects, and prepares the system for layered fallbacks and schema validation that follow in the next section.

Monitoring, logging, and alerting practices

Observability is what turns vague outages into actionable work; without disciplined monitoring, logging, and alerting you’re flying blind when an LLM path degrades. Start by treating monitoring, logging, and alerting as a single, integrated workflow: metrics to detect surface issues, traces to drill into request flows, and logs to record the exact prompt, prompt hash, and decision path that led to a result. How do you detect when retries are making reliability worse or when token spend silently balloons? Ask that early and instrument for it.

Instrument metrics, traces, and structured logs in tandem so you can pivot from a p99 latency spike to the precise prompt variant that caused it. Emit p50/p95/p99 latency per model and prompt-length bucket, expose token consumption and retry-amplification counters per request, and attach a trace ID plus a compact prompt hash to every metric and log so you can correlate signals end-to-end. Use distributed-tracing correlation primitives rather than free-form strings so dashboards can join traces, logs, and metrics reliably during incident work. (opentelemetry.io)

Design alerting around SLOs and error budgets instead of raw thresholds; alerts should tell you when user experience or cost is on a path to breach an SLA. Define SLIs for success rate, latency, and cost-efficiency (for example, tokens per successful response) and create burn-rate alerts that page when you’re consuming budget far faster than sustainable. Implement multi-window checks (short window for immediate paging, longer window for escalation) and use burn-rate multipliers to distinguish brief spikes from sustained degradation so teams act on the events that matter. (sre.google)
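Burn rate is the observed error rate divided by the error budget the SLO allows, so a value of 1 means the budget lasts exactly the SLO period. The sketch below shows a two-window paging check; the function names are illustrative, and the default threshold of 14.4 is one commonly cited paging value that should be treated as a starting assumption and tuned against your own SLO window.

```python
def burn_rate(errors: int, total: int, slo_target: float) -> float:
    """How many times faster than sustainable the error budget is being spent."""
    if total == 0:
        return 0.0
    error_budget = 1.0 - slo_target      # e.g. 0.001 for a 99.9% SLO
    return (errors / total) / error_budget


def should_page(short_window, long_window, slo_target: float, threshold: float = 14.4) -> bool:
    """Page only when both windows exceed the threshold.

    Each window is an (errors, total) tuple. Requiring both filters out brief
    spikes (long window stays low) and stale incidents (short window recovers).
    """
    return (burn_rate(*short_window, slo_target) >= threshold
            and burn_rate(*long_window, slo_target) >= threshold)
```

The same calculation works for a cost SLI: substitute "requests over the token budget" for errors to alert on token spend per successful response.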

Make logging a first-class, queryable artifact but control its volume and sensitivity. Emit structured JSON logs with fields for traceId, spanId, prompt_hash, model_version, token_count, and error_class; redact or hash any PII before the log line is written to meet compliance and retention constraints. Apply sampling and tail-sampling for high‑volume paths so you retain representative traces and all error cases while avoiding log bill shock; configure retention tiers (hot for 7–30 days, cold for longer forensic windows) and ensure logs are searchable by prompt_hash and idempotency token for fast root-cause analysis. (opentelemetry.io)
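A log record with those fields can be produced with the standard library alone. In this sketch the raw prompt never reaches the log line; only a truncated SHA-256 digest does. The 16-character truncation and the `log_record` helper are assumptions for the example, chosen to keep the hash compact while still useful for grouping.

```python
import hashlib
import json


def prompt_hash(prompt: str) -> str:
    """Compact, non-reversible identifier for a prompt."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:16]


def log_record(trace_id, span_id, prompt, model_version, token_count, error_class=None) -> str:
    """Serialize one structured log line; the raw prompt is deliberately omitted."""
    return json.dumps({
        "traceId": trace_id,
        "spanId": span_id,
        "prompt_hash": prompt_hash(prompt),
        "model_version": model_version,
        "token_count": token_count,
        "error_class": error_class,
    }, sort_keys=True)
```

Hashing the rendered prompt groups identical requests; hashing the template before variable substitution instead groups all requests of the same shape, which is often the more useful key for regression hunting.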

Build dashboards and debug flows that map directly to operational questions you’ll be asked: “Which prompt templates are consuming the most tokens?” or “Which regions show correlated 502s and retry amplification?” Create a drill-down path from an SLO burn event to a heatmap of prompt-hash error rates, then to raw logs and the full trace. Complement production telemetry with synthetic probes and chaos tests that exercise retries, timeouts, and hedged-model flows so you can validate alert thresholds and runbooks before users notice. (mezmo.com)

Operationalize alerts with playbooks and noise controls so on-call teams can act quickly and confidently. Triage runbooks should include the canonical queries (by traceId, prompt_hash, idempotency_token), expected mitigations (circuit-breaker flip, fallback model, or cache warm), and post-incident follow-ups tied to the error budget. Track operational metrics like retry-amplification, success-after-retry rate, dedupe_hits, and token_cost_per_success on the same dashboards as SLIs so you can answer whether a config change, prompt regression, or provider instability drove the incident — and iterate on thresholds and sampling to keep alerts actionable as the system evolves.