Market Landscape Overview

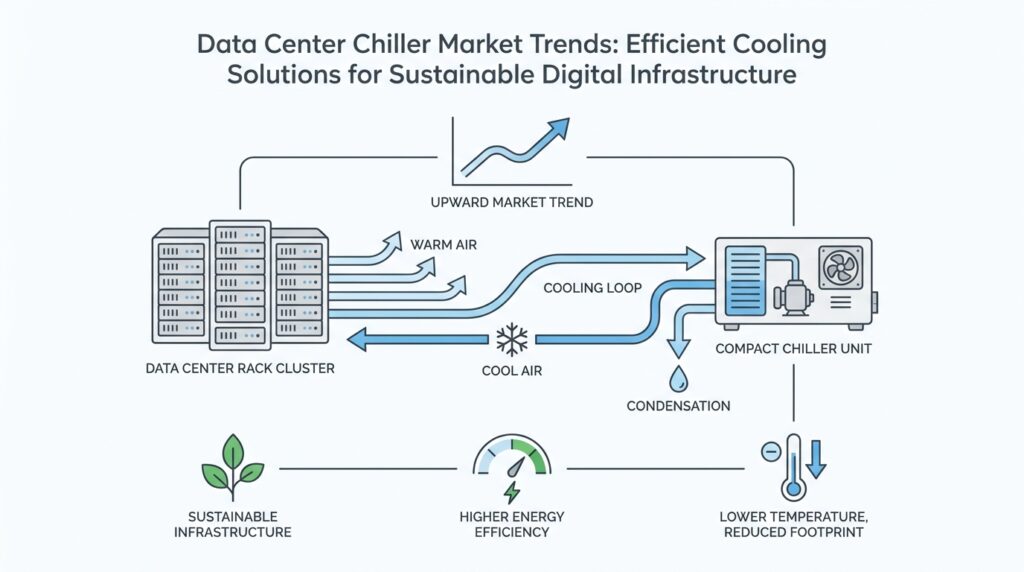

The data center chiller market is no longer a quiet corner of the infrastructure world; it sits right at the center of a much bigger story about AI, power density, and sustainability. As servers get hotter and racks get denser, cooling stops being a background utility and starts behaving like a strategic purchase. The International Energy Agency notes that cooling can account for about 7% of electricity use in efficient hyperscale sites and more than 30% in less-efficient enterprise data centers, while global data center electricity use reached about 1.5% of global demand in 2024 and has grown at roughly 12% a year over the past five years.

What does that mean in plain language? It means this market is split between two very different worlds. On one side, many traditional enterprise sites still rely on familiar chilled-water systems and evaporative heat rejection; on the other, hyperscale and colocation operators are redesigning around higher heat loads, liquid-ready hardware, and more flexible cooling architectures. Lawrence Berkeley National Laboratory describes these newer setups as a mix of airside economizers, waterside economizers, dry coolers, and air- or water-cooled chiller support, with AI-oriented liquid cooling often used for dense equipment.

The biggest demand driver is easy to name, even if the engineering behind it is not: more powerful servers need better heat removal. In Uptime Institute’s 2024 Cooling Systems Survey, 22% of respondents said they already use direct liquid cooling, and 61% said they do not use it yet but would consider it. Respondents also pointed to higher-density IT racks and higher-powered servers as the strongest reasons to expand its use, which tells us the data center chiller market is increasingly being shaped by hybrid systems that support both air and liquid cooling.

Sustainability is pushing just as hard as performance. The U.S. Department of Energy says that raising temperature set points and widening humidity ranges can cut energy use, and those changes can also reduce water use by lowering the amount of heat the cooling tower must reject. The same guidance says higher chilled-water temperatures and reduced airflow can trim chiller energy consumption by about 20%, which is why buyers now evaluate chillers not only by capacity, but also by water use, part-load efficiency, and how well they support free cooling.

That pressure is showing up in standards and procurement rules, too. ASHRAE maintains data-center-related standards such as Standard 90.4 for energy performance and Standard 127 for testing air-conditioning units serving data center spaces, while the DOE’s chiller buying guidance distinguishes between air-cooled and water-cooled electric chillers and their full-load or part-load efficiency requirements. In practice, that means the market rewards vendors that can prove efficiency, reliability, and compliance instead of just promising lower temperatures.

So the landscape is becoming more layered, not less. Legacy plants are still very much alive, but the fastest-moving projects are leaning toward modular, hybrid, and liquid-ready designs that can grow with AI workloads without wasting power or water. Public R&D is reinforcing that direction: the DOE’s COOLERCHIPS program was created to develop highly efficient and reliable data center cooling technologies, and the IEA says a high-efficiency pathway could cut data center electricity use by more than 15% versus its base case by 2035. That is the direction the data center chiller market is heading: toward systems that cool harder, waste less, and adapt faster.

AI-Driven Cooling Demand

Picture a new AI cluster arriving in a data hall: the servers are smaller than the heat problem they create, and the room starts to feel like a kitchen with every burner turned on at once. That is why AI-driven cooling demand has become such a big force in the data center chiller market. The International Energy Agency says AI is accelerating the deployment of high-performance accelerated servers, which raises power density inside data centers, and the U.S. Department of Energy has also flagged AI and machine learning as major drivers of rising energy demand in these facilities.

What makes this different from older workloads is not only the amount of electricity, but where that heat lands. A traditional server room spread its load across many machines, while AI training and inference can concentrate far more work into a smaller footprint, especially around GPU-heavy racks, meaning racks packed with graphics processing units (GPUs) used for fast parallel computation. NREL notes that warm-water liquid cooling can support rack power densities of 60 kilowatts per rack or more, which gives us a clue about how quickly the cooling conversation has changed. In other words, the data center chiller market is being pulled toward systems that can handle concentrated heat instead of only broad, room-scale airflow.

So why does that matter for chillers in particular? Because once the heat becomes denser, the old habit of overcooling the whole room starts to waste energy fast. Lawrence Berkeley National Laboratory explains that liquid cooling can improve chiller performance and create better opportunities for free cooling, which is when outside conditions help remove heat with less mechanical work. That means operators are not just buying “more cooling”; they are buying chillers that can work with higher water temperatures, hybrid loops, and shifting AI loads without turning electricity into a long, expensive tug-of-war.

We can also see the shift in adoption, not just in theory. Uptime Institute’s 2024 survey found that 22% of respondents already use direct liquid cooling, and 61% do not use it yet but would consider it, which tells us the market is moving from curiosity to planning. That matters because AI projects rarely arrive as neat, finished systems; they grow, change, and stack new requirements on top of old infrastructure. The most attractive cooling designs now look modular, hybrid, and liquid-ready, so the data center chiller market can support both conventional air-cooling loads and hotter AI zones in the same facility.

That is also why the conversation is becoming more practical and less abstract. DOE and NREL both point to cooling innovation as a way to manage the rising electricity burden from AI, and the DOE’s COOLERCHIPS program aims to push cooling energy below 5% of a typical high-density data center’s IT load, where IT load means the power used by the computing hardware itself. The message for buyers is clear: the winning systems will not only cool harder, but also respond faster, waste less, and fit a future where AI keeps rewriting the heat map.

Chiller Types Compared

When you start comparing chiller types in a data center, the choice stops feeling like a catalog decision and starts looking like a site strategy. The real question is not only, “Which machine can remove heat?” but also, “Which one fits your power profile, water limits, and growth plans?” In the data center chiller market, that usually brings us to a few familiar options: air-cooled chillers, water-cooled chillers, and modular systems that break cooling into smaller building blocks.

Air-cooled chillers are often the first stop in the conversation because they are easier to picture. They reject heat directly to outside air, which means they do not need a cooling tower, a water treatment program, or the same level of plumbing complexity as water-based systems. That makes them attractive for smaller facilities, retrofit projects, and sites that want simpler maintenance. The tradeoff is that they usually consume more electricity in hot weather and can struggle to match the efficiency of water-cooled designs when the load stays high for long stretches.

Water-cooled chillers take a different path, and that path often looks more efficient on paper. A water-cooled chiller moves heat into a water loop and then hands it off to a cooling tower, which uses evaporation to dump heat outdoors. Because water transfers heat better than air, these systems often perform very well in large data centers, especially when the facility runs year-round and cares deeply about part-load efficiency, which is performance when the chiller is not running at full capacity. The catch is that they ask for more infrastructure, more water, and more upkeep, so they tend to reward operators who can manage that extra complexity.

That difference matters because not every buyer is solving the same problem. If you are wondering, “Which chiller type is best for a data center?”, the honest answer is that it depends on what the site values most. A water-cooled setup may win where efficiency and scale matter more than simplicity, while an air-cooled system may win where space is tighter, water use is restricted, or the project needs to move quickly. In the data center chiller market, the best choice is rarely the one with the biggest headline number; it is the one that matches the building’s real operating conditions.

Modular chillers add another layer to the picture, and they are becoming easier to understand as data centers grow more unpredictable. A modular chiller is a system made of smaller cooling units that can be added, removed, or staged as demand changes, much like building with blocks instead of carving from a single stone. This approach helps operators avoid buying far more cooling than they need on day one, and it also gives them a cleaner path for expansion when AI workloads raise heat loads later. For many colocation and hyperscale projects, that flexibility is as valuable as raw efficiency.
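The staging idea can be sketched in a few lines. This is only an illustration, assuming identical modules and a simple "N plus spare" redundancy rule; the function name `modules_required`, the 500 kW module size, and the phase loads are hypothetical and not taken from any vendor or standard.

```python
import math

def modules_required(load_kw: float, module_kw: float, redundant: int = 1) -> int:
    """Return the module count that covers the load, plus spare modules."""
    if load_kw < 0 or module_kw <= 0 or redundant < 0:
        raise ValueError("load must be >= 0, module capacity > 0, redundant >= 0")
    duty = math.ceil(load_kw / module_kw)  # modules needed to carry the load
    return duty + redundant                # add the spare module(s)

# Hypothetical phased growth: a day-one load followed by two expansions.
for phase_kw in (900, 1800, 3200):
    count = modules_required(phase_kw, module_kw=500)
    print(f"{phase_kw} kW -> {count} modules")
```

The point of the sketch is that capacity is bought in steps that follow the load, so the day-one purchase does not have to cover the final phase.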

There is also a practical middle ground that many facilities are now exploring. Some designs pair chillers with economizers, which are systems that use favorable outdoor conditions to reduce mechanical cooling, while others support higher chilled-water temperatures so the chiller does not have to work as hard. These hybrid approaches matter because they soften the old tradeoff between performance and sustainability. In other words, the data center chiller market is moving toward systems that can cool aggressively when needed and back off when the weather or workload allows.

The final comparison comes down to how each type behaves under pressure. Air-cooled chillers usually offer simpler installation and lower water dependence, water-cooled chillers usually offer stronger efficiency at scale, and modular systems usually offer the best flexibility for phased growth. Once you line those up against your site’s density, climate, and water constraints, the decision becomes much clearer. That is why the smartest buyers are not asking which chiller is best in theory; they are asking which one will still make sense when the next rack arrives and the heat map changes again.

Efficiency Metrics to Track

When you stand in front of a cooling spec sheet, the hardest part is not spotting the big number on the page. It is knowing which numbers actually tell you whether the system will save energy, water, and money once the data hall is busy. That is why the data center chiller market now revolves around efficiency metrics, not marketing language. The simplest way to think about an efficiency metric is this: it is a score that shows how much cooling you get for the electricity or water you spend, and it helps you compare one design against another on something closer to equal ground.

The first numbers most teams watch are power usage effectiveness (PUE) and water usage effectiveness (WUE). PUE is the ratio of total facility energy to the energy delivered to IT equipment, so a value closer to 1.0 means less overhead, while WUE measures how much water the site uses for each unit of IT energy, usually expressed in liters per kilowatt-hour. Those two metrics matter because a chiller does not live in isolation; it sits inside a whole cooling system with pumps, fans, towers, and controls. When you ask, “How do I know if a data center chiller is efficient?”, PUE and WUE give you the broad landscape before you zoom in on the machine itself.
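As a rough illustration, both ratios can be computed from annual meter totals. The sketch below assumes the usual definitions (total facility energy over IT energy for PUE, site water in liters over IT energy in kWh for WUE); the function names and the sample figures are hypothetical.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power usage effectiveness: total facility energy divided by IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_kwh / it_kwh

def wue(site_water_liters: float, it_kwh: float) -> float:
    """Water usage effectiveness in liters per kWh of IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return site_water_liters / it_kwh

# Hypothetical annual totals for one site.
total_kwh = 13_000_000
it_kwh = 10_000_000
water_l = 18_000_000

print(f"PUE = {pue(total_kwh, it_kwh):.2f}")
print(f"WUE = {wue(water_l, it_kwh):.2f} L/kWh")
```

Both numbers describe the whole facility, so a change in chiller efficiency shows up in them only alongside pumps, fans, and everything else on the meter.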

Once we move closer to the chiller, the conversation becomes more specific. Two common measures are coefficient of performance (COP), which is the amount of cooling delivered for each unit of electricity consumed, and kilowatts per ton, often written as kW/ton, which tells you how much power the chiller uses to produce one ton of cooling. A “ton” here is a ton of refrigeration, a standard cooling unit equal to 12,000 BTU per hour (about 3.517 kW of heat removal), not a weight in the everyday sense. A higher COP is better, while a lower kW/ton is better. COP is easier to compare mathematically, while kW/ton is often easier to use in procurement conversations, and both help you see whether a chiller is delivering real operational efficiency or only promising it on paper.
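The two measures describe the same thing from opposite directions, so converting between them is a one-line calculation. The sketch below assumes one ton of refrigeration equals 12,000 BTU per hour, or about 3.517 kW of heat removal; the function names are illustrative.

```python
KW_PER_TON = 3.517  # one ton of refrigeration, approx. 12,000 BTU/h in kW

def cop_to_kw_per_ton(cop: float) -> float:
    """Electrical kW drawn per ton of cooling for a given COP."""
    if cop <= 0:
        raise ValueError("COP must be positive")
    return KW_PER_TON / cop

def kw_per_ton_to_cop(kw_per_ton: float) -> float:
    """COP implied by a kW/ton rating."""
    if kw_per_ton <= 0:
        raise ValueError("kW/ton must be positive")
    return KW_PER_TON / kw_per_ton

# Hypothetical spec-sheet values.
print(f"COP 6.0  -> {cop_to_kw_per_ton(6.0):.3f} kW/ton")
print(f"0.50 kW/ton -> COP {kw_per_ton_to_cop(0.50):.2f}")
```

Because the relationship is inverse, a higher COP and a lower kW/ton both point to the same improvement.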

But a chiller’s best-looking number can be misleading if it only appears at full load. Most data centers do not spend their lives running at peak demand, so part-load efficiency becomes one of the most important checks on the page. Part load means the chiller is running below its maximum capacity, which is where real facilities often live for long stretches. That is why metrics such as integrated part load value (IPLV), a weighted efficiency score across four load points (100%, 75%, 50%, and 25% of capacity), matter so much: they show how the system behaves on ordinary days, not just on the rare, hottest hour of the year.
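To make the weighting concrete, here is a minimal sketch of an IPLV calculation. It assumes the weights commonly associated with AHRI Standard 550/590 (1% at 100% load, 42% at 75%, 45% at 50%, 12% at 25%), which the text above does not specify; the function names and sample ratings are hypothetical.

```python
# Assumed weights for the 100%, 75%, 50%, and 25% load points.
WEIGHTS = (0.01, 0.42, 0.45, 0.12)

def iplv_cop(a: float, b: float, c: float, d: float) -> float:
    """IPLV when efficiency is given as COP (higher is better)."""
    return sum(w * x for w, x in zip(WEIGHTS, (a, b, c, d)))

def iplv_kw_per_ton(a: float, b: float, c: float, d: float) -> float:
    """IPLV when efficiency is given as kW/ton (lower is better).

    kW/ton is an inverse efficiency, so the weights apply to the reciprocals.
    """
    if min(a, b, c, d) <= 0:
        raise ValueError("kW/ton values must be positive")
    return 1.0 / sum(w / x for w, x in zip(WEIGHTS, (a, b, c, d)))

# Hypothetical ratings at 100%, 75%, 50%, and 25% load.
full_load = 0.60
result = iplv_kw_per_ton(full_load, 0.50, 0.45, 0.55)
print(f"full load: {full_load:.2f} kW/ton, IPLV: {result:.3f} kW/ton")
```

With full load weighted at only 1%, the headline full-load rating barely moves the result, which is exactly why the part-load points deserve attention.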

That broader view is especially useful when chilled-water temperature comes into play. A useful metric here is the temperature difference, or delta T (the change between supply and return water temperature), because it shows how much heat the water is carrying back from the room. A healthy delta T can let the system move more heat with less pumping, and it can also support better chiller performance and more free cooling, which is the use of cool outdoor conditions to reduce mechanical refrigeration. In the data center chiller market, buyers increasingly look for designs that can hold efficiency while the temperature settings move upward and the load shifts.
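The link between delta T and pumping follows from the basic heat balance, heat = mass flow × specific heat × temperature difference. The sketch below assumes plain water with a specific heat of about 4.186 kJ/(kg·K) and a density of roughly 1 kg per liter; the function name and the 1,000 kW load are illustrative.

```python
WATER_CP_KJ_PER_KG_K = 4.186    # specific heat of water (approximate)
WATER_DENSITY_KG_PER_L = 1.0    # density of water (approximate)

def required_flow_lps(heat_kw: float, delta_t_k: float) -> float:
    """Chilled-water flow in liters per second needed to carry heat_kw."""
    if delta_t_k <= 0:
        raise ValueError("delta T must be positive")
    mass_flow_kg_s = heat_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_L

# Same hypothetical 1,000 kW load at two different delta T values.
for dt in (5.0, 10.0):
    print(f"delta T {dt:>4.1f} K -> {required_flow_lps(1000.0, dt):.1f} L/s")
```

Doubling delta T halves the flow needed for the same heat load, which is where the pumping savings come from.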

Water and carbon metrics add the next layer of reality. WUE tells you whether the site is consuming too much water for the cooling work it does, and carbon usage effectiveness (CUE) shows the carbon dioxide emissions associated with the facility’s energy use for each unit of IT energy. Those numbers matter because a highly efficient chiller in a dry region may still create pressure if it depends on heavy water use, and a low-energy design may still look less attractive if it draws power from a carbon-heavy grid. In practice, the smartest operators treat energy, water, and emissions as one connected story, not separate scorecards.
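A short calculation shows how the grid changes the picture for an otherwise identical facility. This sketch assumes CUE is total facility emissions divided by IT energy and that all energy comes from a grid with a single emission factor; the function name and the two grid factors are hypothetical.

```python
def cue(total_facility_kwh: float, it_kwh: float, grid_kg_co2_per_kwh: float) -> float:
    """Carbon usage effectiveness in kg CO2 per kWh of IT energy.

    Assumes every kWh comes from a grid with one average emission factor.
    """
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_kwh * grid_kg_co2_per_kwh / it_kwh

# Same hypothetical facility (PUE 1.3) on two hypothetical grids.
total_kwh, it_kwh = 13_000_000, 10_000_000
for label, factor in (("cleaner grid", 0.10), ("carbon-heavy grid", 0.40)):
    print(f"{label}: CUE = {cue(total_kwh, it_kwh, factor):.2f} kg CO2/kWh")
```

Under these assumptions CUE equals PUE multiplied by the grid emission factor, so the same cooling plant can score very differently depending on where it is plugged in.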

The last group of metrics is operational, and it is easy to overlook until something goes wrong. Availability, alarm frequency, maintenance intervals, and compressor cycling all tell you whether the chiller stays stable when the workload changes quickly. What good is a lower kW/ton if the system cannot hold setpoint during an AI heat spike? That is the question many buyers ask now, because in the data center chiller market, efficiency only matters if it shows up consistently, under pressure, and without creating new risks for uptime.

Sustainable Design Strategies

Sustainable design starts long before the first chiller is delivered. The smartest teams treat cooling as part of the building’s architecture, not a box to be added later, because the choices made at the drawing stage shape energy use, water use, and heat recovery for years afterward. That is why the data center chiller market is moving toward designs that are planned around climate, workload, and reuse from day one, not only around raw cooling capacity.

How do you make a data center chiller more sustainable without gambling on uptime? One of the first answers is to work with the site instead of against it. The Department of Energy notes that higher chilled-water temperatures and reduced airflow can cut chiller energy use by about 20%, and it also points to water-side economizing, which means using a heat exchanger and cooling tower to bypass the chiller during mild weather. In plain language, we are letting the weather do part of the work, so the machine does not have to fight every hour of the year.
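A simple way to see how higher chilled-water temperatures and water-side economizing interact is to estimate the water temperature the tower and heat exchanger can deliver without the chiller. The sketch below is a rule-of-thumb model, not DOE's method: it assumes that achievable temperature is the outdoor wet-bulb temperature plus a tower approach plus a heat-exchanger approach, and the function name, default approach values, and sample conditions are all hypothetical.

```python
def economizer_mode(
    wet_bulb_c: float,
    chw_supply_setpoint_c: float,
    chw_return_c: float,
    tower_approach_c: float = 4.0,  # assumed tower approach to wet bulb
    hx_approach_c: float = 1.5,     # assumed heat-exchanger approach
) -> str:
    """Classify an hour as free cooling, partial free cooling, or mechanical."""
    achievable_c = wet_bulb_c + tower_approach_c + hx_approach_c
    if achievable_c <= chw_supply_setpoint_c:
        return "free cooling"          # chiller can be bypassed
    if achievable_c < chw_return_c:
        return "partial free cooling"  # economizer precools the return water
    return "mechanical"                # chiller carries the full load

# Same hypothetical 12 °C wet-bulb hour, two different chilled-water designs.
print(economizer_mode(12.0, chw_supply_setpoint_c=10.0, chw_return_c=16.0))
print(economizer_mode(12.0, chw_supply_setpoint_c=18.0, chw_return_c=24.0))
```

In this toy model, raising the chilled-water setpoint turns the same weather from a mechanical-cooling hour into a free-cooling hour, which is the mechanism behind the savings described above.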

The next layer is heat recovery, and this is where sustainability starts to feel clever instead of costly. ASHRAE Standard 90.4 allows credit for heat recovery and shared space economizers, and the Department of Energy recommends reusing waste heat before sending unusable heat through dry coolers when possible. That means the warm air or warm water leaving the data hall can become useful heat for nearby spaces, turning what used to feel like waste into a resource and making the data center chiller market look a lot more like an energy-reuse market.

Liquid-ready design is another strategy that is becoming hard to ignore. Lawrence Berkeley National Laboratory explains that liquid cooling can improve chiller performance and create much better opportunities for free cooling. Most liquid-cooled systems are still hybrid systems, meaning they remove part of the heat with liquid and the rest with air, so the safest design is one that can support both paths as the load changes. That flexibility matters because a sustainable plant has to serve today’s servers and tomorrow’s hotter ones without forcing a full rebuild.

Controls are where good intentions become measurable results. The Department of Energy highlights real-time cooling control systems that monitor and adjust cooling power continuously, which helps prevent overcooling and keeps equipment inside safe temperature limits. The same logic applies to airflow management: when hot and cold air mix less, the system wastes less energy cooling the wrong air, and the chiller can stay focused on the load that actually matters. In practice, this is one of the quietest but strongest sustainable design strategies in the data center chiller market.

Water stewardship sits right beside energy stewardship, especially in places where every gallon matters. DOE guidance on cooling towers shows that increasing cycles of concentration from three to six can reduce make-up water by 20% and blowdown by 50%, which is a concrete reminder that the cooling loop is not only about electricity. When designers pair that kind of tower management with higher operating temperatures, better aisle containment, and carefully tuned set points, they get a plant that uses less water without asking the chiller to carry a heavier burden.
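The effect of cycles of concentration can be explored with a simplified tower water balance. The sketch below assumes the textbook relationship in which blowdown equals evaporation divided by (cycles − 1) and make-up equals evaporation plus blowdown, ignoring drift and leaks; because of that simplification its outputs are indicative and need not match published guidance figures exactly. The function name, the evaporation rate, and the two cycle values are illustrative.

```python
def tower_water(evaporation: float, cycles: float) -> tuple[float, float]:
    """Return (make-up, blowdown) in the same units as evaporation.

    Idealized balance: drift and leaks are ignored.
    """
    if evaporation < 0:
        raise ValueError("evaporation must be non-negative")
    if cycles <= 1:
        raise ValueError("cycles of concentration must be greater than 1")
    blowdown = evaporation / (cycles - 1)
    makeup = evaporation + blowdown
    return makeup, blowdown

# Hypothetical tower evaporating 100 units of water, at two cycle settings.
makeup_low, blowdown_low = tower_water(100.0, cycles=3)
makeup_high, blowdown_high = tower_water(100.0, cycles=6)

print(f"make-up:  {makeup_low:.0f} -> {makeup_high:.0f}")
print(f"blowdown: {blowdown_low:.0f} -> {blowdown_high:.0f}")
print(f"make-up saving: {1 - makeup_high / makeup_low:.0%}")
```

Evaporation is fixed by the heat load, so every saving in this model comes from bleeding off less water to control dissolved solids.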

The last piece is verification, because a sustainable design only counts if it performs in the real world. DOE chiller purchasing guidance distinguishes between air-cooled and water-cooled electric chillers and evaluates both full-load and part-load efficiency, while ASHRAE’s data center standard gives designers a framework for compliance and renewables credits. That is why the best projects do not chase one green feature; they assemble a system that can prove its efficiency, show its water savings, and still leave room for the next expansion.

Future Adoption Trends

The future of the data center chiller market is starting to look less like a single technology race and more like a careful balancing act. As AI pushes data center electricity demand higher, with the IEA projecting global data center electricity consumption of about 945 TWh by 2030, the cooling question gets sharper: how do we remove more heat without turning energy and water use into the new bottleneck? The answer, increasingly, is that buyers will choose systems that can grow with denser racks, faster hardware refresh cycles, and tighter sustainability goals.

What makes the next few years especially interesting is that adoption will not move in a straight line. Uptime Institute’s 2024 survey found that 22% of respondents already use direct liquid cooling, while 61% say they do not use it yet but would consider it; even so, it remains a small share of the world’s IT servers and is still concentrated in high-performance computing and AI-heavy environments. In other words, the data center chiller market is not replacing air cooling overnight; it is growing into a mixed world where liquid-ready and air-cooled systems often share the same facility.

What will actually drive data center chiller adoption in the next few years? The industry’s own answers are pretty clear: retrofit ease, lower operating costs, maintenance simplicity, and redundancy all matter, but higher rack densities and higher-powered servers still sit near the center of the decision. Uptime Institute’s 2025 survey also shows that operators care about practical fit first, with ease of retrofit ranking highest as a viability test for liquid cooling systems. That tells us future adoption will be shaped less by hype and more by how well a cooling design slips into an existing building without forcing a full rebuild.

At the same time, there are real brakes on the road. Uptime’s 2025 findings point to lack of standardization, high cost, and reliability concerns as the biggest barriers, which helps explain why many buyers still move cautiously even when the performance case is strong. The data center chiller market will likely reward vendors that make procurement feel safer, not just more ambitious, because buyers want clear standards, available parts, and predictable operating behavior before they commit to a new cooling architecture. DOE chiller purchasing guidance also reinforces that efficiency expectations now cover both full-load and part-load operation, so future buying decisions will be judged on measurable performance rather than promises.

Sustainability will keep nudging the market toward designs that run at higher water temperatures and waste less. DOE says higher chilled-water temperatures and reduced airflow can cut chiller energy use by about 20%, while LBNL notes that higher operating temperatures in liquid-cooled systems improve water and energy performance and can reduce chiller run hours. That means future adoption is likely to favor chillers that work well with free cooling, dry coolers, and water-side economizing, because those features let the weather do part of the job.

So the likely next chapter is not “air versus liquid,” but “how much of each, and where.” Uptime’s 2024 and 2025 materials both point to a long period of mixed infrastructure, where air-cooled IT hardware continues for years even as liquid cooling becomes more common in high-density zones. That is why the smartest buyers in the data center chiller market will keep favoring modular, hybrid, liquid-ready systems: they can start small, expand in phases, and still support whatever the next AI workload asks of them.