Why Agency AI Matters

Agency AI is where autonomous agents and AI-driven automation meet real business problems, and it matters because it changes how work gets done at scale. In practice, Agency AI combines task-oriented agents—software that perceives state, plans multi-step actions, and executes through APIs—with orchestration layers that manage retries, observability, and human handoffs. That combination accelerates business transformation by converting fragmented scripts and one-off automations into robust, auditable services that deliver measurable productivity gains across teams.

At its core, an agent is an autonomous program that senses inputs, maintains context, and takes actions to reach goals; we call this closed-loop autonomy. When you move from event-triggered scripts to agents, you reduce brittle point-to-point integrations and enable adaptive workflows that handle exceptions and ambiguous inputs. This is why Agency AI tends to outperform traditional RPA (robotic process automation) on workflows that require conditional reasoning, dynamic API composition, or adaptive scheduling under uncertainty.

A concrete example: imagine a SaaS operations team that orchestrates incident response, billing escalations, and customer onboarding. Instead of separate playbooks, we build an agent that watches monitoring streams, composes remediation steps, and invokes downstream services with transactional safety. The pattern looks like this:

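```python
def run_agent(goal, sense, planner, executor, policies, log, escalate_to_human):
    # Closed loop: sense, plan, execute, log, and escalate until the goal is met.
    # Collaborators are passed in so the loop itself is runnable as written.
    while not goal.completed:
        observation = sense()  # e.g. reads events, metrics, and the active ticket
        plan = planner(observation, policies)
        for step in plan:
            result = executor.call(step)
            log(step, result)
            if result.failed:
                # Hand off to a human instead of running later steps that
                # may depend on the one that failed.
                escalate_to_human(step)
                return False
    return True
```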

This code sketch shows the loop that makes the system resilient: sensing, planning, executing, logging, and escalating. In real systems the planner uses business rules, a knowledge base, and optionally an LLM for synthesis, while the executor enforces idempotency and circuit breakers.

The business impact is practical and measurable. We see faster mean time to resolution because agents take immediate corrective actions and surface only true exceptions for human review, which reduces toil and context switching. Agencies and product teams gain velocity: features that used to require manual coordination now deploy with automated verification, rollback, and compliance traces. Those productivity gains translate to lower operating costs and faster feedback cycles—key ingredients of business transformation when you must scale operations without linear headcount growth.

That said, Agency AI introduces new engineering responsibilities: governance, observability, safety, and data lineage. You should instrument agents like services—distributed tracing, structured logs, policy enforcement—and include human-in-the-loop checkpoints for high-risk decisions. When integrating with legacy systems, prefer event-driven adapters and idempotent APIs to avoid state corruption; orchestration tools and queueing systems help decouple agents from fragile backends.

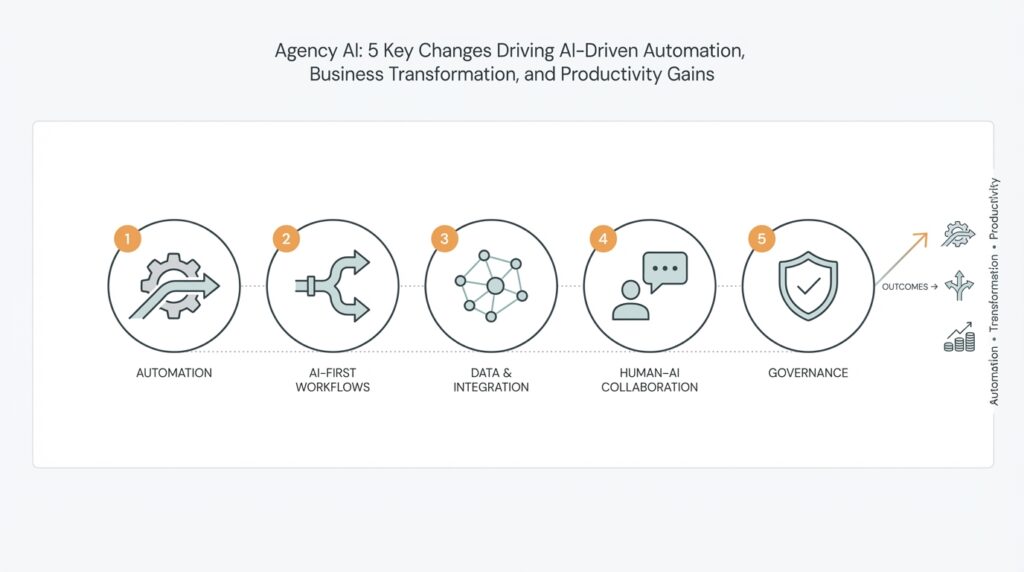

How do you know when to replace a scripted automation with an autonomous agent? Choose agents for workflows that span multiple systems, require conditional reasoning, or demand continuous adaptation to changing inputs. When you need resiliency, auditability, and the ability to generalize a workflow across customers or regions, Agency AI becomes a multiplier rather than a risk. Building on this foundation, we can now examine the five key changes that are making agent-first automation the default path for teams seeking scalable, AI-driven automation and sustainable productivity gains.

Shift to Agentic AI

Agentic AI is not an incremental upgrade; it is a tectonic shift in how you design automation and deliver outcomes. Agentic AI places autonomous agents at the center of workflows, turning brittle point-to-point scripts into resilient, goal-oriented services that can sense, plan, and act across systems. How do you decide when to move from scheduled scripts to agent-first automation? Choose it when workflows require conditional reasoning, multi-system coordination, or continuous adaptation to noisy inputs; those are the scenarios where autonomy yields disproportionate returns.

The architectural implications are immediate and practical. Instead of a single monolithic runner, you compose a lightweight planner, a policy/guardrail layer, and an executor that enforces idempotency and transactional safety; these components sit behind an orchestration fabric that handles retries, queuing, and backpressure. For example, implement a declarative task manifest (JSON/YAML) that the planner transforms into a task graph, then let the executor resolve adapters to legacy systems—this isolates fragile integrations and lets you scale the agent independently of backend load. By treating agents as services, you can apply standard service ownership patterns: CI/CD, observability, and service-level objectives.
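A declarative manifest can be turned into an executable task graph with very little code. The sketch below is a minimal illustration, assuming a hypothetical JSON manifest in which each task lists its `depends_on` prerequisites; it uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to derive one valid execution order and to reject circular dependencies before anything runs.

```python
import json
from graphlib import TopologicalSorter

# Hypothetical manifest emitted by a planner: task names and their prerequisites.
MANIFEST = """
{
  "tasks": [
    {"name": "validate_identity", "depends_on": []},
    {"name": "provision_tenant", "depends_on": ["validate_identity"]},
    {"name": "configure_billing", "depends_on": ["provision_tenant"]},
    {"name": "schedule_kickoff", "depends_on": ["validate_identity"]}
  ]
}
"""


def build_task_graph(manifest_text: str) -> dict[str, set[str]]:
    """Parse the manifest into {task: {prerequisite tasks}}."""
    manifest = json.loads(manifest_text)
    graph = {t["name"]: set(t["depends_on"]) for t in manifest["tasks"]}
    unknown = {d for deps in graph.values() for d in deps} - graph.keys()
    if unknown:
        raise ValueError(f"unknown dependencies: {sorted(unknown)}")
    return graph


def execution_order(graph: dict[str, set[str]]) -> list[str]:
    """Return one valid order; raises graphlib.CycleError on circular dependencies."""
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(execution_order(build_task_graph(MANIFEST)))
```

Because the planner only emits the manifest, you can diff, review, and version it like any other configuration; the executor then walks the resulting order and resolves each task name to an adapter.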

Developer workflows change when you adopt autonomous agents. Write unit tests for planners, integration tests against sandboxed adapters, and scenario-based tests that simulate multi-step failures. Use contract testing for API adapters and launch synthetic end-to-end runs in a staging environment that models real event streams; these tests catch subtle temporal bugs that don’t appear in single-request tests. We recommend a test harness that can replay production events with annotated golden traces so you can measure regressions in planning decisions and execution side-effects before agents touch live systems.
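A replay harness does not need much machinery to be useful. This is a minimal sketch, assuming golden traces are stored as pairs of a recorded event and the plan reviewers approved for it; `GoldenTrace`, `replay`, and the threshold-based `toy_planner` are hypothetical stand-ins for your own trace store and planner.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class GoldenTrace:
    """A recorded production event plus the plan reviewers approved for it."""
    event: dict
    expected_plan: tuple[str, ...]


def replay(planner: Callable[[dict], list[str]],
           traces: list[GoldenTrace]) -> list[dict]:
    """Run each recorded event through the planner and report divergent plans."""
    regressions = []
    for i, trace in enumerate(traces):
        actual = tuple(planner(trace.event))
        if actual != trace.expected_plan:
            regressions.append({"trace": i,
                                "expected": trace.expected_plan,
                                "actual": actual})
    return regressions


def toy_planner(event: dict) -> list[str]:
    """Hypothetical planner: restart on a high error rate, otherwise notify."""
    if event.get("error_rate", 0) > 0.05:
        return ["restart_service", "verify_health"]
    return ["notify_oncall"]
```

Running the harness in CI turns every planner change into a diff against approved behavior: an empty result means no regressions on the recorded traces, and each entry shows exactly which decision changed.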

Operational controls and governance become first-class concerns as agency increases. Insert a policy engine to validate proposed plans before execution, enforce rate limits and role-based approvals for high-risk actions, and record immutable audit trails for every planning decision and API call. For example, gate any financial or destructive action behind a human approval step or a multi-sig policy that the agent cannot bypass. Observability should include structured logs of intent, distributed traces of plan execution, and dashboards that surface drift between expected and actual behavior—this combination makes your agentic deployments auditable and safer.
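A policy check sits between planning and execution and sees the whole plan before any step runs. The sketch below is illustrative only, assuming a hypothetical `HIGH_RISK` set of action names and approvals keyed by action name; a real policy engine would bind approvals to a specific plan and step and load its rules from versioned policy files.

```python
from dataclasses import dataclass, field

# Hypothetical actions that must never run without explicit human approval.
HIGH_RISK = {"refund", "delete_tenant", "wire_transfer"}


@dataclass
class Step:
    action: str
    params: dict = field(default_factory=dict)


@dataclass
class PolicyDecision:
    allowed: bool
    needs_approval: list[str]
    reasons: list[str]


def evaluate_plan(plan: list[Step], approved: set[str],
                  max_steps: int = 20) -> PolicyDecision:
    """Validate a proposed plan as a whole before any step executes."""
    reasons: list[str] = []
    pending: list[str] = []
    if len(plan) > max_steps:
        reasons.append(f"plan has {len(plan)} steps; limit is {max_steps}")
    for step in plan:
        if step.action in HIGH_RISK and step.action not in approved:
            pending.append(step.action)
    if pending:
        reasons.append("high-risk steps require human approval")
    return PolicyDecision(allowed=not reasons, needs_approval=pending, reasons=reasons)
```

Returning the list of pending approvals, rather than a bare boolean, lets the orchestrator pause the plan and route exactly those steps to a human.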

Practical examples clarify the return on investment. Consider onboarding a new enterprise customer: an agent can validate identity documents, provision tenant resources across clouds, configure billing, and schedule a kickoff meeting—retrying failed steps, rolling back partial provisioning, and escalating only when policies demand human review. That single agent replaces multiple handoffs and reduces mean time to completion while preserving compliance and traceability. In production teams we’ve seen the move to agent-first automation cut coordination overhead and rework, because the agent enforces end-to-end contracts and error handling consistently.

Adopting this model requires changes in tooling, process, and mindset. Invest in modular adapters, robust orchestration, and a small-scope pilot that proves the value of autonomous agents before broad rollout. We should treat Agentic AI as a platform: provide teams with reusable planning primitives, policy libraries, and observability templates so product teams can safely compose agentic behaviors. Taking these steps lets you capture the productivity gains of agent-first automation while retaining control, visibility, and the ability to iterate quickly.

Orchestrate AI-Driven Workflows

Orchestrating AI-driven workflows is where agentic decision-making turns into dependable, auditable operations. Here we move from isolated agents and planners to a coordinated runtime that makes side effects effectively exactly-once, enforces policies, and surfaces human approvals when risk exceeds thresholds. Orchestration is the control plane that sequences plans, manages retries, and preserves state across long-running tasks. If you want predictable business outcomes, you must treat orchestration as an engineering discipline, not an afterthought.

Choose the right coordination model before you start wiring agents together. A centralized orchestrator simplifies visibility and makes global rollbacks and transactional semantics easier to enforce, while event-driven choreography reduces coupling and improves resilience for high-throughput paths. When should you centralize orchestration versus use choreography? Centralize when you need consistent policy enforcement, audit trails, and compensation logic across heterogeneous systems; prefer choreography when low latency, independent scaling, and eventual consistency are acceptable. Weigh operational complexity, failure modes, and regulatory constraints when making this decision.

State management and idempotency are the backbone of safe orchestration. Store plan state in an append-only task graph or durable state machine so you can resume, replay, and audit every decision; require idempotent adapters and use optimistic locking or sequence tokens when talking to legacy APIs. For long-lived flows—provisioning a tenant, processing settlements, or orchestrating incident remediation—implement compensation steps rather than relying on distributed transactions. This pattern lets you roll back partial side effects predictably and reason about failure recovery during postmortems.
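Compensation-based recovery is often called the saga pattern. The following is a minimal sketch, assuming each step is a hypothetical triple of a name, a forward action, and a compensating action; the in-memory `journal` list stands in for an append-only store, and the sketch does not retry compensations that themselves fail, which production code would need to handle.

```python
from typing import Callable

# (name, forward action, compensating action that undoes the forward action)
SagaStep = tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: list[SagaStep], journal: list[str]) -> bool:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: list[SagaStep] = []
    for name, action, compensate in steps:
        try:
            action()
            journal.append(f"done:{name}")  # append-only record for audit and replay
            completed.append((name, action, compensate))
        except Exception as exc:
            journal.append(f"failed:{name}:{exc}")
            for done_name, _, undo in reversed(completed):
                undo()
                journal.append(f"compensated:{done_name}")
            return False
    return True
```

Compensating in reverse order mirrors how the side effects were built up, so later resources (for example DNS records) are removed before the tenant they depend on.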

Observability and governance convert opaque agent behavior into operational control. Instrument intent-level events (what the planner proposed), execution-level traces (how steps executed), and outcome assertions (policy checks and approvals) so you can correlate decisions with downstream effects. Insert a policy engine that rejects risky plans, gates destructive actions behind human-in-the-loop approvals, and emits immutable audit records for compliance. We recommend surfacing drift dashboards that compare expected vs actual plans so teams can rapidly detect model or knowledge-base regressions.
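The key to correlating intent with effects is a shared identifier on every event. This sketch assumes JSON-lines logging with a per-plan `correlation_id`; the `emit` and `traced_execution` helpers and the event field names are hypothetical, and `print` stands in for whatever log shipper or tracing exporter you use.

```python
import json
import time
import uuid
from typing import Callable


def emit(kind: str, correlation_id: str, **fields) -> str:
    """Serialize one structured event; every event for a plan shares correlation_id."""
    record = {"kind": kind, "correlation_id": correlation_id, "ts": time.time(), **fields}
    line = json.dumps(record, sort_keys=True)
    print(line)  # stand-in for a log shipper or tracing exporter
    return line


def traced_execution(plan: list[str], execute: Callable[[str], bool]) -> list[str]:
    """Emit intent, per-step execution, and outcome events under one correlation ID.

    Only tracing is shown here: every step is attempted, and stopping on failure
    is left to the orchestrator.
    """
    cid = str(uuid.uuid4())
    events = [emit("intent", cid, proposed_plan=plan)]
    results = []
    for step in plan:
        ok = bool(execute(step))
        results.append(ok)
        events.append(emit("execution", cid, step=step, ok=ok))
    events.append(emit("outcome", cid, success=all(results)))
    return events
```

Filtering logs by one `correlation_id` then reconstructs the full story of a plan: what was proposed, what ran, and whether the outcome held.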

You must test orchestration as rigorously as you test services. Write unit tests for planners, contract tests for adapters, and scenario-based integration tests that replay annotated production traces through a sandboxed orchestration runtime. Create a synthetic event harness to simulate partial failures, latency spikes, and authorization errors; use golden traces to assert that compensation and retry behavior match expectations. Version your plans and use canary rollouts for significant control-plane changes so you can roll back orchestration logic without touching agent code.

Operationalizing orchestration at scale requires runtime controls and cost-aware policies. Enforce rate limits and circuit breakers at the executor layer, autoscale workers responsible for side-effectful steps, and set SLOs for plan completion latencies. Apply quota and cost ceilings to expensive downstream actions, and tag executions with business context for chargeback and auditing. Start with a small set of reusable primitives—idempotent adapters, policy templates, and observability libraries—so teams can compose workflows safely without reinventing core controls.
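Rate limiting at the executor is commonly implemented as a token bucket. The sketch below is a minimal single-process version, assuming one bucket per downstream system; `TokenBucket` is a hypothetical name, and the injectable `clock` exists so the behavior can be tested deterministically.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow `rate` calls per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

The executor calls `try_acquire()` before each side-effectful step and requeues the step with backoff when it returns `False`; a multi-process deployment would keep the bucket in shared storage instead of process memory.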

Taking orchestration seriously transforms agentic prototypes into production-grade automation. As we discussed earlier, agents give you closed-loop autonomy; orchestration gives you predictability, compliance, and operational leverage. In the next part we’ll dig into specific platform changes and primitives that make these patterns repeatable across teams, but for implementation now, prioritize durable state, clear coordination models, and tight observability so your AI-driven workflows deliver reliable business outcomes.

Modernize Data and Systems

Agents are only as reliable as the data and systems they act against; when your event streams are noisy, schemas drift, or adapters fail silently, Agency AI degrades into brittle automation. We’ve seen this happen when teams bolt LLM planners onto inconsistent sources without a canonical model—the planner makes plausible but incorrect actions and the executor pays the bill. To enable sustained agent-first automation you must treat data modernization as a core engineering effort: canonical events, contract-first schemas, and durable change-data-capture become part of the agent runtime, not an afterthought.

Start by establishing a single source of truth for intent and state. How do you migrate noisy event stores into a clean, queryable source of truth? Adopt a canonical event model and a schema registry, enforce contracts at producer and consumer boundaries, and use CDC (change data capture) instead of brittle dual-write patterns when possible. This reduces reconciliation work for planners and lets us reason about idempotency and compensation consistently; for example, plan decisions should reference the canonical entity ID and event version so you can replay decisions deterministically and correct them when needed.
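Contract enforcement and version-pinned decisions can be sketched in a few lines. This is an illustration only, assuming a hypothetical in-memory `SCHEMA_REGISTRY` in place of a real schema registry and a per-entity `entity_version` supplied by CDC; the quota rule in `decide` is a placeholder for real planning logic.

```python
from dataclasses import dataclass

# Hypothetical registry: (event_type, schema_version) -> required fields and types.
SCHEMA_REGISTRY = {
    ("customer.updated", 2): {"customer_id": str, "plan": str, "seats": int},
}


@dataclass(frozen=True)
class CanonicalEvent:
    event_type: str
    schema_version: int
    entity_id: str
    entity_version: int  # increases monotonically per entity (e.g. from CDC)
    payload: dict


@dataclass(frozen=True)
class PlanDecision:
    """A decision pinned to the exact entity state it was based on."""
    entity_id: str
    entity_version: int
    action: str


def validate(event: CanonicalEvent) -> None:
    """Reject events that do not match their registered contract."""
    schema = SCHEMA_REGISTRY.get((event.event_type, event.schema_version))
    if schema is None:
        raise ValueError(f"unregistered schema {event.event_type} v{event.schema_version}")
    for name, typ in schema.items():
        if not isinstance(event.payload.get(name), typ):
            raise ValueError(f"field {name!r} missing or not {typ.__name__}")


def decide(event: CanonicalEvent) -> PlanDecision:
    validate(event)
    action = "expand_quota" if event.payload["seats"] > 100 else "no_op"
    return PlanDecision(event.entity_id, event.entity_version, action)
```

Because each `PlanDecision` records the entity version it was based on, a replay can fetch that exact version and check whether the same decision would still be made.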

Operational adapters are where legacy systems meet modern agents, so design them as thin, idempotent facades. Implement adapters that translate canonical events into system-specific calls, apply optimistic locking or sequence tokens, and emit compensating events on failure. Use the Strangler Fig approach for systems migration: route a narrow set of capabilities to the new adapter-backed flow, validate behavior in staging with synthetic events, then expand scope. In practice, we wrap fragile APIs with an adapter that implements a retry policy, a circuit breaker, and an auditable side-effect log so the executor can assert once-and-only-once semantics before committing changes.
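An adapter that combines an idempotency log, retries, and a circuit breaker might look like the sketch below. It is a minimal, single-process illustration: `IdempotentAdapter` and `CircuitOpen` are hypothetical names, `call_api` stands in for the legacy client and is assumed to accept the idempotency key so the backend can deduplicate, and the log lives in memory where a real adapter would persist it durably.

```python
import time
from typing import Callable, Optional


class CircuitOpen(Exception):
    """Raised when the backend is considered unhealthy and calls fail fast."""


class IdempotentAdapter:
    """Wraps a fragile backend call with a side-effect log, retries, and a circuit breaker."""

    def __init__(self, call_api: Callable[[str, dict], dict], max_retries: int = 3,
                 failure_threshold: int = 5, cooldown_s: float = 30.0,
                 sleep: Callable[[float], None] = time.sleep,
                 clock: Callable[[], float] = time.monotonic):
        self.call_api = call_api  # hypothetical legacy client: (key, request) -> result
        self.max_retries = max_retries
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.sleep = sleep
        self.clock = clock
        self.side_effect_log: dict = {}  # idempotency key -> recorded result
        self.consecutive_failures = 0
        self.opened_at: Optional[float] = None

    def execute(self, idempotency_key: str, request: dict) -> dict:
        # A step that already committed returns its recorded result; no second call.
        if idempotency_key in self.side_effect_log:
            return self.side_effect_log[idempotency_key]
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                raise CircuitOpen("backend unhealthy; failing fast")
            self.opened_at = None  # cooldown over: allow a trial call (half-open)
        for attempt in range(self.max_retries):
            try:
                result = self.call_api(idempotency_key, request)
            except Exception:
                self.consecutive_failures += 1
                if self.consecutive_failures >= self.failure_threshold:
                    self.opened_at = self.clock()
                    raise CircuitOpen("failure threshold reached")
                if attempt + 1 < self.max_retries:
                    self.sleep(0.1 * 2 ** attempt)  # exponential backoff
                continue
            self.consecutive_failures = 0
            self.side_effect_log[idempotency_key] = result
            return result
        raise RuntimeError(f"gave up after {self.max_retries} attempts")
```

The local log only prevents repeat calls for steps already recorded as committed. If a call times out after the backend applied it, only backend-side deduplication on the same key prevents a double write, which is why the key is passed through to `call_api`.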

Make observability and lineage first-class artifacts so you can diagnose agent decisions end-to-end. Instrument intent-level traces (what the planner proposed), event lineage (which canonical events led to a decision), and adapter-side effects (API calls and responses). Set SLOs for data freshness and schema drift detection; when a planner’s input falls outside expected distributions, surface a clear signal that triggers a policy review or human-in-the-loop checkpoint. We find that correlating planner decisions with raw events and downstream side effects shortens MTTR and exposes knowledge-base gaps you can fix iteratively.
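Freshness and drift checks can run before the planner sees an input. The sketch below assumes hypothetical SLO values, an expected field set, and a plausible value range for a single event type; `input_health` returns human-readable reasons so that a non-empty result can trigger a policy review or human-in-the-loop checkpoint.

```python
from datetime import datetime, timedelta

# Hypothetical SLOs and contract for one planner input type.
FRESHNESS_SLO = timedelta(minutes=5)
EXPECTED_FIELDS = {"customer_id", "plan", "seats"}
SEATS_RANGE = (1, 10_000)  # values outside this range are treated as anomalous


def input_health(event: dict, now: datetime) -> list[str]:
    """Return reasons this input needs review; an empty list means healthy."""
    problems = []
    age = now - event["observed_at"]
    if age > FRESHNESS_SLO:
        problems.append(f"stale input: {age} old exceeds {FRESHNESS_SLO}")
    fields = set(event["payload"])
    if missing := EXPECTED_FIELDS - fields:
        problems.append(f"schema drift: missing {sorted(missing)}")
    if extra := fields - EXPECTED_FIELDS:
        problems.append(f"schema drift: unexpected {sorted(extra)}")
    seats = event["payload"].get("seats")
    if isinstance(seats, int) and not SEATS_RANGE[0] <= seats <= SEATS_RANGE[1]:
        problems.append(f"out of range: seats={seats}")
    return problems
```

A fixed range is the simplest distribution check; the same hook can later call a statistical test against recent traffic without changing how the planner consumes the result.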

Governance and security should be integrated with your data controls rather than bolted on afterward. Enforce role-based access and field-level encryption at the canonical event layer, and let your policy engine reject plans that would touch sensitive data without explicit approvals. For destructive or financial workflows, require multi-step confirmations recorded in the audit trail; the agent should be able to propose a plan and then pause for a signed token before executing critical steps. This pattern preserves autonomy while keeping high-risk actions within definable guardrails.
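One way to make the approval token unforgeable by the planner is to sign it. This sketch uses an HMAC from Python's standard `hmac` module, assuming a hypothetical shared `APPROVAL_KEY` and a token format of `approver:signature`; the signature binds the approver to one plan, step, and amount, so a token cannot be reused for a different or larger action. With HMAC, anything that can verify can also sign, so the key must stay with the executor and approval service and never reach the agent; asymmetric signatures (for example Ed25519) remove that constraint.

```python
import hashlib
import hmac

# Assumption: held by the executor and approval service only (e.g. loaded from a
# secrets manager) and never exposed to the agent that proposes plans.
APPROVAL_KEY = b"replace-with-a-managed-secret"


def _message(plan_id: str, step: str, amount_cents: int, approver: str) -> bytes:
    # Assumes identifiers never contain "|", so the encoding is unambiguous.
    return f"{plan_id}|{step}|{amount_cents}|{approver}".encode()


def sign_approval(plan_id: str, step: str, amount_cents: int, approver: str) -> str:
    """Issued by the approval service after a human confirms the step."""
    sig = hmac.new(APPROVAL_KEY, _message(plan_id, step, amount_cents, approver),
                   hashlib.sha256).hexdigest()
    return f"{approver}:{sig}"


def verify_approval(token: str, plan_id: str, step: str, amount_cents: int) -> bool:
    """True only if the token was signed for exactly this plan, step, and amount."""
    approver, _, sig = token.rpartition(":")
    expected = hmac.new(APPROVAL_KEY, _message(plan_id, step, amount_cents, approver),
                        hashlib.sha256).hexdigest()
    return hmac.compare_digest(sig, expected)


def execute_critical(plan_id, step, amount_cents, token, action):
    """Pause (return None) unless a valid signed approval accompanies the step."""
    if token is None or not verify_approval(token, plan_id, step, amount_cents):
        return None
    return action()
```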

Roll out changes incrementally and measure the right signals to prove value. Start with a narrow pilot—one agent, one canonical entity, and a bounded set of adapters—then expand as you gather telemetry on plan accuracy, execution errors, and business outcomes such as reduced lead time or fewer escalations. When we align data modernization, adapter design, and policy controls with the agent runtime, teams can safely scale agent-first automation across services and reduce toil without sacrificing auditability or compliance. Building this foundation lets us move confidently to broader orchestration and platform primitives that compose these patterns across the organization.

Redefine Roles and Skills

Agency AI and agent-first automation change who does what on your teams and how you measure success, not just what systems you build. AI-driven workflows require different handoffs, different checkpoints, and different expertise. How do you reorganize teams so agents own end-to-end outcomes while humans focus on exceptions, policy, and strategy? Start by treating autonomy as a new product line: define owners, SLAs, and career paths for the capability rather than for individual scripts or services.

The most immediate shift is from task operators to systems designers. Rather than hiring people who run cron jobs or maintain point-to-point integrations, hire engineers who understand planners, idempotent adapters, and orchestration fabrics. These engineers must pair API design with observability skills: they write contract tests, author distributed traces that link intent to side effects, and design compensating transactions for failures. We also need policy engineers and risk stewards who can translate legal and compliance rules into machine-enforceable checks in the policy engine.

Skill sets must tilt toward synthesis, verification, and judgment. You should prioritize expertise in prompt engineering and model evaluation, but pair it with classical software practices: unit-tested planners, synthetic event harnesses, and scenario-driven integration tests. Teach engineers to define measurable acceptance criteria for plan accuracy (precision, recall, false-positive cost) and to instrument those metrics into dashboards. Familiarity with idempotency patterns, optimistic locking, and circuit breakers becomes as important as familiarity with the underlying LLMs or planners.
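Plan-accuracy metrics reduce to a confusion matrix over reviewed decisions. This sketch assumes each decision has been labeled by a reviewer and that every false positive carries the same hypothetical cost; `LabeledDecision` and `plan_accuracy` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledDecision:
    agent_acted: bool  # the planner proposed an action
    should_act: bool   # a reviewer's ground-truth label


def plan_accuracy(decisions: list[LabeledDecision], fp_cost: float) -> dict:
    """Precision, recall, and the total cost of unnecessary actions."""
    tp = sum(d.agent_acted and d.should_act for d in decisions)
    fp = sum(d.agent_acted and not d.should_act for d in decisions)
    fn = sum(not d.agent_acted and d.should_act for d in decisions)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall,
            "false_positive_cost": fp * fp_cost}
```

Reporting the false-positive cost next to precision keeps the acceptance criterion tied to business impact: a planner with slightly lower precision may still be acceptable if its mistakes are cheap to undo.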

Operational training should be hands-on and scenario-based rather than theoretical. Create a curriculum that mixes short rotations on the agent platform with war-room exercises where teams debug a failed multi-step provisioning flow end-to-end. Use paired-programming and runbook drills to socialize human-in-the-loop procedures and approval workflows. When evaluating candidates or internal transfers, favor demonstrable outcomes: a reproducible scenario test where the candidate designs a planner, writes an adapter, and shows observability that links intent to effect.

Organizationally, separate platform ownership from product execution while keeping tight feedback loops. Run a small platform team that provides reusable planning primitives, policy libraries, and an observability stack; let product teams compose those primitives into business flows with clear guardrails. Establish a lightweight review board for high-risk plan changes and require signed tokens or multi-party approvals for destructive actions. For example, when an onboarding agent provisions cloud resources, the platform enforces quotas and the policy engine gates destructive cleanup steps, while the product owner owns completeness and customer SLAs.

The payoff is tangible: clearer roles reduce coordination overhead, shorten iteration cycles, and make deployments measurably safer. Track outcomes with business-oriented KPIs (mean time to resolution for exceptions, rate of successful plan executions, and policy-rejection rates) and fold those into career development conversations. As we move from pilots to platform-scale deployments, invest in role-specific career ladders (platform engineer, policy engineer, agent product manager) so the people who build, govern, and operate autonomous systems can grow with the capability and you can scale agent-first automation responsibly.

Measure ROI and Scale

Start by instrumenting outcomes the same way you instrument services: capture business impact, operational reliability, and incremental cost in a single telemetry plane. Tag every agent execution with a business context (customer_id, flow_id, experiment_id), record intent and outcome events, and emit cost-per-execution metrics so you can map agent behavior to ledger entries. Measuring ROI for Agency AI depends on linking measurable business outcomes (reduced lead time, higher throughput, fewer escalations) directly to agent executions and their system costs. How do you attribute savings to agents versus other improvements? Use controlled experiments and attribution tags to answer that question empirically.

Define a minimal ROI model that your stakeholders can agree on before rollout. State the baseline: current mean time to completion, headcount hours per workflow, error rates, and external costs (third-party API fees, cloud spend). Then define the agent outcome: delta in hours saved, reduction in error-handling work, and incremental run cost. A straightforward formula you can use in early pilots is ROI = (ValueDelivered - AgentCost) / AgentCost, where ValueDelivered is monetized time saved plus avoided penalties or churn. Monetize conservatively and include one-time engineering costs amortized over a realistic horizon (6–18 months) so your ROI reflects operating reality rather than optimistic projections.
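The ROI formula above translates directly into code. The sketch below is a minimal monthly version, assuming value comes only from hours saved at a loaded hourly rate plus avoided penalties, and that one-time engineering cost is spread evenly over the amortization horizon; all parameter names and example figures are hypothetical.

```python
def monthly_roi(hours_saved: float, loaded_hourly_rate: float,
                avoided_penalties: float, run_cost: float,
                one_time_cost: float, amortization_months: int) -> float:
    """ROI = (ValueDelivered - AgentCost) / AgentCost for one month."""
    if amortization_months <= 0:
        raise ValueError("amortization_months must be positive")
    value_delivered = hours_saved * loaded_hourly_rate + avoided_penalties
    agent_cost = run_cost + one_time_cost / amortization_months
    return (value_delivered - agent_cost) / agent_cost


# Hypothetical pilot: 120 hours saved at $80/h, $400 in avoided penalties,
# $2,000/month run cost, $36,000 one-time build cost.
print(monthly_roi(120, 80, 400, 2000, 36000, 12))  # 1.0
print(monthly_roi(120, 80, 400, 2000, 36000, 6))   # 0.25
```

With these figures the pilot returns 1.0 (100%) on a 12-month horizon but only 0.25 on a 6-month horizon, which shows how strongly the amortization assumption drives the result.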

Instrument three classes of metrics and make them visible in dashboards. First, business metrics: lead time, conversion, churn, and customer satisfaction signals that agents directly influence. Second, operational metrics: plan success rate, mean time to recovery, policy-rejection rate, and executor latency. Third, cost metrics: CPU/GPU hours, LLM token spend, downstream API charges, and human approval overhead. Correlate these streams so you can answer questions like “Did a 20% drop in mean time to completion come with a 30% increase in token spend?” — that visibility makes cost-vs-value tradeoffs explicit.

Run controlled experiments and incrementally expand scope. Start with A/B tests or canary rollouts where one cohort uses the agentic workflow and another uses the legacy path; keep cohorts large enough to be statistically meaningful. Use synthetic event replay to validate plan accuracy before wider traffic shifts and instrument golden traces so you can compare decision trees across versions. For high-risk actions, employ progressive rollout rules: soft-execute (simulate effects without committing), shadow mode, then gated execution with human-in-the-loop approval; measure both operational safety and business impact at each stage.
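Cohort assignment should be deterministic so a customer never flips between paths mid-experiment. The sketch below hashes a hypothetical `experiment_id` and `customer_id` into a stable bucket and sends a configurable share of customers to the current rollout stage; the `Mode` values mirror the progression described above (soft-execute, shadow, gated), and the names are illustrative assumptions.

```python
import hashlib
from enum import Enum


class Mode(Enum):
    LEGACY = "legacy"
    SOFT_EXECUTE = "soft_execute"  # simulate effects, commit nothing
    SHADOW = "shadow"              # agent runs alongside legacy; legacy result is used
    GATED = "gated"                # agent commits after human approval


def bucket(customer_id: str, experiment_id: str) -> float:
    """Stable value in [0, 1): the same customer always lands in the same bucket."""
    digest = hashlib.sha256(f"{experiment_id}:{customer_id}".encode()).digest()
    # Keep 53 bits so the division is exact and the result stays strictly below 1.
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53


def assign_mode(customer_id: str, experiment_id: str,
                stage: Mode, treatment_share: float) -> Mode:
    """Send `treatment_share` of customers to `stage`; the rest stay on legacy."""
    if bucket(customer_id, experiment_id) < treatment_share:
        return stage
    return Mode.LEGACY
```

Including `experiment_id` in the hash gives each experiment an independent split, so the same customers are not always the first to receive every new stage.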

Scale measurement with cost-aware governance and tagging. Assign each agent and plan a cost center and resource quota so you can do chargeback and optimize hot paths. Autoscale executors responsively, but enforce cost ceilings and circuit breakers to prevent runaway token or API spend. When you scale geographically or across product lines, normalize metrics (cost per transaction, time per workflow) to allow apples-to-apples comparisons; this prevents misleading conclusions when workflows vary in complexity or regulatory overhead.
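A per-cost-center ceiling can be enforced before each billable call. This is a minimal in-memory sketch, assuming estimated costs are known before the call; `CostGuard`, `BudgetExceeded`, and `cost_per_transaction` are hypothetical names, and a real deployment would persist spend and reset it each billing period.

```python
from collections import defaultdict


class BudgetExceeded(Exception):
    """Raised before a call that would push a cost center past its ceiling."""


class CostGuard:
    """Per-cost-center spend ceilings checked before each billable call."""

    def __init__(self, ceilings: dict[str, float]):
        self.ceilings = ceilings
        self.spent: dict[str, float] = defaultdict(float)

    def charge(self, cost_center: str, estimated_cost: float) -> None:
        ceiling = self.ceilings.get(cost_center)
        if ceiling is None:
            raise BudgetExceeded(f"no budget assigned to {cost_center!r}")
        if self.spent[cost_center] + estimated_cost > ceiling:
            raise BudgetExceeded(f"{cost_center} would exceed {ceiling:.2f}")
        self.spent[cost_center] += estimated_cost


def cost_per_transaction(total_cost: float, transactions: int) -> float:
    """Normalized metric for comparing regions or product lines."""
    return total_cost / transactions if transactions else 0.0
```

Rejecting calls from unbudgeted cost centers, rather than letting them run untracked, keeps every execution attributable for chargeback.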

Optimize for diminishing returns and prioritize improvements that move the needle. Use marginal analysis: if a change reduces execution time by 5% but increases token spend by 50%, it may not be worth it for low-value flows. Instead, focus optimization efforts on high-volume or high-cost workflows where small efficiency gains compound. Combine model-level improvements (smaller models for routine steps, retrieval-augmented prompts for accuracy) with system-level levers (adapter caching, idempotent batching) so you reduce both error surface and per-execution cost.
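Marginal analysis can be as simple as comparing the value of time saved with the extra spend. The sketch below assumes a hypothetical workflow-level model in which saved minutes have a fixed monetary value and token cost scales linearly with the stated increase; the figures in the usage lines are illustrative, not measurements.

```python
def net_monthly_gain(runs: int, minutes_per_run: float, value_per_minute: float,
                     token_cost_per_run: float, time_reduction: float,
                     token_increase: float) -> float:
    """Value of time saved minus extra token spend for one workflow per month.

    time_reduction and token_increase are fractions (0.05 means 5%).
    """
    time_value = runs * minutes_per_run * time_reduction * value_per_minute
    extra_tokens = runs * token_cost_per_run * token_increase
    return time_value - extra_tokens


# The same change (5% faster, 50% more tokens) applied to two hypothetical flows.
low_value = net_monthly_gain(1000, 2, 0.5, 0.4, 0.05, 0.5)
high_value = net_monthly_gain(1000, 2, 20.0, 0.4, 0.05, 0.5)
print(round(low_value, 2), round(high_value, 2))
```

With these assumed numbers the change loses about 150 per month on the low-value flow but gains about 1,800 on the high-value one, which is the pattern the paragraph above describes.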

Finally, translate measurement into governance and roadmap decisions. Feed ROI telemetry into release gating, budget allocation, and the platform roadmap so platform teams can prioritize reusable primitives that deliver the biggest business returns. Tie team incentives to measurable KPIs — successful plan execution rate, reduced manual escalations, and cost-per-outcome — so you scale Agency AI not just in throughput but in sustained, measurable value. In the next section we’ll apply these measurement patterns to platform-level primitives and governance that make agent-first automation repeatable across teams.