Set clear business goals (pwc.com)

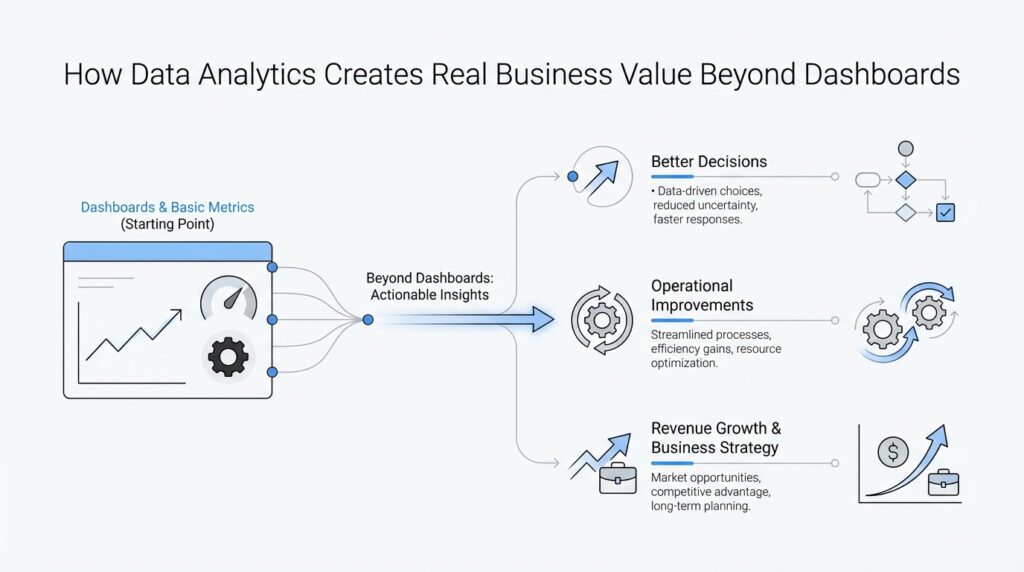

Imagine you’ve just opened your first dashboard and the numbers look impressive, but the room is still quiet because no one agrees on what those numbers are supposed to change. That is the moment when data analytics moves from being a reporting tool to becoming a business tool. When you start with business goals—like increasing revenue, improving customer experience, or reducing wasted time—you give the data a direction, and that direction is what turns information into action.

The next step is to make those goals concrete enough to steer real work. A vague goal like “improve performance” sounds ambitious, but it leaves too much room for guesswork. How do you know when you’re making progress if nobody has defined what progress looks like? This is where SMART goals help: SMART stands for specific, measurable, achievable, relevant, and time-bound. In plain language, that means your goal should say exactly what you want to accomplish, how you will measure it, whether it fits your current resources, why it matters, and when it should be done.

Now let us take that idea into a real business setting. Suppose a retail team says, “We want better customer retention.” That sounds reasonable, but it is still a foggy starting point. If the team reframes it as, “We want to reduce repeat-customer churn by 8% over the next quarter,” the work suddenly becomes much clearer. The analytics team now knows what to track, the business team knows what outcome matters, and both sides can test whether their efforts are working. That is the practical value of data analytics: it helps you connect numbers to outcomes instead of collecting numbers for their own sake.
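
As a minimal sketch, the reframed retention goal can be turned into a check the team runs each period. The function names and sample counts below are hypothetical, and the sketch reads the 8% target as a relative reduction from the baseline churn rate, which is only one possible interpretation.

```python
def churn_rate(customers_at_start: int, customers_lost: int) -> float:
    """Share of customers present at the start of the period who left during it."""
    if customers_at_start <= 0:
        raise ValueError("customers_at_start must be positive")
    return customers_lost / customers_at_start


def target_met(baseline_rate: float, current_rate: float, relative_reduction: float) -> bool:
    """True when current churn is at or below the baseline cut by the given fraction."""
    target_rate = baseline_rate * (1 - relative_reduction)
    return current_rate <= target_rate


# Hypothetical counts, for illustration only.
baseline = churn_rate(customers_at_start=2000, customers_lost=250)  # 250 / 2000 = 0.125
current = churn_rate(customers_at_start=2000, customers_lost=228)   # 228 / 2000 = 0.114
goal_reached = target_met(baseline, current, relative_reduction=0.08)
```

Writing the goal this way forces the team to agree on the baseline, the measurement window, and what "8%" means before any dashboard is built.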

This clarity matters because business goals act like a compass when trade-offs appear. Without them, teams can chase flashy dashboards, compare mismatched reports, or spend months on analysis that never touches a real decision. With them, you can prioritize the use cases that are most likely to create value, pilot ideas on a smaller scale, and establish key performance indicators, or KPIs, which are the specific measures you use to check whether a goal is being met. PwC notes that organizations create more value when they align analytics initiatives with business objectives, focus on high-impact use cases, and tie KPIs to specific outcomes.

So when you ask, “What should we measure first?” the best answer is usually the question behind the question: what business result are we trying to change? Start there, and everything else becomes easier to choose, from the data you collect to the reports you build to the experiments you run. In that sense, setting clear business goals is not a planning exercise that happens before analytics; it is the first act of analytics itself, because it tells the data where to go and tells your team what success actually looks like.

Map KPIs to outcomes (pwc.com)

KPI mapping is where analytics starts to feel less like a scoreboard and more like a steering wheel. A KPI, or key performance indicator, is the specific number you watch to see whether a goal is moving in the right direction, while an outcome is the business result you actually care about, such as higher retention, faster growth, or lower cost. How do you know whether a dashboard number matters? You trace it back to the outcome it is supposed to change. PwC stresses that analytics creates the most value when KPIs are tied to specific business outcomes.

The easiest way to think about this is to imagine a recipe. The finished meal is the outcome, and the heat, timing, and ingredient measurements are the KPIs that help you get there. If you only watch the oven light, you may feel busy, but you still will not know whether dinner is turning out well. In the same way, a business can track dozens of numbers and still miss the point if those numbers do not connect to a result the team is trying to change.

Once that idea clicks, the next step is to separate leading indicators from lagging indicators. A leading indicator is a measure that moves early, like cart abandonment, call wait time, or qualified leads; a lagging indicator is the later result, like revenue, churn, or repeat purchases. Mapping KPIs to outcomes means you use both kinds together, so you can see whether today’s actions are setting up tomorrow’s result instead of waiting until the quarter ends to find out what happened. That is also why PwC recommends identifying high-impact use cases first and using a pilot-led approach to test value before scaling.

Now that we understand the structure, let us make it concrete. Suppose your outcome is reducing customer churn, which means the rate at which customers stop doing business with you. The KPIs might be first-response time, issue resolution rate, and repeat-contact rate, because those measures sit close to the customer experience that influences whether people stay or leave. If first-response time improves but churn does not move, that tells you the mapping is incomplete or the real problem lives somewhere else, such as product quality or pricing. This is the practical power of KPI mapping: it helps you test whether your analytics work is aimed at the right lever.
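
The check described above (KPIs improve, outcome does not) can be sketched in a few lines. The `MetricChange` class, the 2% movement threshold, and the sample values are all assumptions made for illustration, not part of the PwC guidance.

```python
from dataclasses import dataclass


@dataclass
class MetricChange:
    name: str
    before: float
    after: float
    lower_is_better: bool

    def improved(self, min_relative_change: float = 0.02) -> bool:
        """True when the metric moved in the good direction by at least the threshold."""
        if self.before == 0:
            return False  # relative change is undefined without a nonzero baseline
        change = (self.after - self.before) / abs(self.before)
        if self.lower_is_better:
            return change <= -min_relative_change
        return change >= min_relative_change


def review_mapping(kpis: list[MetricChange], outcome: MetricChange) -> str:
    """Classify a KPI map by whether the KPIs and the outcome moved together."""
    if outcome.improved():
        return "outcome moving"
    if any(kpi.improved() for kpi in kpis):
        return "revisit mapping"  # KPIs improved but the outcome did not follow
    return "no movement yet"


# Hypothetical pilot results.
kpis = [MetricChange("first_response_hours", before=12.0, after=8.0, lower_is_better=True)]
churn = MetricChange("churn_rate", before=0.125, after=0.124, lower_is_better=True)
status = review_mapping(kpis, churn)
```

A "revisit mapping" result is the signal to look at other levers, such as product quality or pricing.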

Taking this concept further, the best KPI maps do not stop at one number. They show a small chain of cause and effect, starting with a controllable activity, moving through an operational measure, and ending with the business outcome. For example, sales calls made may influence conversion rate, which then influences revenue; on the service side, resolved cases may influence satisfaction, which then influences retention. PwC highlights this same logic when it recommends analyzing cost savings, revenue generation, customer experience, and process improvement together rather than treating analytics as a single isolated report.
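
The sales chain above can be made concrete with simple funnel arithmetic. This is a sketch under a strong simplifying assumption (revenue is calls times conversion rate times an average deal value); the function name, the average deal value, and the numbers are hypothetical.

```python
def expected_revenue(calls_made: int, conversion_rate: float, average_deal_value: float) -> float:
    """Activity -> operational measure -> outcome, as a single multiplication."""
    return calls_made * conversion_rate * average_deal_value


# Hypothetical scenario: same activity level, conversion improves by one point.
current_revenue = expected_revenue(calls_made=400, conversion_rate=0.05, average_deal_value=2000.0)
improved_revenue = expected_revenue(calls_made=400, conversion_rate=0.06, average_deal_value=2000.0)
uplift = improved_revenue - current_revenue
```

Even a rough chain like this shows which link the team is betting on, so the KPI map can name it explicitly.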

This is where teams begin to work with more confidence. Instead of asking, “What can we measure?” they ask, “Which measures prove we are changing the business?” That shift keeps analytics grounded, keeps pilots focused, and gives everyone a shared way to judge whether the work is paying off. Once the KPI map is clear, the next conversation becomes much sharper: which data do we need, who owns each measure, and what experiment will tell us whether the outcome is really moving?

Prioritize high-value use cases (pwc.com)

The next step in data analytics is not to build more reports; it is to choose the few high-value use cases that can actually move the business. That is where analytics starts to feel less like a mirror and more like a lever. When you ask, “Which problem, if solved, would create the most business value right now?” you begin separating busywork from real impact. PwC recommends starting with specific pain points and opportunities, then identifying and piloting the analytics ideas most likely to create value.

This is an important shift because not every promising idea deserves immediate attention. A team can spend months polishing a model, cleaning a dashboard, or debating edge cases, yet still miss the work that would matter most to customers or the bottom line. Taking this concept further, PwC suggests using a pilot-led approach and measuring maximum viable value (MVV), which means estimating the biggest practical value a pilot can deliver before you commit to scaling it. In plain language, we are looking for the use cases that can prove their worth early, not the ones that merely sound impressive in a meeting.

So how do you decide what rises to the top? First, we trace each idea back to the outcome it influences, then we ask whether that influence is likely to show up in savings, revenue, customer experience, or process improvement. A use case that reduces manual rework across a common workflow may be more valuable than a flashy model that only helps once a quarter. PwC specifically calls out those four value areas—cost savings, revenue generation, customer experience enhancement, and process improvement—as the lenses to use when prioritizing analytics initiatives.
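
One way to apply the four value areas is a rough scoring pass over candidate use cases. The 0-to-5 scores, the effort scale, and the value-per-effort formula below are assumptions of this sketch; PwC names the four areas but does not prescribe a formula.

```python
from dataclasses import dataclass

VALUE_AREAS = ("cost_savings", "revenue", "customer_experience", "process_improvement")


@dataclass
class UseCase:
    name: str
    scores: dict[str, int]  # value area -> estimated impact, 0 (none) to 5 (high)
    effort: int             # 1 (small pilot) to 5 (large program)

    def priority(self) -> float:
        """Total estimated value across the four areas, divided by effort."""
        total_value = sum(self.scores.get(area, 0) for area in VALUE_AREAS)
        return total_value / self.effort


def rank(use_cases: list[UseCase]) -> list[UseCase]:
    """Highest value per unit of effort first."""
    return sorted(use_cases, key=lambda use_case: use_case.priority(), reverse=True)


# Hypothetical candidates.
candidates = [
    UseCase("quarterly forecasting model", {"revenue": 3, "customer_experience": 1}, effort=4),
    UseCase("manual rework reduction",
            {"cost_savings": 4, "process_improvement": 5, "customer_experience": 2}, effort=2),
]
ranked_names = [use_case.name for use_case in rank(candidates)]
```

The scores are estimates, so the ranking is a conversation starter rather than a verdict.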

Now that the filter is clearer, the next question is: what does a good high-value use case look like in practice? Imagine a customer service team drowning in repeat calls. If analytics can show which issues are causing the most repeat contact, the team can fix the underlying friction instead of treating symptoms one call at a time. That kind of use case is powerful because it connects a controllable operational change to a business result the whole organization cares about, which is exactly the kind of link that makes analytics useful beyond the dashboard.

PwC’s examples make this idea feel very real. In one case, a global insurance company wanted to modernize its data capabilities over several years, but it focused first on the lowest-hanging, highest-value opportunities, starting with enhanced underwriting analytics before changing the underlying technical infrastructure. In another, a multinational hospitality company built an innovation hub to explore customer-data use cases that would not have surfaced through a standard backlog approach. The lesson is not that every company should copy those exact moves; it is that high-value use cases often live in the work closest to the business pain, where a small pilot can prove value before a larger investment begins.

That is why prioritization matters so much. Without it, analytics teams can end up chasing the loudest request, the easiest dataset, or the most visually exciting chart. With it, they can choose the use cases that are likely to change behavior, improve a process, or unlock revenue sooner. PwC also emphasizes establishing clear KPIs tied to specific business outcomes, because once a promising use case is selected, those measures become the way you tell whether the pilot is actually creating value.

In practice, this means you are not asking analytics to do everything at once. You are asking it to do the right thing first, in the part of the business where the payoff is clearest and the learning will travel furthest. That mindset keeps the work grounded, keeps the investment easier to defend, and gives your team a better chance of turning data analytics into something the business can feel, not just observe.

Turn insights into action (sloanreview.mit.edu)

The real test of data analytics is what happens after the insight appears. A dashboard can tell you that customers are slipping away, but business value begins when someone knows exactly what to do next: call the right customers, fix the service bottleneck, or change the offer before the quarter slips by. MIT Sloan’s research makes this point clearly: organizations need to know not only what happened, but what actions should be taken to get the right result.

That is why turning insights into action is less about producing prettier charts and more about wiring the insight into the work itself. Think of it like a recipe that does not stay on the page; it moves into the kitchen, where someone measures, stirs, tastes, and adjusts. In analytics terms, you give each insight an owner, a trigger, and a next step, so the recommendation arrives where decisions are actually made. When that structure is missing, even a strong model can become an interesting fact with no follow-through.
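
The owner, trigger, and next-step structure can be written down as a small record. This is a hedged sketch: the `InsightRule` class, the 15% repeat-contact threshold, and the owner name are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class InsightRule:
    insight: str
    owner: str
    trigger: Callable[[float], bool]  # decides whether the signal needs action
    next_step: str

    def evaluate(self, value: float) -> Optional[str]:
        """Return the assigned action when the trigger fires, otherwise None."""
        if self.trigger(value):
            return f"{self.owner}: {self.next_step}"
        return None


# Hypothetical rule for a service team.
repeat_contact_rule = InsightRule(
    insight="Repeat contacts are rising",
    owner="support-team-lead",
    trigger=lambda repeat_rate: repeat_rate > 0.15,
    next_step="review the top repeat-contact issues and escalate the root cause",
)

action = repeat_contact_rule.evaluate(0.22)
no_action = repeat_contact_rule.evaluate(0.10)
```

If any of the three fields is hard to fill in, the insight is probably not ready to drive a decision yet.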

This is where business processes matter. MIT Sloan’s research says the fastest path to value comes from embedding insights into business operations, because the big opportunity lives at the operational level, where everyday decisions happen. Imagine a service team that sees an alert when repeat contacts spike; instead of waiting for a monthly report, the team can reroute the issue, adjust scripts, or escalate a root cause right away. That same pattern works in sales, inventory, and finance: the insight becomes a prompt that changes behavior in the moment it matters.
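
The repeat-contact alert in that example could be as simple as comparing today's count with a recent average. The function below is an illustrative sketch; the 1.5x ratio and the sample counts are assumptions, and a real alert would likely need to account for seasonality and volume.

```python
from statistics import mean


def is_spike(history: list[float], today: float, ratio: float = 1.5) -> bool:
    """True when today's value exceeds the recent average by the given ratio."""
    if not history:
        return False  # no baseline to compare against yet
    return today > ratio * mean(history)


# Hypothetical daily repeat-contact counts for the previous five days.
recent_counts = [40, 42, 38, 41, 39]
alert_today = is_spike(recent_counts, today=65)
quiet_day = is_spike(recent_counts, today=50)
```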

The next step is to start small enough to learn quickly. MIT Sloan’s recommendations emphasize pinpointing the insight first, using readily available data to test the model, and letting the resulting actions reveal the next data you need, because starting with a full data program can slow down time to value. In practice, that means a pilot should answer one sharp question: if this signal is useful, what will we do differently tomorrow? A pilot is not a miniature science project; it is a practical rehearsal for the real business change you want to scale.

Once the pilot shows promise, the work shifts to making the new behavior routine. That usually means assigning a clear process owner, defining the decision threshold, and checking the outcome on a regular cadence so the team can tell whether the action is working or needs tuning. If the insight keeps producing good decisions, you expand it; if not, you revise the trigger, the workflow, or the metric until the connection between analytics and business value is stronger. MIT Sloan’s guidance to focus on big business issues and keep pressing to embed insights into operations points in exactly this direction.

So when someone asks, “How do you turn analytics into action?” the answer is: by making the insight travel. It should move from the model to the meeting, from the meeting to the workflow, and from the workflow to a measurable business result. Once that loop is in place, data analytics stops being something the team looks at and becomes something the business uses.

Embed analytics in workflows (sloanreview.mit.edu)

The real work begins where dashboards end and daily routines begin. When an insight sits in a report, it is easy to admire; when analytics is embedded in a workflow, the same insight reaches the person at the moment a decision is made. MIT Sloan says analytics-driven insights need to be linked to strategy, easy for end users to understand, and embedded into organizational processes so action happens at the right time.

How do you make embedded analytics real? By treating the workflow like a river with checkpoints. At each checkpoint (a customer support ticket, a credit approval, an inventory reorder, or a sales follow-up), you decide what signal should appear, who should see it, and what action should follow. That structure matters because insights only create value when they are consumed and turned into action, not when they remain a static output, a point that follows from MIT Sloan’s emphasis on embedding insights into business processes.

This is why the MIT Sloan article recommends starting from the business objective, then defining the specific insight or question needed, and only then identifying the data. That may feel backwards if you are used to a data-first project, but it is a faster path to value because you can use readily available data, test an initial model, and learn where the real gaps are. In other words, embedded analytics is less about building a giant analytical shrine and more about putting a useful answer inside the exact step where someone is already working.

The next piece is timing. An insight that arrives after a customer has left, a shipment has been delayed, or a loan decision has already been made is interesting, but it is no longer operationally useful. MIT Sloan highlights manufacturing, new product development, credit approvals, and call center interactions as examples of places where analytics must be infused into everyday work, because those are the moments when behavior can still change. That same logic applies whether the workflow is digital or human: the insight has to meet the decision while the decision is still alive.

Once the insight is inside the process, the workflow needs a few simple guardrails. Someone should own the decision, the trigger should be clear, and the expected action should be visible enough that people do not have to guess. MIT Sloan also recommends proof-of-value pilots and an implementation roadmap, which means you can start with a narrow workflow, show that it changes outcomes, and then expand it with confidence. That pilot mindset keeps embedded analytics practical, because it proves that the workflow changes behavior before the organization commits to scaling.

Taking this concept further, the goal is not to make every workflow feel technical; it is to make every important workflow feel a little more intelligent. When an alert, recommendation, or next-best action appears at the right moment, analytics stops being a separate reporting exercise and becomes part of how the business runs. MIT Sloan’s core message is that organizations should keep focusing on the big business issue, solve what analytics can solve today, and keep pressing to embed the insight into operations. That is the bridge from a good model to a useful business habit.

Measure impact and refine (pwc.com)

The moment of truth arrives after the pilot is live and the insight is already flowing through the workflow. This is where data analytics stops being a promising idea and starts proving business value. You are no longer asking whether the model looks smart; you are asking whether it changed anything that matters, such as revenue, customer experience, cost, or speed. That question is the heart of measuring impact, and it is what keeps analytics tied to results instead of opinions.

The first step is to compare the new result to a clear baseline, which is the starting point you measured before the change began. Think of it like checking your route on a road trip: if you do not know where you started, you cannot tell whether you are moving in the right direction. How do you know if analytics improved performance? You look at the same KPI, over the same time window, under similar conditions, and ask what changed after the new action went into place. That keeps the conversation grounded in evidence rather than a gut feeling about success.
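
A baseline comparison of this kind can be sketched as follows. The function name, the equal-length-window rule, and the weekly first-response times are assumptions chosen for illustration.

```python
from statistics import mean


def compare_to_baseline(before: list[float], after: list[float]) -> dict:
    """Compare the same KPI over two windows of equal length."""
    if not before or len(before) != len(after):
        raise ValueError("windows must be non-empty and the same length")
    baseline = mean(before)
    current = mean(after)
    return {
        "baseline": baseline,
        "current": current,
        "absolute_change": current - baseline,
        # Relative change is undefined when the baseline is zero.
        "relative_change": (current - baseline) / baseline if baseline else None,
    }


# Hypothetical weekly first-response times in hours, four weeks before and after.
result = compare_to_baseline(before=[12.0, 11.0, 13.0, 12.0], after=[9.0, 10.0, 9.0, 8.0])
```

Requiring windows of equal length is a small guard against comparing, say, a full quarter with a single good week.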

Once that comparison is in place, the next challenge is to separate real impact from coincidence. A dashboard can show that a number went up, but that does not always mean the analytics caused it. Maybe a seasonal spike helped, maybe a marketing campaign changed demand, or maybe a process fix happened at the same time. This is why measuring business value often means looking at patterns across segments, comparing pilot groups with non-pilot groups, and checking whether the change is strong enough to matter in practice. The goal is not perfect certainty; it is a believable case that the insight helped move the business.
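
One simple way to compare pilot and non-pilot groups is a difference-in-differences calculation. That technique is a choice made for this sketch rather than something the source prescribes, and it assumes both groups would have moved alike without the pilot. The churn rates are hypothetical.

```python
def difference_in_differences(
    pilot_before: float,
    pilot_after: float,
    control_before: float,
    control_after: float,
) -> float:
    """Change in the pilot group minus the change in the non-pilot group."""
    pilot_change = pilot_after - pilot_before
    control_change = control_after - control_before
    return pilot_change - control_change


# Hypothetical churn rates: both groups improved, the pilot group by more.
effect = difference_in_differences(
    pilot_before=0.125,
    pilot_after=0.105,
    control_before=0.125,
    control_after=0.120,
)
```

Here the non-pilot group absorbs whatever seasonal or campaign effect hit everyone, so the remaining difference is a more believable estimate of what the pilot itself changed.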

This is also where small signals become important. A single KPI rarely tells the whole story, so we look at the chain around it. If a customer service pilot lowers first-response time but does not improve retention, we learn that the intervention may be too narrow or the outcome may take longer to appear. If a sales recommendation increases conversion but reduces order quality, we learn that the metric improved in one place while creating friction somewhere else. Measuring impact in analytics is really about reading the full story, not just the headline.

Now that we understand what changed, refinement begins. Refinement means taking what the pilot taught you and adjusting the model, the process, or the KPI map so the next version works better. Maybe the signal needs a different threshold, maybe the workflow needs a clearer owner, or maybe the original use case was too broad to produce a clean result. That kind of tuning is not a sign that the work failed; it is how business analytics becomes sharper with each cycle. The best teams treat every pilot as a lesson that makes the next decision more precise.

Taking this concept further, the most useful refinements usually come from combining numbers with human feedback. The people using the insight can tell you whether it arrived at the right time, whether the recommendation made sense, and whether the action was realistic inside their daily work. That perspective matters because even a strong model can miss the practical details of how people actually operate. When you pair performance data with user feedback, you get a much clearer picture of what to keep, what to adjust, and what to retire.

Over time, this loop becomes a habit: measure, compare, learn, refine, and measure again. That is how data analytics creates lasting business value beyond dashboards, because the organization keeps improving the way it acts, not just the way it reports. Each round makes the next one more focused, more credible, and more useful to the business. And once that rhythm is in place, the conversation naturally shifts toward how to scale what worked and bring the same discipline to the next opportunity.