Token Classification Basics

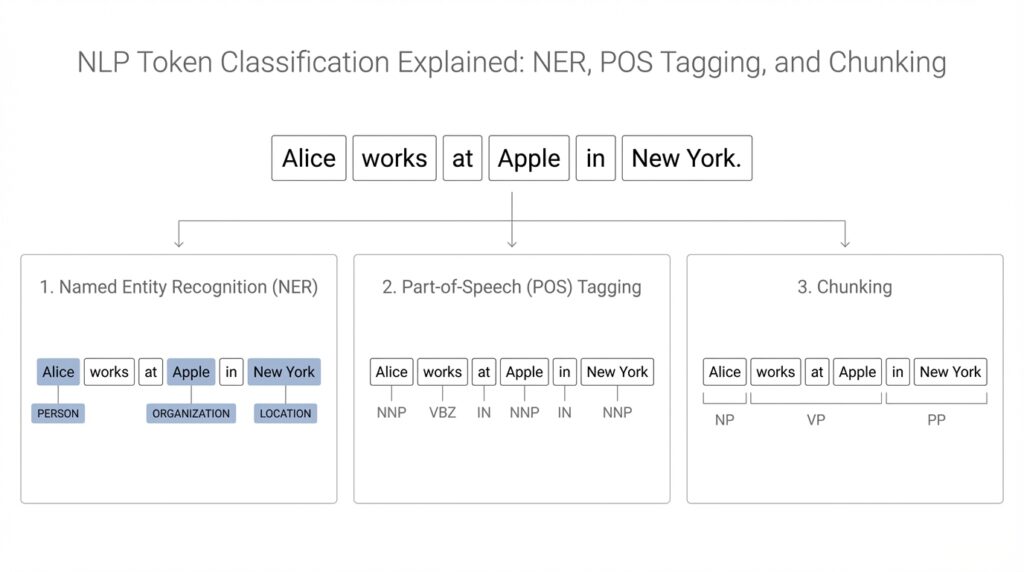

Building on this foundation, token classification is the moment where an NLP model stops reading a sentence like a whole and starts looking at it token by token. A token is a piece of text the model works with, often a word, but sometimes a smaller word piece like New and ##York after tokenization, which means splitting text into model-friendly units. The core idea is wonderfully practical: instead of giving one label to an entire sentence, we give a separate label to each token. That is why tasks like named entity recognition, part-of-speech tagging, and chunking all live in the same family of problems.

Think of it like a teacher grading every answer on a worksheet, not the worksheet as a single block. Each token gets its own classification label, and those labels tell us what role the token plays in the sentence. In named entity recognition, a token might be marked as a person, location, or organization. In part-of-speech tagging, the same token might be labeled as a noun, verb, adjective, or something else, which helps the model understand grammar rather than meaning.

So how do you tell a model where one concept begins and another ends? That is where token classification becomes more precise. A sentence such as “Sarah moved to New York” may look simple to us, but the model has to decide whether Sarah is a person, whether New and York belong together, and whether moved is a verb. For sequence labeling tasks like NER, models often use schemes such as BIO tags, which stand for Beginning, Inside, and Outside. These tags help the model mark the first token of an entity and then continue that entity across the following tokens without losing track.

This is also where chunking quietly enters the scene. Chunking groups tokens into small phrases, often called chunks, such as noun phrases or verb phrases, so the model can see structure in a sentence without building a full grammar tree. If token classification is like labeling each brick in a wall, chunking is like noticing which bricks form a doorway, a window, or a section of the wall. That same idea applies to part-of-speech tagging too, because the tag on each token gives clues about how words fit together and why certain phrases feel complete.

Now that we understand the labels, it helps to see why tokenization matters so much. When a word gets split into multiple pieces, the model still has to assign labels in a consistent way, and that can feel tricky at first. Should the label go on the first piece only, or on every piece? Different systems handle that detail differently, but the goal stays the same: make sure the final token classification output matches the real structure of the sentence. This is one reason token classification is more than a simple word-matching exercise; it is a careful alignment between text, labels, and meaning.

Once this clicks, the bigger picture starts to come into focus. Token classification is not about memorizing words; it is about teaching a model to notice roles, boundaries, and relationships inside language. Whether we are asking it to recognize names, identify grammar, or carve a sentence into useful chunks, the model is learning to attach meaning to each token one step at a time. That careful, token-by-token habit is what makes the later tasks feel less mysterious and much more connected.

NER, POS, and Chunking

Building on this foundation, we can now watch token classification do something more specific and more useful in practice. NER, POS tagging, and chunking all ask the model to look at each token, but they are listening for different kinds of signals. Named entity recognition, or NER, looks for real-world things like people, places, and companies. Part-of-speech tagging, or POS tagging, asks what grammatical job each word is doing. Chunking, in turn, gathers nearby tokens into small phrase-sized groups so the sentence starts to feel less like a stream of words and more like a set of building blocks.

If you have ever wondered, “How do you teach a model to tell a name from a normal noun?” NER is the answer. In a sentence like “Apple hired Maria in London,” the model has to decide that Apple is an organization, Maria is a person, and London is a location, even though each of those words can appear in other roles elsewhere. That is what makes named entity recognition so valuable: it does not just see words, it learns which words point to concrete things in the world. In many real applications, NER is the layer that turns raw text into something searchable, sortable, and easier to analyze.

POS tagging works from a different angle, and that difference matters. Instead of asking what something refers to, POS tagging asks how the word behaves inside the sentence. A word like “book” can be a noun in one sentence and a verb in another, so the tag helps the model read the sentence the way a grammar-aware person would. Think of POS tagging like assigning every actor a role in a play: one word may be the subject, another may be the action, and another may be the description that ties the scene together. That grammatical scaffolding is one reason POS tagging still shows up in many NLP systems, even when the end goal is broader language understanding.

Chunking sits between those two ideas, and that is where it becomes especially helpful. Rather than labeling a full parse tree, chunking groups tokens into short phrases such as noun phrases or verb phrases, which makes the sentence easier to digest without demanding deep syntax analysis. For example, in “The quick brown fox jumped over the fence,” chunking can help identify “The quick brown fox” as one phrase and “jumped over the fence” as another. You can think of it like packing a suitcase: instead of handling every item separately, chunking bundles related items together so the structure becomes clearer at a glance.

Taking this concept further, the same sentence can carry all three layers of meaning at once. “New York City welcomed visitors” may contain a named entity, a grammatical pattern, and a phrase boundary that all overlap, and the model has to keep those roles distinct. That is why token classification feels subtle at first. A token can belong to an entity, play a grammatical role, and live inside a chunk all at once, much like a person can be a parent, an employee, and a neighbor at the same time. The labels are not competing; they are describing different views of the same sentence.

So what does that mean in practice? It means NER, POS tagging, and chunking are not just academic labels; they are tools that help downstream systems make better decisions. Search engines can use them to understand queries more precisely, chat systems can use them to interpret user intent, and information extraction pipelines can use them to turn prose into structured data. Once you see them side by side, token classification stops feeling like a single task and starts looking like a set of lenses. Each lens reveals a different kind of order, and together they help the model read language with far more care than a simple word-by-word scan.

Tagging Schemes and Labels

Building on this foundation, the next question is the one that turns token classification from a neat idea into a working system: how do we decide which labels exist, and how do they mark the edges of meaning? That is what tagging schemes and labels are for. A tagging scheme is the rulebook, and a label is one of the tags inside that rulebook. In practice, this means we are not only saying “this token is part of an entity,” but also deciding whether it begins that entity, continues it, or stands outside it altogether. If you have ever wondered, “How do you teach a model where a phrase starts and ends?” this is the part that answers that question.

The easiest way to picture this is to imagine a set of sticky notes with different colors. One color might mean “this token starts a name,” another might mean “this token is still inside the same name,” and a third might mean “this token is unrelated.” The labels give the model a compact way to describe structure without drawing a full sentence diagram. That is why schemes such as BIO, which stands for Beginning, Inside, and Outside, are so common in token classification. They make the model’s job more precise, because each token carries not only a category, but also a position within that category.

Once we start using labels this way, consistency becomes the real challenge. A sentence can look obvious to us, but annotation often gets tricky at the borders. Should “New York City” be one location label across three tokens, or should each word be tagged separately? Should a hyphenated company name count as one organization or two pieces that belong together? These decisions are not random; they come from annotation guidelines, which are the written instructions human labelers follow so that the dataset stays uniform. Without those guidelines, the same sentence might be labeled two different ways by two different people, and the model would learn confusion instead of structure.

This is also where the difference between the scheme and the actual label set starts to matter. The scheme defines the pattern, while the label set defines the categories that fit inside that pattern. For example, in named entity recognition, the categories might include person, organization, and location, so the full tag inventory becomes something like B-PER, I-PER, B-ORG, I-ORG, and so on. The “B” and “I” parts come from the scheme, while “PER” and “ORG” come from the task itself. That separation is useful because it keeps the structure of tagging reusable even when the meaning of the labels changes.

Now the story becomes more interesting when we bring subword tokenization back into the picture. A single word may split into smaller pieces, and the labeling system still has to stay faithful to the original sentence. In many pipelines, the first piece carries the main label while the later pieces either repeat it or receive a special handling rule, depending on the framework being used. This sounds technical, but the underlying idea is simple: we want the model to preserve the boundary of the original word or phrase, even if the tokenizer has broken it apart. That alignment between text and labels is one of the quiet but essential parts of tagging schemes.

In real projects, the choice of scheme affects both training and interpretation. A simpler scheme may be easier to annotate and learn, while a richer one can capture boundaries more cleanly. Some tasks use BIO because it is straightforward, while others use variants like BILOU, which adds more detail by marking the beginning, inside, last, and outside positions. The point is not to memorize every acronym right away. The point is to see that labels are doing two jobs at once: they tell the model what something is, and they tell it where that thing lives in the sentence.

When you step back, that is the real power of tagging schemes and labels. They turn language into a pattern the model can follow, one token at a time, without losing the shape of the original sentence. Once that structure is in place, the model can move more confidently through named entity recognition, part-of-speech tagging, and chunking, because it now has a clear map for what belongs together and what stands apart.

Preparing Training Data

Before a model can learn token classification, it needs examples that feel like carefully packed suitcases: the right text, the right labels, and nothing left to guess. That is what preparing training data is really about. You are not feeding the model a pile of sentences and hoping it discovers structure on its own; you are showing it, token by token, what counts as a person, a verb, a phrase boundary, or a word outside the pattern. How do you prepare training data for named entity recognition, part-of-speech tagging, or chunking without creating confusion? We start by collecting sentences that reflect the kind of language the model will actually see later.

That first step matters more than it might seem, because training data is the model’s classroom. If all your examples come from news articles, the model may struggle with chat messages, product reviews, or medical notes. If your sentences are too short or too tidy, the model may miss the messy rhythm of real language. So we want a balanced mix: clear examples for learning, but also enough variety to teach the model how language behaves in different situations. This is where annotation guidelines become essential, because they give human labelers one shared rulebook instead of many private interpretations.

Once the sentences are chosen, we move into annotation, which is the careful process of assigning labels to each token. Here, consistency is the hidden hero. One person might think “New York City” should be tagged as a single location across three tokens, while another might split it differently unless the instructions are precise. That is why good training data for token classification includes examples, edge cases, and definitions for tricky moments like hyphenated names, abbreviations, or words that can play more than one role. The clearer the guidelines, the less the model learns from noise and the more it learns from pattern.

Then comes the part that often surprises beginners: the labels must line up with the tokenizer. A tokenizer, as we discussed earlier, is the tool that breaks text into model-friendly pieces, and sometimes one word becomes several subword units. If a label belongs to a word that splits apart, you need a rule for how that label travels across the pieces. Some workflows attach the label to the first subword and ignore the rest, while others repeat the label or mark the extra pieces in a special way. This alignment step is a small technical detail with big consequences, because a mismatch here can quietly weaken the whole token classification model.

After labeling and alignment, we need to separate the data into training, validation, and test sets. The training set teaches the model, the validation set helps us tune it while it is learning, and the test set gives us a final, honest check at the end. It is important that these sets do not overlap, because we want to know whether the model truly learned the pattern or merely memorized the sentences. If the same names, phrases, or documents appear everywhere, the results can look better than they really are, which is a common trap when people are new to preparing training data.

We also want to look for balance before we start training. If one label appears thousands of times and another appears only a handful of times, the model may become lazy and favor the common case. That is why token classification datasets often need a close look for label distribution, missing tags, and annotation mistakes. A quick review of a few samples from each class can reveal problems that raw counts hide. In practice, this quality check is the difference between a dataset that teaches and a dataset that merely exists.

When all of these pieces come together, the picture becomes much clearer. Preparing training data is not a mechanical chore at the edge of the project; it is the part that shapes everything the model will later understand about NER, POS tagging, and chunking. Clean text, consistent labels, careful token alignment, and thoughtful dataset splits give the model a stable path to follow. Once that foundation is in place, the learning process becomes far less mysterious, because the model is finally being taught with examples that make sense.

Fine-Tuning a Model

Building on this foundation, fine-tuning is the moment when a pretrained model stops being a general reader and starts becoming a token classification specialist. How do you fine-tune a model for NER, POS tagging, or chunking? You continue training a model that already understands broad language patterns on a smaller dataset that matches your task. Hugging Face describes fine-tuning as continuing training a pretrained model on a smaller, task-specific dataset, and notes that it usually needs less compute, data, and time than training from scratch.

The next step is choosing the right model shape for the job. For token classification, the common choice is AutoModelForTokenClassification, which adds a classification layer on top of a pretrained checkpoint so the model can predict a label for each token. You also provide id2label and label2id mappings, which are like a bilingual dictionary between human-readable tags and the numbers the model uses internally. That matters because the model learns with numbers, but you need the output to read like O, B-PER, or I-ORG.

Now we come back to the part that quietly makes or breaks the whole process: alignment. A tokenizer can split one word into several subwords, so the labels must follow that split without losing the original meaning. The official workflow uses word_ids to map each token back to its source word, assigns -100 to special tokens so the loss function ignores them, and keeps the label on the first subtoken while skipping the rest. Think of it like placing one name tag on the front of a suitcase instead of sticking the same tag on every zipper and seam.

Once the labels are aligned, TrainingArguments and Trainer take over the training loop. TrainingArguments controls the run, and the Hugging Face guide highlights settings such as output_dir, learning_rate, per_device_train_batch_size, num_train_epochs, eval_strategy, save_strategy, and load_best_model_at_end. Trainer then bundles the model, datasets, tokenizer, data collator, and compute_metrics function into one place, so you can train without wiring every step by hand. For token classification, DataCollatorForTokenClassification helps pad batches dynamically, and seqeval reports precision, recall, F1, and accuracy during evaluation.

That is where fine-tuning starts to feel practical instead of abstract. When the model is learning well, NER turns raw text into entity spans, POS tagging turns words into grammatical roles, and chunking turns token-by-token labels into phrase-sized structure. After training, you can save the model locally or push it to the Hub with trainer.push_to_hub, which makes it easy to reuse the finished model for inference later. With that training loop in place, the model is ready to move into inference, where we can see those learned tags appear on fresh text.

Evaluating Predictions

Building on this foundation, evaluation is where we stop applauding the model and start checking its work. In token classification, we compare predicted tags against gold labels, which are the human-verified answers in the dataset, because a model can sound confident and still miss the real boundaries in a sentence. How do you know whether your NER, POS tagging, or chunking model is actually useful? You measure it with a sequence-labeling metric, and Hugging Face’s recommended path is to load seqeval through the Evaluate library, where it reports precision, recall, F1, and accuracy.

The next step is to turn raw model outputs into something the metric can understand. A token classification model produces scores for each possible label, and the usual move is to pick the highest-scoring label for every token. Before scoring, though, we need to convert numeric ids back into label strings and remove positions marked -100, which Hugging Face uses to ignore special tokens and other positions that should not affect training or evaluation. That alignment step matters because seqeval expects label strings, not raw integers, so evaluation stays faithful to the sentence the way a person would read it.

Once that cleanup is done, the metrics start telling a story. Precision asks how often the model was right when it predicted a label, recall asks how much of the true structure it managed to recover, and F1 gives us a balanced view of both. Scikit-learn describes F1 as the harmonic mean of precision and recall, which makes it useful when you want one score that rewards both care and completeness. Accuracy is still reported by seqeval, but in token classification we usually lean on F1 because it gives a clearer picture of how well the model is handling the labeled spans we care about.

A single score is helpful, but it can also hide a lot of small dramas. One model may do well on PERSON entities and struggle with ORG, or it may find most entities but keep missing the last token in a phrase. That is why it helps to look at class-by-class results, not only the overall number. seqeval includes a classification_report, which gives a text summary of the main classification metrics and helps you see where the model is strong, where it hesitates, and where it is repeatedly confusing one label for another.

That closer look is often where the most useful learning happens. If the model tags New York correctly but misses City, you are probably seeing a boundary problem rather than a complete failure. If it labels a token as part of an entity when it should be outside, that often points to a confusing example in the training data or an alignment issue between tokenization and labels. So evaluation is not just a final exam; it is also a flashlight. It shows you which part of the pipeline needs another pass, and it helps you decide whether the next improvement should come from cleaner annotations, better label alignment, or a stronger model checkpoint.