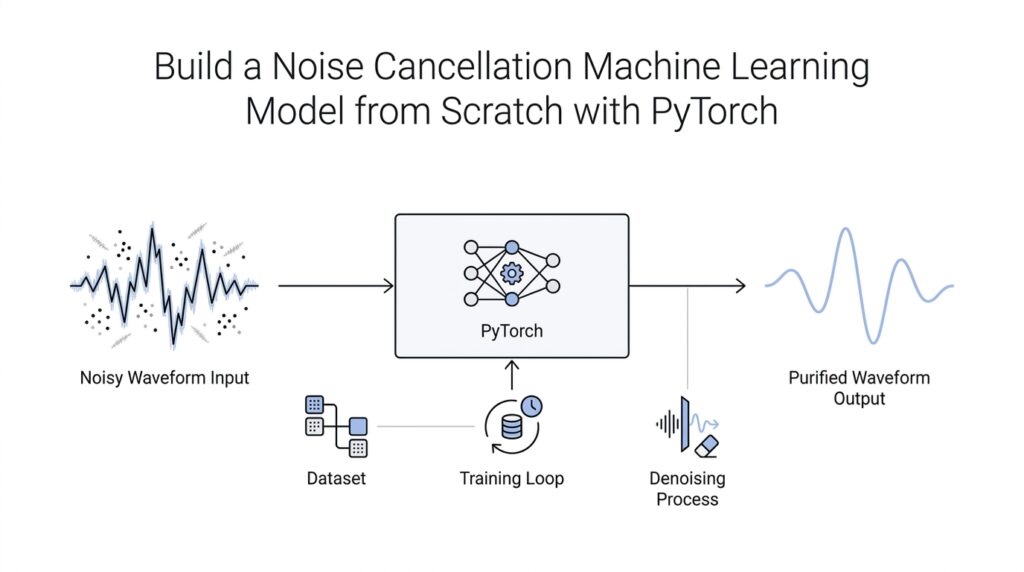

Create Paired Audio Data

How do you train a model to remove noise if it never sees the same sentence twice? The answer is to build paired audio data: one version of a sound clip with noise, and one version of the same clip kept clean. For a noise cancellation machine learning model, this pairing is the bridge between raw audio and something PyTorch can learn from. It gives the model a clear before-and-after story, which is exactly what we need at the start of this journey.

Think of it like handing a student both a messy page and the corrected answer sheet. The messy page is the input, which includes speech plus background noise. The answer sheet is the target, which contains only the clean speech you want the model to recover. When we create paired audio data, we are teaching the model that these two clips belong together, sample by sample, so it can learn what to keep and what to remove.

That pairing only works if the clips line up properly. Audio is a stream of numbers, and those numbers represent sound over time, so even a small shift can confuse the training process. If the noisy clip starts a fraction of a second earlier than the clean clip, the model may learn the wrong relationship and treat timing errors as noise. To avoid that, we align both versions so they start at the same moment. Then we trim or pad them to the same length, so each pair covers the same time window of speech.

In practice, paired audio data usually comes from two paths. Sometimes you already have clean recordings and mix in extra noise yourself, which is a common way to create training pairs when real-world data is limited. Other times you collect real noisy speech and separately obtain a clean reference recording of the same sentence. The first method gives you more control, while the second feels more like the real world, and both can help a noise cancellation machine learning model learn useful patterns.

This is also where signal-to-noise ratio, or SNR, enters the picture. SNR compares the power of the useful sound with the power of the unwanted noise, and it is usually expressed in decibels (dB). A high SNR means the speech is easier to hear. A low SNR means the noise is strong relative to the voice, and at negative SNR values the noise is actually louder than the speech. By mixing clean audio at different SNR values, we create a range of difficulty levels, which helps the model learn to handle everything from a quiet hum to a crowded room.
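Here is a minimal sketch of mixing at a chosen SNR. It assumes `clean` and `noise` are float tensors of the same shape, and it uses the definition SNR_dB = 10 · log10(P_clean / P_noise), where P is the mean squared sample value. `mix_at_snr` is a hypothetical helper written for this example, and the random tensors only stand in for real recordings.

```python
import torch


def mix_at_snr(clean: torch.Tensor, noise: torch.Tensor, snr_db: float) -> torch.Tensor:
    """Hypothetical helper: scale `noise` so that clean + noise has the requested SNR (dB).

    Assumes `clean` and `noise` share a shape such as (channels, samples).
    """
    if clean.shape != noise.shape:
        raise ValueError("clean and noise must have the same shape")
    # Average power = mean of squared sample values
    clean_power = clean.pow(2).mean()
    noise_power = noise.pow(2).mean().clamp_min(1e-12)  # guard against silent noise
    # SNR_dB = 10 * log10(P_clean / P_noise)  ->  noise power needed for this SNR
    target_noise_power = clean_power / (10.0 ** (snr_db / 10.0))
    gain = torch.sqrt(target_noise_power / noise_power)
    return clean + gain * noise


# Build several difficulty levels from one clean clip (placeholder tensors)
torch.manual_seed(0)
clean = torch.randn(1, 16000)
noise = torch.randn(1, 16000)
pairs = [(mix_at_snr(clean, noise, snr), clean) for snr in (20.0, 10.0, 0.0)]
```

Each tuple keeps the noisy mixture next to the exact clean clip it was built from, so the pairing comes for free.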

Once the pairs are built, we store them in a format PyTorch can work with, often as tensors, which are the multidimensional arrays of numbers that neural networks read. At this stage, it helps to keep the noisy input and clean target organized by filename or shared index so they never get separated. If you ever ask, “How do I prepare audio for a noise cancellation model?” this matching step is the heart of the answer. The model cannot learn to clean what it cannot compare, and paired audio data gives it that comparison on every training example.
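One way to keep the pairs from drifting apart is a small `torch.utils.data.Dataset` that looks both tensors up by the same key. The class name `PairedAudioDataset` and the use of filename stems as keys are assumptions made for illustration. The sketch stores tensors in memory, but it could just as well load files lazily.

```python
from typing import Dict, Tuple

import torch
from torch.utils.data import Dataset


class PairedAudioDataset(Dataset):
    """Hypothetical dataset that returns (noisy, clean) tensors sharing one key,
    e.g. a filename stem such as "utt_0001"."""

    def __init__(self, noisy: Dict[str, torch.Tensor], clean: Dict[str, torch.Tensor]):
        unpaired = set(noisy) ^ set(clean)  # keys present on only one side
        if unpaired:
            raise ValueError(f"Clips without a partner: {sorted(unpaired)}")
        self.keys = sorted(noisy)  # fixed order: index i always refers to the same pair
        self.noisy = noisy
        self.clean = clean

    def __len__(self) -> int:
        return len(self.keys)

    def __getitem__(self, idx: int) -> Tuple[torch.Tensor, torch.Tensor]:
        key = self.keys[idx]
        return self.noisy[key], self.clean[key]
```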

There is one more practical detail worth keeping close: consistency matters. The same sample rate, which is the number of audio snapshots taken per second, should apply to both clips so the model sees time in a uniform way. The same channel format should also be used, whether that means mono, which is a single audio channel, or stereo, which is two channels. Once these pieces are in place, we have a dependable dataset that turns noisy sound into a teachable pattern, and that sets us up perfectly for the next step of shaping those clips into model-ready inputs.

Preprocess Waveform Samples

Now that the noisy and clean clips are paired, we can preprocess the waveform samples so PyTorch receives them in one consistent form. How do we preprocess waveform samples for PyTorch without erasing the timing clues the model needs? The answer is to turn each clip into a consistent waveform, meaning the raw sound values laid out over time. We then shape those values into a tensor, which is PyTorch’s name for a multi-dimensional array of numbers. At this stage, we are not trying to make the audio sound prettier; we are trying to make it predictable enough that the model can learn from it.

The first thing we usually do is load the audio into memory in a form the model can work with. In TorchAudio, torchaudio.load() returns a waveform tensor and a sample rate. By default (normalize=True), integer-encoded audio is converted to float32 values in the range [-1.0, 1.0]. This helps because files stored in different formats, such as 16-bit or 24-bit PCM, arrive on one shared numeric scale instead of a messy mix of integer ranges. That gives us a clean starting point for noise cancellation machine learning preprocessing. Note that this rescales the units, not the loudness: a quiet recording still has small values after loading. If the raw sample values of one file run into the tens of thousands while another's stay below 1, differing storage formats are often the reason, and this early standardization removes that difference.
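TorchAudio does this conversion internally. The sketch below only illustrates the idea for 16-bit integer PCM, where dividing by 2**15 = 32768 maps the stored range [-32768, 32767] into [-1.0, 1.0). `pcm16_to_float` is a hypothetical helper, and other bit depths would use their own divisor.

```python
import torch


def pcm16_to_float(samples: torch.Tensor) -> torch.Tensor:
    """Hypothetical helper: map int16 PCM samples to float32 in [-1.0, 1.0)."""
    if samples.dtype != torch.int16:
        raise TypeError("expected int16 samples")
    return samples.to(torch.float32) / 32768.0  # 2**15


raw = torch.tensor([[-32768, -16384, 0, 16384, 32767]], dtype=torch.int16)
print(pcm16_to_float(raw))
# Scaling changes the units, not the loudness: a quiet clip keeps small values.
```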

From there, we make the timing consistent. The sample rate is the number of snapshots of sound taken each second, so two clips with different sample rates are like two videos recorded with different frame rates; they may describe the same event, but their numbers do not line up naturally. TorchAudio provides resampling utilities that use bandlimited interpolation, and that gives us a practical way to convert everything to one shared sample rate before training. This step matters because a model cannot compare time fairly if one waveform is measured in tighter slices than another.

Once the sample rate matches, we turn to length. Training works best when each example has the same number of frames, because the DataLoader's default batching combines the tensors of a batch with torch.stack(), and torch.stack() requires every tensor in the group to be the same size. That is why we usually trim extra audio from long clips and pad shorter ones with silence until they fit a fixed window. We apply the same cut and the same padding to both members of a noisy/clean pair. Think of it like cutting and filling index cards so every card has the same shape before we place them in a box. The point is not to destroy content, but to give the model a stable canvas for learning.
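Here is a minimal sketch of that fixed-window step. It assumes (channels, frames) tensors and a target of 16,000 frames, which is one second at an assumed 16 kHz. `pad_or_trim` is an illustrative helper, not a TorchAudio function. It keeps the start of each clip, so call it with the same `num_frames` on a noisy clip and its clean partner.

```python
import torch
import torch.nn.functional as F


def pad_or_trim(waveform: torch.Tensor, num_frames: int) -> torch.Tensor:
    """Hypothetical helper: crop or zero-pad a (channels, frames) waveform to num_frames."""
    length = waveform.shape[-1]
    if length >= num_frames:
        return waveform[..., :num_frames]             # trim the tail
    return F.pad(waveform, (0, num_frames - length))  # append silence (zeros) at the end


clips = [torch.randn(1, 12_000), torch.randn(1, 20_000), torch.randn(1, 16_000)]
fixed = [pad_or_trim(c, 16_000) for c in clips]
batch = torch.stack(fixed)  # succeeds only because every clip now has the same shape
print(batch.shape)  # torch.Size([3, 1, 16000])
```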

We also keep an eye on channels, because waveform samples can arrive as mono—one audio channel—or stereo—two channels, usually left and right. TorchAudio reports the number of channels along with the number of frames, so we can decide early whether to keep both channels or fold them into a single mono stream. For a first noise cancellation machine learning model, one channel is often easier to reason about, because it removes one more source of variation from the data. The important part is consistency: every noisy input and every clean target should follow the same channel format so the network does not have to guess what kind of audio it is seeing.
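Averaging the channels is one common way to fold stereo into mono. The sketch assumes the (channels, frames) layout that torchaudio.load() returns by default. `to_mono` is a hypothetical helper name.

```python
import torch


def to_mono(waveform: torch.Tensor) -> torch.Tensor:
    """Fold a (channels, frames) waveform into one channel by averaging the channels."""
    if waveform.dim() != 2:
        raise ValueError("expected shape (channels, frames)")
    return waveform.mean(dim=0, keepdim=True)  # keepdim keeps the (1, frames) layout


stereo = torch.stack([torch.ones(8), torch.zeros(8)])  # left = 1.0, right = 0.0
mono = to_mono(stereo)
print(mono.shape)  # torch.Size([1, 8])
```

Keeping the channel dimension, even when it is 1, means mono and stereo inputs share one code path downstream.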

By the time we finish preprocessing the waveform samples, each pair should feel boring in the best possible way: same sample rate, same duration, same channel layout, and the same numeric range. That boredom is a gift, because it lets the model focus on the real puzzle, which is separating speech from noise instead of wrestling with mismatched file formats. When we reach for the next part of the pipeline, we want our noisy input and clean target to behave like twins that were measured with the same ruler.

Extract Spectrogram Features

At this point, the audio is lined up and predictable, and now we need to turn sound into something the model can read like a map. If you have ever wondered how to extract spectrogram features for a noise cancellation model, this is the step where a waveform stops feeling like a long ribbon of numbers and starts behaving like a structured image. In PyTorch, that usually begins with a spectrogram, which shows how frequency content changes over time. A spectrogram is built from the short-time Fourier transform (STFT), a way of slicing audio into small overlapping windows before measuring their frequency makeup.

The good news is that the transform is doing something very human-friendly: it looks at a tiny stretch of sound, asks what frequencies are hiding inside it, then moves forward and asks again. That sliding-window idea is exactly what the STFT does, and PyTorch documents that torch.stft computes frequency components of short overlapping windows as they change over time. It also tells us that the number of frequency bins depends on n_fft, the FFT size, and that a real-valued input with onesided=True produces n_fft // 2 + 1 frequency bins.

That is why the two knobs you reach for first are n_fft and hop_length. n_fft controls how finely the frequency axis is divided, while hop_length controls how far the window moves between snapshots, which changes the time resolution. TorchAudio’s tutorial shows the same idea in practice: larger n_fft values give more frequency detail, and hop_length affects how many time frames you get. The catch is that a larger n_fft also means a longer analysis window (win_length defaults to n_fft), so each frame averages over more time and fast changes get blurred. If the settings feel like tuning a camera, that is a helpful analogy: n_fft changes how sharp the scene is, and hop_length changes how often you take each picture.
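To make those knobs concrete, this sketch runs `torch.stft` on one second of placeholder audio at an assumed 16 kHz, using two illustrative settings, and reports the output shape. It uses a Hann window and the default `center=True`. With real input, the one-sided output has `n_fft // 2 + 1` frequency bins and `1 + num_samples // hop_length` frames.

```python
import torch

sample_rate = 16_000
waveform = torch.randn(sample_rate)  # one second of placeholder audio (1-D)


def stft_shape(n_fft: int, hop_length: int) -> torch.Size:
    """Return (freq_bins, time_frames) for a Hann-windowed, one-sided STFT."""
    spec = torch.stft(
        waveform,
        n_fft=n_fft,
        hop_length=hop_length,
        window=torch.hann_window(n_fft),
        return_complex=True,  # complex output; use .abs() for magnitude
    )
    return spec.shape


print(stft_shape(512, 128))   # torch.Size([257, 126])
print(stft_shape(1024, 256))  # torch.Size([513, 63]): finer frequency, fewer frames
```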

There is one more setting that quietly makes a big difference: the window function. PyTorch warns that leaving the window unspecified can default to a rectangular window, which may create unwanted artifacts, and it recommends tapered windows such as torch.hann_window(). In plain language, the window is the soft-edged frame you place around each slice of audio so the model sees a smoother transition instead of a hard cut. When we are preparing spectrogram features for a noise cancellation machine learning model, that little frame helps the frequency picture look less jagged and more trustworthy.
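The effect of the window can be measured directly. This sketch analyzes a single frame of a pure tone that sits halfway between two frequency bins, which is the worst case for leakage. It then reports how much energy lands more than five bins away from the peak, once with a rectangular window (all ones) and once with `torch.hann_window`. The tone frequency, the five-bin guard band, and the helper name `far_leakage` are all choices made for this illustration.

```python
import math

import torch

n_fft, sample_rate = 512, 16_000
bin_hz = sample_rate / n_fft               # 31.25 Hz per frequency bin
freq = 32.5 * bin_hz                       # tone halfway between two bins
t = torch.arange(n_fft, dtype=torch.float32) / sample_rate
tone = torch.sin(2 * math.pi * freq * t)


def far_leakage(window: torch.Tensor, guard_bins: int = 5) -> float:
    """Fraction of spectral energy more than `guard_bins` bins away from the peak."""
    spec = torch.stft(tone, n_fft=n_fft, hop_length=n_fft, window=window,
                      center=False, return_complex=True)
    power = spec.abs().pow(2).squeeze(-1)  # one frame -> shape (n_fft // 2 + 1,)
    peak = int(power.argmax())
    bins = torch.arange(power.numel())
    far = (bins - peak).abs() > guard_bins
    return (power[far].sum() / power.sum()).item()


rect = far_leakage(torch.ones(n_fft))      # equivalent to leaving the window unspecified
hann = far_leakage(torch.hann_window(n_fft))
print(f"rectangular: {rect:.4f}  hann: {hann:.6f}")
```

The rectangular window spreads a noticeable share of a single tone's energy across distant bins. In a denoiser's input, that smear is exactly the kind of artifact that could be mistaken for noise.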

Once we have the spectrogram, we still have to decide how much of it we want the model to stare at. A magnitude spectrogram keeps the strength of each frequency, while a power spectrogram squares that strength; TorchAudio’s Spectrogram transform lets you choose the exponent with the power argument, and if you set it to None, you get the complex spectrum instead. That choice matters because different models like different kinds of clues. For a first noise cancellation machine learning project, many people start with magnitude or power features because they are easier to inspect and feed into a network.

Very often, we then compress the range with a log-like scale. AmplitudeToDB turns amplitude or power values into decibels (dB), a logarithmic unit that makes large and small values easier to compare visually and numerically. TorchAudio notes that the result depends on the maximum value in the input tensor, because the optional top_db cutoff is measured down from the loudest value. As a result, the same clip can look slightly different if you split it into smaller snippets first. That is why it helps to apply the same feature-extraction pipeline to both the noisy input and the clean target, so the model is learning from matching views of the same moment.

If you want a more compact representation, you can also move from normal frequency bins to the mel scale, a frequency scale designed to space bands more like human hearing. TorchAudio’s MelScale turns a normal STFT into a mel frequency STFT using triangular filter banks, and MelSpectrogram is built from Spectrogram() plus MelScale(). That makes mel spectrogram features a neat shortcut when you want fewer frequency bands and a smoother, speech-friendly view of the signal. The tradeoff is that you give the model a more compressed picture, so the right choice depends on whether you care more about detail or compactness.

So when we extract spectrogram features, we are not decorating the audio—we are translating it. We take the waveform we already cleaned up, slice it into overlapping windows, turn those windows into frequency frames, and then optionally compress or rescale them until the numbers are ready for PyTorch. That gives us a clean bridge from raw sound to learnable input, and it sets us up to pair those noisy and clean feature maps in the next stage of the pipeline.

Define the Denoiser Model

At this point, we have turned sound into a cleaner, more structured representation, and now we need the part of the system that learns the actual rescue plan. The denoiser model is the neural network that takes a noisy input and predicts what the clean speech should look like, which is the heart of a noise cancellation machine learning model. If you are asking, “How do we define the denoiser model in PyTorch?”, the answer starts with choosing a network that can read the noisy features we already prepared and learn the difference between clutter and speech.

Think of the model as a careful listener with two jobs at once: first, notice the patterns in the noisy signal, and then decide which parts belong to the voice and which parts belong to the noise. In practice, we usually build this kind of model as an encoder-decoder, which is a network that compresses information into a smaller internal form and then rebuilds it on the other side. The encoder acts like a scanner that gathers clues, while the decoder acts like a restorer that turns those clues back into a cleaner version of the audio. That shape works well for a denoiser model because noise cancellation is really a story of separation and reconstruction.

So what does the model actually receive? Since we just extracted spectrogram features, the input is often a noisy spectrogram, and the target is the matching clean spectrogram from the paired audio data. That gives the network a very direct lesson: here is the messy version, here is the clean version, and your job is to learn the mapping between them. In a noise cancellation machine learning model, this mapping matters more than memorizing one specific file, because we want the model to generalize to new voices, new rooms, and new kinds of background noise.

When we define the denoiser model in PyTorch, we also decide how much complexity we want in the network. A smaller model may use a stack of convolutional layers, which are layers that slide across the input and look for local patterns, much like tracing a finger over the ridges of a map. That is helpful because speech has repeating structures, and noise often breaks those structures in recognizable ways. A deeper model can capture richer relationships, but it also needs more data and more care, so we start with a shape that is powerful enough to learn without becoming overwhelming to train.
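As an illustration only, here is one small convolutional encoder-decoder in that spirit. It assumes spectrogram inputs shaped (batch, 1, freq_bins, time_frames). The class name `ConvDenoiser`, the layer sizes, and the final crop are assumptions of this sketch, not a prescribed architecture.

```python
import torch
from torch import nn


class ConvDenoiser(nn.Module):
    """Small convolutional encoder-decoder that predicts a clean spectrogram directly."""

    def __init__(self, hidden: int = 16):
        super().__init__()
        # Encoder: pick up local patterns, then halve the freq/time resolution
        self.encoder = nn.Sequential(
            nn.Conv2d(1, hidden, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(hidden, 2 * hidden, kernel_size=3, stride=2, padding=1),
            nn.ReLU(),
        )
        # Decoder: upsample back and map to a single output channel
        self.decoder = nn.Sequential(
            nn.ConvTranspose2d(2 * hidden, hidden, kernel_size=4, stride=2, padding=1),
            nn.ReLU(),
            nn.Conv2d(hidden, 1, kernel_size=3, padding=1),
        )

    def forward(self, noisy: torch.Tensor) -> torch.Tensor:
        # noisy: (batch, 1, freq_bins, time_frames)
        out = self.decoder(self.encoder(noisy))
        # Odd sizes (e.g. 257 bins) come back one larger; crop to match the input shape
        return out[..., : noisy.shape[-2], : noisy.shape[-1]]


print(ConvDenoiser()(torch.randn(2, 1, 257, 126)).shape)  # torch.Size([2, 1, 257, 126])
```

The strided convolution compresses the picture and the transposed convolution rebuilds it. The crop guarantees that the output can be compared with a target of the same shape.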

Another design choice is whether the model predicts the clean signal directly or predicts a mask, which is a set of values that tells us how much of each part of the noisy input to keep. Predicting a mask can feel like dimming the unwanted parts of a photo instead of redrawing the entire picture from scratch. Both approaches can work, but masking often gives the denoiser model an easier task because it learns to adjust what is already there rather than inventing everything anew. That can make the noise cancellation machine learning model easier to stabilize, especially when you are still learning the pipeline.
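A minimal masking sketch follows, assuming the input is a non-negative magnitude spectrogram shaped (batch, 1, freq_bins, time_frames). A sigmoid keeps every mask value between 0 and 1, so the output can only dim the noisy input, never amplify it. `MaskDenoiser` and its two-layer mask network are hypothetical choices made to keep the example short.

```python
import torch
from torch import nn


class MaskDenoiser(nn.Module):
    """Predicts a mask in (0, 1) and multiplies it into the noisy magnitude spectrogram."""

    def __init__(self, hidden: int = 16):
        super().__init__()
        # Any shape-preserving network works; two 3x3 convolutions keep the sketch short
        self.mask_net = nn.Sequential(
            nn.Conv2d(1, hidden, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(hidden, 1, kernel_size=3, padding=1),
        )

    def forward(self, noisy_mag: torch.Tensor) -> torch.Tensor:
        mask = torch.sigmoid(self.mask_net(noisy_mag))  # share of each time-frequency bin to keep
        return mask * noisy_mag                          # adjust what is there, don't redraw it


noisy_mag = torch.rand(2, 1, 257, 126)  # placeholder non-negative magnitudes
estimate = MaskDenoiser()(noisy_mag)
print(estimate.shape)  # torch.Size([2, 1, 257, 126])
```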

We also need to make the model’s output match the form of the training target. If the target is a clean spectrogram, then the final layer should produce something with the same shape, so we can compare the two and measure the error. That comparison is what teaches the model, because the network sees where its prediction differs from the clean reference and updates itself to close the gap. In other words, the denoiser model is not magical; it is a pattern-finding machine that improves by repeatedly comparing noisy guesses with clean truth.

A good way to keep the design grounded is to remember the one question the model must answer every time: which parts of this signal are useful speech, and which parts are noise? Once we frame the task that way, the architecture choices start to make sense, from the layers we stack to the output we expect. The denoiser model is the brain of the noise cancellation machine learning model, and with its shape defined, we are ready to connect it to a loss function and let training begin.

Train on Noisy Inputs

Once the paired clips are ready, training starts with a very human question: how do we train on noisy inputs without teaching the model to copy the noise? In PyTorch, we answer that by switching the network into training mode with model.train(), then feeding it batches from a DataLoader, which is the tool PyTorch uses to iterate over training data in chunks. That is the moment the noise cancellation machine learning model stops being a diagram and becomes a student, working through batches of noisy examples and trying to match each one to the clean reference we prepared earlier.

The next step is to let the data arrive in batches instead of one clip at a time. A batch is a small group of examples that the model sees together, and DataLoader can also shuffle those examples so the model does not get too comfortable with one fixed order. That matters because we want the noise cancellation machine learning model to learn the pattern of speech under changing noise, not the rhythm of the dataset itself. When each noisy input stays matched to its clean target inside the same dataset entry, the model gets a fair comparison every time.

Inside the training loop, the noisy tensor goes forward through the model, and the model returns a prediction of what it thinks the clean audio should look like. Then we measure the gap between prediction and target with a loss function, which is PyTorch’s way of scoring error. For denoising, nn.MSELoss is a common starting point because it measures the mean squared error between the predicted tensor and the clean tensor, and it expects the two shapes to match. In plain language, we are asking, “How far did the model drift from the clean answer on this batch?”

That score only becomes useful when we turn it into learning signal. Before each batch, we clear old gradients with optimizer.zero_grad(), because PyTorch accumulates gradients by default, and leaving the old ones in place would blend yesterday’s mistake with today’s lesson. After the loss is computed, we call loss.backward() to trace how each parameter contributed to the error, and then optimizer.step() to update the weights. If you are wondering why the order matters, this is the reason: zero first, learn from the current batch, then move the weights a little closer to the clean target.
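The loop described above can be sketched as follows. Several pieces are placeholders chosen only for illustration: the tiny two-layer model, the synthetic tensors, Adam with `lr=1e-3`, and the helper name `train_one_epoch`. Swap in your own paired dataset and denoiser.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset


def train_one_epoch(model, loader, optimizer, loss_fn):
    """One pass over the training set; returns the mean batch loss."""
    model.train()                          # training behaviour for Dropout/BatchNorm
    total = 0.0
    for noisy, clean in loader:            # inputs and targets arrive together, already paired
        optimizer.zero_grad()              # clear gradients left from the previous batch
        prediction = model(noisy)          # the model's guess at the clean signal
        loss = loss_fn(prediction, clean)  # MSELoss expects matching shapes
        loss.backward()                    # gradients for this batch only
        optimizer.step()                   # nudge the weights toward the clean target
        total += loss.item()
    return total / len(loader)


# Synthetic stand-in data: 64 "spectrograms" of shape (1, 32, 32)
torch.manual_seed(0)
clean = torch.rand(64, 1, 32, 32)
noisy = clean + 0.1 * torch.randn_like(clean)
loader = DataLoader(TensorDataset(noisy, clean), batch_size=8, shuffle=True)

model = nn.Sequential(
    nn.Conv2d(1, 8, kernel_size=3, padding=1), nn.ReLU(),
    nn.Conv2d(8, 1, kernel_size=3, padding=1),
)
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
losses = [train_one_epoch(model, loader, optimizer, nn.MSELoss()) for _ in range(5)]
print(losses)
```

`TensorDataset` indexes both tensors with the same index, so shuffling reorders whole pairs and never separates a noisy clip from its target.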

A nice way to picture this is to imagine the model wearing headphones in a busy room. Each noisy input is a practice round where the model hears the whole mess, makes a guess, and then compares that guess with the clean version we already know. Over many batches, the weights start to favor patterns that survive the noise, like the steady shape of vowels or consonants, while less useful clutter gets pushed aside. That repeated adjustment is the heart of training a noise cancellation machine learning model in PyTorch: not one perfect guess, but many small corrections guided by the same clean truth.

During validation, we change the mood of the model. We call model.eval() so modules that behave differently in training and inference, such as Dropout and BatchNorm, switch into evaluation behavior, and we wrap the forward pass in torch.no_grad() so PyTorch stops tracking gradients and saves memory. This is the quieter part of the journey, where we stop teaching and start listening to whether the model actually cleans up sound it has never seen before. The noisy input still matters here, but now it is a test rather than a lesson.
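A minimal validation sketch, assuming the same (noisy, clean) batches as in training. `validate` is a hypothetical helper. It averages the loss per example, weighting by batch size, so a smaller final batch does not skew the result. The small Dropout model is included only to show that evaluation mode makes repeated runs identical.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset


@torch.no_grad()  # no gradient tracking anywhere inside this function
def validate(model: nn.Module, loader: DataLoader, loss_fn: nn.Module) -> float:
    """Mean per-example loss on held-out pairs, computed in evaluation mode."""
    model.eval()  # Dropout off, BatchNorm uses its running statistics
    total, count = 0.0, 0
    for noisy, clean in loader:
        prediction = model(noisy)
        total += loss_fn(prediction, clean).item() * noisy.shape[0]
        count += noisy.shape[0]
    return total / count


torch.manual_seed(0)
clean = torch.rand(20, 1, 16, 16)
noisy = clean + 0.1 * torch.randn_like(clean)
val_loader = DataLoader(TensorDataset(noisy, clean), batch_size=8)
model = nn.Sequential(nn.Conv2d(1, 1, kernel_size=3, padding=1), nn.Dropout(0.5))
print(validate(model, val_loader, nn.MSELoss()))
```

Because validate() leaves the model in evaluation mode, the training loop should call model.train() again at the start of each epoch.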

If the loop feels repetitive, that is a good sign. Training a noise cancellation machine learning model is supposed to look a little like practice: noisy batch, clean target, loss, backward pass, update, repeat. The model slowly learns that the noisy input is not the answer itself; it is the puzzle we solve to reach the answer. Once that rhythm is in place, we are ready to look at how the loss behaves from epoch to epoch and decide whether the model is truly getting better.

Test Model Performance

How do you know your noise cancellation machine learning model actually works on audio it has never heard before? This is the moment when we stop teaching and start checking, so we switch the network into evaluation mode with model.eval() and turn gradient tracking off with torch.no_grad() or torch.inference_mode(). In PyTorch, eval() changes how layers like Dropout and BatchNorm behave, while no_grad() and inference_mode() only disable gradient tracking. Neither of them switches layers into evaluation behavior, so model.eval() is still needed alongside them.

From there, we feed the held-out noisy clips through a DataLoader, which is the PyTorch utility that wraps a dataset and lets us iterate over samples in batches. That keeps the test run organized and gives every noisy input the same forward pass, while the matching clean target stays close by so we can compare them pair by pair. This is the same basic data path we used during training, but now the model is only making predictions, not learning from them.

The next step is to measure the gap between what the model predicted and the clean audio we expected. We usually reuse the same loss idea we trained with, because the loss gives us a single number that tells us how far the denoised output drifted from the reference, and that makes a noise cancellation machine learning model much easier to judge across different clips. If the score stays close to what we saw on validation data, that is a good sign the model is generalizing; if it suddenly jumps on the test set, that is our clue that the model may have learned the training examples too specifically rather than the broader speech pattern.
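One way to make the test loss easier to read is to compare it with a do-nothing baseline: the loss you would get by passing the noisy input through unchanged. The baseline is an extra sanity check added for this sketch, and `evaluate_on_test_set` is a hypothetical helper. A model whose test loss is not clearly below the baseline is not yet removing noise.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset


def evaluate_on_test_set(model: nn.Module, loader: DataLoader, loss_fn: nn.Module) -> dict:
    """Mean test loss for the model and for an identity baseline that returns the noisy input."""
    model.eval()  # inference_mode alone does not change Dropout/BatchNorm behaviour
    model_total, baseline_total, count = 0.0, 0.0, 0
    with torch.inference_mode():
        for noisy, clean in loader:
            n = noisy.shape[0]
            model_total += loss_fn(model(noisy), clean).item() * n
            baseline_total += loss_fn(noisy, clean).item() * n  # how noisy the input already was
            count += n
    return {"model": model_total / count, "noisy_baseline": baseline_total / count}


torch.manual_seed(0)
clean = torch.rand(10, 1, 8, 8)
noisy = clean + 0.2 * torch.randn_like(clean)
test_loader = DataLoader(TensorDataset(noisy, clean), batch_size=4)
print(evaluate_on_test_set(nn.Identity(), test_loader, nn.MSELoss()))  # both numbers match
```

With `nn.Identity()` the two numbers coincide by construction. With a trained denoiser, the gap between them shows how much of the noise the model actually removed.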

Numbers help, but audio has a habit of hiding surprises, so we also listen to the output itself. A denoised clip can score well and still sound a little hollow, clipped, or over-smoothed, and that is why a quick listening check is such a valuable companion to the loss value. In practice, this is the point where we ask whether the noise cancellation machine learning model is preserving the shape of the voice, not just shrinking the error on paper.

It also helps to compare the same test example before and after denoising, because the contrast tells a clearer story than either clip alone. A strong result sounds like speech that stays natural while the background hum, hiss, or room noise steps back into the distance; a weak result sounds like the model shaved away too much detail along with the noise. When we see that pattern repeat across several unseen clips, we can trust that the noise cancellation machine learning model is learning a useful habit, not memorizing a single recording.

By the time we finish testing, we are not looking for perfection so much as consistency. We want the model to behave calmly on new audio, keep the speech intact, and reduce the noise in a way that holds up across different voices and rooms. That steady behavior is the real signal that the pipeline is working, and it gives us a solid foundation for deciding whether to tune the model further or move on to deployment.