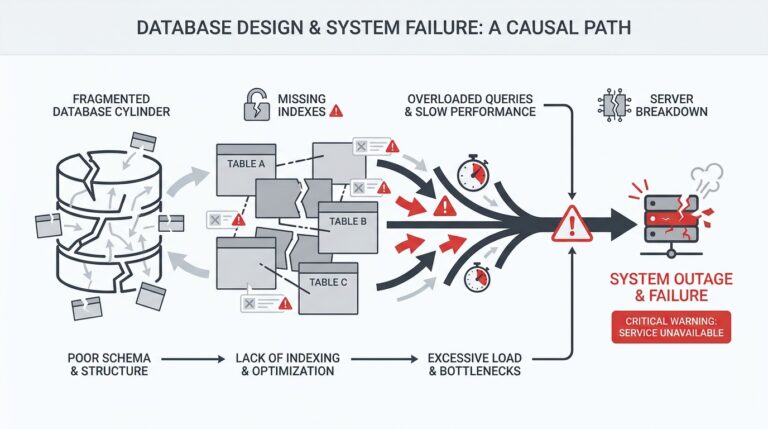

Why faster databases fall short

Low latency is necessary but not sufficient for reliable real-time analytics; you also need an analytics language layer and typed APIs to close the gap between raw speed and predictable, auditable insights. Faster databases reduce query time, but they don’t encode meaning, enforce contracts, or prevent subtle semantic regressions when schemas and upstream events change. For real-time analytics and streaming dashboards you care about correctness, lineage, and stable contracts at least as much as you care about millisecond response times. How do you avoid a situation where a single schema tweak silently breaks all downstream visualizations?

Building on the operational realities we already discussed, performance improvements treat the symptom (latency) while leaving the underlying fragility intact. Databases can return rows quickly, but they cannot guarantee that a column represents the same business concept across versions or sources. When multiple teams push event schema changes, the database still executes queries fast, yet an aggregation may combine values with different meanings into one metric. This is why teams that focus only on faster databases still see alert storms and trust erosion in dashboards.

Beyond semantics, there are practical pipeline concerns that speed alone doesn’t fix. Real-time ingestion, transformation, and cleanup introduce races and backfills; materialized views can be fast but stale if dependencies change; and joins across heterogeneous sources can produce nondeterministic results when timestamps or watermarking rules differ. In production we routinely see dashboards that show spikes caused by late-arriving events or by duplicated keys from retries—issues that a low-latency engine won’t detect. We need mechanisms that make intent explicit, validate inputs, and explain how a metric was computed.

That’s where typed APIs and an analytics language layer become indispensable: they turn implicit assumptions into explicit contracts that tooling can check. A typed API is simply an interface that declares the shape and semantics of analytics entities (dimensions, measures, timestamps) so you can run compile-time or CI checks before those changes hit dashboards. For example, a minimal TypeScript-style contract for an analytics endpoint might look like this:

```ts
interface PurchaseEvent {
  userId: string; // stable user identifier
  amountCents: number; // cents to avoid float drift
  currency: 'USD' | 'EUR'; // limited enum for safe aggregation
  occurredAt: string; // ISO-8601 timestamp
}

type RevenueByDay = {
  day: string;
  revenueCents: number;
}
```

When your analytics language layer maps that interface to queryable primitives, you can validate that incoming events match the contract, generate safe SQL or execution plans, and produce typed client libraries for dashboards and microservices. This prevents a change like renaming amountCents to totalAmount from silently introducing a nullable column that collapses your aggregates.
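
To make the validation step concrete, here is a minimal sketch of a runtime check for the PurchaseEvent contract. The names validatePurchaseEvent and ValidationResult are hypothetical and not part of any particular library; a real language layer would presumably generate something like this from the contract rather than have you write it by hand.

```ts
// Hypothetical runtime validator mirroring the PurchaseEvent interface above.
interface PurchaseEvent {
  userId: string;
  amountCents: number;
  currency: 'USD' | 'EUR';
  occurredAt: string;
}

type ValidationResult =
  | { ok: true; event: PurchaseEvent }
  | { ok: false; errors: string[] };

function validatePurchaseEvent(input: unknown): ValidationResult {
  if (typeof input !== 'object' || input === null) {
    return { ok: false, errors: ['event must be an object'] };
  }
  const e = input as Record<string, unknown>;
  const errors: string[] = [];

  if (typeof e['userId'] !== 'string' || e['userId'].length === 0) {
    errors.push('userId must be a non-empty string');
  }
  // Integer cents only: a missing or renamed field fails here instead of
  // turning into a null that quietly drops out of aggregates.
  if (typeof e['amountCents'] !== 'number' || !Number.isInteger(e['amountCents'])) {
    errors.push('amountCents must be an integer');
  }
  if (e['currency'] !== 'USD' && e['currency'] !== 'EUR') {
    errors.push('currency must be USD or EUR');
  }
  // Date.parse is lenient; a production check would enforce ISO-8601 strictly.
  if (typeof e['occurredAt'] !== 'string' || Number.isNaN(Date.parse(e['occurredAt']))) {
    errors.push('occurredAt must be a parseable timestamp');
  }

  if (errors.length > 0) return { ok: false, errors };
  return {
    ok: true,
    event: {
      userId: e['userId'] as string,
      amountCents: e['amountCents'] as number,
      currency: e['currency'] as 'USD' | 'EUR',
      occurredAt: e['occurredAt'] as string,
    },
  };
}
```

An event that arrives with totalAmount instead of amountCents comes back with ok: false and a single error naming the missing field, which is the signal you want surfaced rather than a null column.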

There are trade-offs: typed APIs and a language layer add development work and an abstraction that must itself be correct and performant. But they move the hard problems—schema evolution, type mismatches, semantic drift, and lineage—out of ad-hoc scripts and into a versioned system where CI, code review, and static checks can run. Faster databases and indexes still matter for throughput and latency, yet pairing them with typed contracts reduces debugging time, eliminates many classes of alerts, and enables safe evolution of real-time analytics.

If you want dashboards you can trust and APIs that other engineers can safely depend on, treat speed as one axis of the solution and typing, contract validation, and an analytics language layer as the other. The next section explains what the analytics language layer does; the sections after it cover practical patterns for designing typed APIs: how to model measures, evolve contracts safely, and integrate compile-time checks into your CI pipeline so you get both low latency and high confidence in production.

Analytics language layer explained

Building on this foundation, the analytics language layer and typed APIs are the mechanisms that make real-time analytics predictable, auditable, and evolvable rather than merely fast. If you care about stable metrics, end-to-end lineage, and contract-driven evolution, the analytics language layer must sit between raw event streams and your query engine so it can enforce semantics, perform static checks, and generate safe execution plans. We’ll treat the language layer as a compiler-like system that verifies intent before any dashboard or downstream service consumes data.

At its core the language layer provides three capabilities: a typed schema/contract model, a declarative transformation DSL (domain-specific language), and a compilation pipeline that emits validated queries or materialized view definitions. The typed API declares shapes and semantics for dimensions, measures, and timestamps so tooling can detect breaking changes at merge time. The DSL expresses how to derive metrics (for example, grouping, windowing, or enrichment) in a way that preserves types, and the compiler enforces invariants such as time-zone handling, numeric units, and nullability rules. By moving these checks to the language layer, you shift detection of semantic regressions from runtime alert storms to CI failures.

To make this concrete, imagine you expose a typed API for a revenue metric rather than a free-form SQL view. In the language layer you might declare a measure like Revenue(amount_cents: Int, currency: Currency) and a transformation RevenueByDay = group_by(window(order_time, 1 day), sum(Revenue.amount_cents)). The compiler can then validate that all upstream events include amount_cents as an integer, that currency maps to a small enum, and that the windowing function has correct watermark rules. If someone renames amount_cents upstream or changes the event timestamp semantics, the change fails static checks and a PR must either update the contract or provide a backward-compatible migration.
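
As a rough illustration of what the compiled RevenueByDay transformation computes, the sketch below implements a tumbling one-day window in plain TypeScript. The RevenueEvent shape and the revenueByDay function are assumptions made for this example, and grouping by currency as well as by day is an addition here so that amounts in different currencies are never summed together. Watermark handling is left out.

```ts
// Hypothetical event shape for the Revenue measure described above.
interface RevenueEvent {
  amount_cents: number;
  currency: 'USD' | 'EUR';
  order_time: string; // ISO-8601 timestamp
}

// Tumbling 1-day windows in UTC, keyed as "YYYY-MM-DD|CURRENCY".
function revenueByDay(events: RevenueEvent[]): Map<string, number> {
  const totals = new Map<string, number>();
  for (const ev of events) {
    if (!Number.isInteger(ev.amount_cents)) {
      throw new Error(`amount_cents must be an integer, got ${ev.amount_cents}`);
    }
    // toISOString() always renders UTC, so the first 10 characters are the UTC day.
    // It throws a RangeError if order_time is not a parseable date.
    const day = new Date(ev.order_time).toISOString().slice(0, 10);
    const key = `${day}|${ev.currency}`;
    totals.set(key, (totals.get(key) ?? 0) + ev.amount_cents);
  }
  return totals;
}
```

Because the window boundary is computed in UTC, an order placed at 23:30 with a -02:00 offset lands in the following UTC day. Making that choice explicit in one place is the kind of invariant the compiler is meant to enforce.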

Beyond schema checks, the language layer encodes operational rules you otherwise wrestle with in ad-hoc scripts: late-arrival policies, deduplication semantics, deterministic joins, and lineage metadata. For instance, you can declare a watermark policy alongside a grouped aggregation so the compiler reasons about completeness guarantees and flags queries that would produce nondeterministic aggregates. Lineage information generated by the layer lets you trace every cell in a dashboard back to the exact transformation and source fields, which is essential when debugging spikes. These capabilities are what separate low-latency databases from a trustworthy real-time analytics stack.
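
The deduplication and late-arrival rules can be sketched in a few lines. This is a simplified, single-process model under stated assumptions: the class name WatermarkedDeduper is hypothetical, the watermark is taken to be the maximum event time seen minus an allowed lateness, and the set of seen keys grows without bound (a real system would expire old keys).

```ts
interface OrderEvent {
  order_id: string;        // deduplication key
  amount_cents: number;
  occurred_at_ms: number;  // event time, epoch milliseconds
}

type Classification = 'accepted' | 'duplicate' | 'late';

class WatermarkedDeduper {
  private readonly seen = new Set<string>();
  private readonly allowedLatenessMs: number;
  private maxEventTimeMs = Number.NEGATIVE_INFINITY;

  constructor(allowedLatenessMs: number) {
    this.allowedLatenessMs = allowedLatenessMs;
  }

  // Assumed policy: watermark = newest event time seen so far - allowed lateness.
  get watermarkMs(): number {
    return this.maxEventTimeMs - this.allowedLatenessMs;
  }

  classify(ev: OrderEvent): Classification {
    // Retries re-send the same key; counting them twice causes false spikes.
    if (this.seen.has(ev.order_id)) return 'duplicate';
    // Events older than the watermark belong to windows already considered complete.
    if (ev.occurred_at_ms < this.watermarkMs) return 'late';
    this.seen.add(ev.order_id);
    this.maxEventTimeMs = Math.max(this.maxEventTimeMs, ev.occurred_at_ms);
    return 'accepted';
  }
}
```

What happens to a 'late' event (drop it, route it to a correction stream, or trigger a backfill) is a policy decision; the point is that the decision is declared once and applied the same way everywhere.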

How do you introduce this without slowing down delivery? Start by modeling high-impact metrics and exposing them through versioned typed APIs. Implement schema evolution rules—minor additive changes are auto-accepted, breaking renames require a new API version—and wire static contract tests into CI so breaking diffs fail early. Produce client libraries from the language layer (typed SDKs for dashboards and services) to prevent manual SQL drift, and add runtime guards that reject events failing validation while emitting telemetry for migration work. This incremental adoption preserves developer velocity while removing many classes of silent regressions.

Taking this concept further, the language layer becomes the place where we reconcile performance and correctness: the compiler targets your fast database or stream engine for execution while keeping type safety and lineage intact. In the next section we’ll examine practical patterns for designing those typed APIs—how to model measures, evolve contracts safely, and integrate compile-time checks into CI—so you can get both low latency and high confidence in production.

Typed API fundamentals

Building on this foundation, the analytics language layer and typed APIs are the place we encode intent so dashboards remain trustworthy even as event schemas and teams change. In practice that means treating metrics, dimensions, and timestamps as first-class typed contracts rather than ad-hoc SQL views—so you can run static checks and catch regressions before they hit production. For real-time analytics you need these contracts to be explicit because low latency alone won’t protect you from semantic drift, late-arriving events, or mismatched units.

At the core, a typed API declares three things: shape (field names and types), semantics (units, enums, cardinality guarantees), and operational invariants (watermarks, deduplication keys). When you model a revenue metric, for example, declare amount as an integer number of cents, currency as a constrained enum, and the event time as a timestamp with a timezone policy. A concise DSL or annotated TypeScript definition makes these invariants machine-checkable, for example:

```
metric Revenue {
  measure amount_cents: Int(unit: "cents");
  dimension currency: Enum<["USD","EUR"]>;
  timestamp occurred_at: Timestamp(tz: "UTC");
  window tumbling(1d) with watermark(5m);
}
```

This type-level metadata solves concrete problems you face every day: nullability rules that change aggregation semantics, implicit unit mismatches that skew totals, and ambiguous join keys that produce nondeterministic results. By encoding units, nullability, and cardinality constraints up front you prevent a common class of bugs—like accidentally summing dollars and cents or combining metrics sourced from inconsistent user identifiers. These typed invariants also feed lineage: when every cell in a dashboard carries type-checked provenance, you can trace a spike back to the exact transformation and source field.
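
One way to get unit safety in ordinary TypeScript, without any language layer, is the branded-type pattern. The sketch below is illustrative only; the Cents and Dollars brands and the helper functions are names chosen for this example.

```ts
// Branded number types: identical at runtime, distinct to the type checker.
type Cents = number & { readonly __unit: 'cents' };
type Dollars = number & { readonly __unit: 'dollars' };

function cents(n: number): Cents {
  if (!Number.isInteger(n)) throw new Error(`cents must be an integer, got ${n}`);
  return n as Cents;
}

function dollars(n: number): Dollars {
  return n as Dollars;
}

// The only sanctioned conversion; rounding keeps float error out of the cents value.
function dollarsToCents(d: Dollars): Cents {
  return cents(Math.round(d * 100));
}

function sumCents(values: Cents[]): Cents {
  return cents(values.reduce<number>((acc, v) => acc + v, 0));
}

const orderTotal = sumCents([cents(1250), dollarsToCents(dollars(3.99))]);
// sumCents([cents(1250), dollars(3.99)]) does not compile:
// Dollars is not assignable to Cents.
```

The mistake of summing dollars and cents becomes a compile error instead of a skewed total, which is the same guarantee the Int(unit: "cents") annotation is meant to give at the contract level.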

How do you evolve a metric without breaking downstream dashboards? Adopt a versioned contract strategy and clear compatibility rules. Allow additive, non-breaking changes (new optional fields) to flow through automatically, while requiring an explicit new API version for breaking renames or type changes. In practice we gate breaking changes behind a migration PR that includes a transformation mapping (old_field → new_field), automated contract tests, and a deprecation window; that pattern limits blast radius and gives consumers time to upgrade.
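
The compatibility rules above are mechanical enough to check in code. The following is a minimal sketch, assuming a contract can be flattened to a map of field name to type and optionality; FieldSpec, diffContracts, and requiresNewVersion are hypothetical names. Treating any change in optionality as breaking is a conservative assumption made here, not a rule stated above.

```ts
type FieldSpec = { type: string; optional: boolean };
type Contract = Record<string, FieldSpec>;

type ChangeKind = 'added-optional' | 'added-required' | 'removed' | 'type-changed' | 'optionality-changed';
type Change = { field: string; kind: ChangeKind; breaking: boolean };

function diffContracts(prev: Contract, next: Contract): Change[] {
  const changes: Change[] = [];
  for (const field of Object.keys(prev)) {
    const before = prev[field];
    const after = next[field];
    if (after === undefined) {
      // A rename shows up as a removal plus an addition.
      changes.push({ field, kind: 'removed', breaking: true });
    } else if (after.type !== before.type) {
      changes.push({ field, kind: 'type-changed', breaking: true });
    } else if (after.optional !== before.optional) {
      changes.push({ field, kind: 'optionality-changed', breaking: true });
    }
  }
  for (const field of Object.keys(next)) {
    if (!(field in prev)) {
      const optional = next[field].optional;
      changes.push({
        field,
        kind: optional ? 'added-optional' : 'added-required',
        breaking: !optional, // only new optional fields flow through automatically
      });
    }
  }
  return changes;
}

function requiresNewVersion(changes: Change[]): boolean {
  return changes.some((c) => c.breaking);
}
```

A CI job could run a check like this against the previous published contract and fail the merge when requiresNewVersion is true but no version bump or migration mapping accompanies the change.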

Tooling makes these fundamentals actionable. Generate typed client SDKs from the language layer so dashboards and services use the exact contract rather than hand-rolled SQL. Run a compile step during CI (analytics-compiler --check) that validates schema conformance, verifies watermark and windowing rules, and emits lineage metadata; if checks fail, the merge fails. At runtime, enforce input validation at ingestion (reject or quarantine events that violate the contract) and ship telemetry that surfaces contract violations, so migrations become observable work rather than silent failures.

Put differently, typed APIs are both a design discipline and an engineering pattern: they bring static guarantees into the same lifecycle as code. When an upstream team renames amount to totalAmount, the compiler or CI should catch the mismatch and force an explicit mapping or version bump, preventing a silent null collapse in your aggregates. Taking this concept further, the next section will show concrete design patterns for modeling measures, evolving contracts safely, and wiring static contract tests into CI so you get both low latency and durable correctness for real-time analytics.

Designing typed API schemas

Building on this foundation, start by treating the typed API as your primary design artifact: it is where semantics, units, and operational invariants live first, and SQL or execution plans are generated second. When you model types here you encode what matters for correctness—amounts in cents, constrained enums for currency, timestamp semantics, and deduplication keys—so the analytics language layer can detect semantic drift before dashboards break. Front-load these invariants in the schema and your CI will catch many regressions that speed-optimized databases alone cannot. Designing the typed API correctly reduces firefighting and makes downstream consumers confident they can depend on your metrics.

Model primitives explicitly and conservatively so consumers never guess intent. Declare numeric units, nullability, and cardinality as part of the field type rather than as separate docs: for example, amount: Int(unit: "cents", nullable: false, aggregator: sum) communicates aggregation safety and prevents unit-mixing bugs. Treat timestamps as first-class typed fields with timezone and watermark metadata, for example occurred_at: Timestamp(tz: "UTC", watermark: "5m"); this lets the compiler reason about completeness and windowing determinism. Also model identity and join keys with cardinality guarantees (user_id: Id(singleton) vs session_id: Id(many)) so join strategies are explicit and joins remain deterministic under retries and partial failures.

Design a clear evolution strategy that answers the inevitable question: How do you avoid silent breaks when fields are renamed or types change? Establish compatibility rules: additive optional fields are backward-compatible; renames and narrowing types require a new version or an explicit migration mapping. Require migration PRs to include a transformation that maps old_field -> new_field, automated contract tests that exercise both old and new shapes, and a deprecation window that gives consumers time to upgrade. Use semantic or calendar-based versioning for contracts and surface compatibility warnings in the SDKs so consumers have actionable upgrade paths rather than surprise nulls in aggregates.

Make typing actionable via CI and runtime checks so schema design drives behavior. Run a compile-time check (analytics-compiler --check) on every PR to validate shapes, units, watermark rules, and lineage assertions; fail the merge if invariants break. Generate typed client SDKs from the same schema so dashboards and microservices import the contract instead of hand-writing SQL, and include runtime ingestion validators that quarantine or reject events violating the contract while emitting telemetry for migration work. This combination moves detection left (in CI) and makes migrations observable at runtime, turning schema evolution from guesswork into a controlled engineering process.

Concrete patterns speed adoption and reduce mistakes. Use an explicit transform map when you introduce breaking changes, for example:

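```ts
// migration PR: v1 -> v2 mapping
// RevenueV1, RevenueV2, and normalizeTimestamp are assumed to be defined alongside the contracts.
function RevenueV1_to_V2(event: RevenueV1): RevenueV2 {
  return {
    // Round after converting dollars so float error never leaks into the cents value.
    total_cents: event.amount_cents ?? (event.amount_dollars ? Math.round(event.amount_dollars * 100) : 0),
    currency: event.currency || 'USD',
    occurred_at: normalizeTimestamp(event.occurred_at)
  };
}
```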

Require unit tests that assert parity between v1-derived aggregates and v2-native aggregates within an acceptable tolerance during the deprecation window. Also emit lineage metadata alongside these tests so reviewers can trace every output cell to the exact input field and transformation function, which dramatically reduces review overhead and post-merge surprises.
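
A parity check of this kind can be quite small. The sketch below compares two sets of aggregates keyed by window (for example, by day) and reports every key whose relative difference exceeds a tolerance. The function names and the Map-based representation are assumptions for illustration; the tolerance itself is something each team has to agree on.

```ts
// Relative difference of candidate against baseline; 0 means identical.
function relativeDelta(baseline: number, candidate: number): number {
  if (baseline === 0) return candidate === 0 ? 0 : Number.POSITIVE_INFINITY;
  return Math.abs(candidate - baseline) / Math.abs(baseline);
}

// Returns the keys whose v1-derived and v2-native aggregates disagree by more
// than `tolerance` (a fraction, so 0.005 means 0.5%). A key missing on either
// side is treated as a value of 0 and therefore reported.
function parityFailures(
  v1Derived: Map<string, number>,
  v2Native: Map<string, number>,
  tolerance: number,
): string[] {
  const keys = new Set<string>();
  v1Derived.forEach((_value, key) => keys.add(key));
  v2Native.forEach((_value, key) => keys.add(key));

  const failures: string[] = [];
  keys.forEach((key) => {
    const delta = relativeDelta(v1Derived.get(key) ?? 0, v2Native.get(key) ?? 0);
    if (delta > tolerance) failures.push(key);
  });
  return failures.sort();
}
```

In a unit test you would assert that parityFailures returns an empty array for a replayed sample of events; the returned keys tell a reviewer exactly which windows diverged.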

Finally, trade complexity in the schema for certainty in production: prefer explicit enums, units, and watermark policies even when they initially feel verbose. That verbosity becomes leverage: it enables static checks, SDK generation, and reproducible migrations that prevent the alert storms we described earlier. Taking these pragmatic schema-design steps lets the analytics language layer do its job—compile intent into reliable execution—and sets up the next discussion on concrete compiler rules and CI integration patterns.

Typed API implementation walkthrough

Building on this foundation, we’ll walk through a practical implementation that turns the analytics language layer and typed API concepts into deployable artifacts for real-time analytics. Start by treating the typed API as the single source of truth for shape, semantics, and operational invariants so every downstream artifact is generated from the same contract. In this walkthrough we’ll implement a minimal pipeline: contract authoring, compilation and checks, runtime validation, SDK generation, migration mapping, and observability so you can ship metrics with confidence rather than surprise.

Begin by authoring the contract in a machine-readable DSL or annotated TypeScript so semantics are explicit from day one. Define measures, dimensions, timestamps, units, and cardinality guarantees in the schema; for example, a revenue metric in a TypeScript-like DSL:

```ts
metric Revenue {
  measure amount_cents: Int(unit: "cents", nullable: false);
  dimension currency: Enum<["USD","EUR"]>;
  timestamp occurred_at: Timestamp(tz: "UTC", watermark: "5m");
  id order_id: Id(singleton);
}
```

This declaration does three things: it documents intent, makes invariants machine-checkable, and gives the compiler the metadata it needs to generate safe queries and lineage information.

Next, implement the compiler pipeline that validates contracts and emits execution artifacts for your target engine. The compiler should perform static checks (type conformance, nullability, unit consistency, watermark composition) and either generate parameterized SQL or a stream execution plan; run it as part of CI with a command like analytics-compiler --check --schema=Revenue.dsl. Integrate these checks into pull requests so any upstream rename, type narrowing, or watermark mismatch fails fast; failing early converts runtime debugging into a review-time problem and prevents silent null collapses in dashboards.

At ingestion, wire runtime validators and graceful migration paths so production data never silently violates the contract. Implement an ingestion validator that rejects or quarantines events that fail schema checks while emitting rich telemetry for migration work. For breaking changes, include an explicit transform map in the migration PR that converts old shapes into the new contract; for example:

```ts
function RevenueV1_to_V2(e: RevenueV1): RevenueV2 {
  return {
    amount_cents: e.amount_cents ?? (e.amount_dollars ? Math.round(e.amount_dollars * 100) : 0),
    currency: e.currency || 'USD',
    occurred_at: normalize(e.occurred_at)
  };
}
```

Require unit tests that assert parity between v1-derived aggregates and v2-native aggregates during the deprecation window.

Generate typed client SDKs from the same schema so dashboards and services import the contract rather than hand-writing SQL. A generated TypeScript SDK enforces compile-time correctness for visualization queries and prevents SQL drift:

```ts
import { RevenueClient } from 'analytics-sdk';

const cli = new RevenueClient();
const rows: RevenueByDay[] = await cli.query({ window: '1d' });
```

Version the SDK alongside the contract and surface compatibility warnings in the package so consumers can plan upgrades; use semantic or calendar-based contract versions and require migration PRs for breaking bumps.

Observe, trace, and roll out carefully: emit lineage metadata for every compiled artifact, record watermark and deduplication choices, and create a test harness that replays historical events through both old and new transforms. How do you roll this out safely? Start with high-impact metrics, run parallel reporting during the deprecation window, gate the merge on artifact-level parity tests, and gradually expand coverage. These rollout steps minimize blast radius and let you validate correctness under production-like load.

Taking these implementation steps turns the analytics language layer from an abstract idea into an operational system: typed APIs become living contracts enforced by compiler checks, ingestion validators, generated SDKs, and lineage telemetry. In the next section we’ll look at the monitoring and versioning practices that keep those contracts honest in production so your real-time analytics are both fast and auditable.

Monitoring and versioning APIs

Building on this foundation, robust monitoring and deliberate versioning are what turn typed APIs and an analytics language layer from nice-to-have design patterns into production-grade guarantees for real-time analytics. If you only compile contracts and generate SDKs but lack visibility into how contracts behave in the wild, you still end up debugging dashboards at 3 a.m. We need monitoring that exercises the same invariants the compiler enforces, and a versioning policy that makes compatibility explicit and auditable. Treat these as first-class engineering features: observability plus safe evolution prevents silent regressions and preserves trust in downstream visualizations.

Start by defining exactly what you will monitor. The primary signals are schema conformance (percent of events matching the typed API), transformation parity (aggregates computed from legacy vs. new mappings), and operational invariants like watermark lag and deduplication effectiveness. Instrument your ingestion layer to emit schema.validation.failures, transform.parity.delta, watermark.lag_ms, and dedupe.rate with dimensions for source, contract version, and deployment shard. These metrics let you detect semantic drift—when a field’s meaning or cardinality subtly changes—and surface it before it shows up as a dashboard spike.
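
As a rough sketch of the first signal, the tracker below counts validation outcomes per source, contract version, and shard, and derives a conformance percentage from them. ConformanceTracker and MetricDimensions are hypothetical names, and the in-memory maps stand in for whatever metrics client you actually use to emit schema.validation.failures.

```ts
interface MetricDimensions {
  source: string;
  contractVersion: string;
  shard: string;
}

class ConformanceTracker {
  private readonly totals = new Map<string, number>();
  private readonly failures = new Map<string, number>();

  private static key(d: MetricDimensions): string {
    return `${d.source}|${d.contractVersion}|${d.shard}`;
  }

  // Call once per ingested event with the validator's verdict.
  record(d: MetricDimensions, valid: boolean): void {
    const k = ConformanceTracker.key(d);
    this.totals.set(k, (this.totals.get(k) ?? 0) + 1);
    if (!valid) this.failures.set(k, (this.failures.get(k) ?? 0) + 1);
  }

  // Value you would emit as schema.validation.failures for these dimensions.
  failureCount(d: MetricDimensions): number {
    return this.failures.get(ConformanceTracker.key(d)) ?? 0;
  }

  // Percent of events matching the typed API; undefined until an event is seen.
  conformancePercent(d: MetricDimensions): number | undefined {
    const total = this.totals.get(ConformanceTracker.key(d)) ?? 0;
    if (total === 0) return undefined;
    return (100 * (total - this.failureCount(d))) / total;
  }
}
```

Keeping the contract version in the dimensions matters: a drop in conformance that appears only for one version usually points at a producer that has not migrated yet.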

Observability must combine metrics, traces, and lineage-aware logs so you can answer both “what” and “why.” Capture a trace through the analytics language layer: ingestion → validation → transform → compile → materialize. Correlate traces to metric spikes and include lineage identifiers so a single dashboard cell maps back to the exact contract version and transform function. How do you set thresholds? Use historical baselines during a deprecation window to set anomaly bounds: flag transform.parity.delta above an agreed tolerance and alert the owning team. This makes alerts actionable rather than noisy.
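
One simple way to turn a historical baseline into an alert threshold is mean plus a multiple of the standard deviation, capped by the agreed tolerance. That specific formula is an assumption chosen for this sketch, not something prescribed above, and the function names are hypothetical; substitute whatever anomaly model your team prefers.

```ts
// Statistical upper bound: mean + k sample standard deviations of the baseline.
function anomalyBound(baseline: number[], k: number = 3): number {
  if (baseline.length < 2) throw new Error('need at least two baseline samples');
  const mean = baseline.reduce<number>((acc, x) => acc + x, 0) / baseline.length;
  const variance =
    baseline.reduce<number>((acc, x) => acc + (x - mean) * (x - mean), 0) /
    (baseline.length - 1);
  return mean + k * Math.sqrt(variance);
}

// Alert when the observed parity delta exceeds either the statistical bound
// or the agreed tolerance, whichever is lower.
function shouldAlert(
  observedDelta: number,
  baseline: number[],
  agreedTolerance: number,
): boolean {
  const threshold = Math.min(anomalyBound(baseline), agreedTolerance);
  return observedDelta > threshold;
}
```

Here baseline would hold transform.parity.delta samples collected during the deprecation window, and agreedTolerance acts as a hard ceiling so that a noisy baseline cannot widen the bound indefinitely.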

Versioning is where you encode compatibility rules that the analytics-compiler and CI can check automatically. Adopt a simple policy: additive changes (new optional fields) are backward-compatible; renames, narrowing types, or semantic changes require a new major contract version and an explicit migration mapping. For example, include a migration function in your PR that maps RevenueV1 to RevenueV2 and ship tests that assert parity within an acceptable tolerance: assert parity(aggregateV1, aggregateV2) < 0.5%. Tie SDK package versions to contract versions so consumers see compatibility metadata at install time and know when they must update.

Gate every breaking change with CI-level checks and staged rollout practices. Run analytics-compiler --check and a parity test harness in CI that replays a representative window of production events through old and new transforms. If parity or lineage assertions fail, the merge is rejected; if they pass, deploy the new contract behind a feature flag and run parallel reporting for the deprecation window. Monitor transform.parity.delta and schema.validation.failures during the window and only flip the flag once stability criteria and SLA targets are met.

Finally, decide runtime behavior for violations and operationalize your runbooks. Choose between rejecting, quarantining, or auto-mapping invalid events and emit rich telemetry for whatever path you pick; quarantined events should surface with lineage tags and a remediation ticket in the owning team’s queue. Configure alerting that separates critical contract breaks (stop-the-line) from noise-level drift that schedules an engineering task. Taking this approach—instrumented typed APIs, lineage-aware monitoring, and explicit versioning—lets us evolve real-time analytics with confidence and hands you the practical controls to avoid the silent failures that slow teams down.
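
The three runtime behaviors can be expressed as a single policy function. This is a sketch under stated assumptions: the Violation and Handled shapes, the handleViolation name, and the rename table are hypothetical, and falling back to quarantine when auto-mapping has no applicable rename is a choice made for this example.

```ts
type ViolationPolicy = 'reject' | 'quarantine' | 'auto-map';

interface Violation {
  contract: string;
  contractVersion: string;
  field: string;                  // field the contract expected but did not find
  raw: Record<string, unknown>;   // the offending event as received
}

interface Handled {
  forwarded: Record<string, unknown> | null; // repaired event, if any
  quarantined: boolean;
  telemetry: { metric: string; tags: Record<string, string> };
}

// `renames` maps old field names to the names the current contract expects.
function handleViolation(
  v: Violation,
  policy: ViolationPolicy,
  renames: Record<string, string> = {},
): Handled {
  // Telemetry is emitted on every path so the violation is never silent.
  const telemetry = {
    metric: 'schema.validation.failures',
    tags: { contract: v.contract, version: v.contractVersion, field: v.field, policy },
  };
  if (policy === 'auto-map') {
    const oldName = Object.keys(renames).filter(
      (name) => renames[name] === v.field && name in v.raw,
    )[0];
    if (oldName !== undefined) {
      const mapped: Record<string, unknown> = { ...v.raw, [v.field]: v.raw[oldName] };
      delete mapped[oldName];
      return { forwarded: mapped, quarantined: false, telemetry };
    }
    // No applicable mapping: keep the event for remediation instead of dropping it.
    return { forwarded: null, quarantined: true, telemetry };
  }
  return { forwarded: null, quarantined: policy === 'quarantine', telemetry };
}
```

The tags on the telemetry record are what let an alerting rule distinguish a stop-the-line break on a critical contract from low-level drift that only needs a ticket.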