Set Up Your Toolkit

The first thing we want is a small, reliable workspace, because Python programming feels much smoother when your tools are ready before the real building begins. If you’ve ever opened a brand-new kitchen and found the oven, knives, and measuring cups all in the same place, you already understand the idea: a good Python toolkit keeps the important pieces close together and easy to reach. What should you install first when you’re setting up a Python toolkit? We start with the essentials that help your code run, help you manage projects, and help you catch mistakes before they turn into frustration.

At the center of that workspace sits Python itself, the language interpreter that reads and runs your code. An interpreter is a program that reads your instructions and carries them out directly, statement by statement, like a patient reader following a recipe as you write it. For modern Python programming, it helps to use a recent Python 3 release so you can follow current examples and avoid old habits that beginners often inherit by accident. Once Python is installed, we can move from “I have the language” to “I have a place to work with it.”

That place is usually a virtual environment, which is a separate folder that holds the packages for one project without mixing them into every other project on your computer. Think of it like giving each project its own backpack instead of tossing every tool into one giant bag. This matters because one app may need one version of a library while another app needs something different, and your Python development environment stays calm when those worlds stay apart. Creating a virtual environment is one of those small habits that feels ordinary at first, then saves you from a lot of confusion later.
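
As a rough sketch of that habit, the standard-library venv module can create an environment from Python itself. Most people run `python -m venv .venv` in a terminal instead, and this function does the equivalent. The `.venv` folder name and the `create_project_env` helper are assumptions made for illustration.

```python
import venv
from pathlib import Path


def create_project_env(project_dir: Path) -> Path:
    """Create a virtual environment in project_dir/.venv and return its path."""
    env_dir = project_dir / ".venv"  # a common folder name, assumed here
    # with_pip=False keeps the sketch fast; use with_pip=True to bootstrap pip too.
    venv.create(env_dir, with_pip=False)
    return env_dir
```

Calling `create_project_env(Path.cwd())` would place the environment next to your project files, where most editors can discover it.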

Next comes package management, which is the part of the toolkit that lets you install, update, and remove libraries. A package is a reusable piece of code someone else has written, and a package manager is the tool that helps you bring those pieces into your project without hand-copying files around. In Python programming, this is where your toolkit starts to feel practical, because you can add a web library, a testing tool, or a data-processing helper in a few commands instead of rebuilding everything yourself. The real benefit is not speed alone; it is consistency, because the same project can be recreated later with the same ingredients.

A text editor or code editor is the other half of the story, because writing code in a plain notebook and writing code in a well-chosen editor feel very different. A code editor is a program designed for writing source code, so it can help with indentation, syntax highlighting, and quick navigation. Those features may sound small, but they act like training wheels for your attention: they help you notice a missing character, a mismatched bracket, or a line that needs to be indented before the bug grows teeth. When you are learning, that kind of quiet support makes Python development feel less like guessing and more like reading a clear map.

We also want a testing tool in the toolkit, because good code is not only code that runs once; it is code we can trust again tomorrow. Testing means checking your code in a deliberate way to see whether it behaves the way you expect. At first, this can feel like extra work, but it is a bit like trying on shoes before a long walk: the small check prevents a bigger problem later. As your projects grow, tests become the steady friend that tells you whether a change improved things or accidentally moved a piece in the wrong direction.

Finally, we round out the toolkit with formatting and linting tools, which help keep code readable and catch suspicious patterns. Formatting means arranging code in a consistent style, while linting means scanning code for possible mistakes or style problems. These tools are like a shared house rule that keeps every room orderly, so you do not have to argue with yourself about spacing, naming, or line breaks every time you open a file. Once this foundation is in place, the rest of modern Python programming becomes easier to follow, because your toolkit is doing some of the quiet work for you while you focus on building.

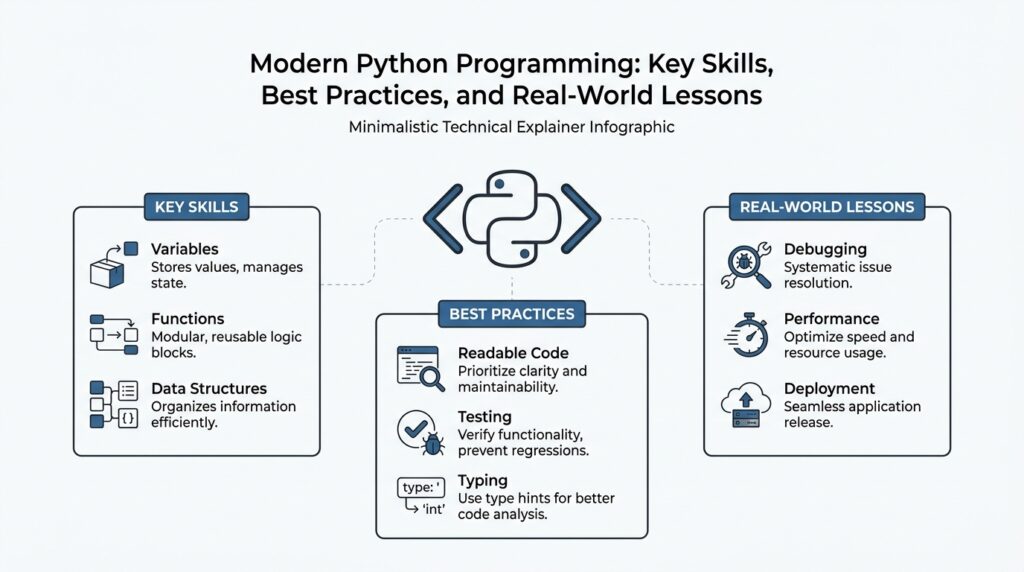

Write Clear Python Code

What makes Python code feel clear instead of crowded? Usually, it is the moment when the code starts telling its own story instead of making us guess. Python’s own style guidance leans hard in this direction: code is read far more often than it is written, and the Zen of Python says that readability counts and explicit is better than implicit. That is the heart of clear Python code: we write for the next person who opens the file, even if that person is future-you.

The first scene in that story is naming. When we choose names like total_price, customer_name, or send_receipt, we give the reader a small map before they even reach the logic. PEP 8 recommends lowercase words with underscores for functions and variables, CapWords for classes, and short, descriptive module names, because names should reflect how something is used rather than how it is built. In practice, that means we stop trying to be clever and start trying to be understood.
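
Here is a small sketch of those naming conventions side by side. The class, its methods, and the tax rate are hypothetical, chosen only to show the shapes PEP 8 describes (it also suggests UPPER_CASE for constants).

```python
TAX_RATE = 0.08  # constant in UPPER_CASE; the rate itself is made up


class ShoppingCart:  # class name in CapWords
    """A small container for item prices."""

    def __init__(self) -> None:
        self.item_prices: list[float] = []  # variable in lowercase_with_underscores

    def add_item(self, price: float) -> None:
        self.item_prices.append(price)

    def total_price(self) -> float:
        """Return the sum of all item prices with tax applied."""
        subtotal = sum(self.item_prices)
        return round(subtotal * (1 + TAX_RATE), 2)
```

Each name says what the thing is for, so a reader can guess what `cart.total_price()` returns before opening the method.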

Then comes shape: the way the code sits on the page. PEP 8 recommends four spaces for indentation, spaces around operators, and blank lines between major blocks so the eye can rest and the structure can breathe. That may sound cosmetic at first, but it works like good room design: when the hallway is clear, you can see where each door leads. When we keep line breaks, indentation, and spacing consistent, clear Python code becomes easier to scan, review, and change without fear.

We also help the reader by saying what the code does in the places where the code cannot speak for itself. A docstring is a short built-in explanation written inside a module, function, class, or method, and PEP 8 recommends them for public pieces of code; PEP 257 adds that a good docstring should summarize behavior, not repeat the function signature. Comments should do the same kind of honest work: they should explain intent, stay up to date, and avoid restating the obvious. If a comment says “increment x” next to x = x + 1, it has only added noise, not light.
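
A short sketch may make the difference concrete. The function and the coupon scenario are hypothetical. The docstring summarizes behavior, and the one comment explains a decision the code cannot explain on its own.

```python
def apply_discount(price: float, percent: float) -> float:
    """Return the price after taking a percentage discount off it.

    A percent of 20 means "20% off". Values outside 0-100 are clamped.
    """
    # Clamp rather than raise: in this imagined shop, coupons are typed in by
    # customers, and a typo should never produce a negative or inflated price.
    percent = min(max(percent, 0.0), 100.0)
    return price * (1 - percent / 100)
```

Notice what is absent: no comment restates `min`, `max`, or the arithmetic, because the code already says that part clearly.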

Sometimes clarity needs a little extra help from type hints, which are labels that show the expected type of a value, parameter, or return value. Python does not require them, but the language documentation says they are useful to static type checkers and can also help code editors with completion and refactoring. That makes type hints a bit like labels on storage boxes: they do not replace the contents, but they make the contents easier to find when the room gets busy. For beginners, they also answer a question many people search for: how do I make a function’s purpose obvious without opening its body?

Clear code also stays explicit when it handles work that can go wrong. PEP 8 recommends using specific exceptions instead of a bare except:, keeping the try block as small as possible, and using with statements when a resource needs reliable cleanup. That style gives us a cleaner reading path: we can see what might fail, what we expect, and what happens next. In other words, Python programming becomes less about hoping the code behaves and more about showing, line by line, how it is meant to behave.
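
Those three habits fit in a few lines. This is a minimal sketch with an assumed `load_settings` helper and an assumed JSON settings file. It is one reasonable shape, not the only one.

```python
import json
from pathlib import Path


def load_settings(path: Path) -> dict:
    """Read a JSON settings file, falling back to an empty dict if it is missing."""
    try:
        # Only the step expected to fail lives inside the try block.
        with path.open(encoding="utf-8") as handle:  # closed even if reading fails
            text = handle.read()
    except FileNotFoundError:
        # A specific exception: permission errors and other surprises still surface.
        return {}
    return json.loads(text)
```

Because the parsing happens outside the try block, a malformed file raises its own error instead of being mistaken for a missing one.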

As we keep writing, the goal is not perfection on the first pass; it is clarity that can survive the second, third, and tenth reading. When names are honest, spacing is calm, explanations are meaningful, and control flow is explicit, the code begins to feel welcoming instead of mysterious. That is the real payoff of clear Python code: it makes the next change feel like a continuation of the story, not a rescue mission.

Add Type Hints Early

When you first sit down with a fresh Python file, type hints feel a little like sticky labels on moving boxes: they do not change the objects inside, but they make the whole room easier to understand later. If you have ever asked, “When should I add type hints in Python?”, the most helpful answer is early, while the shape of the code is still easy to see. In Python, type hints are annotations that describe the expected types of variables, parameters, and return values, and the language does not enforce them at runtime. Their value shows up in static type checkers, IDEs, and other tools that can read those annotations before the code runs.

That early moment matters because the code is still soft enough to change without friction. When we add type hints at the beginning of a function’s life, we are forced to decide what goes in, what comes out, and whether the name we chose really matches the job it does. PEP 484, the core specification for Python type hints, describes them as a standard syntax for easier static analysis and refactoring, which is a good clue that they are meant to help us think clearly before the project grows teeth. In practice, adding type hints early often feels less like extra paperwork and more like drawing the map before the road gets crowded.

The first payoff is that early type hints make your code easier to read without opening every function body. A parameter like count: int or a return value like -> str gives the next reader a quick promise about behavior, and the Python documentation notes that type hints can aid code completion and refactoring in IDEs. That means your editor can become a quieter partner, catching mismatches and offering the right suggestions while you still have the problem in front of you. For a beginner, that support is valuable because it turns a vague “something feels off” moment into a concrete signal.
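
As a tiny illustration, here is a hypothetical function whose signature uses exactly those two annotations, `count: int` and `-> str`.

```python
def repeat_greeting(name: str, count: int) -> str:
    """Build a greeting repeated count times, one per line."""
    return "\n".join(f"Hello, {name}!" for _ in range(count))
```

Without reading the body, a caller already knows to pass a string and a whole number, and to expect a single string back.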

It also helps to start at the edges, where your code talks to the rest of the program. Public functions, methods, and shared data structures are usually the best places to add type hints first, because those are the points where confusion spreads fastest if the contract is fuzzy. Python’s typing system lets you annotate simple values like int and str, but it also supports more expressive forms through the typing module when your data becomes more complex. That is one reason early Python type hints feel so practical: they grow with you instead of forcing you to redesign your code later.

When the signature starts to look crowded, type aliases can keep the story readable. A type alias is a shorter name for a longer type expression, and the Python documentation says aliases are useful for simplifying complex type signatures. So if you find yourself repeating the same nested list, dictionary, or tuple shape, you can name that shape once and reuse it, which keeps early annotations from becoming a knot of symbols. That small habit makes type hints easier to adopt at the beginning, because you are not choosing between clarity and precision—you can have both.
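
A sketch of that idea, using a made-up gradebook shape. The `Gradebook` alias and the function are assumptions for illustration, and the built-in generic syntax used here needs Python 3.9 or newer.

```python
# A type alias: one readable name for a nested shape that would otherwise repeat.
# It maps a student name to a list of (assignment, score) pairs.
Gradebook = dict[str, list[tuple[str, float]]]


def average_scores(gradebook: Gradebook) -> dict[str, float]:
    """Return each student's mean score; students with no scores are skipped."""
    return {
        student: sum(score for _, score in entries) / len(entries)
        for student, entries in gradebook.items()
        if entries
    }
```

Any other function that works with the same data can reuse `Gradebook`, so the nested type is spelled out exactly once.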

It is also important to remember what type hints are not. Python does not enforce them at runtime, so they do not replace tests, and they do not stop a bad value from reaching your function if you pass one in anyway. What they do offer is an earlier layer of feedback: static checking, safer refactoring, and a clearer path for the next person who reads or edits the code. That is why adding type hints early works so well in modern Python programming: the annotations become part of the design, not an afterthought pasted on once the logic is already hard to untangle.
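
A deliberately wrong call shows the point. The `double` function is hypothetical. The interesting part is that the interpreter runs the mismatched call without complaint.

```python
def double(value: int) -> int:
    """Return twice the given value."""
    return value * 2


# The annotation says int, but Python runs this call anyway.
# A static type checker would flag it; the interpreter does not.
result = double("ab")  # multiplying a str by 2 repeats it, giving "abab"
```

This is why hints and tests work as partners: the checker catches the mismatch before the code runs, and tests catch what the checker cannot see.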

As we keep building, those annotations become quiet guideposts through the rest of the project. They help the code explain itself, they make tools more helpful, and they keep small decisions from turning into larger mysteries. Once that foundation is in place, we can move on with more confidence, because the boundaries of each function are already speaking clearly to us.

Model Data with Dataclasses

Now that our type hints are speaking clearly, we can give the data itself a cleaner shape. This is where Python dataclasses come in, especially when you are tired of writing the same boilerplate over and over for objects that mostly store information. If you have ever wondered, “What is a dataclass in Python?”, think of it as a neat little container that can hold related values and automatically fill in a lot of the boring setup work for us. That makes dataclasses a very friendly way to model data when your code needs names, numbers, dates, or settings to travel together.

The easiest way to feel this is to imagine a simple recipe card. A recipe card has a title, a list of ingredients, and a few instructions, and we do not need a full machine with lots of custom wiring just to keep those pieces together. A dataclass is the same idea in Python: we describe the fields, and Python helps build the rest. With the @dataclass decorator, a small class automatically gets an initializer, a readable representation, and field-by-field equality comparison (ordering comparisons are available as an opt-in), which saves time and keeps our code focused on the meaning of the data instead of the mechanics of storing it.

That mechanical work matters more than it first appears. Without dataclasses, we often have to write an __init__ method, a __repr__ method, and sometimes comparison methods by hand, even when the class does little more than bundle values together. Python dataclasses reduce that repetition, so a class like Customer, Order, or Book stays short and easy to scan. The result is not only less code; it is also less room for mistakes, because we are not copying the same setup pattern into every new data model.
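
To see how little is left to write, here is a minimal sketch of a `Book` class. The field names and sample values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Book:
    title: str
    author: str
    price: float


first = Book("Dune", "Frank Herbert", 9.99)
second = Book("Dune", "Frank Herbert", 9.99)

# __init__, __repr__, and __eq__ were all generated from the three field lines.
description = repr(first)
same_values = first == second  # True: equality compares the fields
```

The representation lists each field by name and value, which makes print-debugging and log messages readable without any extra code.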

What makes Python dataclasses especially useful after type hints is that they turn our earlier labels into a working structure. When we write fields such as name: str or price: float, we are not only describing the data, we are shaping the object around it. That helps both readers and tools understand the class at a glance, which is a quiet but powerful benefit in modern Python programming. In other words, type hints tell the story, and dataclasses give that story a sturdy frame.

As soon as the data gets a little more interesting, we can add defaults and special rules without losing the simplicity. A default value means a field already has a starting value if we do not provide one, and dataclasses.field() gives us finer control when a value needs to be created in a careful way, such as with an empty list for each new object. That detail is easy to miss at first, but it prevents shared-state surprises, where two separate objects accidentally lean on the same mutable data. When people search for how to model data with dataclasses in Python, this is often the moment they need most: enough power to handle real objects, but still inside a clear and readable pattern.
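
The shared-state problem and its fix look like this in a small sketch. The `Order` class and its fields are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Order:
    customer_name: str
    status: str = "pending"  # a plain default is fine for immutable values
    # default_factory calls list() for every new Order, so no two orders share a list.
    items: list[str] = field(default_factory=list)


first_order = Order("Ada")
second_order = Order("Grace")
first_order.items.append("notebook")  # only first_order is affected
```

Writing `items: list[str] = []` instead would not even get this far: dataclasses reject a mutable list default with a ValueError when the class is defined, precisely to prevent the surprise.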

We can also make a dataclass more protective when the data should not change after it is created. A frozen dataclass is one that blocks changes to its fields, which is useful when an object represents something that should stay stable, like a configuration or a coordinate. That is a little like writing values in pencil versus ink; sometimes you want the freedom to edit, and sometimes you want the comfort of knowing the record will stay fixed. Choosing whether a dataclass should be frozen helps us match the class to the job, rather than treating every object the same way.
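
A brief sketch of the ink version, using a made-up `Coordinate` class:

```python
from dataclasses import FrozenInstanceError, dataclass


@dataclass(frozen=True)
class Coordinate:
    latitude: float
    longitude: float


home = Coordinate(52.52, 13.405)

try:
    home.latitude = 0.0  # assignment is blocked on a frozen instance
except FrozenInstanceError:
    change_blocked = True
else:
    change_blocked = False
```

To "change" a frozen object you build a new one, for example with `dataclasses.replace(home, latitude=0.0)`, which leaves the original untouched.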

The real win is that dataclasses help us model the shape of the problem instead of the shape of the syntax. When we are building Python data models, we want the class to read like a small description of the world: this thing has these fields, this field starts with that value, and this object should or should not change later. That clarity makes the next steps easier too, because once the data is well modeled, functions, tests, and even debugging all have something more solid to stand on. From here, we can start asking not only what the data looks like, but how our program should move through it.

Test Behavior, Not Internals

Once your code starts growing, the tempting thing is to test every little mechanism inside it. But the tests that last are the ones that watch what the code does, not how it is assembled. In Python testing, that means we care about behavior: the return value, the raised exception, the saved file, the updated object, or the email that goes out. We do not want our tests to become a mirror of private helper functions, because the moment we refactor, that mirror shatters even when the feature still works.

A good way to picture this is to think about checking a locked door. You do not need to inspect every spring inside the lock to know whether it works; you try the handle, turn the key, and see whether the door opens or stays shut. Behavior-focused tests use the same logic. They ask, “What would a real user, caller, or system observe?” That question keeps our Python tests grounded in the outside world, which is the part that actually matters.

This is where the phrase test behavior, not internals earns its keep. If a function promises to calculate a total, then the test should confirm the total, not the exact sequence of temporary variables used to produce it. If a method promises to reject bad input, then the test should check for the correct error, not whether a private helper happened to run first. That approach makes modern Python programming far less fragile, because we can improve the inside of the machine without rewriting the entire test suite every time.

Here is the kind of shift that helps. Suppose you have a calculate_discount() method on an Order class, and later you decide to move that logic into a separate helper or dataclass method. If your test only checks the final discounted price, it still passes when the internal structure changes. If your test insists on calling the helper directly, it breaks for no user-facing reason. That is why behavior-based testing feels calmer: it protects the promise, not the plumbing.
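
A sketch of such a test might look like the following. The `discounted_price` method, the field names, and the underscore-prefixed helper are assumptions for illustration, and the test is written as a plain function with an assert, the style a runner such as pytest collects.

```python
from dataclasses import dataclass


@dataclass
class Order:
    subtotal: float
    coupon_percent: float = 0.0

    def discounted_price(self) -> float:
        """Public promise: the price the customer pays."""
        return round(self.subtotal - self._calculate_discount(), 2)

    def _calculate_discount(self) -> float:
        # Private detail: free to move or be renamed without touching the test.
        return self.subtotal * self.coupon_percent / 100


def test_coupon_reduces_price() -> None:
    order = Order(subtotal=200.0, coupon_percent=25.0)
    # Only the observable result is checked, never the helper.
    assert order.discounted_price() == 150.0
```

If the discount logic later moves into its own class, this test keeps passing as long as a 25% coupon on 200.0 still yields 150.0.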

There is one place where peeking behind the curtain can still make sense: boundaries. A boundary is where your code talks to something external, such as a database, a web API, or an email service. In those spots, we often use a mock, which is a fake object that stands in for the real dependency during a test. What should we test in Python: the result or the implementation? Usually the result, while using mocks only to avoid slow or risky outside calls. That way, we can confirm that our code asks for the right thing without tying the test to every internal step.
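
Here is a minimal sketch of a mock at a boundary, using the standard-library unittest.mock module. The `send_receipt` function and the email client's `send` method are hypothetical stand-ins for a real service.

```python
from unittest.mock import Mock


def send_receipt(email_client, address: str, total: float) -> None:
    """Ask the email service to deliver a receipt for the given total."""
    email_client.send(to=address, subject="Your receipt", body=f"Total: {total:.2f}")


def test_receipt_is_sent_to_customer() -> None:
    fake_client = Mock()  # stands in for the real email service
    send_receipt(fake_client, "ada@example.com", 42.5)
    # Check the request that crossed the boundary, not how it was assembled.
    fake_client.send.assert_called_once_with(
        to="ada@example.com", subject="Your receipt", body="Total: 42.50"
    )
```

The mock appears only where the code leaves the program. Everything inside `send_receipt` can be rearranged freely as long as the same message goes out.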

This matters even more after the earlier sections on type hints and dataclasses. Those tools help us describe the shape of data clearly, and behavior-focused tests help us prove that the shape still leads to the right outcome. A dataclass may gain a new field, a function may split into two helpers, or a module may move to a new file, and none of that should force a rewrite if the visible behavior stays the same. The test suite then becomes a safety net for Python refactoring, not a trap that punishes improvement.

A simple habit helps here: write the test from the outside in. Start with the result you want to see, then add only the setup needed to reach that result. If the test starts talking about private attributes, hidden helpers, or exact call order, pause and ask whether you are checking behavior or architecture. That small pause often reveals the cleaner path, and it keeps your Python tests readable for the next person who opens the file.

Over time, this way of testing makes the codebase feel sturdier and kinder. You change the internals, and the tests stay quiet unless the promise truly changed. That is the real win: not a test suite full of clever inspections, but one that tells you, with confidence, whether the code still behaves the way you meant it to.

Handle Async Workloads

When our Python code starts waiting on the outside world, the story changes. A web request pauses, a file takes time to open, or one slow service holds up everything behind it, and that is when async workloads become useful. If you have ever wondered, “How do you handle async workloads in Python without freezing the rest of the program?”, the answer begins with one simple idea: we let the waiting happen in a way that keeps the rest of the work moving.

That shift is easier to picture if we think about a busy café. A single barista could stand still and watch one drink finish before starting the next, or they could prepare the next order while the milk steams and the espresso pulls. In Python, asynchronous code works more like the second version. An async function, defined with async def, produces a coroutine when it is called: a piece of work that can pause and resume later, and that pause lets other tasks get attention while one task is waiting.

The part that keeps this motion organized is the event loop, which is the system that watches for paused tasks and resumes them when they are ready. You can think of it as a traffic controller for your program’s waiting time. Instead of creating a separate thread of control for every pause, the event loop keeps a single rhythm and moves between tasks at the right moments. That is why async programming often feels calm once it clicks: nothing is forced to sit idle when it could be doing something useful.

The most important word in that rhythm is await, because it marks the moment when your code says, “I can pause here and come back later.” When we use await, we are telling Python to hand control back to the event loop until the result is ready. This is a big part of modern Python programming because it makes async workloads readable instead of mysterious; the code still looks like a story, even though the story now has interruptions and returns.

It helps to keep one boundary in mind: async is best for waiting, not for heavy number-crunching. If your program spends most of its time asking the network for data, talking to a database, or handling many slow I/O operations, asynchronous code can make the system feel much more responsive. I/O, or input/output, means the back-and-forth between your program and something outside it, like a server or disk. But if the task is mostly pure computation, async programming will not magically make it faster, because the event loop runs its tasks one at a time on a single thread, and a long calculation never pauses to let the others in.

That is why asyncio, Python’s built-in library for async workloads, matters so much. It gives us the basic tools to create coroutines, schedule them together, and manage the event loop without pulling in a complicated framework too early. In practice, this helps us write programs that can fetch several resources, wait on several responses, or coordinate several background tasks while still staying readable. The real benefit is not that everything happens at once, but that waiting stops wasting the whole program’s time.
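
A small sketch shows the effect. Here `asyncio.sleep` stands in for real network waits, and the resource names and delays are invented for illustration.

```python
import asyncio
import time


async def fetch_resource(name: str, delay: float) -> str:
    """Pretend to wait on a slow service; asyncio.sleep stands in for real I/O."""
    await asyncio.sleep(delay)  # hands control back to the event loop
    return f"{name} ready"


async def fetch_all() -> list[str]:
    # gather schedules the coroutines together and returns results in call order.
    return await asyncio.gather(
        fetch_resource("menu", 0.2),
        fetch_resource("prices", 0.2),
        fetch_resource("reviews", 0.2),
    )


start = time.perf_counter()
results = asyncio.run(fetch_all())
elapsed = time.perf_counter() - start  # close to 0.2 s, not 0.6 s
```

The three waits overlap, so the total time tracks the slowest single wait rather than the sum of all three.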

There is one careful habit that beginners learn quickly: once a function becomes asynchronous, its neighbors often need to grow with it. If an async function calls another function that blocks the interpreter for a long time, the event loop can lose its rhythm and the advantage starts to disappear. That means we want to use non-blocking libraries when possible, or move truly blocking work elsewhere so the rest of the async flow stays smooth. This is one of the first places where Python async can feel tricky, and it is completely normal to need a few passes before the pattern feels natural.
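
One way to move blocking work elsewhere is `asyncio.to_thread`, available from Python 3.9. In this sketch, `blocking_report` is a hypothetical stand-in for a library call that cannot be awaited, and `heartbeat` shows that the loop keeps running in the meantime.

```python
import asyncio
import time


def blocking_report() -> str:
    """A stand-in for a blocking call; time.sleep never yields to the event loop."""
    time.sleep(0.2)
    return "report done"


async def heartbeat(ticks: list[str]) -> None:
    for _ in range(3):
        await asyncio.sleep(0.05)
        ticks.append("tick")


async def main() -> tuple[str, list[str]]:
    ticks: list[str] = []
    # to_thread runs the blocking call in a worker thread so the loop stays free.
    report, _ = await asyncio.gather(
        asyncio.to_thread(blocking_report),
        heartbeat(ticks),
    )
    return report, ticks


report, ticks = asyncio.run(main())
```

Calling `blocking_report()` directly inside a coroutine would freeze the heartbeat for the full 0.2 seconds, which is the lost rhythm the paragraph above describes.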

The good news is that async code becomes easier to trust when we treat it with the same care we gave to dataclasses and testing. We still want clear names, small focused functions, and tests that check behavior from the outside, because async workloads are easier to maintain when the surrounding code stays honest and simple. Once the waiting is under control, the program feels less like a line at a counter and more like a well-run kitchen, where several useful things can move forward together without stepping on each other.

Package and Ship Cleanly

When your code is still sitting on your machine, it can hide its rough edges. The moment you try to share it, though, Python packaging stops being a background task and becomes part of the craft: you are turning a working project into something another person can install, trust, and run. How do you package Python code so it feels clean the first time someone else meets it? We start by treating shipping as a design choice, not an afterthought, because modern Python programming works best when the project can travel without bringing your whole laptop along.

The first landmark is pyproject.toml, which is the project’s front door. A pyproject.toml file tells build tools what the package is called, what it depends on, and how it should be assembled, so we do not have to bury that information in scattered files. Think of it like a shipping label that also lists the contents of the box; the label helps other tools know what they are handling before they open anything. This is where clean Python packaging starts to feel calm, because the important details live in one place instead of being hunted down later.

From there, we want the code to live in a shape that makes mistakes harder to hide. A common habit is to place the importable code inside a src/ folder, a project layout in which the real package sits in its own subdirectory instead of floating loose at the top level. Because the project root is then no longer importable by accident, that structure helps us notice accidental imports from the working directory, which can make a project seem ready when it actually only works in one specific folder. When we package and ship Python code cleanly, that little bit of discipline pays off because the code that runs locally is much more likely to be the code that runs after installation too.

Next comes the bundle itself, and this is where two words matter: a wheel and a source distribution. A wheel is a prebuilt package file that installs quickly, while a source distribution is a source archive that other tools can build from later. Both matter because they serve different travelers: one is the ready-to-use suitcase, and the other is the folded pattern that someone can stitch into a suitcase on their own machine. If you have ever wondered, “What should I ship when I publish a Python package?”, the answer is often both, because each one gives users and automation a different path to the same code.

Clean shipping also means telling the truth about what the package needs. Runtime dependencies are the libraries your package must have when someone uses it, while development dependencies are the tools you need while building, testing, or formatting it. Keeping those groups separate makes the package lighter and the intent clearer, which matters a lot when another person installs it in a fresh environment. A version number does similar work: under a convention such as semantic versioning, it tells readers whether they are looking at a small fix, a new feature, or a possible breaking change, so the package’s history stays readable instead of mysterious.

Then we add the human-facing pieces, because a package is not complete until it introduces itself well. A README is the first page people read, and a license is the set of permissions that explains what they are allowed to do with the code. Those two files sound ordinary, but they act like the handshake at the door: they tell users what the project does, how to start, and what relationship they can expect with it. In practical Python packaging, that kind of clarity matters just as much as the code inside the archive, because people trust what they can understand quickly.

The last quiet test is to install the package in a fresh environment and see whether it still behaves the same way it did on your machine. That check reveals missing dependencies, broken imports, and assumptions that only existed in your local setup, which is exactly why modern Python packaging rewards caution before release. We can think of it as the final walk-through before handing over the keys: if a stranger can install the package, read the package metadata, and use it without a guided tour, then we have shipped it cleanly and are ready for the next layer of automation.