Set Summarization Goals

You can compare GPT-4 and free LLMs all day, but the conversation only starts to make sense when you know what the summary is supposed to do. Maybe you want a quick “what happened?” version for a busy manager, or maybe you need a careful digest that preserves names, dates, and decisions for later review. Those are both summarization goals, and they lead to very different choices. If you skip this step, even a powerful model can give you a result that feels polished but misses the real job.

That is why we begin by naming the outcome in plain language. A summarization goal is the specific result you want from the model, such as shortening a report, pulling out action items, or translating a long discussion into a few clear takeaways. In GenAI summarization, that goal acts like the destination on a map: without it, the model may move confidently, but not necessarily in the direction you need. When you set the goal first, you can judge GPT-4 and free LLMs against the same target instead of comparing two vague outputs that were never asked to do the same thing.

What do you actually want the reader to get from the summary? That question sounds simple, but it is the hinge that holds everything together. A summary for a customer email should be short and friendly, while a summary for a legal brief should preserve exact meaning and avoid over-compression. One asks for brevity, another asks for fidelity, which means keeping the original meaning intact. Once you see that difference, you start to understand why model quality is only part of the story; the goal decides what quality even means.

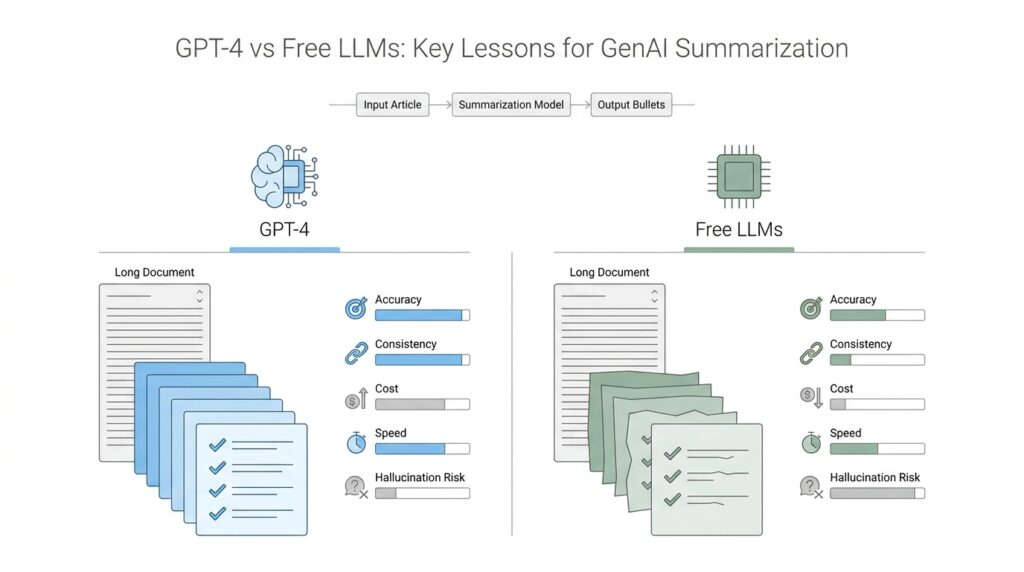

This is also where free LLMs and GPT-4 begin to diverge in practice. A free LLM, or large language model offered at no cost, may be perfectly capable of producing a readable overview, especially when the task is simple and the source text is clean. GPT-4 may handle nuance, longer context, and more careful instruction-following more reliably, especially when the summary needs structure, balance, or subtle distinctions. But neither model can guess your priorities unless you tell them. If your goal is to capture decisions, names, and next steps, then a pretty paragraph that leaves those details behind is not a success.

The easiest way to think about this is to imagine handing the same document to two assistants. One assistant hears, “Make this shorter,” and the other hears, “Tell me the key decisions, the risks, and the actions I need to take.” The first instruction invites a general overview, while the second creates a focused summary with a clear purpose. That difference matters in prompt design because the prompt is how we translate your summarization goals into something the model can follow. When we are careful here, we reduce the chance that GPT-4 or a free LLM will drift into a style that looks helpful but ignores what you actually needed.

You will also save time later by deciding how success will be measured before you generate anything. Do you care most about coverage, which means including the important points? Or do you care most about concision, which means keeping the result very short? Maybe you care about both, but not equally. By choosing that balance upfront, you make GenAI summarization more predictable, and you give yourself a fair way to compare models instead of reacting to whichever one sounds nicest on the first try.

Once the goal is clear, everything downstream becomes easier to shape. You can ask for bullet-like clarity, narrative flow, or strict preservation of key facts, and each choice starts to feel intentional rather than accidental. That is the quiet advantage of setting summarization goals first: it turns a broad model comparison into a focused test, and it gives you a practical lens for deciding whether GPT-4 or a free LLM is the better fit for the job ahead.

Compare Model Strengths

When you start comparing GPT-4 vs free LLMs for summarization, the first thing to notice is that they do not enter the room with the same job description. GPT-4 is built to handle more complex and nuanced language, and OpenAI’s report describes it as stronger on factuality and adherence to desired behavior than earlier models, while still warning that it is not fully reliable and can hallucinate. That matters because a summary is not only about shortening text; it is about keeping the meaning intact while trimming away the noise.

That is where GPT-4 often feels like the steadier guide. If your source material is crowded with names, exceptions, or nested ideas, GPT-4 is more likely to keep the thread in place and follow the shape of your instruction instead of flattening everything into a generic paragraph. In OpenAI’s own framing, GPT-4 was designed to improve understanding and generation in more complex and nuanced scenarios, and it outperformed prior large language models on a broad set of benchmarks. For GenAI summarization, that usually translates into better balance: not too short, not too loose, and not too eager to lose a key detail.

Free LLMs, on the other hand, often shine in a different way: they lower the barrier to entry. If you are experimenting, drafting a rough first pass, or summarizing text that is short and clean, a free model can be enough to get you moving without asking you to commit budget or infrastructure right away. In practical terms, that makes free LLMs useful like a notebook sketch, while GPT-4 feels more like the version you would hand to someone else after the edges matter. The comparison is not about one being “good” and the other being “bad”; it is about how much polish, consistency, and trust you need from the first output.

What do you notice when the stakes rise? GPT-4 begins to show its edge in tasks that demand careful instruction-following and tighter control over tone, structure, and emphasis. OpenAI’s later GPT-4.1 work continues that same direction, highlighting stronger instruction following and long-context handling, which helps explain why the GPT-4 family is often favored when the summary has to stay faithful across longer inputs. That same family of models is still not perfect, but it is built to be more dependable when the text becomes dense and the reader cannot afford a sloppy shortcut.

This is also why the strongest model is not always the best starting point. A free LLM can be the better choice when you are testing a prompt, comparing formats, or asking for a quick digest that you plan to review yourself anyway. GPT-4 tends to earn its keep when you want fewer surprises and less cleanup, especially if you are summarizing material where meaning, sequence, or nuance would be expensive to lose. In other words, GPT-4 vs free LLMs is less a battle and more a question of trust: how much accuracy do you need from the first draft?

Seen this way, model strength is not a single number; it is a match between the model and the moment. GPT-4 gives you stronger language understanding, better instruction following, and a more reliable foundation for careful GenAI summarization, while free LLMs give you access, speed, and a low-friction way to start exploring. Once you recognize that split, the choice becomes much clearer: use the lighter model when the task is forgiving, and reach for GPT-4 when the summary has to carry real weight.

Prompt for Faithful Summaries

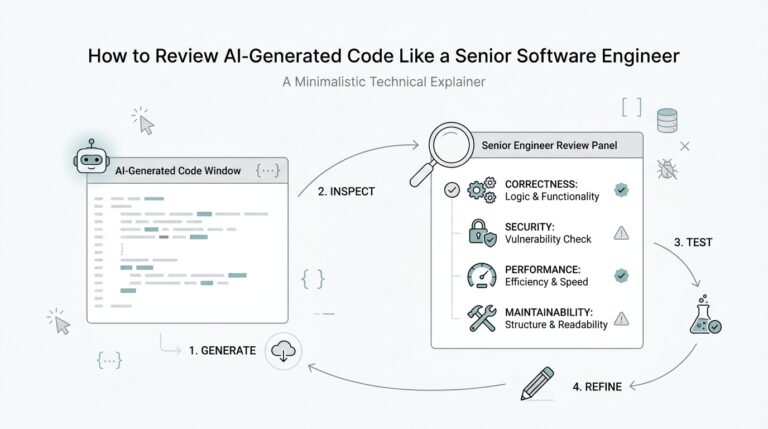

Once we know what the summary should do, the next question is how to ask for it in a way the model can actually follow. OpenAI describes prompt engineering as designing and refining your input so the model gives the best possible answer, and that mindset fits faithful summaries perfectly: you are not asking for a polished blur, you are asking for a shorter version that still carries the original meaning. In GenAI summarization, that means we start by telling the model what must survive the trimming process.

The safest prompt begins by drawing a fence around the source text. Tell the model to use only the provided passage, not outside knowledge, and to say when the source does not support a detail. That matters because OpenAI’s GPT-4 system card notes that language models can still produce unreliable content and hallucinations, which is the word we use when a model invents something that sounds plausible but was never in the text. For faithful summaries, the instruction should feel less like a suggestion and more like a rulebook.

Next, we make the shape of the output visible before the model starts writing. OpenAI’s prompting guidance recommends giving helpful context and describing the ideal output, including tone, format, length, audience, and constraints, because clear instructions help the model stay on task. That is where you spell out whether you want one paragraph, a few short paragraphs, or a fixed number of sentences, and whether the summary should preserve names, dates, decisions, and action items exactly. A faithful summary gets much steadier when the destination is specific.

If you are wondering, “How do I prompt GPT-4 for a faithful summary without losing the thread?”, the answer is to ask for precision in layers. First, tell the model what to keep; then tell it what to ignore; then tell it how to show uncertainty. A prompt like ‘use only the text below, keep proper nouns and dates unchanged, and mark anything unsupported as unknown’ gives the model a clear path. OpenAI also recommends using markdown or XML tags to separate instructions from source material, which helps the model see where your guidance ends and the document begins.

This is also where we can borrow a small trick from careful editors: ask for fidelity before elegance. If the text is dense, the model can be told to prioritize coverage of the main claims, then compress the language only after the facts are safely captured. OpenAI’s GPT-4.1 materials say the model family follows instructions more reliably and handles long context better, which helps when the source is lengthy and the details are spread out. Even so, the prompt still carries the heavy lifting, because a strong model cannot guess which facts matter most unless we name them.

The quiet power of a good faithful-summary prompt is that it turns a vague request into a testable one. We can read the result and check whether the model preserved the original meaning, kept the key facts intact, and resisted the temptation to decorate the answer with invented color. That is the real advantage here: not a prettier summary, but a more trustworthy one. Once the prompt says what must not change, GPT-4 and free LLMs become much easier to compare on the thing that matters most.

Check Hallucination Risk

When you reach the point where a summary looks polished, the next question is almost always the most important one: can you trust it? That is where hallucination risk enters the story, meaning the chance that a model will confidently invent something that was never in the source text. GPT-4 was built to be more capable than earlier models, but OpenAI still says it has known limitations, including hallucinations, so the real task in GenAI summarization is not only making the output shorter but checking whether the model stayed faithful to the original.

This risk matters more in summarization than it does in many other tasks because a summary can hide its mistakes so neatly. If a model changes a date, merges two people into one, or adds a detail that sounded likely, the result may still read smoothly even while the meaning shifts under your feet. OpenAI defines hallucinations as plausible but false statements, and its research notes that models can improve accuracy by guessing more often, even though that also raises the error rate. In other words, a summary can look helpful on the surface while quietly carrying the wrong facts inside it.

That is why GPT-4 vs free LLMs is not really a contest about who writes the prettiest paragraph. It is a question of how well the model can hold onto the source when the text gets crowded with names, exceptions, and nested ideas. OpenAI says GPT-4.1 follows instructions more reliably and handles long-context comprehension better, which is the kind of strength that matters when you are trying to preserve meaning across a long document. A free LLM can still be useful for a rough pass, but the more the job depends on precision, the more you want a model that is less likely to drift when the instructions get specific.

So how do you check hallucination risk before you trust the result? You start by making the model work inside a fence. OpenAI’s prompt guidance recommends putting instructions first and separating them from the source text with clear markers like ### or """, then being specific about length, format, and content requirements. For faithful GenAI summarization, that means telling the model to use only the provided passage, keep names and dates unchanged, and mark anything unsupported as unknown instead of filling in the blanks.

That small change in prompting creates a very practical habit: the model stops behaving like a storyteller and starts behaving like an editor. If you ask, “How do I check hallucination risk in GPT-4 or a free LLM?” the answer is to compare the summary line by line against the source, especially for proper nouns, numbers, and causal claims. OpenAI’s guidance also emphasizes clear instructions and iteration, which means you can tighten the prompt after each pass if the model keeps adding unsupported details. The goal is not to eliminate uncertainty entirely, because even OpenAI says hallucinations remain a hard problem, but to make them visible instead of hidden.

Once you build that habit, hallucination risk becomes easier to manage and less mysterious. You begin to notice which models hold steady under pressure, which ones need more guardrails, and which ones are acceptable only when a human will review the draft carefully. In practice, that is the real lesson for GPT-4 vs free LLMs: use the model that is least likely to invent when the summary has to be faithful, and ask it to say “I don’t know” rather than guess when the source goes silent.

Measure Cost and Speed

Once your prompt is stable and your fidelity checks are in place, the next question gets very practical: what does this summary cost, and how long does it take to get there? With GPT-4 vs free LLMs, the answer is not only about a price tag or a loading spinner. It is about the total effort needed to turn raw text into a usable summary, including retries, cleanup, and the time you spend checking whether the result is trustworthy.

That is why cost should be measured in two layers. The first layer is direct cost, meaning the money you pay to run the model, if any. The second layer is hidden cost, meaning the extra minutes you spend fixing vague wording, correcting missed details, or rerunning a prompt that was too loose. A free LLM can look cheaper on paper, but if it needs three extra passes to produce something dependable, the real cost may be higher than it first appears.

Speed needs the same careful treatment. When people ask, “How do I compare GPT-4 vs free LLMs for speed without fooling myself?”, they often mean response time, which is the number of seconds before the model starts or finishes answering. But in GenAI summarization, the more useful measure is end-to-end speed, which includes the full journey from prompt to final draft. If a model responds quickly but forces you to revise the output by hand, it may not save time at all.

A fair comparison starts with the same input, the same summary goal, and the same instruction style you already defined. Then we watch three things: how long the model takes to respond, how many times we have to regenerate the summary, and how much editing the final version needs. That gives us a clearer picture of summarization performance than a single “fast” or “slow” label ever could. In practice, GPT-4 often feels slower at the first step, but it can return a cleaner draft that reduces downstream work.

This is where throughput matters too. Throughput means how much work a model can complete in a given amount of time, like how many summaries it can produce in an hour. If you are summarizing one document for personal use, throughput may not matter much. But if you are processing many reports, meeting notes, or customer messages, a free LLM might win on raw volume, while GPT-4 wins on consistency per document.

The hidden trick is to measure cost and speed together, not separately. A model that is cheap but requires heavy human review can become expensive in labor. A model that is a little slower but produces a strong first draft can actually be faster in real life, because it reduces the number of correction loops. That is especially important in GPT-4 vs free LLMs comparisons, where the summary quality can change the amount of work you do after the model finishes.

You also want to look at batch behavior, which is what happens when you run many summaries back to back. Some free LLMs are fine for a few test runs but become less predictable when the workload grows, while GPT-4 may hold steadier under repeated use. That matters because GenAI summarization often starts as a one-off experiment and then quietly turns into a workflow. If the model cannot keep pace with your real workload, the fastest demo in the world will not help you later.

So the most useful habit is to time the whole process like a small experiment. Use one sample passage, one prompt, one output format, and one review pass, then compare the money spent, the minutes lost, and the edits needed before you decide which model fits. When you do that, GPT-4 vs free LLMs stops being a vague debate and becomes a concrete tradeoff between speed, cost, and the amount of human repair each summary demands.

Add Human Review

Once the summary exists, we arrive at the part that quietly decides whether it is useful: human review. How do we know when a GenAI summary is good enough to trust? OpenAI’s guidance is a clear reminder that language model output is non-deterministic, can change across model snapshots, and still needs measured oversight, especially when the result will be used in a real workflow. In the context of GPT-4 vs free LLMs, that means the model is not the final judge; a person still needs to read the draft with a careful eye.

This is where the story of summarization becomes less about generation and more about verification. A model can give us a neat paragraph, but neat is not the same thing as faithful, and OpenAI’s GPT-4 report explicitly warns that great care should be taken when using model outputs in high-stakes contexts, with human review being one possible protocol. That warning matters because a summary can look polished while quietly changing a date, smoothing over a disagreement, or omitting the one detail the reader needed most. Human review gives us a second pass that the model cannot provide for itself.

The easiest way to think about human review is as the editor at the end of the table. The model does the first draft work, and the reviewer checks whether the draft still matches the source text, the summarization goal, and the tone we asked for earlier. OpenAI’s prompt engineering guidance recommends clear instructions, explicit boundaries around source text, and use of Markdown or XML tags to separate context from content, which makes review easier because the reviewer knows exactly what the model was supposed to follow. When we add human review, we are not fixing a broken process; we are completing one.

In practice, the review step should feel lightweight but disciplined. A reviewer can scan for unsupported claims, check whether names and numbers survived intact, and ask one simple question: does this summary still answer the original need we defined at the start? OpenAI’s model optimization guidance says developers should write evals, measure performance, and keep tuning because model behavior can shift over time, which is another reason human review cannot be a one-time checkbox. The real value here is consistency: we want the summary to stay reliable even when the input changes, the model version changes, or the task becomes messier than the example we tested.

This is also where GPT-4 vs free LLMs starts to feel more practical than theoretical. GPT-4 may reduce the amount of cleanup a reviewer has to do because it follows instructions more reliably and handles long context better, while a free LLM may still be perfectly useful for a first pass that a human will inspect closely anyway. That difference is not about prestige; it is about how much trust you can place in the first draft before someone steps in. If your workflow already expects human review, a lighter model may be enough for simple summaries, but the moment accuracy matters, the review burden and the model choice should be considered together.

So the real habit to build is not “trust the model” or “distrust the model,” but “trust the process.” We let the model draft, we let the prompt constrain, and then we let a person confirm that the summary still matches the source and the goal. That final human review turns GenAI summarization from a fast guess into a dependable workflow, and it gives us the confidence to choose between GPT-4 and free LLMs based on risk, not optimism.