Identify Hang-Up Triggers

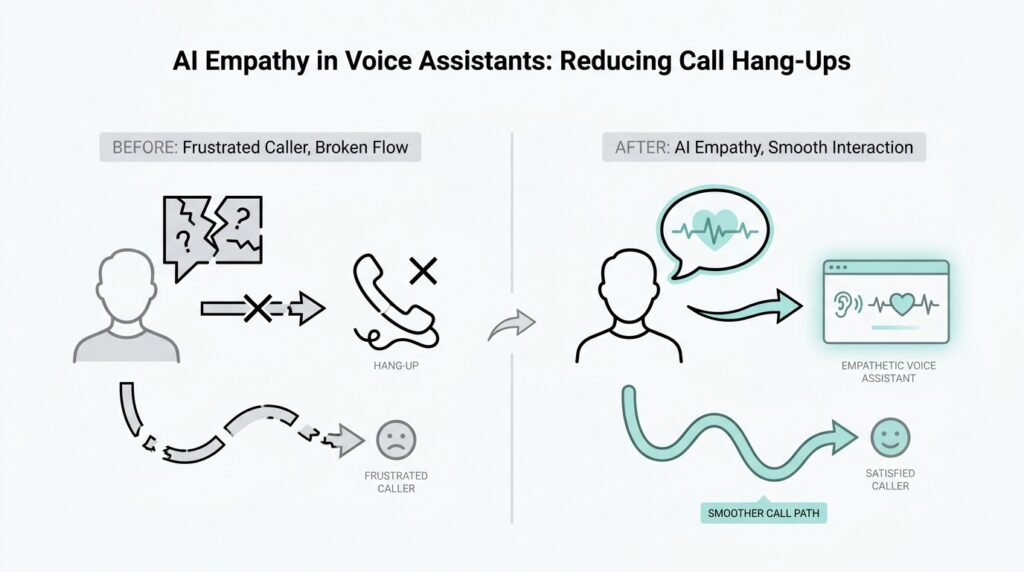

When a caller hangs up, it usually does not happen out of nowhere. More often, a hang-up begins with a small friction point that feels minor to us but feels huge to the person on the line. If we want AI empathy in voice assistants to make a real difference, we first have to learn where that friction starts, because the earliest warning signs often hide in ordinary moments like a long pause, a repeated question, or a tone that sounds a little too mechanical. What makes people hang up on a voice assistant in the first place? That is the question we need to answer before we can reduce the drop-off.

The easiest way to think about hang-up triggers is to picture a conversation like walking through a doorway together. If the door sticks, the person on the other side notices immediately, even if the problem seems small from our side. In voice support, those sticking points can include long wait times, slow responses, confusing prompts, or a system that asks for information the caller already gave. Each one creates a tiny burst of frustration, and those bursts add up fast.

One of the most common triggers is silence. When a voice assistant pauses too long after a caller speaks, the caller starts wondering whether the system heard them at all. That uncertainty can feel worse than an actual error, because people do not know whether to repeat themselves, wait, or give up. For an empathetic voice assistant, even a short delay can change how safe and understood the interaction feels, which is why response timing matters as much as the words themselves.

Another trigger is repetition without recognition. Imagine explaining your problem to three different people and hearing the same scripted question each time. That is how a caller feels when the assistant forgets previous answers or loops back to the beginning. This kind of break in conversational memory is one of the clearest hang-up triggers, because it tells the caller, “You are not being heard as an individual.”

Tone matters just as much as timing. A voice assistant can say the right thing and still sound cold, rushed, or dismissive. When the language feels rigid, people often sense that the system cares more about following a script than helping them reach a solution. That is where AI empathy in voice assistants becomes practical, not decorative: the goal is not to pretend to be human, but to sound attentive, calm, and responsive enough that the caller stays engaged.

We also need to watch for moments of confusion in the flow of the call. If the assistant gives too many choices at once, uses unfamiliar terms, or jumps ahead before the caller is ready, the conversation begins to feel like a maze. For beginners, this is where terminology can trip things up, so it helps to remember that a prompt is simply the system’s question or instruction, and a handoff is when the call moves from the assistant to a human agent. Poor prompts and awkward handoffs are both classic hang-up triggers because they make the caller work harder than expected.

The most useful way to identify these triggers is to listen for patterns, not just isolated complaints. If many callers hang up after a certain question, after a second failed attempt, or right before a transfer, that is a signal worth paying attention to. We are not looking for one dramatic failure; we are looking for the small repeated moments where confidence slips. Once we can name those moments clearly, we can design smarter responses, smoother transitions, and more human-feeling support that keeps the conversation moving instead of letting it end too soon.
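To make that pattern hunt concrete, here is a minimal sketch that counts which step callers most often heard right before hanging up. The call logs, the step names, and the `hangup` marker are all hypothetical; a real system would read these from its own call records.

```python
from collections import Counter

# Each call is a list of step names in order; the last entry is how the call ended.
# These step names are invented labels for illustration only.
calls = [
    ["greeting", "ask_account_number", "hangup"],
    ["greeting", "ask_account_number", "retry_account_number", "hangup"],
    ["greeting", "ask_account_number", "resolved"],
    ["greeting", "transfer_notice", "hangup"],
    ["greeting", "ask_account_number", "hangup"],
]

def last_step_before_hangup(call_steps):
    """Return the step immediately before a hang-up, or None if the call did not end in one."""
    if len(call_steps) >= 2 and call_steps[-1] == "hangup":
        return call_steps[-2]
    return None

def hangup_hotspots(all_calls):
    """Count how often each step is the last thing a caller heard before hanging up."""
    steps = (last_step_before_hangup(c) for c in all_calls)
    return Counter(s for s in steps if s is not None)

hotspots = hangup_hotspots(calls)
for step, count in hotspots.most_common():
    print(f"{step}: {count}")
```

A step that keeps landing at the top of this list is a candidate trigger worth listening to in actual recordings, not proof of a cause on its own.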

Map Emotional Caller Journeys

Now that we know where the friction starts, the next step is to trace how it spreads. A caller journey is the path a person takes from the first greeting to the final outcome, and an emotional journey is the changing mix of feelings they carry along that path. When we map emotional caller journeys, we stop treating call hang-ups like random exits and start seeing them as the last step in a story that already felt heavy. That shift matters for AI empathy in voice assistants, because empathy works best when it responds to feelings at the right moment, not after the caller has already left.

How do you map an emotional caller journey when the caller never says, “I’m frustrated,” out loud? We start by listening for emotional signals in the flow of the call. Those signals show up in the pace of speech, the length of pauses, the number of repeated attempts, and the point where the voice changes from patient to tense. In other words, we are not only mapping what the caller did, but also what the caller likely felt while doing it.

The easiest way to picture this is to imagine a road trip with a weather map layered on top. The road is the conversation itself, while the weather is the caller’s emotional state. Early on, the road may feel smooth and the weather calm, but one confusing prompt can turn the sky cloudy, and a second failure can bring a storm. This is why call hang-ups often make more sense when we see them as the end of a worsening emotional sequence instead of a single bad moment.

A useful emotional caller journey usually has a few recognizable stages. At the beginning, the caller often arrives hopeful, even if they are already stressed about the problem that brought them in. Then comes the test: the assistant asks a question, offers a choice, or requests a detail, and the caller decides whether the interaction feels helpful or tiring. If the assistant repeats itself, misses context, or sounds stiff, the caller moves from hopeful to cautious, then from cautious to irritated, and finally to the quiet decision to leave. That progression is important because AI empathy in voice assistants should answer each stage differently, not with the same tone every time.

This is where the mapping becomes practical. We can mark the moments when trust rises, holds steady, or slips away. Trust rises when the assistant remembers context, confirms understanding, and moves the conversation forward without making the caller repeat themselves. Trust slips when the system sounds uncertain, asks for unnecessary information, or makes the caller feel like they are working harder than the machine. Once we see those turning points clearly, we can design better responses that reduce call hang-ups before they happen.

Emotional mapping also helps us spot the difference between a minor annoyance and a breaking point. A caller may tolerate one slow response, but not three in a row. They may forgive one confusing menu option, but not a transfer that forces them to start over. That pattern tells us something valuable: people do not hang up only because of one mistake, but because the call starts to feel emotionally expensive. When we understand that cost, we can shape AI empathy in voice assistants to lower it with reassurance, clarity, and steadier conversational pacing.
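One rough way to picture that emotional cost is as a running total that eventually crosses a breaking point. The sketch below assumes made-up weights and a made-up threshold; both would need to be tuned against real hang-up data before they mean anything.

```python
# Hypothetical weights: how much each friction event "costs" the caller.
FRICTION_WEIGHTS = {
    "slow_response": 1.0,
    "repeated_prompt": 1.5,
    "confusing_menu": 1.0,
    "transfer_restart": 3.0,
}
BREAKING_POINT = 3.0  # assumed threshold, not a measured value

def breaking_point_index(events, weights=FRICTION_WEIGHTS, threshold=BREAKING_POINT):
    """Return the index of the event where cumulative friction first reaches the threshold, or None."""
    total = 0.0
    for i, event in enumerate(events):
        total += weights.get(event, 0.0)  # unknown events add no cost
        if total >= threshold:
            return i
    return None

# One slow response is tolerated; the third in a row crosses the line.
print(breaking_point_index(["slow_response"]))
print(breaking_point_index(["slow_response"] * 3))
```

With these assumed numbers, a single transfer that forces a restart reaches the threshold by itself, which mirrors the difference between an annoyance and a breaking point.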

The real payoff comes when we treat this journey as a design tool, not a reporting exercise. Once we can name the emotional highs and lows, we can decide where the assistant should slow down, where it should confirm understanding, and where it should hand off with more care. That gives us a clearer way to reduce call hang-ups, because we are no longer reacting to the final dropout alone. We are reading the whole emotional path that led there, and that gives us a much better chance of keeping the caller with us for the next step.

Write Empathetic Voice Copy

When a caller reaches a voice assistant, the words they hear first can either lower their shoulders or tighten them. That is why empathetic voice copy matters so much: it is the written language a system speaks out loud, and it often decides whether a caller keeps going or gives up. When we design AI empathy in voice assistants, the goal is not to sound theatrical or overly warm; it is to sound like a steady guide who understands the moment the caller is in.

If you have ever wondered, “How do I make a voice assistant sound empathetic without sounding fake?” the answer starts with restraint. Empathetic copy works best when it acknowledges the caller’s situation in plain language, then moves forward with purpose. A line like “I can help with that” does more than fill space; it tells the caller they have not arrived in a void. That small signal of recognition can soften the pressure that often leads to call hang-ups.

The next step is to write for the caller’s mind, not for the system’s logic. A voice assistant may know the menu perfectly, but the caller is usually thinking, “What happens next?” Good empathetic voice copy answers that question before frustration has a chance to grow. It uses short, clear sentences, familiar words, and one idea at a time, because a caller under stress does not want to decode a script while also solving a problem. This is where AI empathy in voice assistants becomes practical: clarity itself feels caring.

Tone is the quiet bridge between helpful and cold. When a phrase sounds rushed, absolute, or robotic, the caller can feel pushed instead of supported. When it sounds calm, respectful, and human in rhythm, the interaction feels easier to trust. That does not mean pretending the assistant has feelings; it means choosing language that respects the caller’s feelings, especially when the call is already difficult.

Empathetic voice copy also needs little moments of reassurance. These are the short phrases that tell the caller they are still on track, such as confirming what the system heard, explaining why a question matters, or warning before a transfer happens. Think of reassurance as the handrail on a staircase: the stairs still exist, but the handrail makes the climb feel safer. Without that support, even a useful process can feel like one more barrier and contribute to call hang-ups.

A strong voice script also avoids making the caller carry extra work. If the system already has a detail, it should not ask for it again unless there is a real reason. If a step is optional, the copy should say so. If a transfer is coming, the assistant should prepare the caller instead of surprising them. These are small choices, but they are exactly where AI empathy in voice assistants turns from an abstract idea into something the caller can feel in real time.

The best voice copy also leaves room for recovery when things go wrong. A failed recognition, a misunderstood answer, or a delay does not have to end the conversation if the assistant responds with patience and ownership. Phrases that calmly acknowledge the problem and offer a clear next step keep the interaction moving instead of freezing it in place. That matters because callers often stay engaged not when everything goes perfectly, but when the system handles the imperfect moment without making them feel blamed.

In practice, writing empathetic voice copy means listening for emotion in every line. We are not only asking, “Is this accurate?” We are also asking, “Would this feel supportive if I were tired, confused, or frustrated?” That simple shift changes the whole script. It helps reduce call hang-ups by making the conversation feel lighter, clearer, and more human, one spoken sentence at a time.

Cut Waits With Smart Routing

The moment a caller feels stuck, patience starts to drain fast. That is why smart routing matters so much: it is the system’s way of sending a caller to the right place, at the right time, with the fewest unnecessary pauses. For AI empathy in voice assistants, cutting waits is not only about speed; it is about making the caller feel guided instead of bounced around. When people ask, “How do you cut waits with smart routing?” they are really asking how to remove the hidden friction that makes a call feel longer than it should.

The first move is to decide what the caller actually needs before we send them anywhere. That need is often called the caller’s intent, which means the reason they reached out in the first place, like billing help, a password reset, or a refund question. A smart routing system listens for clues in the opening exchange, then uses that signal to choose the best path instead of dropping the caller into a generic queue (the waiting line for support). When an empathetic voice assistant uses intent well, the caller spends less time repeating themselves and more time moving toward a real answer.

This is where the routing feels a little like a good host at a busy event. A rushed host sends everyone to the same crowded room and hopes for the best, while a careful host listens, asks one good question, and points each guest to the right table. Smart routing does the same thing by matching the caller’s issue with the right agent, the right self-service flow, or the right escalation path. That match matters because long waits often happen when the system sends people to the wrong place first and makes them start over.

One practical way to reduce call hang-ups is to route by urgency as well as topic. Urgency means how quickly the caller needs help, and it can show up in the language they use, the kind of account they have, or whether they are facing an active problem. A lost card, for example, should not sit in the same line as a general account question. When the assistant recognizes that difference and routes accordingly, it shows AI empathy in voice assistants in a very concrete way: it respects the caller’s time and the pressure they are under.
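Routing by urgency as well as topic can be sketched as a priority queue. The example below uses Python's standard `heapq` module; the intent names and urgency levels are assumptions for illustration, and a production contact center would typically configure this in its routing platform instead of hand-rolling it.

```python
import heapq
import itertools

# Hypothetical urgency levels: a lower number is served first.
URGENCY = {"lost_card": 0, "active_outage": 0, "billing": 1, "general_question": 2}

class PriorityCallQueue:
    """Serve the most urgent callers first, and first-come-first-served within a level."""

    def __init__(self):
        self._heap = []
        self._order = itertools.count()  # tie-breaker that preserves arrival order

    def add(self, caller_id, intent):
        urgency = URGENCY.get(intent, 2)  # unknown intents get the lowest priority
        heapq.heappush(self._heap, (urgency, next(self._order), caller_id, intent))

    def next_caller(self):
        _, _, caller_id, intent = heapq.heappop(self._heap)
        return caller_id, intent

queue = PriorityCallQueue()
queue.add("caller-1", "general_question")
queue.add("caller-2", "lost_card")
queue.add("caller-3", "billing")
first = queue.next_caller()
print(first)
```

Even though the lost-card caller arrived second, they are served first, which is the behavior the paragraph above describes.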

Smart routing also works best when it remembers what the caller already shared. If someone has already said their ZIP code, account type, or problem category, the system should pass that information forward instead of making the caller repeat it like a broken record. This is often called context, which means the surrounding details that help a conversation make sense. Context-aware routing trims the awkward pauses that happen when a caller is transferred into a new conversation with none of the earlier pieces intact, and that loss of continuity is a common reason people hang up.

Another piece of the puzzle is setting clear rules for when the assistant should stay in charge and when it should hand off. A handoff is the moment the call moves from the voice assistant to a human agent, and if that step happens too late, the caller may feel trapped; if it happens too early, they may feel like they were sent away without effort. The best routing systems use the assistant as a filter, not a wall. They let the voice assistant handle simple requests quickly, then pass more complex or emotional cases to a person before frustration builds.
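Those rules can be written down explicitly so they are easy to review and adjust. This is a minimal sketch: the `CallState` fields, the sentiment scale, and the default limits are all assumed values, not recommendations.

```python
from dataclasses import dataclass

@dataclass
class CallState:
    failed_attempts: int    # recognition failures so far
    sentiment: float        # assumed scale: -1.0 (upset) to 1.0 (calm)
    intent_is_simple: bool  # whether a self-service flow exists for this intent
    asked_for_human: bool   # whether the caller explicitly requested an agent

def should_hand_off(state, max_failures=2, sentiment_floor=-0.4):
    """Hand off when the caller asks, or when complexity or frustration crosses an assumed limit."""
    if state.asked_for_human:
        return True
    if not state.intent_is_simple:
        return True
    if state.failed_attempts >= max_failures:
        return True
    return state.sentiment <= sentiment_floor

calm_simple = CallState(failed_attempts=0, sentiment=0.3, intent_is_simple=True, asked_for_human=False)
struggling = CallState(failed_attempts=2, sentiment=0.1, intent_is_simple=True, asked_for_human=False)
print(should_hand_off(calm_simple), should_hand_off(struggling))
```

Keeping the thresholds as parameters makes it straightforward to move the handoff earlier or later as real call data comes in.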

We also need to think about load balancing, which means spreading calls across available agents so no one route gets overloaded while another sits empty. This may sound technical, but the caller experiences it as fairness and speed. If one queue is backing up, smart routing can quietly shift traffic to a shorter line or an available specialist, which keeps the conversation moving and lowers the chance of a drop-off. For AI empathy in voice assistants, that kind of invisible support can feel like care, even though it is really careful system design.
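As a simple illustration of that shift, the sketch below picks the least loaded queue among those able to handle the caller's issue. The queue records and the load measure (waiting callers per available agent) are assumptions; real platforms usually estimate wait time with richer data.

```python
def pick_queue(queues, required_skill):
    """Choose the eligible queue with the lowest load, or None if no queue has the skill.

    queues: list of dicts with 'name', 'skills', 'waiting', and 'agents_available'.
    """
    eligible = [q for q in queues if required_skill in q["skills"]]
    if not eligible:
        return None

    def load(q):
        # Waiting callers per available agent: a rough proxy for expected wait (assumption).
        return q["waiting"] / max(q["agents_available"], 1)

    return min(eligible, key=load)["name"]

queues = [
    {"name": "billing-a", "skills": {"billing"}, "waiting": 8, "agents_available": 2},
    {"name": "billing-b", "skills": {"billing", "refunds"}, "waiting": 3, "agents_available": 3},
    {"name": "tech", "skills": {"tech"}, "waiting": 0, "agents_available": 5},
]
print(pick_queue(queues, "billing"))
```

The empty tech queue is never chosen for a billing call, because sending a caller to the wrong place first is exactly the kind of wait we are trying to remove.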

The real strength of smart routing is that it reduces the moments when callers have to wait, guess, or explain themselves twice. Instead of treating the line as a holding area, we use routing to shape a smoother path from greeting to resolution. That is how call hang-ups start to fall: not by promising perfect service, but by removing the delays that make callers feel forgotten. From here, the next step is to look at how the assistant can keep that path steady once the caller is actually in motion.

Add Callback And Human Handoffs

Sometimes the best way to keep a caller from hanging up is to stop asking them to wait in the first place. A callback, meaning a scheduled return call, gives the person a break while preserving their place in line, and a human handoff is the moment the assistant passes the conversation to a live agent. In AI empathy in voice assistants, those two moves often matter more than one more polished prompt, because they tell the caller, in plain terms, that their time and frustration have been noticed.

When the queue starts to stretch, a callback can feel like a kind exit instead of a dead end. AWS documentation describes callbacks as a way for customers to keep their position in queue without staying on the call, and the system can preserve the original call start time rather than treating the callback request as a fresh place in line. That detail sounds small, but it changes the emotional script: the caller does not feel like they are starting over, which helps reduce the pressure that often leads to call hang-ups.
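The idea of keeping a caller's place can be sketched in a few lines. This is an illustration of the concept only, not how Amazon Connect implements it; the `QueueEntry` type and `request_callback` helper are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass(order=True)
class QueueEntry:
    original_start: float                        # when the caller first called, in seconds
    caller_id: str = field(compare=False)        # excluded from ordering
    is_callback: bool = field(compare=False, default=False)

def request_callback(entry):
    """Convert a waiting call into a callback without resetting its place in line."""
    return QueueEntry(entry.original_start, entry.caller_id, is_callback=True)

waiting = [QueueEntry(100.0, "A"), QueueEntry(130.0, "B"), QueueEntry(160.0, "C")]

# Caller A asks for a callback at t=170; we keep original_start=100.0 rather than 170.0.
waiting[0] = request_callback(waiting[0])

# Entries sort by original_start only, so A is still served first.
service_order = [e.caller_id for e in sorted(waiting)]
print(service_order)
```

Had the callback request reset the timestamp to 170, caller A would have dropped behind B and C, which is the "starting over" feeling the paragraph warns about.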

The real empathy comes from how we present the choice. If we say, “Hold and wait,” the caller hears a demand; if we say, “We can call you back and keep your place,” they hear a plan. Amazon Connect’s callback options also support retry timing and queue handling, which means the system can be designed to respect both the caller’s patience and the team’s capacity. So when people ask, “How do you reduce call hang-ups without making the caller feel brushed aside?” a well-timed callback is often part of the answer.

Human handoffs need the same care, just at a different moment. Google Cloud defines handoff as moving a conversation from a virtual agent to a human agent, and Amazon Connect says a contact can be transferred from a bot to an agent with all context preserved. That is the key phrase to hold onto: all context preserved means the human should not walk in blind. The caller should not have to repeat their story like a broken record, because repetition is one of the fastest ways to make AI empathy in voice assistants feel fake.

Think of the handoff like opening a door while carrying the caller’s belongings with you. The assistant should pass along the issue, the steps already tried, and any sign that the caller is tired, upset, or in a hurry. Twilio’s voice guidance similarly emphasizes richer call context before routing people to agents, which matches the practical goal here: the human should arrive prepared, not improvising from scratch. That one change turns the handoff from a hard transfer into a warm one.
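What the assistant carries through that door can be modeled as a small context record. The field names below are hypothetical and do not correspond to any vendor's handoff schema; the point is only that the issue, the steps tried, and the caller's state travel together.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class HandoffContext:
    caller_id: str
    issue: str
    steps_tried: list = field(default_factory=list)
    emotional_signals: list = field(default_factory=list)  # e.g. "in a hurry"

    def agent_summary(self):
        """One line the human agent can read before saying hello."""
        tried = ", ".join(self.steps_tried) or "nothing yet"
        signals = ", ".join(self.emotional_signals) or "none noted"
        return f"Issue: {self.issue}. Already tried: {tried}. Caller signals: {signals}."

ctx = HandoffContext(
    caller_id="caller-42",
    issue="card declined at checkout",
    steps_tried=["verified identity", "checked balance"],
    emotional_signals=["in a hurry"],
)
payload = json.dumps(asdict(ctx))  # what would be passed along with the transfer
print(ctx.agent_summary())
```

A short summary like this lets the agent open with what they already know instead of asking the caller to start again.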

The best systems use callback and human handoffs together, not as competitors but as escape valves for different kinds of strain. Callback helps when the problem is time; human handoff helps when the problem is complexity, emotion, or a situation the assistant should not keep stretching out. Used this way, AI empathy in voice assistants becomes very concrete: we lower the caller’s effort, we reduce the feeling of being trapped, and we make the next step obvious before frustration has time to grow.

So as you design this part of the journey, ask two simple questions: did we offer a callback soon enough, and did the handoff carry enough context for the human to continue without restarting? If either answer is no, the caller has to do extra emotional work, and that extra work is often what turns a difficult call into a hang-up. When we get both answers right, callback and human handoffs stop being backup plans and start becoming some of our most practical tools for keeping people engaged.

Track Abandonment And Sentiment

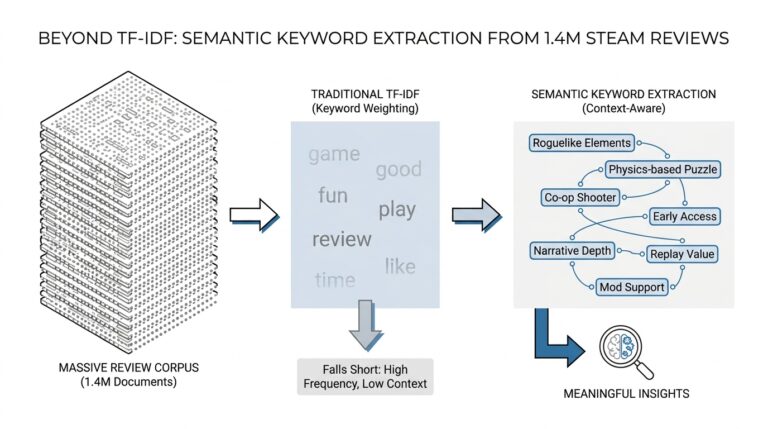

Once we start spotting triggers, journeys, and handoffs, the next question becomes much more practical: how do we know whether any of it is working? That is where abandonment and sentiment tracking come in, because they turn a vague feeling like “people seem frustrated” into something we can actually watch, compare, and improve. If you have ever wondered, “How do you measure call hang-ups before they become a bigger problem?” this is the part of the process where the answer starts to take shape.

Abandonment rate is the simplest place to begin. It means the share of calls that end before the caller reaches the outcome they wanted, and it gives us a blunt but useful signal that something in the experience is pushing people away. On its own, that number does not explain the whole story, but it acts like a smoke alarm: if it rises, we know the conversation is losing people somewhere. In AI empathy in voice assistants, that is a warning worth taking seriously, because call hang-ups usually build from small moments long before the final disconnect.
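As a quick worked example, the rate is simply abandoned calls divided by total calls. The numbers below are invented for illustration.

```python
def abandonment_rate(total_calls, abandoned_calls):
    """Share of calls that ended before the caller reached their outcome."""
    if total_calls <= 0:
        return 0.0  # no calls means nothing to measure
    return abandoned_calls / total_calls

# Hypothetical week: 1,000 calls, 120 of which were abandoned.
rate = abandonment_rate(total_calls=1000, abandoned_calls=120)
print(f"{rate:.1%}")  # prints 12.0%
```

How a team decides that a call counts as abandoned (for example, whether very short calls are excluded) is a definition choice that should be fixed before comparing numbers over time.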

Sentiment tracking adds the emotional layer. Sentiment means the feeling behind the words, such as calm, neutral, irritated, or upset, and we can estimate it by listening to language, pacing, repeated objections, and changes in tone. Think of abandonment as the exit sign and sentiment as the weather along the road; one tells us where people left, and the other helps us understand what it felt like to keep going. When we track both together, we stop guessing whether a caller left because they were confused, impatient, or angry, and we start seeing the shape of the problem more clearly.

The real value shows up when we connect sentiment shifts to specific moments in the call. A caller may sound fine during the greeting, then become sharper after a long pause, a repeated prompt, or a transfer that came too late. That pattern tells us something important: the issue is often not the entire conversation, but the exact point where trust started to slip. AI empathy in voice assistants works best when we can pair those emotional dips with the design choices that caused them, because then we can fix the right part of the journey instead of repainting the whole house.
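Finding the point where trust started to slip can be sketched as locating the largest drop in a per-step sentiment timeline. The step names and the sentiment scores (on an assumed scale from -1.0 to 1.0) are hypothetical; in practice they would come from whatever sentiment model the team uses.

```python
def largest_sentiment_drop(timeline):
    """Return (step_name, drop) for the step where sentiment fell the most, or None.

    timeline: list of (step_name, sentiment) pairs in call order.
    """
    worst = None
    # Compare each step with the one before it.
    for (_, previous), (step, current) in zip(timeline, timeline[1:]):
        drop = previous - current
        if drop > 0 and (worst is None or drop > worst[1]):
            worst = (step, drop)
    return worst

timeline = [
    ("greeting", 0.4),
    ("ask_account", 0.3),
    ("long_pause", -0.2),
    ("repeat_prompt", -0.5),
]
worst = largest_sentiment_drop(timeline)
print(worst[0])
```

Here the call ends at its lowest sentiment after the repeated prompt, but the sharpest fall happened at the long pause, which is the moment worth fixing first.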

A useful dashboard should show both the number and the story. We want to see where abandonment spikes, which call paths lose people most often, and which moments tend to produce negative sentiment before a drop-off happens. That might mean looking at transfers, repeated recognition failures, long silences, or confusing options, then asking whether the same pattern shows up again and again. This is where sentiment tracking becomes more than a report; it becomes a map of pressure points that helps us reduce call hang-ups with targeted changes.

It also helps to compare caller groups, because not every caller experiences the system the same way. First-time callers may sound uncertain sooner, while returning callers may get frustrated faster if they expect the assistant to remember them. A frustrated caller and a confused caller may both hang up, but they leave for different reasons, and good AI empathy in voice assistants needs to recognize that difference. When we segment abandonment and sentiment by issue type, queue, or caller history, the patterns become far more useful than a single overall score.
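Segmenting is mostly a grouping exercise. The sketch below computes an abandonment rate per segment from a list of call records; the record fields (`caller_type`, `abandoned`) and the sample data are assumptions for illustration.

```python
from collections import defaultdict

def abandonment_by_segment(calls, key):
    """Return {segment: abandonment rate} grouped by the given record field."""
    totals = defaultdict(int)
    abandoned = defaultdict(int)
    for call in calls:
        segment = call[key]
        totals[segment] += 1
        if call["abandoned"]:
            abandoned[segment] += 1
    return {segment: abandoned[segment] / totals[segment] for segment in totals}

calls = [
    {"caller_type": "first_time", "abandoned": True},
    {"caller_type": "first_time", "abandoned": False},
    {"caller_type": "returning", "abandoned": True},
    {"caller_type": "returning", "abandoned": False},
    {"caller_type": "returning", "abandoned": False},
    {"caller_type": "returning", "abandoned": False},
]
rates = abandonment_by_segment(calls, "caller_type")
print(rates)
```

The same function works for any field on the record, so swapping `caller_type` for a queue name or issue type gives a different cut of the same data.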

The best teams do not treat these signals as a scoreboard for blame. They use them like a conversation with the system itself, asking where the experience felt heavy, where it stayed steady, and where it broke trust. That mindset matters because a high abandonment rate is not just a performance problem; it is often a sign that callers felt unheard, rushed, or forced to do too much work. When we read sentiment alongside abandonment, we can see those emotional costs early enough to respond with clearer copy, smarter routing, or a better handoff.

And that is the real payoff of tracking both. We are no longer waiting for a hang-up to tell us something went wrong; we are watching the buildup in real time and using it to shape the next interaction. In practice, that means AI empathy in voice assistants becomes measurable, not mythical, and the system can keep improving because we know what callers felt, when they felt it, and where the conversation began to slip.