Data Ingestion Basics

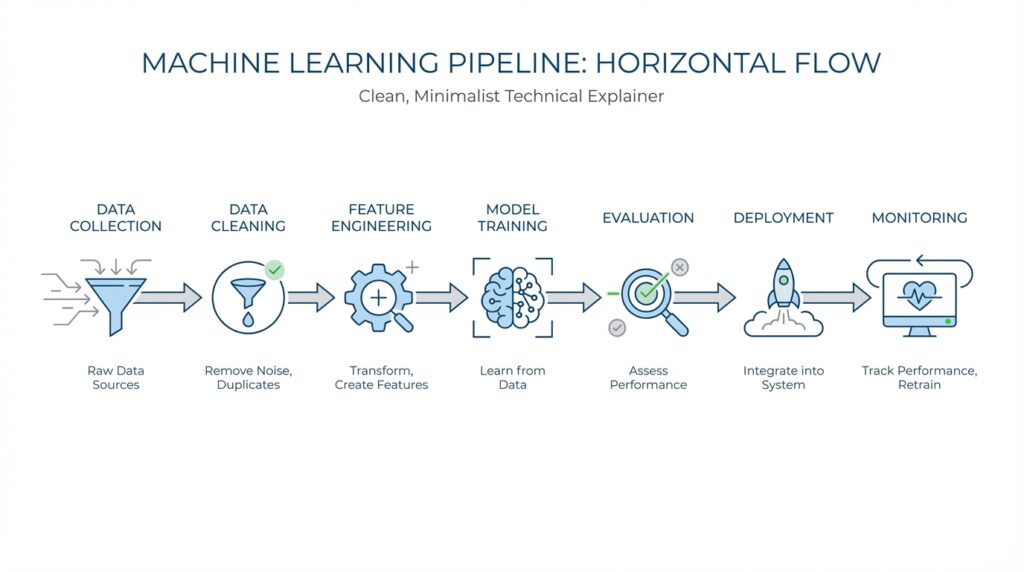

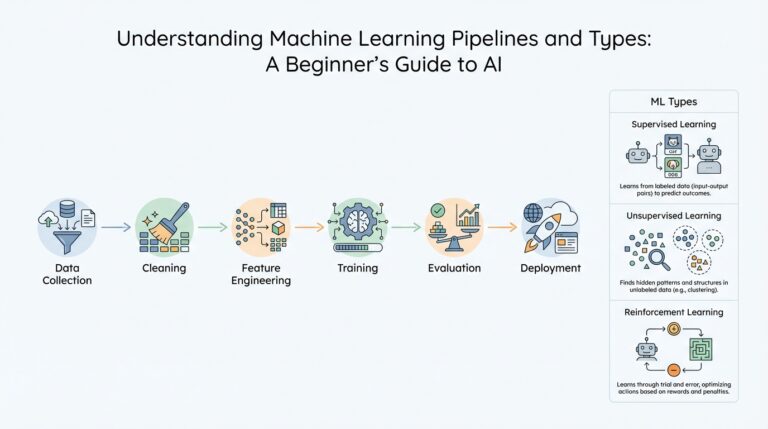

Building on this foundation, data ingestion is the part of the machine learning pipeline where raw information first enters your system. It is the bridge between the places data lives, such as databases, application logs, files, or sensors, and the place where your model can actually use it. How do you get data from all those different sources into one reliable workflow without creating a mess? That is the question ingestion answers, and it matters because even the smartest model cannot learn from data it never receives.

At a beginner level, think of data ingestion like bringing ingredients into a kitchen before cooking begins. Some ingredients arrive in a box once a week, while others come fresh throughout the day, and both need a place to land before the recipe can start. In the same way, data ingestion collects records from source systems and moves them into a storage layer, a processing layer, or both. This step often includes extracting the data, checking that it looks usable, and loading it where the rest of the pipeline can find it.

The next thing to understand is that not all data arrives the same way. In batch ingestion, you collect data in chunks at scheduled times, like pulling yesterday’s sales records every night. In streaming ingestion, data arrives continuously, like a live feed from a website, payment system, or device sensor. Batch is often easier to manage, while streaming gives you fresher data; choosing between them depends on how quickly your model needs new information and how much complexity you are ready to handle.
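To make the contrast concrete, here is a minimal sketch that treats a source as any Python iterable. The names `stream_records` and `batch_records` and the `batch_size` parameter are hypothetical, chosen only for illustration; a real system would read from a queue, a file drop, or a database instead.

```python
from typing import Dict, Iterable, Iterator, List

Record = Dict[str, object]


def stream_records(source: Iterable[Record]) -> Iterator[Record]:
    """Streaming style: pass each record on as soon as it is available."""
    for record in source:
        yield record


def batch_records(source: Iterable[Record], batch_size: int) -> Iterator[List[Record]]:
    """Batch style: collect records into chunks before handing them on."""
    batch: List[Record] = []
    for record in source:
        batch.append(record)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        # Hand over the final, possibly smaller, chunk.
        yield batch
```

The streaming version gives downstream code one record at a time, while the batch version trades freshness for fewer, larger hand-offs.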

This is where quality checks become part of the story, not an afterthought. If a column changes name, a file arrives empty, or a date is formatted differently than expected, the ingestion step should catch it before the problem spreads through the pipeline. We call these checks validation, which means confirming that the data matches the rules you expect. Good data ingestion also records provenance, which is a simple way of saying it keeps track of where the data came from, when it arrived, and what happened to it along the way.
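A small sketch can show how validation and provenance fit together at the ingestion step. Everything specific here is an assumption made for the example: the `EXPECTED_COLUMNS` schema, the `YYYY-MM-DD` date rule, and the `_source` and `_ingested_at` field names are all hypothetical.

```python
from datetime import datetime, timezone
from typing import Any, Dict, List, Tuple

# Hypothetical schema: column name -> expected Python type.
EXPECTED_COLUMNS = {"order_id": int, "amount": float, "order_date": str}


def validate_record(record: Dict[str, Any]) -> List[str]:
    """Return a list of rule violations; an empty list means the record passes."""
    errors = []
    for column, expected_type in EXPECTED_COLUMNS.items():
        if column not in record:
            errors.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            errors.append(f"wrong type for {column}")
    if isinstance(record.get("order_date"), str):
        try:
            datetime.strptime(record["order_date"], "%Y-%m-%d")
        except ValueError:
            errors.append("order_date is not YYYY-MM-DD")
    return errors


def ingest(records: List[Dict[str, Any]], source_name: str) -> Tuple[list, list]:
    """Split records into accepted and rejected, tagging accepted ones with provenance."""
    if not records:
        raise ValueError(f"{source_name} delivered no records")
    arrived_at = datetime.now(timezone.utc).isoformat()
    accepted, rejected = [], []
    for record in records:
        errors = validate_record(record)
        if errors:
            rejected.append({"record": record, "errors": errors})
        else:
            # Provenance: where the record came from and when it arrived.
            accepted.append({**record, "_source": source_name, "_ingested_at": arrived_at})
    return accepted, rejected
```

Keeping rejected records together with their error messages, rather than silently dropping them, is one way to make later troubleshooting easier.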

That recordkeeping may sound small, but it saves you later when something looks strange in a model prediction. If you know which source produced a record, which version of the schema it used, and which transformation touched it, you can trace a bug much faster. This is especially important in a machine learning pipeline because model behavior often changes when the incoming data changes, even a little. Strong ingestion practices give you a clearer picture of whether the model is drifting, the source data is changing, or the pipeline itself is failing.

So what should you look for when designing data ingestion for a real project? Start by asking where the data comes from, how often it changes, and how reliable each source is. Then decide whether you need batch or streaming, what checks should run before data enters the pipeline, and where you will store logs for troubleshooting. Once those pieces are in place, data ingestion stops being a hidden plumbing task and becomes the steady first step that keeps the rest of the workflow trustworthy, searchable, and ready for the next stage.

Clean and Preprocess Data

Building on this foundation, the next challenge is turning incoming data into something a machine learning model can actually learn from. Raw data often looks complete at first glance, but once you open it up, you find gaps, duplicates, odd formats, and values that do not belong. How do you turn a messy pile of records into reliable training material? That is where data cleaning and preprocessing step in, and they often determine whether a machine learning pipeline feels stable or fragile.

The first part of the work is data cleaning, which means removing or fixing problems in the dataset before modeling begins. You may notice missing values, which are empty fields where information should be, or duplicates, which are repeated rows that can quietly bias results. You may also find outliers, meaning unusually large or small values that can distort patterns, like a salary entry with an extra zero or an age recorded as 999. This stage is a little like sorting produce before cooking: you inspect what is bruised, mislabeled, or out of place so the final dish does not inherit those flaws.
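The three problems named above can be handled in a single pass, as in this rough sketch. It assumes each row is a small dictionary with an `age` field, and the `min_age` and `max_age` bounds are illustrative defaults, not recommended values. Dropping rows is only one option; filling missing values is another that this example does not cover.

```python
from typing import Dict, List, Optional, Tuple

Row = Dict[str, Optional[int]]


def clean_rows(rows: List[Row], min_age: int = 0, max_age: int = 120) -> Tuple[List[Row], Dict[str, int]]:
    """Drop exact duplicates, rows with a missing age, and implausible ages."""
    report = {"duplicates": 0, "missing": 0, "out_of_range": 0}
    seen = set()
    cleaned = []
    for row in rows:
        # A sorted tuple of items gives a hashable fingerprint of the whole row.
        fingerprint = tuple(sorted(row.items()))
        if fingerprint in seen:
            report["duplicates"] += 1
            continue
        seen.add(fingerprint)
        age = row.get("age")
        if age is None:
            report["missing"] += 1
            continue
        if not (min_age <= age <= max_age):
            report["out_of_range"] += 1
            continue
        cleaned.append(row)
    return cleaned, report
```

Returning a small report alongside the cleaned rows keeps a record of how many rows were removed and why.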

Once the obvious issues are under control, preprocessing prepares the data for the model’s way of thinking. Different algorithms prefer data in different forms, so preprocessing often includes standardizing numbers, encoding categories, and reshaping text or dates into useful signals. Feature scaling, for example, puts numeric values on a similar range so one feature does not dominate another simply because its numbers are larger. One-hot encoding is another common step, and it means turning a category like “red,” “blue,” or “green” into separate binary indicators, or 0-and-1 flags, that a model can read more easily.
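Both transformations are short enough to write by hand, which can help make them less mysterious. The sketch below uses min-max scaling as one possible form of feature scaling (other forms exist), and the function names are hypothetical.

```python
from typing import List


def min_max_scale(values: List[float]) -> List[float]:
    """Rescale values to the range 0..1 so large-numbered features do not dominate."""
    lowest, highest = min(values), max(values)
    if highest == lowest:
        # A constant column carries no spread, so map everything to 0.0.
        return [0.0 for _ in values]
    return [(v - lowest) / (highest - lowest) for v in values]


def one_hot(values: List[str], categories: List[str]) -> List[List[int]]:
    """Turn each label into a row of 0-and-1 flags, one flag per known category."""
    return [[1 if value == category else 0 for category in categories] for value in values]


print(min_max_scale([10, 20, 30]))                        # [0.0, 0.5, 1.0]
print(one_hot(["red", "green"], ["red", "blue", "green"]))  # [[1, 0, 0], [0, 0, 1]]
```

Passing the category list explicitly keeps the column order fixed, so the same label always lands in the same position.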

This is where many beginners start to see why data preprocessing is not a cosmetic step. A model does not understand that “NY,” “New York,” and “N.Y.” might refer to the same place unless we make that relationship explicit. It also does not know that temperature in Celsius and distance in kilometers are very different kinds of numbers unless we treat them carefully. If you have ever asked, “Why does my model perform well on one dataset but poorly on another?”, inconsistent preprocessing is often one of the first places to look. In practice, the cleaner and more consistent the input, the less confusion the model has to carry forward.
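One simple way to make the "NY" relationship explicit is a lookup table of known spellings. The `ALIASES` table and the `canonical_place` function below are hypothetical and cover only the three spellings from the example; a real project would need a much longer, maintained list.

```python
# Hypothetical alias table: lowercase spelling -> canonical label.
ALIASES = {
    "ny": "New York",
    "n.y.": "New York",
    "new york": "New York",
}


def canonical_place(raw: str) -> str:
    """Map known spellings to one label; leave unknown values trimmed but unchanged."""
    cleaned = raw.strip()
    return ALIASES.get(cleaned.lower(), cleaned)


print([canonical_place(p) for p in ["NY", " New York", "N.Y.", "Boston"]])
# ['New York', 'New York', 'New York', 'Boston']
```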

Now that we understand the main transformations, we also need to think about timing. In a well-designed machine learning pipeline, preprocessing should be built so it behaves the same way during training and during deployment. That means the rules you use to clean, scale, and encode the training data must also be applied to new data later, or the model may see a different world than the one it learned from. This is why teams often save preprocessing steps as part of the pipeline itself, rather than doing them manually in a notebook and hoping they are remembered later.
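The idea of learning the rules once and reusing them can be sketched with a tiny scaler that is fitted on training data, saved, and then restored at deployment time. The class name `SimpleStandardizer` and its JSON format are assumptions made for this example, not a reference to any particular library.

```python
import json
import statistics
from typing import List


class SimpleStandardizer:
    """Learns mean and standard deviation once, then applies them everywhere."""

    def fit(self, values: List[float]) -> "SimpleStandardizer":
        self.mean = statistics.fmean(values)
        # Guard against a constant column, where the deviation would be zero.
        self.std = statistics.pstdev(values) or 1.0
        return self

    def transform(self, values: List[float]) -> List[float]:
        return [(v - self.mean) / self.std for v in values]

    def to_json(self) -> str:
        """Serialize the learned rule so deployment can reuse it exactly."""
        return json.dumps({"mean": self.mean, "std": self.std})

    @classmethod
    def from_json(cls, payload: str) -> "SimpleStandardizer":
        state = json.loads(payload)
        scaler = cls()
        scaler.mean = state["mean"]
        scaler.std = state["std"]
        return scaler


# Training time: learn the rule from training data only, then save it.
scaler = SimpleStandardizer().fit([2.0, 4.0, 6.0])
saved = scaler.to_json()

# Deployment time: restore the rule instead of refitting on new data.
restored = SimpleStandardizer.from_json(saved)
```

The important detail is that `fit` never runs on new data: the deployed model sees values scaled with the training statistics, not with whatever the latest batch happens to contain.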

As we discussed earlier with data ingestion, traceability matters, and that same idea carries into preprocessing. You want to know which columns were changed, which values were filled, which records were dropped, and which transformations were applied. That record becomes incredibly useful when a prediction looks strange and you need to determine whether the issue started in the source data, the cleaning rules, or the preprocessing logic. Once this stage is handled with care, the pipeline stops feeling like a string of repairs and starts feeling like a dependable path from raw data to model-ready features.

Engineer Useful Features

Building on this foundation, we now face the part of the machine learning pipeline where ordinary data starts to become something the model can actually use well. This is feature engineering, which means shaping raw information into features, or measurable pieces of input, that help a model spot patterns more clearly. How do you turn a stream of cleaned records into signals that actually matter? That is the question here, and it is where a lot of model quality is won or lost.

Think of it like packing for a trip. You could throw every item into one suitcase and hope for the best, or you could organize clothes, chargers, and toiletries so the right thing is easy to find at the right time. Feature engineering does that for data: it organizes information so the model is not overwhelmed by noise or distracted by details that do not help. A raw timestamp, for example, might become hour of day, day of week, or whether the moment falls on a weekend, because those smaller pieces often tell a more useful story than the original field alone.
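The timestamp example translates almost directly into code. This sketch assumes timestamps arrive as ISO 8601 strings without a time zone; the function name and the feature names are hypothetical.

```python
from datetime import datetime
from typing import Dict


def timestamp_features(timestamp: str) -> Dict[str, int]:
    """Break one raw timestamp into smaller, model-friendly pieces."""
    moment = datetime.fromisoformat(timestamp)
    day_of_week = moment.weekday()  # Monday is 0, Sunday is 6
    return {
        "hour": moment.hour,
        "day_of_week": day_of_week,
        "is_weekend": int(day_of_week >= 5),
    }


print(timestamp_features("2024-03-09T14:30:00"))
# {'hour': 14, 'day_of_week': 5, 'is_weekend': 1}
```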

This is where we begin asking a practical question: which parts of the data actually carry meaning for the prediction? In a churn model, or a model that predicts whether a customer will leave, a simple count of support tickets may be more useful than the full support log. In a fraud model, the ratio between a purchase amount and a customer’s usual spending may matter more than the purchase amount by itself. These engineered features do not invent new reality; they make patterns easier for the machine learning pipeline to see.
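Both examples reduce to a line or two of arithmetic. In the sketch below, the function names, the idea of a support log as a list of strings, and the choice to return 0.0 when no spending history exists are all assumptions made for illustration.

```python
from typing import Dict, List


def churn_features(support_log: List[str]) -> Dict[str, int]:
    """Summarize the full support log as a single count."""
    return {"ticket_count": len(support_log)}


def fraud_features(amount: float, usual_spend: float) -> Dict[str, float]:
    """Compare one purchase with the customer's usual spending."""
    # Assumption: with no usable history, fall back to 0.0 instead of dividing by zero.
    ratio = amount / usual_spend if usual_spend > 0 else 0.0
    return {"amount": amount, "spend_ratio": ratio}


print(fraud_features(500.0, 50.0))  # {'amount': 500.0, 'spend_ratio': 10.0}
```

A purchase of 500 means little by itself, but a ratio of 10 says it is ten times what this customer normally spends.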

Taking this concept further, we also need to decide how to represent information that does not arrive in a model-friendly shape. Categories, for example, are labels like city names or product types, and many models cannot read those labels directly. We may turn them into one-hot features, which are 0-and-1 columns that show whether a category is present, or use frequency-based encodings that show how common a category is. Text can become word counts or simple indicators, and dates can become elapsed time since an event, because a model often learns better from structure than from raw strings.
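Frequency-based encoding is easy to sketch with a counter. The version below learns the frequencies from training values and applies them to any later values; the function names and the `default` of 0.0 for unseen categories are assumptions for this example.

```python
from collections import Counter
from typing import Dict, List


def fit_frequency_encoding(training_values: List[str]) -> Dict[str, float]:
    """Learn how common each category is in the training data."""
    counts = Counter(training_values)
    total = len(training_values)
    return {category: count / total for category, count in counts.items()}


def apply_frequency_encoding(values: List[str], mapping: Dict[str, float], default: float = 0.0) -> List[float]:
    """Replace each label with its learned frequency; unseen labels get the default."""
    return [mapping.get(value, default) for value in values]


mapping = fit_frequency_encoding(["paris", "lyon", "paris", "paris"])
print(apply_frequency_encoding(["paris", "lyon", "nice"], mapping))
# [0.75, 0.25, 0.0]
```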

Now that we understand the basic building blocks, the real craft is choosing features that are useful instead of merely interesting. A feature is useful when it adds signal, has a plausible relationship to the target you are predicting, and is available at the moment of prediction. That last part matters a lot, because data leakage, which means accidentally using information that would not exist in real life when the model makes a prediction, can make results look far better than they truly are. If you include tomorrow’s outcome in today’s training data, the model may seem brilliant during development and fail the moment it meets the real world.
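One common guard against leakage is to build features only from events that happened before the moment of prediction. The sketch below assumes each event is a dictionary with a `timestamp` and an `amount`; those field names and the function name `features_as_of` are hypothetical.

```python
from datetime import datetime
from typing import Dict, List


def features_as_of(events: List[dict], prediction_time: datetime) -> Dict[str, float]:
    """Use only events strictly before prediction_time, so the future cannot leak in."""
    visible = [event for event in events if event["timestamp"] < prediction_time]
    return {
        "event_count": len(visible),
        "total_amount": sum(event["amount"] for event in visible),
    }


events = [
    {"timestamp": datetime(2024, 1, 1), "amount": 10.0},
    {"timestamp": datetime(2024, 1, 5), "amount": 20.0},
    {"timestamp": datetime(2024, 1, 10), "amount": 99.0},  # happens after the prediction
]
print(features_as_of(events, datetime(2024, 1, 7)))
# {'event_count': 2, 'total_amount': 30.0}
```

The event on January 10 is ignored because a model predicting on January 7 could not have known about it.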

We also want features that behave consistently across training and deployment, because the model should not learn one version of the world and then face another. That is why teams often reuse the same feature engineering logic in both places, rather than rebuilding it by hand each time. A well-designed machine learning pipeline keeps those transformations close to the data flow, so features are created the same way whether the input comes from a historical dataset or a live system. When that consistency is missing, even a strong model can stumble because the shape of the input changed underneath it.

As we discussed earlier with preprocessing, recordkeeping still matters here, because every transformation should be explainable later. You want to know which features were created, which formulas were used, and which source fields they came from, so you can trace a surprising prediction back through the pipeline. That traceability helps you debug problems, compare model versions, and decide whether a feature is genuinely useful or only looks helpful in a notebook. Once you approach feature engineering this way, it stops feeling like guesswork and starts becoming a careful process of turning raw material into reliable signal.

Train and Tune Models

Building on this foundation, we finally reach the moment where the machine learning pipeline stops preparing ingredients and starts cooking. Training a model means showing it examples so it can learn patterns, while tuning means adjusting the model’s settings so it performs better on new data. How do you know when a model is learning the right lesson instead of memorizing the training set? That question sits at the heart of model training and hyperparameter tuning, and it is where careful work begins to pay off.

The first step is to split the data into roles, because not every record should teach the model in the same way. The training set is the portion the model learns from directly, while the validation set is a separate slice we use to check how well it is doing during development. Many teams also hold back a third slice, the test set, and touch it only once at the end, so the final score comes from data that influenced neither training nor tuning. You can think of it like practicing for a presentation: the training set is your rehearsal notes, and the validation set is the friendly audience that tells you whether the message is landing. This separation matters because a model that scores perfectly on the data it already saw may still struggle when it meets unfamiliar examples.
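A basic random split takes only a few lines. This sketch assumes the rows can be shuffled freely (which is not true for time-ordered data), and the `validation_fraction` and `seed` defaults are illustrative.

```python
import random
from typing import List, Sequence, Tuple


def train_validation_split(rows: Sequence, validation_fraction: float = 0.2, seed: int = 42) -> Tuple[List, List]:
    """Shuffle with a fixed seed, then carve off a validation slice."""
    shuffled = list(rows)
    # A seeded generator makes the split repeatable from run to run.
    random.Random(seed).shuffle(shuffled)
    n_validation = round(len(shuffled) * validation_fraction)
    return shuffled[n_validation:], shuffled[:n_validation]


train, validation = train_validation_split(range(10))
print(len(train), len(validation))  # 8 2
```

Fixing the seed means the same records land in the same slice every time, which makes later comparisons between runs fair.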

Once the data is split, the model learns by adjusting its internal parameters, which are the values it uses to make predictions. During training, the model compares its prediction to the correct answer, measures the mistake with a loss function, which is a formula that tells us how far off the prediction was, and then improves itself using an optimizer, which is the method that updates those parameters. That may sound abstract, but the idea is familiar: if you miss a target, you look at how far off you were and then aim a little differently next time. In a machine learning pipeline, this loop repeats many times until the model settles into a useful pattern.
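The loop described above can be shown on the smallest possible model, a straight line with two parameters. This is a sketch under stated assumptions: mean squared error stands in for the loss function, plain gradient descent stands in for the optimizer, and the `learning_rate` and `epochs` values are chosen only so this toy example converges.

```python
from typing import List, Tuple


def mean_squared_error(predictions: List[float], targets: List[float]) -> float:
    """Loss function: average squared distance between prediction and truth."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def train_linear(xs: List[float], ys: List[float], learning_rate: float = 0.05, epochs: int = 2000) -> Tuple[float, float]:
    """Fit y = w * x + b by repeating predict, measure, update."""
    w, b = 0.0, 0.0  # the model's internal parameters
    n = len(xs)
    for _ in range(epochs):
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = (2 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2 / n) * sum(errors)
        # Optimizer step: move each parameter a little against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Data generated from y = 2x + 1, so training should recover roughly w=2, b=1.
w, b = train_linear([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
```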

This is where tuning enters the story. Hyperparameters are settings you choose before training begins, such as learning rate, tree depth, or the number of layers in a neural network, and they are different from learned parameters because the model does not discover them on its own. Tuning means testing different hyperparameter values to see which combination gives the best validation performance. Imagine adjusting the knobs on a radio: the song is already there, but you need to find the exact position that makes it clear. In practice, teams often use grid search, which tries every combination from a fixed set of values, or random search, which samples combinations at random and can often find a good setting with fewer trials when only a few hyperparameters really matter.
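Grid search and random search differ only in how they pick the next combination to try. In the sketch below, `toy_validation_score` is a made-up stand-in for "train a model with these settings and score it on the validation set"; the grid values and function names are likewise hypothetical.

```python
import itertools
import random
from typing import Callable, Dict, List, Tuple

Params = Dict[str, float]


def grid_search(grid: Dict[str, List[float]], score_fn: Callable[[Params], float]) -> Tuple[Params, float]:
    """Try every combination in the grid and keep the best-scoring one."""
    names = list(grid)
    best_params, best_score = {}, float("-inf")
    for combo in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, combo))
        score = score_fn(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def random_search(grid: Dict[str, List[float]], score_fn: Callable[[Params], float], n_trials: int, seed: int = 0) -> Tuple[Params, float]:
    """Sample a limited number of combinations at random."""
    rng = random.Random(seed)
    best_params, best_score = {}, float("-inf")
    for _ in range(n_trials):
        params = {name: rng.choice(values) for name, values in grid.items()}
        score = score_fn(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_validation_score(params: Params) -> float:
    # Hypothetical score surface that peaks at learning_rate=0.1, max_depth=3.
    return -((params["learning_rate"] - 0.1) ** 2) - (params["max_depth"] - 3) ** 2


grid = {"learning_rate": [0.01, 0.1, 1.0], "max_depth": [2, 3, 5]}
best_params, best_score = grid_search(grid, toy_validation_score)
```

Grid search here evaluates all nine combinations, while `random_search` evaluates only `n_trials` of them and may or may not land on the best one.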

As we discussed earlier with preprocessing and feature engineering, consistency matters here too, because the model can only learn well if the training process stays controlled. Overfitting is one of the biggest risks, and it happens when a model learns the training data too closely, including its noise and quirks. Underfitting is the opposite problem, where the model is too simple to capture the real pattern. Regularization, which adds a penalty for model complexity to the training objective, and early stopping, which ends training when validation performance stops improving, are both common ways to keep the model balanced instead of brittle.
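Early stopping is mostly bookkeeping, which a short sketch can show. It assumes you already have one validation loss per epoch; the `patience` parameter, meaning how many non-improving epochs to tolerate, is a common convention but the default here is arbitrary.

```python
from typing import List, Tuple


def early_stopping_epoch(validation_losses: List[float], patience: int = 2) -> Tuple[int, float]:
    """Return the best epoch seen before validation loss stalls for `patience` epochs."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # stop training; keep the model from best_epoch
    return best_epoch, best_loss


print(early_stopping_epoch([0.9, 0.7, 0.6, 0.65, 0.66, 0.5]))  # (2, 0.6)
```

Notice that training stops before the final 0.5 is ever reached. That is the trade-off of a small `patience`: less wasted compute, at the risk of stopping during a temporary plateau.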

Now that we understand the main idea, the practical question becomes: how do you train and tune models without getting lost in endless experiments? The answer is to measure each run carefully and compare the same metrics every time, such as accuracy for classification or mean squared error for regression. If one model looks better on training data but worse on validation data, that is a sign of overfitting, and a reason to pause and inspect the setup rather than celebrate too soon. In a mature machine learning pipeline, model training is less about chasing a single lucky result and more about building a repeatable process that tells you what genuinely works.

Taking this concept further, you also want the training process itself to be reproducible. That means saving the exact data split, the chosen hyperparameters, the model version, and the performance results so you can explain why one configuration won. This record becomes especially valuable when you return later and ask whether a newer run truly improved the model or only looked better by accident. Once that discipline is in place, training and tuning stop feeling like trial and error and start becoming a guided search for the model that is ready to move forward.
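One lightweight way to keep that record is to write every run down as a small dictionary with a stable identifier. The field names and the 12-character `run_id` below are assumptions for this sketch; experiment-tracking tools offer richer versions of the same idea.

```python
import hashlib
import json
from typing import Any, Dict


def run_record(seed: int, hyperparameters: Dict[str, Any], data_version: str,
               model_version: str, metrics: Dict[str, float]) -> Dict[str, Any]:
    """Bundle everything needed to explain, compare, or repeat a training run."""
    config = {
        "seed": seed,  # controls the data split and any random initialization
        "hyperparameters": hyperparameters,
        "data_version": data_version,
        "model_version": model_version,
    }
    # Hash the configuration so identical setups always share the same id.
    payload = json.dumps(config, sort_keys=True).encode("utf-8")
    run_id = hashlib.sha256(payload).hexdigest()[:12]
    return {"run_id": run_id, **config, "metrics": metrics}


record = run_record(42, {"learning_rate": 0.1}, "sales-2024-01", "v3", {"val_mse": 0.8})
print(json.dumps(record, indent=2))
```

Because the identifier depends only on the configuration, two runs with the same `run_id` but different metrics point to something uncontrolled in the process.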

Evaluate Pipeline Performance

Building on this foundation, evaluating a machine learning pipeline means looking at the whole path, not only the final model score. You are asking a bigger question now: did the pipeline move data, train the model, and serve predictions in a way that is accurate, fast, and dependable? That matters because a model can look strong in a notebook while the surrounding workflow quietly slows down, breaks, or drifts out of date. In practice, pipeline evaluation helps you see whether the system works as a system, not just as a single algorithm.

The first thing we measure is output quality, because that is the most visible sign that the machine learning pipeline is doing its job. If this is a classification task, you may look at accuracy, precision, recall, or F1 score, which is the harmonic mean of precision and recall and so stays low unless both are reasonably high. If this is a regression task, where the pipeline predicts a number, you may check error measures such as mean absolute error or mean squared error, which show how far predictions are from the truth. These metrics tell you whether the pipeline is producing useful answers, but they do not yet tell you whether it is producing them in a healthy way.
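These metrics are simple enough to compute by hand, which can help when checking what a library reports. The sketch below assumes binary labels where 1 is the positive class, and it returns 0.0 when a ratio would otherwise divide by zero, which is one convention among several.

```python
from typing import List, Tuple


def precision_recall_f1(y_true: List[int], y_pred: List[int]) -> Tuple[float, float, float]:
    """Binary classification metrics, treating label 1 as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def mean_absolute_error(y_true: List[float], y_pred: List[float]) -> float:
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true: List[float], y_pred: List[float]) -> float:
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


print(mean_absolute_error([3.0, 5.0], [2.0, 7.0]))  # 1.5
print(mean_squared_error([3.0, 5.0], [2.0, 7.0]))   # 2.5
```

Mean squared error punishes the larger miss more heavily than mean absolute error does, which is why the two can rank models differently.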

That is where process performance comes in. How long does the pipeline take to run, how much compute does it use, and does it behave the same way when the data grows? A workflow that trains well on a small sample but slows to a crawl on a full dataset is not ready for real use. So when people ask, “How do you evaluate a machine learning pipeline in practice?”, the answer starts with both model quality and operational health, because one without the other leaves you guessing.

Now that we understand the output and the process, we can look at the checkpoints in between. A good evaluation compares each stage of the pipeline, from ingestion and preprocessing to feature creation and model training, so you can see where performance improves or falls apart. For example, you might notice that the model score is fine, but preprocessing takes most of the runtime, or that feature generation creates a delay before prediction can happen. That kind of detail is valuable because it tells you whether to improve the data flow, the transformation logic, or the model itself.
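Timing each stage separately is one way to find out where the runtime goes. The sketch below models a pipeline as an ordered list of named functions, which is an assumption for illustration; the stage names and the toy stages are hypothetical.

```python
import time
from typing import Any, Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[Any], Any]]


def run_with_timings(stages: List[Stage], data: Any) -> Tuple[Any, Dict[str, float]]:
    """Run each stage in order and record how many seconds it took."""
    timings: Dict[str, float] = {}
    for name, stage in stages:
        start = time.perf_counter()
        data = stage(data)
        timings[name] = time.perf_counter() - start
    return data, timings


# Toy stages standing in for preprocessing and feature creation.
stages = [
    ("preprocess", lambda rows: [r for r in rows if r is not None]),
    ("features", lambda rows: [r * 2 for r in rows]),
]
result, timings = run_with_timings(stages, [1, None, 3])
slowest = max(timings, key=timings.get)
```

With per-stage numbers in hand, "the pipeline is slow" becomes "this stage is slow", which is a much easier problem to act on.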

Taking this concept further, you also want to test the pipeline against new and changing data. A machine learning pipeline can appear stable during development and still fail later if the incoming data shifts in shape, scale, or meaning. That is why teams watch for data drift, which means the input data has changed from what the model saw during training, and concept drift, which means the relationship between inputs and outcomes has changed. When those shifts show up, a pipeline evaluation should reveal them early, before the model starts making confident but unreliable predictions.
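A very rough data drift signal is how far the average of a feature has moved, measured in units of the training standard deviation. This is only one simple heuristic among many, and the threshold of 3 in the usage line is an arbitrary assumption. It says nothing about concept drift, which requires comparing predictions with real outcomes.

```python
import statistics
from typing import List


def mean_shift_score(training_values: List[float], live_values: List[float]) -> float:
    """How many training standard deviations the live mean has moved."""
    train_mean = statistics.fmean(training_values)
    train_std = statistics.pstdev(training_values)
    shift = abs(statistics.fmean(live_values) - train_mean)
    if train_std == 0:
        # A constant training feature: any movement at all is a change.
        return 0.0 if shift == 0 else float("inf")
    return shift / train_std


score = mean_shift_score([4.0, 5.0, 6.0], [14.0, 15.0, 16.0])
drift_suspected = score > 3  # hypothetical threshold
```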

Another useful lens is reliability. A strong pipeline should handle missing values, delayed inputs, unexpected formats, and occasional service hiccups without collapsing. You can think of this like testing a bridge by checking not only whether cars cross it on a sunny day, but also whether it still holds up in wind, rain, and heavy traffic. In a machine learning pipeline, that means looking at failure rates, retries, logging quality, and whether the system gives clear signals when something goes wrong.

Cost also belongs in the conversation, even though beginners often leave it out at first. A pipeline that uses fewer resources, finishes faster, and needs less manual cleanup is usually easier to maintain and scale. This is especially important when you move from experimentation to production, because the same workflow may run many times a day or serve many users at once. If a small improvement in preprocessing cuts several minutes from each run, that can add up quickly across a busy environment.

As we discussed earlier with feature engineering and model training, reproducibility matters here too. You want to compare the same machine learning pipeline under the same conditions, using versioned data, versioned code, and the same evaluation metrics every time. That way, when performance changes, you can tell whether the cause was a new feature, a new dataset, or a genuine improvement in the workflow. With those measurements in place, you are ready to move from guessing about performance to understanding it with enough clarity to make the next improvement worthwhile.

Deploy and Monitor Models

Building on this foundation, we now reach the point where a model leaves the safety of training and starts doing real work. Model deployment is the step where you place a trained model into a production environment, which means the live system where people, apps, or services can actually use it. How do you take a model that looks promising in development and make it available without breaking the rest of the machine learning pipeline? That is the practical challenge here, and it is where careful planning matters more than raw model accuracy.

Before anything goes live, we need to think about how predictions will be delivered. In one common setup, the model sits behind an API, or application programming interface, which is a way for software to ask for a prediction and receive a response. In another setup, the model runs in batches, meaning it scores many records at scheduled times instead of responding instantly. The choice depends on the business need: a fraud check may require immediate answers, while a daily sales forecast can arrive later. In both cases, deployment is not just about placing code on a server; it is about fitting the model into a working machine learning pipeline that can serve predictions reliably.

This is where environment consistency becomes important. A model that worked in a notebook may fail in production if it expects a different library version, a different feature format, or a different way of handling missing data. To reduce that risk, teams often package the model, its preprocessing logic, and its dependencies together so they travel as one unit. Think of it like sending a meal kit instead of loose ingredients: if the recipe, tools, and instructions stay together, there are fewer surprises when it is time to cook. In modern machine learning pipeline design, this packaging step often includes containers, which are lightweight bundles that keep software behavior more predictable across machines.

Once the model is live, the story changes from building to watching. Monitoring is the process of tracking how the deployed model behaves over time so you can tell whether it is still healthy. You may watch prediction latency, which is the time it takes to return an answer, error rates, and the volume of requests the system receives. You may also compare new input data to the data the model saw during training, because a model can drift out of usefulness even when the code itself has not changed. What causes that slow decline? Often it is not a bug in the model at all, but a shift in the world around it.

That is why model monitoring needs both technical and statistical signals. Technical signals tell you whether the service is up, fast, and stable. Statistical signals tell you whether the data itself still looks familiar. For example, if a feature that used to fall between 0 and 10 suddenly starts arriving between 0 and 100, the model may still respond, but its predictions may become less trustworthy. This is one of the reasons monitoring matters so much in a machine learning pipeline: the model does not know that the world changed, but we can detect the change and react before users feel the impact.
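The range example above can be turned into a simple statistical check: what fraction of live values fall outside the range seen during training? The function name and the idea of storing `train_min` and `train_max` at training time are assumptions for this sketch.

```python
from typing import List


def fraction_out_of_range(live_values: List[float], train_min: float, train_max: float) -> float:
    """Share of live values that fall outside the range seen in training."""
    if not live_values:
        return 0.0
    outside = sum(1 for value in live_values if value < train_min or value > train_max)
    return outside / len(live_values)


# The feature used to fall between 0 and 10; half of the new values do not.
print(fraction_out_of_range([5, 50, 80, 9], train_min=0, train_max=10))  # 0.5
```

The model would still return predictions for all four inputs, which is exactly why a separate check like this is needed.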

Taking this concept further, good monitoring also includes business feedback. A prediction may look fine on a dashboard yet still fail to help the person using it. If a recommendation model predicts items no one clicks, or a risk model flags too many safe cases, the business outcome tells a deeper story than the raw score alone. That is why teams often connect model logs, user outcomes, and ground truth labels, which are the real answers used to judge whether predictions were correct. When you combine those pieces, monitoring becomes a way to answer a simple but important question: is the model still solving the problem it was built to solve?

As we discussed earlier with evaluation, traceability matters here too, because you want to know which model version made each prediction and which data version fed it. If performance drops, versioned records help you tell whether the cause was a model change, a data shift, or a problem in the serving layer. This is also where alerting becomes useful. An alert is a notification that tells the team when a metric crosses a threshold, like a spike in errors or a sudden drop in prediction confidence. In practice, alerts keep the machine learning pipeline from drifting silently into failure.
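At its core, an alert is a comparison between a metric and a threshold. In the sketch below, the rule format (`"max"` or `"min"` plus a limit), the metric names, and the limits themselves are all hypothetical; a production setup would usually send these messages to a paging or chat tool rather than return them.

```python
from typing import Dict, List, Tuple

Rule = Tuple[str, float]  # ("max" or "min", limit)


def check_alerts(metrics: Dict[str, float], rules: Dict[str, Rule]) -> List[str]:
    """Return one message for every metric that crosses its threshold."""
    alerts = []
    for name, (direction, limit) in rules.items():
        value = metrics.get(name)
        if value is None:
            # A missing metric is itself worth flagging.
            alerts.append(f"{name}: metric missing")
        elif direction == "max" and value > limit:
            alerts.append(f"{name}: {value} is above {limit}")
        elif direction == "min" and value < limit:
            alerts.append(f"{name}: {value} is below {limit}")
    return alerts


rules = {"error_rate": ("max", 0.05), "mean_confidence": ("min", 0.6)}
print(check_alerts({"error_rate": 0.12, "mean_confidence": 0.8}, rules))
# ['error_rate: 0.12 is above 0.05']
```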

A strong deployment plan also includes a rollback path, which means a safe way to return to a previous model version if the new one behaves badly. That may sound cautious, but it is one of the most reassuring habits you can build. Instead of hoping every release works perfectly, you prepare for the moment when reality behaves differently than the test environment. When that happens, rollback gives you time to investigate without leaving users stuck with a broken experience.
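A rollback path can be as simple as remembering which versions were deployed and in what order. The `ModelRegistry` class below is a hypothetical in-memory sketch; real registries also store the model files themselves and persist their history.

```python
from typing import List, Optional


class ModelRegistry:
    """Tracks deployed model versions so the previous one can be restored."""

    def __init__(self) -> None:
        self._history: List[str] = []

    def deploy(self, version: str) -> None:
        self._history.append(version)

    @property
    def active(self) -> Optional[str]:
        """The version currently serving predictions, if any."""
        return self._history[-1] if self._history else None

    def rollback(self) -> str:
        """Retire the active version and return to the one before it."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]


registry = ModelRegistry()
registry.deploy("v1")
registry.deploy("v2")
registry.rollback()
print(registry.active)  # v1
```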

When you put deployment and monitoring together, the machine learning pipeline stops being a one-time project and becomes a living system. You ship a model, observe how it performs, learn from what happens, and improve the next version with better evidence. That rhythm is what makes production machine learning feel less like a gamble and more like an ongoing conversation between data, code, and the real world.